My Take: Sam Altman’s Gentle Singularity essay is right that transformation is happening without a dramatic rupture moment. He is wrong that this makes the disruption less severe. Slow is not the same as easy. The people getting displaced by AI do not care whether the process was gentle or abrupt.

Sam Altman published his Gentle Singularity essay making the case that the singularity is already underway, arriving quietly and incrementally rather than as a dramatic rupture.

The essay is well-written and I understand the appeal of the argument. It is also doing something subtle and worth calling out: it reframes a problem as a feature.

This is the essay the CEO of OpenAI would write. My problem is not with the prediction. My problem is with what the framing leaves out.

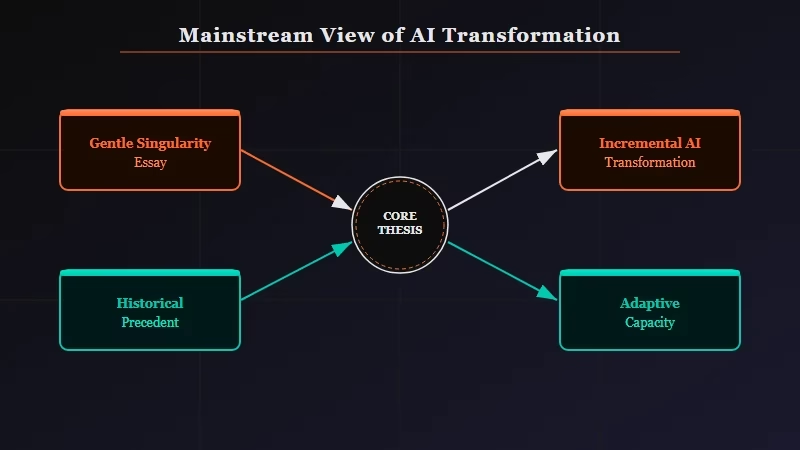

The Mainstream View and Why It Falls Short

The mainstream framing, best represented by Sam Altman’s Gentle Singularity essay, holds that AI transforming society gradually is preferable to a sudden rupture and that society will adapt because it always has.

The steel-man version of Altman’s position is genuinely compelling. Transformation that arrives piece by piece is harder to resist politically, easier to adapt to economically, and less likely to cause panic.

The “boiling frog” dynamic he is describing is real. Society has absorbed enormous technological change over the last 30 years without a collapse.

The argument draws on historical precedent: the industrial revolution was disruptive but ultimately raised living standards. Electrification eliminated entire job categories but created more than it destroyed. The internet killed newspapers and bookstores, but the economy absorbed the loss.

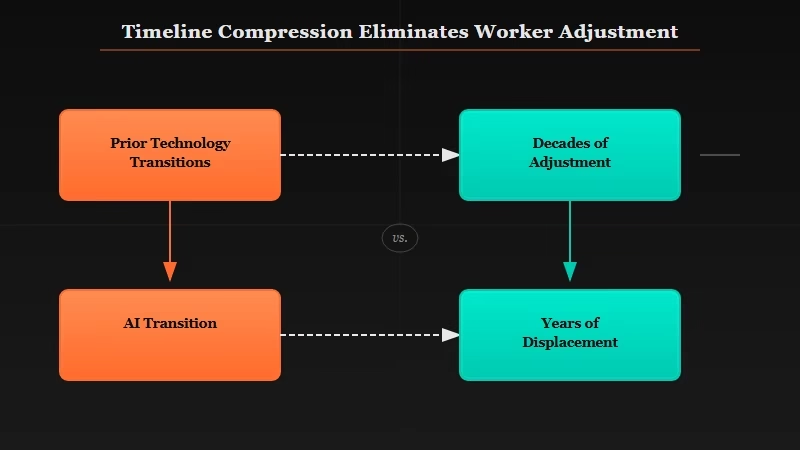

The problem with the historical analogy is the timeline. Previous technological transitions played out over decades, giving labor markets, educational systems, and social contracts time to adjust. The Gentle Singularity argument assumes that time buffer still exists. From what I can see, it does not.

What Is Actually Happening

The pace of AI capability improvement is outrunning the institutional adjustment mechanisms that made prior technological transitions survivable.

When the industrial revolution displaced agricultural labor, the displaced workers’ grandchildren became factory workers. The transition was brutal for individuals, but the institutional response had generations to develop.

When AI replaces knowledge work, the response needs to happen not in generations but in years.

The people writing code, analyzing legal documents, and producing first-draft content in 2024 are the same people who are now watching AI handle significant portions of those workflows in 2026.

The timeline from “AI helps with this” to “AI does this better than I can” is compressing faster than in any prior technology transition.

The MAD Bugs story from this week is an example that cuts against the gentle framing. Claude found 500+ zero-day vulnerabilities in production software using conversational prompts. That capability did not exist in meaningful form two years ago. The security research jobs it affects do not have a multi-decade adjustment window.

Altman’s essay acknowledges that disruption is real. What it does not adequately address is that “gentle” describes the trajectory from the outside, not the experience from inside a career being automated away.

The Part Nobody Wants to Admit

The Gentle Singularity framing is politically convenient for AI developers because it deflects the question of who is responsible for managing the transition.

If the singularity is gradual and society always adapts, then the companies driving the transition are not obligated to do anything beyond building good products.

The adaptation is someone else’s problem, distributed across millions of individuals, educational institutions, and governments.

The way I see it, this framing serves a specific interest. OpenAI benefits from the narrative that AI transition is historically normal and self-correcting. It reduces regulatory urgency. It shifts the language from “displacement” to “transformation.”

It makes the people pointing out job losses sound like they are fighting against progress rather than asking legitimate questions about who bears the adjustment cost.

I am not saying AI development should stop or even slow down. The technology is genuinely useful, the improvements are real, and I use it constantly.

What I am saying is that “gentle” is a word that describes how the change looks from 30,000 feet, not how it feels to the people inside it. Those are different things and they deserve different responses.

The historical transitions Altman references also happened before social media made the displaced workers visible to each other in real time.

The political friction from AI-driven displacement will arrive faster than in any prior technology transition, for the simple reason that the people being disrupted are more connected and more vocal than agricultural workers in the 1800s or factory workers in the 1900s.

Hot Take

The Gentle Singularity is not gentle for the people inside it. Altman is describing the experience of someone watching the transformation from a position of control. For the knowledge worker whose workflow is being automated, there is nothing gentle about discovering that the skill set they spent years developing is now a commodity priced at API cost.

Quick Takeaways

- Altman’s Gentle Singularity argument is correct that transformation is incremental rather than a dramatic rupture moment

- The argument fails by conflating “slow from the outside” with “manageable from the inside” for the people being displaced

- Prior technology transitions had multi-decade adjustment windows; the current AI transition does not

- The “society always adapts” framing is politically convenient for AI developers because it deflects responsibility for managing the transition

- The people being disrupted are more connected and vocal than any prior displaced workforce, meaning political friction will arrive faster than historical precedent suggests