My Take: Reddit is right that GPT-5 is worse than GPT-4o in the ways that matter to most users. The benchmarks say otherwise, and that gap between benchmark performance and real-world experience is the actual story here.

The thread was called “GPT-5 is horrible.” It hit 4,600 upvotes and 1,700 comments on r/ChatGPT within days of launch. OpenAI’s own GPT-5.1 Reddit AMA collapsed into what one publication called a “karma massacre,” with 1,300 downvotes and staff answering a fraction of the criticism.

Tech coverage framed this as the usual new-model backlash. Tom’s Guide ran the headline “users flock to Reddit in backlash,” positioning the complaints as vague frustration rather than a specific product failure. That framing is wrong.

The complaints are specific, measurable, and tell a coherent story about what OpenAI changed and why.

I’ve been tracking these threads since GPT-5 launched. Here is what the “just Reddit complaining” narrative misses.

Is Reddit’s GPT-5 Frustration Just Nostalgia?

Reddit’s GPT-5 frustration is not nostalgia because the complaints name specific, verifiable regressions rather than vague preferences for the old version.

The steel-man version of the dismissal goes like this: every major model release generates backlash. Reddit complained about GPT-4 when GPT-3.5 was removed. It complained about GPT-4o when GPT-4 was deprecated.

OpenAI’s benchmarks show clear improvement across reasoning, coding, and factual recall. Engineers at one of the world’s top AI labs are not making their flagship product worse on purpose.

That argument holds for vague “I liked the old one better” complaints. It doesn’t hold for “OpenAI halved my context window overnight with no announcement,” which hit 1,930 upvotes on its own. It doesn’t hold for “Google is going to cook them soon,” which reached 1,936 upvotes on the strength of active developers flagging specific capability gaps, not GPT-4o nostalgics.

The backlash has data behind it. That makes it a different situation from routine new-model grumbling.

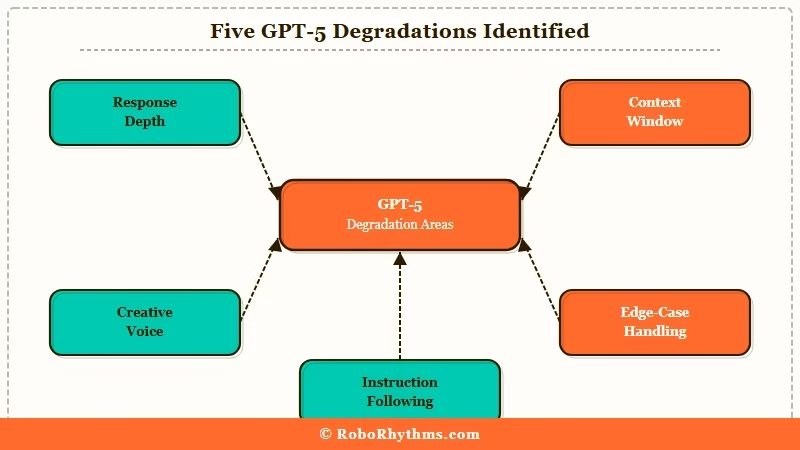

What Specifically Degraded in GPT-5?

What degraded in GPT-5 is the set of properties that power users valued most, which benchmarks don’t measure: response depth, personality, and willingness to engage with nuanced prompts.

Five regressions appear consistently across the complaints, and they match what I see in my own testing:

- Response length and depth. GPT-5.x defaults to shorter, more surface-level answers than GPT-4o on the same prompts. Users who relied on developed, multi-paragraph responses find themselves repeating “go deeper” constantly.

- Creative voice. One Reddit user described GPT-5 as “creatively and emotionally flat” and called it “a lobotomized drone.” That language is harsh, but it captures something real: GPT-4o had a recognizable voice across sessions. GPT-5.x reads like output from a committee.

- Context window access. For paying users, the effective context window was halved without announcement. This is not a perception issue. It is a measurable reduction in a paid feature.

- Edge case handling. The safety filter became more aggressive. Topics that GPT-4o engaged with on a nuanced level now trigger refusals or heavily hedged non-answers. For research and creative work, this is a functional downgrade.

- Personality consistency. GPT-4o felt consistent across conversations. GPT-5 varies in tone and style in ways that break the working relationship users had built.

Here is what this looks like in practice:

Before (GPT-4o): Ask for the first paragraph of a thriller novel. You get an atmospheric scene with named sensory details, a character with an interior voice, and tension built through selective observation.

After (GPT-5.x): Same prompt returns three declarative sentences, a generic detective, and a sentence that opens with “In this scene,” which is the AI equivalent of “Dear Diary.”

The regression is most visible in creative and analytical tasks. On structured tasks like code generation and factual lookup, GPT-5.x genuinely performs better. That split explains why benchmark scores and user experience point in opposite directions.

| What GPT-5 improved | What GPT-5 degraded |
|---|---|
| Coding benchmark scores | Response depth on open-ended prompts |
| Factual accuracy rate | Creative voice and personality consistency |
| Structured output reliability | Context window for paying users |
| Reasoning on defined problems | Edge case handling and nuance tolerance |
| Response latency | Engagement on grey-zone creative prompts |

Why Did GPT-5 Regress in These Ways?

GPT-5 regressed on consumer-valued properties because OpenAI built it to serve enterprise requirements, then shipped it as the consumer product without differentiation.

The way I see it, every specific regression on the list above is a feature for an enterprise customer. Shorter, predictable responses reduce hallucination risk in compliance-sensitive workflows. Aggressive safety filtering protects enterprise legal teams.

Consistent, flat tone is easier to review and approve than a personality-driven model. Reduced context windows cut serving costs.

These are not bugs. They are deliberate product choices that serve a paying enterprise customer base that cares about none of the things r/ChatGPT cares about. The documented decline in ChatGPT quality makes a lot more sense once you see that the consumer product and the enterprise product are now the same product.

There is also a sycophancy correction story here. Research on AI sycophancy suggested that GPT-4o was too eager to agree, validate, and mirror user preferences. GPT-5 overcorrected in the other direction: it pushes back more and hedges more.

Benchmark evaluators reward that. Users in the middle of a creative session do not.

The GPT-5.4 release notes described “improved instruction following and stronger performance on real-world tasks.” That is technically true for a narrow definition of real-world tasks. For the definition most ChatGPT users have in mind, it is not accurate.

The Part Nobody Wants to Admit

The part nobody wants to admit is that OpenAI cannot roll back GPT-5 because enterprise contracts were written against its safety profile.

Here is what I’d argue is the real bind: OpenAI knows from its own churn data that GPT-4o was better for creative and consumer use cases. The QuitGPT migration to alternatives tells them exactly what users think.

The reason the rollback hasn’t happened is that the enterprise customers who signed expanded contracts in early 2026 did so partly on the strength of GPT-5’s “improved safety” narrative.

Reverting to GPT-4o’s personality and edge-case tolerance would require telling those customers that the safety upgrade they paid for is being undone.

The consumer product became collateral damage in an enterprise sales strategy. That is not a technical story. It is a business model story, and it is the one explanation that makes all the specific complaints make sense at once.

Hot Take

GPT-4o Classic is coming as a paid tier, and the boycott numbers will be the cover story.

Expect it as a separate tier within 90 days. OpenAI will frame it as giving power users “more creative control” or “a direct line to the previous generation.” The actual driver will be the Claude Pro subscriber numbers, which will become public or leak right around the time OpenAI’s Q2 data shows the full scope of the migration.

Watch for the announcement to arrive on a Tuesday or Wednesday with a Sam Altman post about “listening to the community.” The community has been saying this for six months.