My Take: AI agents are not failing because enterprises are slow to adopt or lack vision. They are failing because vendors sold a math problem as a product. A 10-step agent workflow with 85% per-step accuracy succeeds only about 20% of the time. Nobody in the vendor sales cycle is explaining that.

Forty percent of agentic AI projects were canceled or paused as of February 2026. Industry analysts are calling it an “adoption gap.” Vendors are blaming change management. Enterprise leaders are quietly shelving pilots that generated more incident reports than productivity gains.

The explanation you hear most often is that organizations are not ready. They need to modernize their data. They need to train their teams. They need to build a culture of AI experimentation. Give it time and the agents will deliver.

That explanation is wrong, and I think it is making the problem worse.

The Mainstream View (And Why It Falls Short)

The mainstream narrative, pushed by Gartner, Salesforce, and virtually every enterprise AI vendor, is that AI agents are transformative technology held back by organizational friction rather than technical limits.

Salesforce’s Agentforce launched in late 2025 as a $1 billion marketing investment in the premise that agentic AI would automate enterprise workflows at scale.

McKinsey Global Institute projected in its 2025 AI adoption report that AI agents would unlock $2.6 trillion in annual value across enterprise knowledge work.

The argument in both cases is: the technology is ready; the problem is human adoption. Legacy processes, risk-averse procurement teams, and a workforce uncomfortable delegating to AI are the bottleneck. Give enterprises time to mature and the value will unlock.

From what I have seen in three different agent deployments over the past year, this framing is a vendor narrative, not a technical assessment. It shifts responsibility away from product quality and onto the customer. And it is causing enterprises to pour money into agent deployments on top of data foundations that cannot support them.

What Is Really Happening

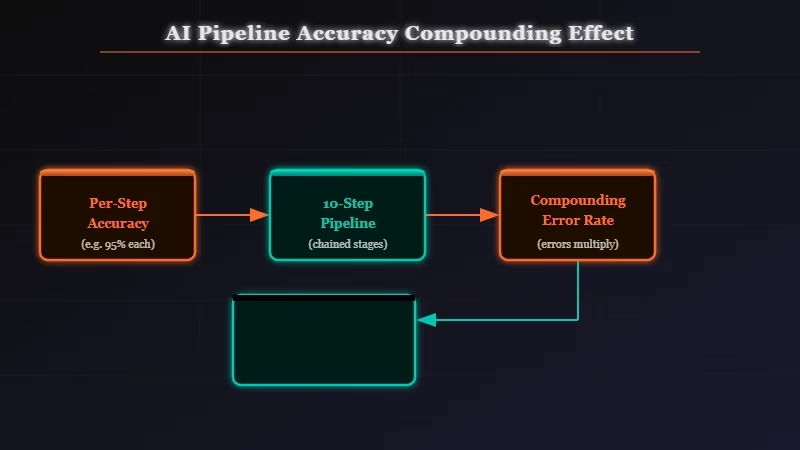

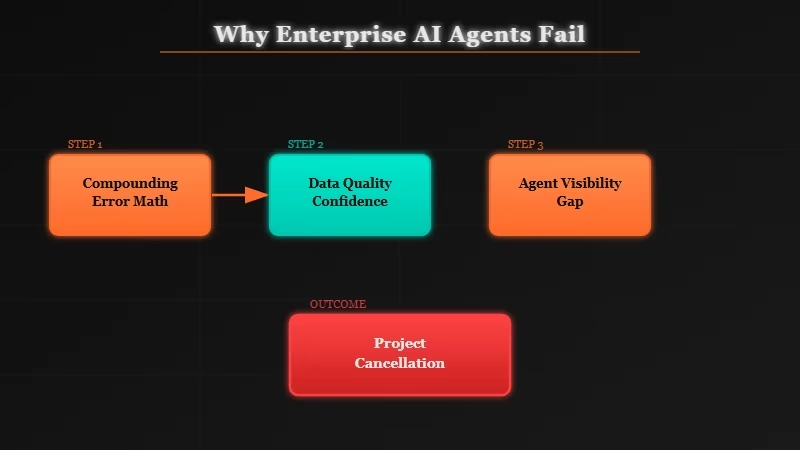

The failures are not primarily organizational. They are mathematical. Multi-step agent workflows compound errors in a way that makes them structurally unreliable at the level of data quality most enterprises actually have.

Here is the math that nobody puts in the vendor presentation. If an AI agent achieves 85% accuracy on each individual step, and every step has to succeed for the workflow to succeed, a 10-step workflow succeeds roughly 20% of the time (treating the steps as independent). That is not an adoption problem. That is a compounding probability problem.

0.85^10 ≈ 0.197

To get a 10-step workflow above an 80% success rate, you need per-step accuracy of roughly 98% (the exact break-even is about 97.8%, and 0.98^10 ≈ 0.817). That level of accuracy requires clean, well-structured, current data at every input point. It requires that the agent’s tool connections work reliably. It requires that edge cases in the workflow are understood and handled.
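If you want to check the arithmetic yourself, here is a minimal Python sketch. It assumes every step succeeds independently with the same accuracy, which is a simplification: real workflows have correlated failures and uneven steps. The function names are my own, for illustration only.

```python
def workflow_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent, equally accurate steps."""
    return per_step_accuracy ** steps


def required_step_accuracy(target_success: float, steps: int) -> float:
    """Per-step accuracy needed to reach a target end-to-end success rate."""
    return target_success ** (1.0 / steps)


if __name__ == "__main__":
    # The headline number: 85% per step across 10 steps.
    print(f"{workflow_success_rate(0.85, 10):.3f}")   # 0.197

    # Break-even per-step accuracy for an 80% end-to-end success rate.
    print(f"{required_step_accuracy(0.80, 10):.4f}")  # 0.9779

    # How quickly the same 85% decays as the workflow gets longer.
    for steps in (1, 3, 5, 10, 20):
        print(steps, f"{workflow_success_rate(0.85, steps):.3f}")
```

The break-even comes out just under 98%, and the loop shows how quickly the same 85% per-step accuracy decays as the workflow gets longer.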

A Fortune analysis of the Accenture and Wharton joint study on AI agents published in March 2026 put it directly: “Intelligence may be scalable, but accountability is not.” When an agent makes 10 decisions in sequence, a human still has to own the outcome. But most organizations have no visibility into what happened or which step broke the chain.

Only 24.4% of organizations have full visibility into which AI agents are communicating with each other. More than half of all agents currently deployed in enterprise environments run without any security oversight or logging. You cannot debug a failure you cannot see. And you cannot improve step accuracy when you do not know which step broke.
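To make "knowing which step broke" concrete, here is a rough Python sketch of step-level tracing. It is not taken from any agent framework: `run_workflow`, `StepRecord`, and the idea of each step being a plain function are assumptions of mine, chosen to keep the example small.

```python
import logging
from dataclasses import dataclass
from typing import Any, Callable, List, Optional, Tuple

log = logging.getLogger("agent_trace")


@dataclass
class StepRecord:
    index: int                   # 1-based position in the workflow
    name: str                    # human-readable step name
    ok: bool                     # True if the step completed without raising
    error: Optional[str] = None  # repr of the exception, if any


def run_workflow(
    steps: List[Tuple[str, Callable[[Any], Any]]],
    payload: Any,
) -> Tuple[Optional[Any], List[StepRecord]]:
    """Run steps in order, stopping at the first failure and recording a trace."""
    trace: List[StepRecord] = []
    for index, (name, step) in enumerate(steps, start=1):
        try:
            payload = step(payload)
        except Exception as exc:
            trace.append(StepRecord(index, name, False, repr(exc)))
            log.error("step %d (%s) failed: %r", index, name, exc)
            return None, trace
        trace.append(StepRecord(index, name, True))
        log.info("step %d (%s) ok", index, name)
    return payload, trace


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    # Hypothetical three-step workflow; the second step fails on bad input.
    demo_steps = [
        ("strip", lambda text: text.strip()),
        ("parse_amount", lambda text: float(text)),
        ("apply_tax", lambda amount: round(amount * 1.2, 2)),
    ]
    result, trace = run_workflow(demo_steps, " 12,50 ")
    print(result, trace[-1])
```

A trace like this only catches steps that fail loudly. A step that returns a confidently wrong answer still reports `ok`, so in practice each step would also need its own output checks.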

The data quality problem compounds this further. Twenty-eight percent of US firms report zero confidence in the data quality feeding their agents. An agent that reasons correctly but operates on bad data produces confidently wrong outputs. That is often worse than no automation at all, because the outputs look authoritative.

The Part Nobody Wants to Admit

The enterprise AI agent market is currently selling a pipeline before the plumbing exists. Most deployments are not failing because of organizational resistance. They are failing because agents require data infrastructure maturity that the majority of enterprises do not have and will not have for years.

The uncomfortable implication of the compounding math is that single-step AI tasks (writing, summarizing, classifying) can be genuinely reliable and valuable, because one step has nothing to compound with. Multi-step autonomous agent workflows on messy enterprise data are not.

The gap between those two use cases is where vendor promises live and where enterprise budgets are currently being spent.

I wrote recently about AI agents leaking sensitive data, another structural gap that gets treated as an implementation problem rather than a design problem.

The pattern is the same: the technology deploys before the safety and quality layer is in place, failures accumulate, and the vendor explanation is always some version of “you need to do more work first.”

That framing is backward. The technology should be reliable enough to deploy without requiring enterprises to rebuild their entire data infrastructure first. The answer to “this only works if your data is perfect” should not be “then fix your data.”

It should be “then we need to make the technology work on imperfect data, because that is the only data that exists in the real world.”

Former SAP CTO Vishal Sikka published research arguing that large language models are mathematically incapable of reliable complex agentic behavior at enterprise scale.

Whether you accept his proof or not, the 40% of agentic AI projects canceled or paused is empirical evidence that something structural is wrong, not something organizational.

Hot Take

The companies that will win at enterprise AI in the next two years are not the ones deploying the most agents. They are the ones building the observability and data quality layer that makes agents debuggable. That is the unsexy infrastructure play that the flashy agent demos are obscuring.