My Take: AI agent demos in 2026 are theatre. They show what the model can do under controlled conditions. Real customer environments break the assumptions every demo bakes in: clean data, stable APIs, narrow scope, no edge cases. The model is rarely the problem. The gap between demo and production is the part nobody is selling, and it is why so many agent pilots are dying in their first quarter.

Marc Benioff said on the Salesforce earnings call last quarter that AI agents will “replace half of the work in our customer-facing functions” within 18 months. Sam Altman has been saying versions of this for two years.

Sundar Pichai used “agents will reshape every workflow” three times in his last keynote. The mainstream view in 2026 is clear: AI agents are about to fundamentally restructure knowledge work, and the only question is how fast.

I think this view is wrong, or at least so misleading it might as well be wrong. The way I see it, every AI agent demo that ships in 2026 hides three things customers will discover in their first 30 days. The failure mode is not the model getting worse; it is the customer environment exposing what the demo was never tested against. The agent industry is built on a polished surface, and the floor is about to come out.

What follows is the contrarian read on why so many of these pilots are quietly being shut down at the 90-day mark, what is actually broken, and the part nobody in the agent-vendor ecosystem wants to admit.

The Mainstream View and Why It Falls Short

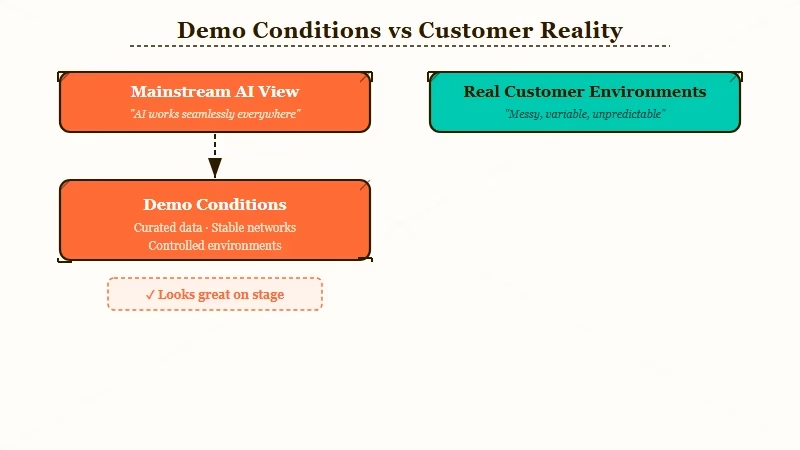

The mainstream view from CEOs like Benioff, Altman, and Pichai is that agent capability is the bottleneck and the next model release will close the remaining gap. This is wrong. The bottleneck is not capability; it is the gap between demo conditions and customer environments.

Read the Salesforce State of the Connected Customer report and you will see vendor-side optimism about agentic adoption that does not match what implementation teams are seeing on the ground.

The mainstream story goes like this: Demo shows the agent doing X. Model improves. Agent now does X reliably. Customer pays for X. The whole industry runs on this loop, and at the demo level it is true. Anthropic has reported its multi-agent research system outperforming a single-agent setup by 90.2 percent on an internal research evaluation. The capability is there.

What the loop misses is that “customer pays for X” is the part where the model has nothing to do with the outcome. The agent has to actually deliver X inside the customer’s stack, against their data, through their authentication, with their compliance constraints, while their employees mistype things and their CRM has 30 percent stale records and their support docs contradict themselves and their API rate limits cap at 50 requests per minute. The demo did none of that.

The benchmark improvements that vendors point to (SWE-Bench Pro at 58 percent, Humanity’s Last Exam at 54 percent) are real. They also do not predict whether the agent will work for a specific customer next Tuesday at 10 AM under load. From what I have seen across the agent pilots that have shipped to production, the model’s benchmark score is about the fifth most important variable.

What’s Actually Happening Inside Failed Pilots

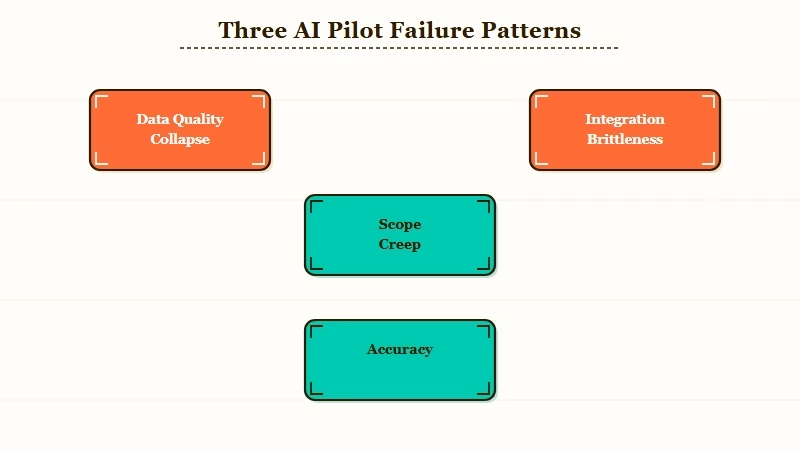

What’s actually happening is that demo conditions hide three things every customer environment exposes: data quality, integration brittleness, and scope creep that destroys the agent’s accuracy budget.

From the agent-builder threads on r/AI_Agents and the post-mortems being written by the implementation teams that survive these projects, the failure mode is consistent.

Three failure patterns from real production rollouts:

- Data quality collapse. The demo runs against a hand-curated dataset. The customer’s data has duplicates, contradictions, stale records, missing fields, and three different spellings of every customer name. The agent’s reasoning is fine. Its inputs are garbage. The agent looks broken to the customer because it produces garbage outputs from garbage inputs.

- Integration brittleness. The demo runs against stable mock APIs. The customer’s integrations break weekly. Salesforce changes a field schema. Slack throttles unexpectedly. The CRM’s webhook fires twice. The agent has no graceful failure mode for “the integration I need just stopped responding,” so it either retries forever or returns nothing useful.

- Scope creep that wrecks accuracy. The demo handles a narrow well-defined task. The customer expects the agent to handle 200 variations of that task plus 50 adjacent ones nobody mentioned. Each new variation drops the agent’s accuracy by a few percentage points. By month two, the agent is at 60 percent reliability on a workload the customer thinks it should handle at 95.
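The scope-creep arithmetic is easy to sketch. What follows is a rough illustration, not a measurement: the traffic shares and per-class accuracies are numbers I made up, and `TaskClass` and `blended_accuracy` are hypothetical names. The point is only that the reliability the customer experiences is a traffic-weighted blend, so an agent that still scores 95 percent on the demo task can land near 60 percent overall once the workload widens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskClass:
    name: str
    traffic_share: float  # fraction of all requests; shares must sum to 1.0
    accuracy: float       # fraction of this class the agent handles correctly


def blended_accuracy(mix):
    """Traffic-weighted accuracy across every task class in the workload."""
    total_share = sum(t.traffic_share for t in mix)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError(f"traffic shares sum to {total_share}, expected 1.0")
    return sum(t.traffic_share * t.accuracy for t in mix)


# Demo conditions: only the narrow, well-defined task exists.
demo_mix = [TaskClass("core task", 1.00, 0.95)]

# Hypothetical month-two workload: the core task is now a minority of traffic.
production_mix = [
    TaskClass("core task", 0.40, 0.95),
    TaskClass("variations of the core task", 0.40, 0.45),
    TaskClass("adjacent tasks nobody mentioned", 0.20, 0.20),
]

print(f"demo:       {blended_accuracy(demo_mix):.0%}")
print(f"production: {blended_accuracy(production_mix):.0%}")
```

Nothing about the model changed between the two mixes. Only the share of traffic it was never tuned for did.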

The way I see it, each of these is a structural problem that no model improvement will fix. A 99th-percentile model still produces garbage from garbage inputs. A frontier model still fails when the API it depends on returns 503. A multi-agent swarm still drops accuracy when scope balloons past what any of the agents were tuned for.
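To make the integration case concrete, here is a minimal sketch of what a graceful failure mode could look like: a bounded retry with exponential backoff that gives up and says why, instead of retrying forever or returning nothing useful. Every name in it (`IntegrationUnavailable`, `ToolResult`, `call_with_bounded_retry`, `make_flaky`) is hypothetical and not taken from any vendor's SDK, and the simulated 503 stands in for whatever a real integration would raise.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


class IntegrationUnavailable(Exception):
    """Hypothetical error for a transient failure such as an HTTP 503."""


@dataclass
class ToolResult:
    ok: bool
    value: Optional[str] = None
    reason: Optional[str] = None  # populated only when ok is False


def call_with_bounded_retry(
    call: Callable[[], str],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> ToolResult:
    """Try `call` at most `max_attempts` times, then report failure explicitly."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return ToolResult(ok=True, value=call())
        except IntegrationUnavailable as exc:
            last_error = exc
            if attempt < max_attempts:
                # Exponential backoff: base_delay, then 2x, then 4x, ...
                sleep(base_delay * 2 ** (attempt - 1))
    return ToolResult(
        ok=False,
        reason=f"gave up after {max_attempts} attempts: {last_error}",
    )


def make_flaky(failures: int) -> Callable[[], str]:
    """Simulated integration that fails `failures` times, then succeeds."""
    remaining = [failures]

    def call() -> str:
        if remaining[0] > 0:
            remaining[0] -= 1
            raise IntegrationUnavailable("503 Service Unavailable")
        return "record updated"

    return call


# Delays are skipped here so the example runs instantly.
recovered = call_with_bounded_retry(make_flaky(2), sleep=lambda _: None)
still_down = call_with_bounded_retry(make_flaky(10), sleep=lambda _: None)
print(recovered)
print(still_down)
```

The design choice that matters is the explicit `ok=False` result with a reason attached. It gives the agent something to hand to a human or a fallback path, which is exactly what the demo against stable mock APIs never had to exercise.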

The Part Nobody Wants to Admit

The part nobody wants to admit is that AI agents in 2026 are basically RPA bots with a better UI, and the demo industry is selling them as autonomous knowledge workers.

What this tells me is that the gap between what is being sold and what is shipping is the real story of this year, and it is going to start mattering when the first wave of pilot contracts comes up for renewal in Q3.

RPA (robotic process automation) has been around for 20 years. It works for narrow well-defined tasks against stable systems. It fails for everything else. AI agents are RPA with three improvements: natural language interfaces, better reasoning on edge cases, and the ability to handle some unstructured input. Those are real improvements. They are not the same as autonomy, judgment, or domain expertise, which are what the agent-vendor pitch deck implies you are buying.

The vendor incentive structure makes this worse. Demos are how agents are sold. Demos are designed to maximize what the agent looks capable of, not what it reliably does. So the entire industry is optimised for an artifact (the demo) that is structurally disconnected from what gets deployed. Implementation teams know this. Vendor sales teams know this. The buyer rarely does until month three.

For the broader picture on what is happening in this space: our take on “why AI agents keep failing in production” covers the technical detail of the failure modes, the “AI agents are winning, not enterprise” piece looks at where this is succeeding (it is not the enterprises spending the money), and the “AI agents normal users” opinion covers the consumer-side reality.

Hot Take

The agent industry in 2026 has the same shape as the chatbot industry in 2018. A hype wave, a flood of vendor demos, a wave of pilots, then a quiet 18-month period where most of those pilots get shut down without anyone calling it a failure.

The pilots that survive will be the ones that scoped down to a single narrow task against clean data, which is to say the pilots that look the least like the demos that sold them. The vendors that win this cycle will be the ones who price for “the boring 70 percent reliability that ships” rather than “the dazzling 99 percent accuracy that demos.”

Half of the named agent companies in 2026 will not exist in 2028. The model will keep improving. That will not save the industry from the demo-to-production gap, because the gap is not a model problem and never was.