What Happened: Drexel University researchers report that more than half of U.S. teens now regularly use AI companion chatbots. Their analysis of 300+ Reddit posts from users who identified as 13 to 17 shows many teens describing six classic behavioral addiction criteria, from withdrawal to relapse. The study is among the most detailed evidence yet that teen AI chatbot dependency is real.

A lot of people assumed teens were smarter than this. That they’d recognize an AI chatbot for what it is, have a laugh, and move on. That assumption turns out to have been very wrong.

A new Drexel University study published in April 2026 found that more than half of U.S. teens regularly use AI companion chatbots. A meaningful portion of those teens are worried about how attached they’ve become.

This isn’t a story driven by paranoid parents or technophobes. The concern is coming from the teens themselves.

What Did the Drexel Study Actually Find?

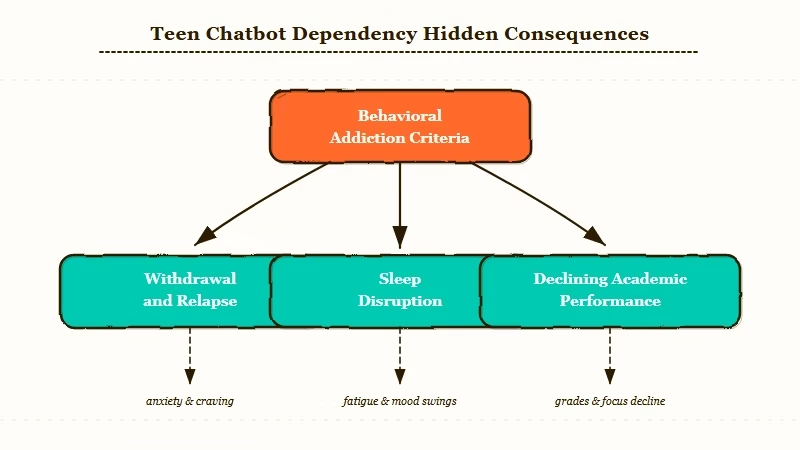

The Drexel study found that teens are self-reporting six behavioral addiction criteria in their AI chatbot use, including withdrawal, relapse, and emotional conflict.

Researchers analyzed more than 300 Reddit posts from users who identified as 13 to 17 years old and had posted specifically about their dependency on Character.AI.

About a quarter of those posts described using the chatbot for emotional support: coping with loneliness, seeking advice for mental health struggles, or processing distress they weren’t comfortable sharing with real people.

The study identified six addiction criteria playing out in teen experiences:

- Salience: deepening emotional attachment to chatbots in place of real relationships

- Tolerance: a pattern of escalating use over time to get the same emotional payoff

- Withdrawal: sadness, anxiety, or feeling incomplete when not interacting with the bot

- Relapse: attempting to stop using the app, only to return within days or weeks

- Mood modification: turning to the chatbot specifically during moments of stress or loneliness

- Conflict: wanting to stop while feeling compelled to continue

Real-world consequences teens reported included sleep disruption, declining academic performance, and strained relationships with people in their offline lives.

From what I’ve seen covering the AI companion space, these patterns track almost exactly with what Replika users described when that platform removed its relationship features in 2023. The dependency dynamic is not new.

What has changed is how many teenagers are now inside it.

| Addiction criterion | What it looks like in practice |
|---|---|
| Salience | Thinking about the chatbot during school, choosing it over friends |
| Tolerance | Needing longer sessions to feel the same comfort |
| Withdrawal | Irritability or sadness on days the app isn’t used |
| Relapse | Deleting the app, reinstalling it within a week |
| Mood modification | Opening the app as the default response to stress |
| Conflict | Feeling ashamed of the habit while continuing it |

Why Is Teen AI Chatbot Dependency a Bigger Problem Than It Looks?

Teen AI chatbot dependency is a bigger structural issue than the numbers alone suggest because these platforms are built to reinforce exactly the patterns the study identified.

The way I see it, this isn’t an accident of design. These platforms are engineered to be responsive, consistent, non-judgmental, and available at 3 a.m. when no human friend is.

For a teenager dealing with isolation or stress, those aren’t flaws. They’re the product.

The Drexel researchers proposed a design framework called CARE: Comprehensive Needs, Attachment-awareness, Respectful Empathy, and Ease of Exit.

The fact that “Ease of Exit” needs to be explicitly named in a framework tells you something about what current platforms are not built to do.

There’s a genuine tension here that design guidelines alone can’t resolve. These companies have real business incentives to grow engagement, not to enforce usage limits. The companion chatbot regulation wave already underway in 2026 is partly a response to exactly this structural problem.

What Does This Mean for Parents and Teens Right Now?

For parents and teens right now, the most important signal from this research is that over-reliance on AI chatbots is not rare or unusual. It’s happening to a meaningful share of the teen population, and teens often recognize the problem before adults do.

What I’d recommend taking from this study is a reframe. Framing this conversation as “AI bad” misses the point. These teens are turning to chatbots because the apps are meeting real emotional needs, often needs that aren’t being met elsewhere.

The question isn’t whether teens use AI companions, but whether that use is adding to their lives or crowding out more important things.

A few connected pieces worth reading alongside this study:

- Why Americans don’t trust AI offers useful context on the broader public sentiment

- The best AI companion apps vary significantly in how they handle emotional engagement and session limits

- For context on policy, the AI chatbot regulation wave covers what Washington, New York, and other states are now requiring

The researchers’ CARE framework is worth noting because it gives platforms an alternative to outright bans. Whether those platforms have the incentive to implement it is the harder question.

What Happens Next for AI Chatbot Regulation of Minors?

The most likely near-term outcome is that this study gets cited in legislative hearings, and more states move toward disclosure requirements or age-gated usage caps.

From what I’ve seen in how these cycles play out, the gap between a study like this and a bill introduction in a state legislature is often measured in weeks, not months.

The Drexel research gives regulators a very specific anchor: named addiction criteria, self-reported real-world consequences, and a proposed design framework they can reference without having to invent one.

That combination is exactly what legislative staff reach for when they need to justify new rules quickly.

The platform most implicated in the study is Character.AI, which already changed its policies in 2024 after a high-profile lawsuit. A broader body of research documenting teen dependency across multiple apps applies a different kind of pressure than a single lawsuit.

Expect more platforms to announce teen-specific safeguards in the coming months, likely timed to get ahead of whatever legislation is moving fastest.