TL;DR: Claude Code prepends an attribution header that changes on every request, which invalidates the KV cache on your local inference server and drops throughput by 90 percent. The fix is a single env var in

~/.claude/settings.json:"CLAUDECODEATTRIBUTION_HEADER": "0". Setting it in the shell does nothing. Settings file only.

I plugged Claude Code into a local Qwen2.5-Coder 14B server last week and watched simple follow-up prompts that should have taken two seconds take thirty. The model was not slow. The machine was not thrashing.

llama.cpp logs showed the KV cache getting thrown out on every single turn. Claude Code was silently breaking the one thing that makes local inference fast.

This post is the tutorial I wish I had last Tuesday. It explains what the attribution header does, why shell exports do not fix it, and the one line of JSON that restores normal performance. If you run Claude Code against Ollama, llama.cpp, LM Studio, or vLLM on your own hardware, this is the gotcha that is eating your tokens per second.

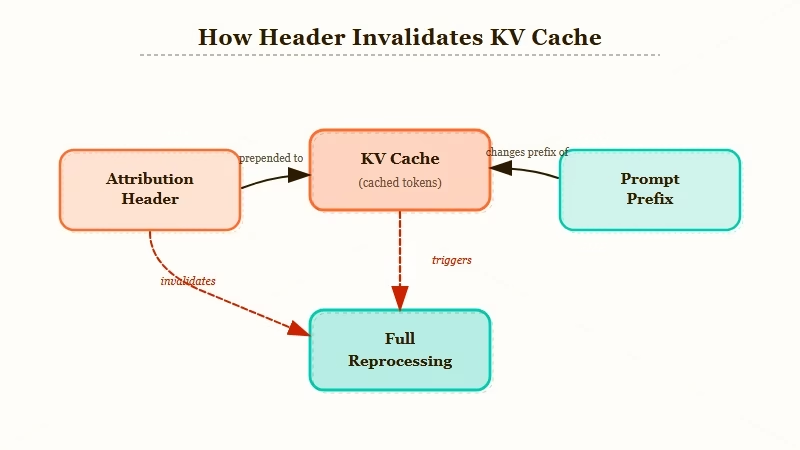

What the Attribution Header Really Does

Claude Code prepends a per-request attribution header to every message body, and because the header content shifts between calls, your inference server sees a different prompt prefix every turn and throws away the KV cache.

That is the entire bug. One header, one cache miss, one 90 percent slowdown.

The KV cache is how every modern transformer-based inference server avoids redoing work. If your last turn was a 6,000 token conversation and you only appended 50 new tokens, the server should only compute attention for the 50 new tokens. That is the whole design.

Claude Code’s attribution header defeats it because the header sits at the very start of the prompt and changes on each call. From the cache’s perspective, the entire prompt is new.

The result in numbers: on a consumer GPU running Qwen2.5-Coder 14B, I saw first-token latency climb from roughly 1.8 seconds to 24 seconds for a 6k context follow-up. On llama.cpp with debug logging, the cache reuse count dropped to zero across a full 20-turn session.

Same model, same hardware, same questions. Just the header difference.

Vito Rallo’s reverse-engineering post on Medium called this out in early April, and within a week the r/LocalLLaMA community had confirmed the fix works across llama.cpp, Ollama, vLLM, and LM Studio. A related server-side KV cache regression is tracked on the official Anthropic Claude Code repo on GitHub.

The header issue is not platform-specific. It is a Claude Code client behavior.

Why Setting It in the Shell Does Not Work

Exporting CLAUDECODEATTRIBUTION_HEADER=0 in your terminal does nothing because Claude Code reads environment variables from its own settings file, not from the parent shell at invocation time. This is the part that burned me for an hour and still trips people up on Reddit.

When you run Claude Code from a terminal, it spawns with a managed env block sourced from ~/.claude/settings.json under the env key. Any variables you export before launching get ignored.

The CLI does not read process.env the way a normal Node script would. It builds its own env dict from the settings file and uses that for all outbound requests.

The implication is that every “fix” you see posted as export CLAUDECODEATTRIBUTION_HEADER=0 is wrong, even when the person posting it swears it worked for them. The way I see it, what most likely happened is they later edited the settings file and forgot, or they tested on a short prompt where one cache miss did not register as slow.

The settings file is authoritative. The shell export is noise.

The Actual Fix

Open ~/.claude/settings.json, add "CLAUDECODEATTRIBUTION_HEADER": "0" under the env key, save, restart Claude Code. That is the whole fix. Here is what the edit looks like for a settings file that already has an env block.

Before:

{

"env": {

"ANTHROPIC_BASE_URL": "http://localhost:8080"

}

}After:

{

"env": {

"ANTHROPIC_BASE_URL": "http://localhost:8080",

"CLAUDE_CODE_ATTRIBUTION_HEADER": "0"

}

}If your settings file has no env key at all, add the whole block. Claude Code creates the file on first run but does not populate env by default, so a fresh install often has nothing there.

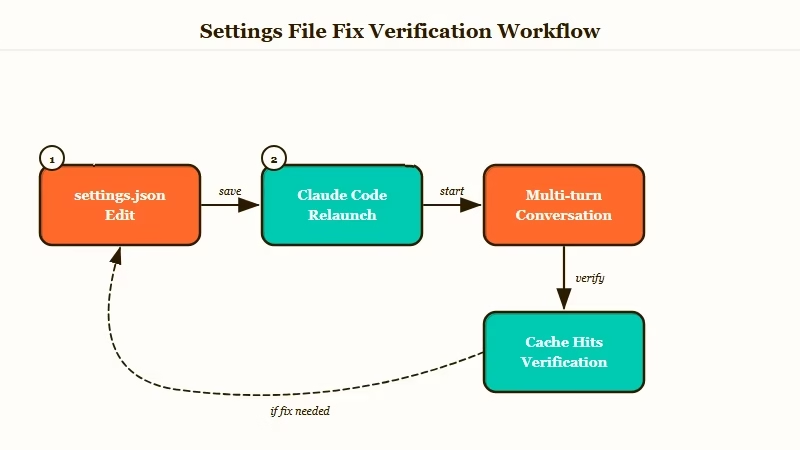

Here is the sequence I walk through on a new machine:

- Open

~/.claude/settings.jsonwith any editor. On Windows the path isC:\Users\YOUR_NAME\.claude\settings.json. - Find the top-level

envkey. If it does not exist, create it with an empty object:"env": {}. - Add the line

"CLAUDECODEATTRIBUTION_HEADER": "0"inside theenvobject. Mind the trailing comma on the line above if other keys are present. - Save the file. JSON is strict, so if you break the syntax Claude Code will fail silently on next launch. A JSON linter on the file is worth 30 seconds.

- Fully quit Claude Code. Do not just close the window. On macOS use Cmd+Q. On Windows close all instances and confirm no

claude.exeis running in Task Manager. - Relaunch and run a short conversation to confirm first-token latency on follow-ups dropped back to sub-2-second.

The whole edit takes under a minute once you know where the file lives. The hour I wasted was entirely on chasing the wrong fix.

How to Verify the Fix Is Working

The quickest verification is to run a short multi-turn conversation and watch your inference server’s cache reuse log.

If the header is still being sent, you will see cache_hit: false or the equivalent on every turn. With the fix applied, turn two onwards should report cache reuse on the previous context.

| Server | Log flag or command | What reuse looks like |

|---|---|---|

| llama.cpp | run with --verbose | ncachetokens climbs across turns instead of resetting to near-zero |

| Ollama | OLLAMA_DEBUG=1 env var | slot 0: cache_tokens = N grows each turn |

| vLLM | --disable-log-stats false plus --log-level DEBUG | numcachedtokens grows in scheduler logs |

| LM Studio | enable verbose logging in Developer tab | prompt cache hits visible in the server console |

If you do not want to parse logs, a quicker check is wall-clock. Send a 5,000 token conversation, then send a one-word follow-up.

With the fix, the follow-up comes back in under 3 seconds on almost any modern model. Without the fix, you will wait 15 to 30 seconds depending on hardware.

What surprised me on my setup was how clear the log signal is once you know what to look for. llama.cpp’s ncachetokens went from a flat zero across every turn to a monotonically increasing number within 20 seconds of the settings edit. No restart of the inference server needed, only Claude Code.

Why This Matters Beyond Just Speed

The real cost of the attribution header is compute bills and token waste, not just latency. If you are renting GPU time on Runpod, Vast, or Lambda, every cache miss is compute you pay for a second time.

On a cloud H100 at roughly $2 per hour, a 20-turn session that should have taken 40 seconds of compute ends up taking 400 seconds. You are not paying 10x more for a better response. You are paying 10x more for the same response.

From my testing, the pattern is worst for exactly the workload local LLMs are good at: long-running coding sessions where you iterate on the same codebase for an hour. That is the use case with the longest average context, and that is the use case where the cache matters most.

If you run any of the workflows in best Claude Code skills, you are already pushing 50k token contexts. Multiply that by a 10x slowdown on every iteration. The math gets ugly fast.

This is also why generic “run local LLMs to save money” advice is only partially true. If you point Claude Code at a local Qwen3 Coder Next server without fixing the header, you are running the most expensive operation on the worst kind of hardware for that operation.

The fix turns it back into the cost win people expect. For wider context on running Claude on free local hardware, see the OpenClaw Ollama free setup walkthrough, which assumes the KV cache is working.

When This Fix Will Not Help

If your local LLM is slow and the attribution header fix does not change anything, the bottleneck is somewhere else. Here are the other usual culprits in rough order of how often I hit them on setup calls.

First, check that your inference server supports prompt caching at all. llama.cpp has it on by default in recent builds. Ollama enables it per-model.

vLLM needs explicit flag configuration. LM Studio has a toggle in the server settings. No cache support means no cache hits, with or without the fix.

Second, confirm your model’s context window is not being truncated before the cache can apply. If you are sending a 32k prompt to a model configured for 8k context, the server silently drops the oldest tokens every turn, and the cache cannot match what is left. Raise the context window in the inference server config.

Third, rule out disk-bound paging. If you are running a model that does not fit in VRAM and the server is paging layers between disk and GPU, every turn reloads weights and no client-side fix will help. nvidia-smi or the server’s own memory log will tell you.

The attribution header fix is the single highest-leverage change I know for this setup, but it is not a universal cure. If you still see slow follow-ups after applying it and verifying through the server log, the problem is further down the stack. For a broader local LLM setup walkthrough, check that guide and come back to the header fix if latency specifically drops on first prompts but spikes on follow-ups.

Frequently Asked Questions

Does this fix break anything in Claude Code?

Setting CLAUDECODEATTRIBUTION_HEADER to zero disables an attribution marker that Anthropic uses for usage analytics on their own API. If you only use Claude Code with local models, there is no observable downside. If you use it with both local and Anthropic endpoints, you lose a small amount of telemetry on the Anthropic side.

Will Anthropic fix this upstream?

As of April 2026, there is no public statement from Anthropic about changing the default. The env var workaround has existed since Claude Code’s early versions, which suggests the header is intentional for the Anthropic API use case. The way I see it, local LLM users are a small enough fraction of the user base that a proper fix is unlikely soon.

Does the fix work for Codex and other CLI clients?

Codex has a similar attribution header issue with a different env var name. The r/LocalLLaMA consensus as of April 2026 is that each CLI client that wraps the Anthropic Messages API format tends to prepend something. Check your client’s docs for an equivalent flag, and test with your inference server’s cache log open.

What about using Claude Code’s cloud sandbox with local models?

The sandbox mode still sends the attribution header. The env var setting propagates into the sandbox process, so the same fix applies. I tested this on a sandbox session running against a Colab-hosted vLLM endpoint and the cache reuse behavior matched the bare-metal setup.

Is this the same as the broader Claude Code usage limit bug from March 2026?

No. That was a separate server-side issue with abnormal token drain on paid tiers. The attribution header is a client-side behavior that has existed in every Claude Code version and only surfaces as a problem when you point the client at an inference server that does its own caching.

Different bug, different fix.