TL;DR: SillyTavern plus OpenRouter is the fastest way to run a no-filter roleplay setup without a beefy local GPU. The connection takes about ten minutes, free models cover most use cases, and a one-time $10 credit unlocks a much higher daily ceiling. The model dropdown is where most people get stuck, so the model picks below matter as much as the setup steps.

A wave of roleplayers landed on SillyTavern this spring after Janitor AI’s mandatory ID verification, Character.AI’s age gate, and CrushOn cutting its free daily messages.

The migration is real, and the setup question I keep seeing is the same one: is there a way to do this without buying a $2,000 GPU?

The answer is yes. SillyTavern is the frontend. OpenRouter is the model marketplace. Together, they give you the SillyTavern interface running against free or near-free DeepSeek, Llama, and Qwen models, with no local hardware needed.

I will walk through the exact connection sequence, which free models are worth picking, the $10 threshold that changes everything, and the small set of errors that trip up most new users.

This guide assumes you already have SillyTavern installed and running. If you do not, the official SillyTavern installer covers the local install in under five minutes on Windows, Mac, or Linux.

We are starting from a fresh SillyTavern instance with no API connections set up yet.

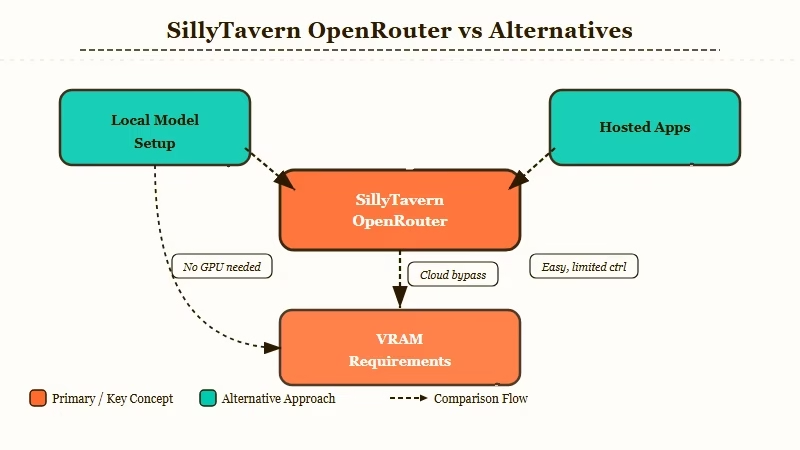

Why SillyTavern + OpenRouter Beats Both Local LLMs and Hosted Companion Apps

SillyTavern with OpenRouter sits in the gap between expensive local-model setups and rule-bound hosted companion apps, giving you the SillyTavern UI plus pay-per-token access to dozens of models with minimal filtering.

A local SillyTavern setup with KoboldCpp or Ollama is genuinely free once you have the hardware, but the hardware is the catch. Running a 70B-class model with a reasonable context window needs 24GB+ of VRAM, which puts you in 4090 or 5090 territory before you have generated a single token.
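Treat the 24GB+ figure as a floor, not a comfortable target. As a back-of-envelope rule, the weights take roughly parameters × bits-per-weight ÷ 8 bytes, and you need extra room for the KV cache and runtime buffers. The sketch below uses a flat 20% overhead. That number is an assumption for illustration, not a measurement, and real usage depends on context length and the inference engine.

```python
def estimate_vram_gb(params_billion: float, bits_per_weight: float,
                     overhead_fraction: float = 0.2) -> float:
    """Rough VRAM estimate: model weights plus an assumed flat overhead.

    overhead_fraction is an illustrative assumption standing in for the
    KV cache, activations and runtime buffers.
    """
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    weight_gb = weight_bytes / 1e9
    return weight_gb * (1 + overhead_fraction)


# Compare common precisions for a 70B-parameter model.
for bits in (16, 8, 4):
    print(f"70B @ {bits}-bit: ~{estimate_vram_gb(70, bits):.0f} GB")
```

Under these assumptions, a 70B model lands well above a single 24GB card even at 4-bit. That is why local 70B setups commonly split the model across two GPUs or offload part of it to system RAM.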

Smaller models that run on consumer cards lose memory and personality consistency, much as the older Character.AI free tier did.

Hosted companion apps like Character.AI, Chai, or Janitor AI sit on the other end. The setup is zero, but the filter is heavy, the memory window is small, and recent platform changes (mandatory verification, daily caps) are pushing serious users out.

OpenRouter routes around both. From my own setup, the differences that matter are:

| Path | Hardware needed | Filter level | Setup time | Cost |
|---|---|---|---|---|
| Local (KoboldCpp / Ollama) | 16-24GB+ VRAM | None | 1-3 hours first run | Free after hardware |
| OpenRouter via SillyTavern | None | Light to none on most models | 10 minutes | Free tier or pay-per-token |
| Hosted (Character.AI, Chai) | None | Heavy | Zero | Free with caps or subscription |

OpenRouter wins on time-to-first-message for anyone without a 24GB+ GPU. From what I have seen of new users coming over after Character.AI’s recent platform purge, this is the path most of them settle on.

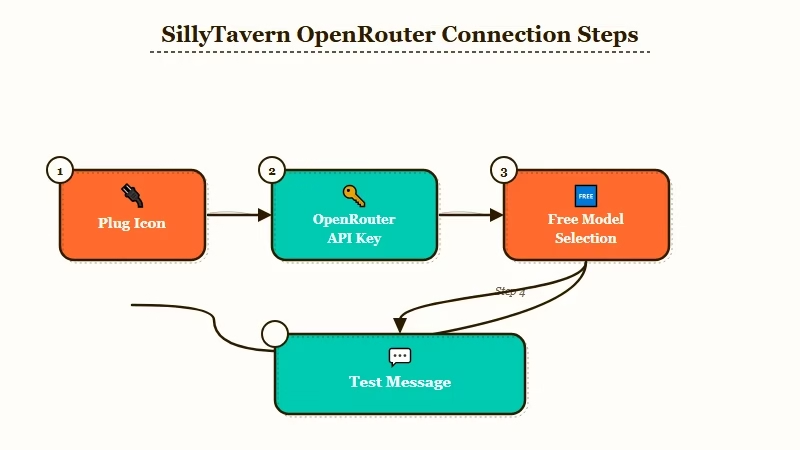

How to Connect SillyTavern to OpenRouter

Connect SillyTavern to OpenRouter by selecting Chat Completion in the API panel, choosing OpenRouter as the source, pasting an API key generated at openrouter.ai, and clicking Connect.

The connection itself takes five clicks once you know where they are. Here is the exact sequence I would walk a new user through, in order:

- Open SillyTavern in your browser at `http://localhost:8000` (the default).

- Click the plug icon in the top toolbar to open the API Connections panel.

- In the API dropdown, select Chat Completion (not Text Completion or KoboldAI; this is the step that trips up most new users).

- In the Source dropdown that appears below, select OpenRouter.

- In a new tab, go to openrouter.ai, sign up for a free account, and click your profile avatar then Keys to generate a new API key. Copy it.

- Back in SillyTavern, paste the key into the OpenRouter API Key field.

- Click Connect. The model dropdown below should populate with available models within a few seconds.

- Pick a model (see the next section for which to start with) and click Test Message to confirm the connection works.

If the model dropdown does not populate after Connect, the API key is wrong or expired. Regenerate one and paste again.

If the test message returns “Insufficient credits,” your account has not been topped up and the model you picked is not in the free tier. Drop down to a model with :free in its slug and try again.

Vague: “Set up OpenRouter in SillyTavern.”

Specific: Plug icon, API = Chat Completion, Source = OpenRouter, paste key from openrouter.ai/keys, click Connect, pick `deepseek/deepseek-chat-v3.1:free` from the model dropdown, click Test Message, expect a “Hello! How can I help you?” reply within five seconds.
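If Test Message fails and you cannot tell whether SillyTavern or the key is at fault, you can send a similar request yourself. OpenRouter exposes an OpenAI-compatible chat completions endpoint. The minimal standard-library sketch below builds one request using the model slug from the example above. It is not SillyTavern's internal code, and the helper names are hypothetical.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_test_request(api_key: str,
                       model: str = "deepseek/deepseek-chat-v3.1:free",
                       prompt: str = "Hi") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 50,  # keep the test reply short
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_test_request(req: urllib.request.Request) -> str:
    """Send the request and return the text of the first reply."""
    with urllib.request.urlopen(req, timeout=30) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Usage: `send_test_request(build_test_request("your-key-here"))`. If `urlopen` raises `urllib.error.HTTPError` with a 401 or 402, the problem is the key or the credits rather than SillyTavern.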

Which Free OpenRouter Models Are Worth Picking

The free OpenRouter tier covers DeepSeek V3, Llama 3.3 70B, Qwen 2.5 7B, Gemma 3 12B, and a rotating set of smaller models, with DeepSeek V3 the strongest pick for roleplay use.

OpenRouter’s free tier is a moving target. Models get added, removed, and rate-limited based on what the upstream provider releases.

As of May 2026, here is how I would rank the free models that matter for roleplay specifically, based on what I have seen in the SillyTavern community:

| Free model | Strength | Weakness | Best for |
|---|---|---|---|
| `deepseek/deepseek-chat-v3.1:free` | Strong roleplay prose, 128K context, light filter | Occasional Chinese-language drift in long sessions | Most users, default pick |
| `meta-llama/llama-3.3-70b-instruct:free` | Long context, stable persona | Slightly stiffer prose than DeepSeek | Long roleplay sessions |
| `google/gemma-3-12b-it:free` | Fast, lightweight | Smaller context, weaker character voice | Quick chats, fallback |
| `qwen/qwen-2.5-7b-instruct:free` | Very fast, multilingual | Loses memory faster | Short scenes, language practice |
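Because the free lineup shifts, it helps to check it directly rather than trusting any ranked list, including this one. OpenRouter's model listing endpoint returns each model's `id` (the slug) and `context_length`. The sketch below filters that listing down to `:free` slugs, largest context first. The function names are hypothetical.

```python
import json
import urllib.request

MODELS_URL = "https://openrouter.ai/api/v1/models"


def free_models(models_payload: dict) -> list[tuple[str, int]]:
    """Return (slug, context_length) for every ':free' model, largest context first."""
    free = [
        (m["id"], m.get("context_length") or 0)
        for m in models_payload.get("data", [])
        if m["id"].endswith(":free")
    ]
    # Sort by context length descending, then alphabetically by slug.
    return sorted(free, key=lambda item: (-item[1], item[0]))


def fetch_models() -> dict:
    """Fetch the current model listing from OpenRouter."""
    with urllib.request.urlopen(MODELS_URL, timeout=30) as resp:
        return json.load(resp)
```

Running `free_models(fetch_models())` every few weeks shows when a pick has disappeared. The `context_length` value is the number to match in SillyTavern's context size setting.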

The most important thing the SillyTavern docs do not say loudly enough: models with :free in the slug are gated by request rate limits, not just credit balance. Hit too many requests in a short window and you get a 429 error even on a fully topped-up account.

This is where the $10 credit threshold matters. OpenRouter unlocks a generous daily limit on free models once you have purchased at least $10 in credits one time, ever.

From my experience, that one-time top-up is what turns OpenRouter from “frustrating” to “genuinely usable” for daily roleplay. You are not paying for the free models; the credits just sit in your account as a commitment signal to OpenRouter.
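Here is the threshold in concrete numbers. At the time of writing, OpenRouter documented these free-model limits: 20 requests per minute, 50 requests per day for accounts that have bought less than $10 in credits, and 1,000 per day once at least $10 has been bought. Treat those figures as assumptions that may have changed. The sketch below encodes them and adds the standard exponential-backoff pattern for handling a 429 if you script against OpenRouter yourself. `free_daily_cap`, `RateLimited`, and `with_backoff` are hypothetical names, and the 2-second base delay is an arbitrary choice rather than an OpenRouter recommendation.

```python
import random
import time
from typing import Callable, TypeVar

# Free-model limits as OpenRouter documented them at the time of writing.
# Assumed values: verify on OpenRouter's rate-limit docs before relying on them.
FREE_REQUESTS_PER_MINUTE = 20
DAILY_CAP_UNDER_10_USD = 50
DAILY_CAP_AT_10_USD = 1000

T = TypeVar("T")


def free_daily_cap(credits_ever_purchased_usd: float) -> int:
    """Daily ':free' request cap, keyed on total credits ever purchased."""
    if credits_ever_purchased_usd >= 10:
        return DAILY_CAP_AT_10_USD
    return DAILY_CAP_UNDER_10_USD


class RateLimited(Exception):
    """Hypothetical stand-in for however your HTTP client reports a 429."""


def with_backoff(call: Callable[[], T], max_attempts: int = 5,
                 base_delay: float = 2.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `call` on RateLimited, doubling the wait each time plus jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 0
    while True:
        try:
            return call()
        except RateLimited:
            attempt += 1
            if attempt >= max_attempts:
                raise  # out of attempts: surface the 429 to the caller
            # Waits 2s, 4s, 8s, ... plus up to 1s of random jitter.
            sleep(base_delay * 2 ** (attempt - 1) + random.uniform(0, 1))
```

Every SillyTavern generation counts as one request, including each swipe or regenerate. Under these assumed numbers, an account below the threshold therefore gets 50 replies a day.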

If you want a fallback that needs no setup at all, Candy AI is where I would point friends who do not want to touch SillyTavern.

It will not give you SillyTavern’s customisation depth, but it ships with strong memory and a much smoother first hour.

The Settings That Move the Needle

SillyTavern has dozens of settings, but for OpenRouter free models the four that matter are temperature, max response length, context size, and the streaming toggle.

Most SillyTavern guides walk you through every parameter, but most parameters do not move the needle on a fresh setup. This is the order I would prioritise on a new connection:

- Temperature: 0.85 to 1.0 for creative roleplay. Below 0.7 the model gets flat and predictable; above 1.2 it drifts into nonsense.

- Max response length (tokens): 400 to 600. Free-tier models will sometimes generate 2,000-token monologues if you let them; capping it forces tighter pacing.

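- Context size: Match the model’s documented context window. DeepSeek V3 and Llama 3.3 70B are both 128K. Gemma 3 12B is also 128K natively, but a free endpoint may serve a shorter window, so check the context length listed on its OpenRouter model page. Setting context above the model’s window lets the prompt overflow what the provider accepts. Depending on the provider, the request then either fails or has older content cut out, which is what users mean when they say the bot “forgot the start of the conversation.”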

- Streaming: Turn it on. SillyTavern feels twice as fast with token streaming, even though total generation time is identical.
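The “forgot the start” effect comes from something every chat frontend has to do: when the history no longer fits, the oldest messages are the ones dropped. The simplified sketch below shows that budgeting. It assumes a crude 4-characters-per-token estimate and uses a hypothetical `fit_to_context` helper. SillyTavern's real prompt builder also budgets for character cards, world info, and other prompt sections. The sketch also shows why, here, the max response length eats into the room left for history.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    content: str


def approx_tokens(text: str) -> int:
    """Crude estimate of ~4 characters per token (an assumption; real
    tokenizers differ by model)."""
    return max(1, len(text) // 4)


def fit_to_context(system_prompt: str, history: list[Message],
                   context_size: int, max_response: int) -> list[Message]:
    """Keep the system prompt, then add the newest messages until the budget
    (context size minus room reserved for the reply) runs out."""
    budget = context_size - max_response - approx_tokens(system_prompt)
    kept: list[Message] = []
    for msg in reversed(history):  # walk from newest to oldest
        cost = approx_tokens(msg.content)
        if cost > budget:
            break  # everything older than this is dropped
        kept.append(msg)
        budget -= cost
    kept.reverse()  # restore chronological order
    return [Message("system", system_prompt)] + kept
```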

Skip the rest of the parameter panel until you have a working session. I have watched new users tweak min-p, top-k, repetition penalty, and presence penalty before they have generated a single message, and the only outcome is a session that does not work at all.
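Temperature is the one setting worth understanding rather than just copying. In standard temperature sampling, the model's raw scores (logits) for each candidate next token are divided by the temperature before being turned into probabilities. Low values concentrate probability on the top choice, which reads as predictable prose. High values spread it toward unlikely tokens, which reads as drift. The sketch below shows that textbook mechanism with made-up scores for four candidate words. Individual providers may combine it with top-p, min-p, and other samplers differently.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for four candidate next words.
logits = [4.0, 3.0, 2.0, 0.5]
for t in (0.5, 0.9, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 2) for p in probs])
```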

Common Errors and How to Fix Them

The four errors that account for nearly every “SillyTavern is broken” post are wrong API selection, expired keys, model rate limits, and context window overrun.

The fixes are short. Here is the lookup table I keep mentally for new SillyTavern OpenRouter users:

| Symptom | Likely cause | Fix |
|---|---|---|
| Empty model dropdown after Connect | Wrong or expired API key | Regenerate key at openrouter.ai/keys, paste, Connect again |
| “Insufficient credits” on Test Message | Picked a paid model on a free account | Switch to a model with :free in the slug |
| 429 errors mid-conversation | Free-tier rate limit | Wait 30 seconds, or make the one-time $10 credit top-up for the higher cap |
| Bot “forgets” earlier messages | Context size higher than model supports | Drop SillyTavern context size to match the model’s window |
| Test Message returns nothing | Source dropdown set to OpenAI instead of OpenRouter | Re-select OpenRouter in Source dropdown, click Connect |
| Filter refusal on free models | Some OpenRouter models do apply light filters | Switch to a different free model; DeepSeek V3 is the most permissive |

The way I see it, three of those six are first-five-minutes errors and the rest are edge cases. If you hit one of the first three, do not start tearing through Reddit threads; fix the dropdown and the key first.
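When SillyTavern's error toast is not specific enough, the HTTP status code is often visible in the console window running SillyTavern. The sketch below maps the error codes OpenRouter documented at the time of writing to fixes in the spirit of the table above. The wording of each fix and the `diagnose` helper are hypothetical summaries, not OpenRouter's own text.

```python
# OpenRouter HTTP error codes (as documented at the time of writing),
# each mapped to a suggested SillyTavern-side fix.
FIXES = {
    400: "Bad request; one possible cause is a prompt longer than the model accepts: lower the context size.",
    401: "Invalid or disabled API key: regenerate it at openrouter.ai/keys.",
    402: "Insufficient credits: pick a ':free' model or top up.",
    403: "Input flagged by a moderated model: switch to a different model.",
    429: "Rate limited: wait and retry, or make the one-time $10 top-up.",
    502: "Model provider is down: try again later or pick another model.",
    503: "No provider available for this model: pick another model.",
}


def diagnose(status: int) -> str:
    """Return a suggested fix for an OpenRouter HTTP status code."""
    return FIXES.get(status, f"Unrecognised status {status}: check OpenRouter's error docs.")
```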

For longer-term reliability, note that Pew Research found consumer adoption of AI tools continues to climb fast, which is part of why platforms keep tightening their rules. SillyTavern + OpenRouter is the path least likely to get rule-changed out from under you: if one model changes, you switch to another without leaving your setup.

Frequently Asked Questions

Is OpenRouter really free for SillyTavern?

OpenRouter has a genuine free tier for specific models tagged with :free in their slug. The catch is that rate limits on the free tier are strict until you top up $10 in credits once; after that, the daily quota becomes much higher.

Does SillyTavern work without a powerful GPU?

Yes, when you connect it to a remote API like OpenRouter, all model inference runs on remote servers. Your machine only needs enough resources to run a browser and the lightweight SillyTavern frontend.

Which OpenRouter free model is best for roleplay?

DeepSeek V3 (deepseek/deepseek-chat-v3.1:free) is the strongest free pick as of May 2026. It has 128K context, light filtering, and stable prose quality for long sessions. Llama 3.3 70B is the closest second.

Can I use OpenRouter free models indefinitely?

Yes, with the caveat that any specific free model can be deprecated, rate-limited, or removed at any time. Plan for the model lineup to shift every two to three months and keep two backup picks ready in your favourites list.

Does SillyTavern keep my conversations private?

Conversations stay on your local machine inside SillyTavern. The prompts you send to OpenRouter pass through OpenRouter and the underlying model provider. Local-only setups via Ollama or KoboldCpp are the only fully private path.

What if OpenRouter blocks roleplay content?

Most OpenRouter free models apply light filters or none at all, but a few mainstream models (some Claude and OpenAI variants) refuse explicit roleplay. Switch to DeepSeek V3 or a Llama variant if you hit refusals on a different model.