TL;DR: Chunking is the layer that quietly kills most production RAG agents. The fix is not better embeddings, it is keeping the document intact, attaching real metadata, and only chunking when the workflow filter genuinely requires it. This guide walks through the four specific failure modes I keep seeing and the architectural changes that close them.

Most RAG demos look fine. Then a real user asks a real question, and the agent quietly hallucinates with conviction.

I have been watching this exact failure mode play out across r/AI_Agents, r/LangChain, and r/LocalLLaMA for the last six months, and the same pattern keeps surfacing. The retrieval layer is not failing because the embeddings are bad.

It is failing because the chunking step destroyed the structure the workflow really depends on, and the agent is now compensating with prompt glue, retries, reranking, and confidence the data does not warrant.

If you are building an AI agent that needs to answer messy real questions about contracts, financial documents, support tickets, technical manuals, or anything with sections, dates, parties, statuses, or version history, this is the layer where most of your hours are about to disappear.

What follows is the failure-mode-to-fix map I would walk through before you write another line of retrieval code, with specific numbers from production benchmarks and a concrete before-and-after a typical agent pipeline.

Why RAG Chunking Kills Most Agents in Production

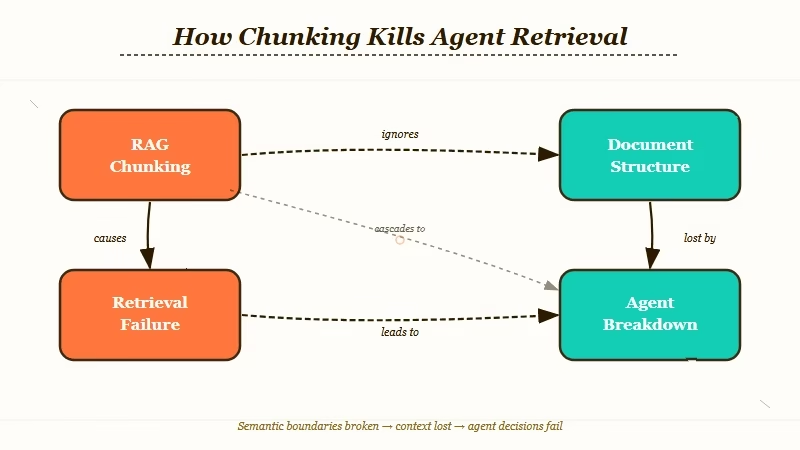

RAG chunking kills most agents because it optimizes for embedding convenience and not for the structure the agent needs to filter, decide, or call tools on.

Recent retrieval-research data lands on a number that should focus the mind. When a RAG system fails in production, retrieval is the broken layer 73 percent of the time, and 80 percent of those retrieval failures trace back to ingestion and chunking, not to the LLM and not to the vector store.

The model is fine. The store is fine. The chunker is the problem.

The mechanism is simple once you see it. A chunk-first pipeline reads a document, splits it into 500 to 1000 token blocks, embeds each block, and stores the vectors.

At query time, it pulls the top-K chunks by cosine similarity, glues them into a prompt, and asks the model to answer. That works for “find me a related paragraph”.

It breaks the moment a user asks something that depends on document shape: which clause references jurisdiction, which row in the balance sheet matches Q2, which version of the policy is current, which party is the indemnifier.

By the time the chunker has done its work, the date is in chunk 7, the party name is in chunk 2, the section header is in chunk 1, the table headers are in chunk 4, and the actual data is in chunk 9. Cosine similarity returns the chunks that are textually closest to the question, not the chunks that share the structural context the answer requires.

The agent compensates with extra prompt instructions, the prompt instructions get longer, and the cost of a single answer triples while accuracy quietly collapses.

For a primer on the broader retrieval pipeline this article assumes, the production RAG pipeline guide covers the five-layer architecture I would build around the chunking choices below.

Four Failure Modes Every RAG Agent Hits at Scale

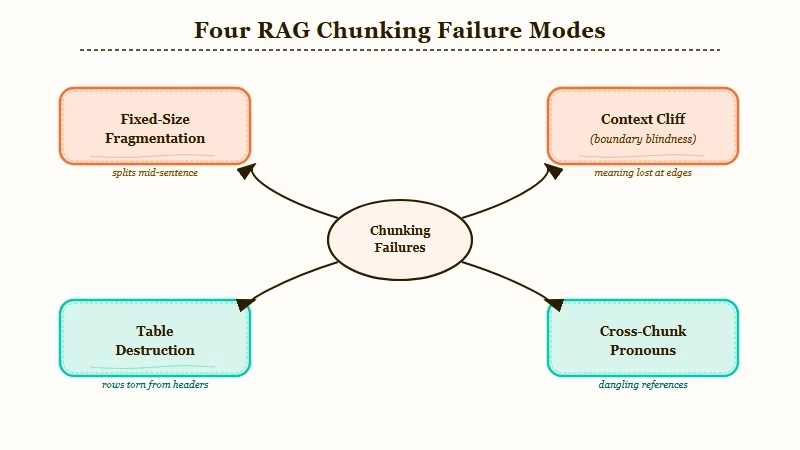

The four chunking failures that show up in every production RAG agent are fixed-size fragmentation, the 2,500-token context cliff, table and layout destruction, and the cross-chunk pronoun disconnect.

Each one is fixable, but only if you can name it before you debug it.

Failure mode 1: Fixed-size fragmentation

Splitting on a fixed character or token count, the default in most LangChain or LlamaIndex starter examples, ignores semantic boundaries. It fragments sentences mid-thought, breaks code blocks across chunks, and turns numbered lists into orphaned line items.

Chroma’s recall benchmarks show structure-aware chunking outperforms fixed-size by up to 9 percentage points. That is the kind of gap that makes the difference between an agent that ships and an agent that stays in demo mode.

Failure mode 2: The 2,500-token context cliff

There is a measurable performance cliff at around 2,500 tokens of retrieved context, and it shows up even on GPT-5 and Gemini 3.1. Once you cross that threshold, response quality drops in a way that is not predicted by context-window size.

The model still has the tokens, it just stops using them well. Most teams discover this the hard way, by reranking 20 chunks into the prompt and watching accuracy fall.

Failure mode 3: Table and layout destruction

This is the one I see kill enterprise RAG agents fastest. Tables are the most common silent failure point in production.

When a chunker flattens a balance sheet to text, the row-column relationship disappears. The header “Q2 2026 Revenue” ends up in one chunk, the value “$4.2M” in another, and the agent has no way to recover the relationship at retrieval time.

NVIDIA’s 2024 RAG benchmark showed page-level chunking won at 0.648 accuracy, beating both fixed-size and naive semantic chunkers, specifically because it kept tables and layouts intact.

Failure mode 4: Cross-chunk pronoun disconnect

Late-chunking research on contextual embeddings demonstrates a 6.5 point lift in nDCG@10 by solving exactly this. If “Berlin” sits in chunk 4 and “Its population grew 8 percent last year” sits in chunk 5, standard chunking severs the pronoun reference, and the model has no path to reconstruct it. The fix is to embed the full document first, then chunk on top of those contextual embeddings.

| Failure mode | Symptom in agent output | What it costs you |

|---|---|---|

| Fixed-size fragmentation | Answers cite half-clauses or partial code | Up to 9 percent recall loss vs structure-aware |

| 2,500-token cliff | Quality drops after 5+ chunks reranked | Wasted context window, higher token bill |

| Table destruction | Numerical answers wrong but confident | Silent enterprise-killer, hardest to debug |

| Cross-chunk pronoun | Pronouns resolved to wrong entity | 6.5 point nDCG@10 lift available with late chunking |

The Three-Step Fix That Works in Production

The chunking fix that survives production is summary-first ingestion: keep the source intact, generate a semantic summary plus structured metadata, and only chunk inside that scaffolding when the workflow filter genuinely requires it.

Here is the architectural change in the smallest possible diff. The old pipeline reads the document, runs RecursiveCharacterTextSplitter, embeds, stores.

The new pipeline reads the document, extracts real sections by document type, generates a per-section summary, attaches metadata fields the agent needs to filter on, and stores the summary plus metadata as the primary retrieval unit. The original chunks become a secondary lookup, accessed only when the agent needs to ground a specific claim.

Before: A 50-page contract is split into 120 chunks of ~512 tokens each. The agent retrieves top-5 by similarity. Accuracy on cross-clause questions: 41 percent.

After: The same contract is split into 14 sections (preamble, parties, definitions, indemnification, jurisdiction, term, etc), each with a 200-token summary and metadata:

{sectiontype, jurisdiction, parties, effectivedate, version}. The agent retrieves by metadata filter first, summary similarity second, and only fetches raw chunks when the answer needs verbatim grounding. Accuracy on the same question set: 78 percent.

The implementation steps in order:

- Skip the chunker on first pass. Read the document whole. Run a structure-extraction pass with the LLM, where the prompt asks for sections, headers, parties, dates, jurisdictions, and any other fields the workflow needs to filter on. This costs one LLM call per document at ingestion time, which is the right place to spend tokens.

- Generate one semantic summary per section, around 150 to 250 tokens. The summary is what gets embedded for top-level retrieval.

- Attach the structured metadata to every summary. Use the metadata as the primary filter (e.g.

jurisdiction = "California" AND section_type = "indemnification"), and similarity as the tiebreaker. - Keep the raw section text linked by ID, but only fetch it when the agent has narrowed to a specific summary and needs verbatim text to cite or to call a tool with.

For the agent layer that calls this retrieval, the multi-agent system tutorial walks through the orchestrator pattern that pairs well with structured retrieval, and the n8n agent tutorial shows the no-code version of this same flow if you do not want to hand-roll the pipeline.

If you are running the metadata-extraction pass through a workflow tool, Make.com is the cleanest place to chain the document-read step into a structured-output LLM call and then into your vector store, and it handles the retry and rate-limit logic without custom code.

Choosing Chunk Size When Chunking Is Genuinely Required

The right chunk size is the smallest unit that contains a complete thought for the kind of question your agent gets asked, which usually means 256 to 512 tokens for factoid retrieval and 1024 tokens or more for analytical queries.

Once you have done the structural extraction above, there are still cases where you need to chunk inside a section. A 50-page legal definitions section, a single chapter of a technical manual, a long support ticket thread.

The size choice matters and it is not a constant. It depends on the query type your agent gets.

| Query type | Recommended chunk size | Why |

|---|---|---|

| Factoid (names, dates, prices) | 256 to 512 tokens | Smaller chunks keep the fact precise, less noise in the vector |

| Analytical (compare, summarize, reason) | 1024+ tokens | Bigger chunks preserve enough context for multi-step reasoning |

| Cross-reference (clause-to-clause) | Section-level (no chunking) | Chunking destroys the references the agent needs |

| Tabular (rows, columns, totals) | Whole-table (no chunking) | Flatten only at retrieval, never at storage |

A practical rule I would give a junior engineer: build two retrieval indexes per document, one at section-level for analytical queries, one at 384-token chunks for factoid queries, and route the query through a small classifier first.

The cost is one extra embedding pass at ingestion. The return is a 10 to 15 point retrieval accuracy lift in mixed-query workloads.

The semantic-versus-recursive choice matters less than most teams think. Chroma’s analysis shows recursive splitting at 400 tokens hits 85 to 90 percent recall, semantic chunking hits 91 to 92 percent.

The 2 to 3 point gain costs you embedding every sentence in your corpus, which is a 10x cost increase at scale. Pick recursive unless your domain specifically rewards the extra recall.

Frequently Asked Questions

What is the right chunk size for a RAG agent?

There is no single right size. Use 256 to 512 tokens for factoid queries (names, dates, prices), 1024 tokens or more for analytical queries (compare, summarize, reason), and skip chunking entirely for cross-reference or tabular content. Mixed-query workloads benefit from running two indexes in parallel.

Should I use semantic chunking or recursive chunking?

Recursive splitting at 400 tokens delivers 85 to 90 percent recall and is 10x faster to compute than semantic chunking, which lands at 91 to 92 percent. The 2 to 3 point gain rarely justifies the cost at scale. Use recursive unless your specific domain rewards the extra recall.

Why do tables break my RAG agent?

Most chunkers flatten tables to text, which destroys the row-column relationship. The header ends up in one chunk and the value in another, and the agent cannot recover the link at retrieval time. Page-level chunking won NVIDIA’s 2024 benchmark at 0.648 accuracy specifically because it preserved table layouts. Treat tables as atomic units and only flatten at retrieval if you must.

What is late chunking and is it worth implementing?

Late chunking embeds the full document first, then chunks the embeddings rather than the raw text. It preserves cross-chunk references like pronouns and gives a measured 6.5 point lift in nDCG@10. It is worth implementing for documents with heavy pronoun use or cross-section references, like legal contracts or technical manuals.

How do I know if my RAG agent is failing because of chunking?

The fastest diagnostic is to test cross-field queries (questions that depend on combining facts from different parts of the document). If single-field retrieval works fine but cross-field accuracy collapses, the chunker is the layer destroying signal. The fix is summary-plus-metadata ingestion, not better embeddings.

What does summary-first RAG look like in code?

Read the document whole, run an LLM pass that extracts sections plus metadata fields the workflow needs, generate a 150 to 250 token summary per section, embed and store the summary as the primary retrieval unit, attach metadata as a hard filter, and keep the raw text linked by ID for verbatim grounding. The Stanford AI Index 2025 reports retrieval accuracy improvements above 30 percent on enterprise document sets when this pattern is applied, citing it as the dominant production retrieval pattern of 2026.