What Happened: Alec Radford and two collaborators released talkie, a 13B language model trained only on pre-1931 English text. It is the first frontier-credentialed researcher experiment that explicitly separates what a large language model has learned from what the modern web told it to memorize.

The pre-1931 language model talkie went live this morning, and the implication is bigger than the name lets on. Alec Radford, the lead author of the original GPT-1 and GPT-2 papers at OpenAI, partnered with Nick Levine and David Duvenaud to train a 13-billion-parameter model on 260 billion tokens of text that all predate January 1, 1931.

No web crawl, no Wikipedia, no modern code, no Reddit. The training corpus is just historical books, newspapers, scientific journals, patents, and case law from before the radio became mainstream. The model literally cannot know about anything that happened after 1930, which is the entire point.

By midday on April 28, 2026, the release had broken out of the AI research bubble. It hit the front page of Hacker News, Simon Willison published a write-up before noon, and the r/singularity discussion was already debating what the result means for reasoning research.

The reason it travelled this fast is that talkie is the cleanest experiment anyone has built so far for separating what a model has learned from what the modern web taught it to repeat.

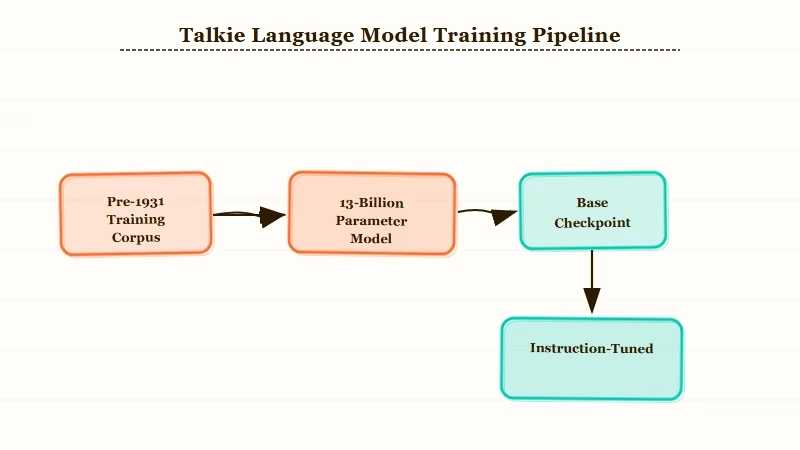

The release ships in two checkpoints, both Apache 2.0 licensed and posted to Hugging Face. There is a base model for raw evaluations, plus an instruction-tuned variant that learned to follow instructions from period etiquette manuals, letter-writing guides, and encyclopedias rather than the post-2020 instruction datasets every modern LLM uses.

The team also trained an identical modern twin on FineWeb so the comparison is apples to apples on architecture and parameter count.

What Actually Happened

talkie is a 13-billion-parameter language model trained exclusively on 260 billion tokens of English text published before January 1, 1931.

The project is led by Alec Radford, the same researcher who wrote the original GPT-1 and GPT-2 papers, alongside Nick Levine and David Duvenaud.

The release happened on April 28, 2026, with a public website at talkie-lm.com, a Hugging Face collection of model weights, and a GitHub repository for the inference library.

The training corpus is what makes the project unusual to me. Books, newspapers, periodicals, scientific journals, patents, and case law from before 1931 were assembled into a 260-billion-token dataset.

Roughly 30% of training compute was lost to OCR transcription errors in the historical texts, so the effective scale is smaller than the raw token count suggests. The team estimates the corpus could grow to over a trillion tokens of historical text with continued digitization work.

The instruction-tuned variant is the part most people will miss on a first read. The team built a novel instruction-following dataset entirely from pre-1931 source material, including Victorian etiquette manuals, formal letter-writing guides, encyclopedias, and poetry collections. They then ran online DPO on top of supervised fine-tuning.

The result is a model that follows modern English instructions but defaults to the diction, framing, and worldview of a well-read person from 1925. The team also trained a modern twin on FineWeb, the standard 2024-vintage web dataset, using the exact same architecture and parameter count.

That gives the team a clean control group. When talkie underperforms on a task, they can ask whether the gap is due to the cutoff or the architecture. Early benchmark results say the gap is almost entirely about the cutoff.

Why This Is a Bigger Deal Than It Sounds

The talkie release matters because it is the first clean separation of LLM reasoning from LLM memorization.

Modern AI tool reviews tend to confound capability and corpus size, because every commercial LLM has trained on something close to whatever question you ask it. talkie cannot have memorized any task involving information from after 1930.

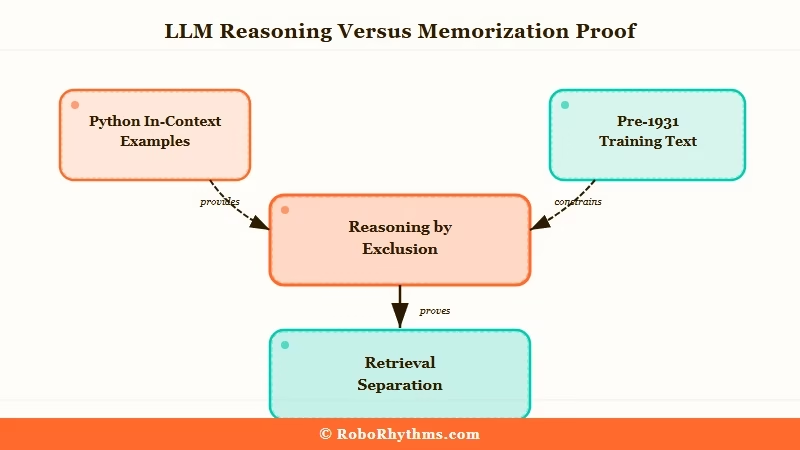

The headline finding is not that talkie can write like a Victorian novelist, although it can. It is that talkie picks up Python from in-context examples despite zero modern code in its training set.

When researchers gave it a few example programs, it produced new correct programs, including one-line solutions and minor modifications to existing functions. It even implemented the decoding function of a rotation cipher when given only the encoding function.

That is not retrieval. There is no Python in pre-1931 text, and the model is doing inverse reasoning from algebraic structure it learned from period mathematics texts.

The way I see it, that single result is the most important reasoning datapoint anyone has produced in 2026. talkie is solving a task where memorization is structurally impossible, and it is solving it from in-context examples alone.

The future-prediction angle is the second finding worth tracking. The team has started measuring how surprised talkie is by descriptions of post-1930 events, with the prediction that “surprise” should grow as the historical distance from 1930 increases.

Early results show exactly that pattern, with a sharp jump in surprisingness around the 1950s and 1960s as the post-war information explosion outruns what a 1930 corpus could anticipate. The methodology is reusable, and any future cutoff-locked model can be tested against any post-cutoff event to estimate what an LLM’s prior would have predicted.

There is a darker finding the release notes do not hide. talkie produces output that reflects the racial, gender, and colonial assumptions of 1925 print media, because that is what its training corpus contains.

The model is not malicious; it is mirroring its data. The team frames this as evidence of how much modern LLMs depend on post-2010 moderation data and post-training alignment to soften the rough edges of pre-internet text.

What I find most consequential about that framing is the quantification. “AI is biased” stops being a vague accusation and becomes a measurable function of training data composition. You can read about Anthropic’s recent ID verification rollout for the modern alignment context this experiment implicitly critiques.

What This Means for You

The talkie release changes how to evaluate three specific claims AI vendors make. Each one was hand-waved before this morning, and each one now has a measurable answer:

- Reasoning quality. Whether the model has truly learned to reason, or whether it has memorized something close to the answer from web data.

- Recency illusion. Whether the model is answering a 2024 question from training-data overlap, or by genuine inference from older priors.

- Alignment cost. Whether and how much modern moderation and post-training alignment trade away raw model quality.

Modern reviews of GPT-5.5 versus GPT-5.4 and Claude Opus 4.7 tend to confound capability and corpus size. talkie is the first model where the corpus is genuinely small and historical, so any task it can do is a lower bound on what 13 billion parameters can learn from any dataset. The early benchmark numbers give reviewers a real baseline for the first time.

The recency illusion is the bigger practical issue for working professionals. When ChatGPT or Claude answers a 2024 question correctly, you have no clean way to tell whether the answer came from reasoning or from a verbatim match to a recent web page.

With talkie, you have a model that cannot have seen the question. If it gets a 2024 question right, that is reasoning by exclusion.

The same logic applies to the 60-year-old math problem GPT-5.4 solved last week. talkie cannot have seen any solution attempts after 1930. If a future fine-tune of talkie can attack pre-1931 unsolved problems, the reasoning credit will be much cleaner than current modern-model results allow.

The alignment-cost question is the third shift. talkie is a public reminder that without modern moderation data, an LLM defaults to whatever its training corpus contained.

Every commercial LLM you use today is doing meaningful alignment work to keep period-typical biases from surfacing, and that work has a quality cost. Knowing the cost is non-zero, and being able to measure it against a vintage baseline, will put pressure on the major labs to be more transparent about what their alignment pipelines trade away.

The practical takeaway for AI buyers is small but real. If you are evaluating an AI tool and the vendor claims their model “reasons”, ask what their generalization-versus-memorization story is.

The honest answer used to be that there was no good way to test it. Starting today, there is a yardstick. Here is what a credible reasoning test looks like in practice:

Vague: “Does Claude really reason or does it memorize?”

Specific: “Run a 2024 trivia question through Claude and through a talkie-13B fine-tune. If Claude is correct and talkie cannot answer, the result is retrieval. If both arrive at correct answers using the same chain of reasoning from older priors, the result is closer to genuine inference.”

| Question | What modern LLMs let you check | What talkie lets you check |

|---|---|---|

| Did the model reason or retrieve? | No clean answer, web-trained models may have seen it | Reasoning by exclusion if model is talkie-locked |

| How much does post-2010 alignment cost on quality? | Vendor blogs only | Quantifiable via twin comparison |

| Can a 13B model learn Python with zero code in training? | Untested before April 2026 | Yes, slowly, from in-context examples |

| What was an LLM’s prior in 1930 about a 2026 event? | Not measurable | Measurable as a “surprise” score |

What Comes Next

The most likely follow-up is a wave of vintage-cutoff models from other labs.

The methodology is reproducible, Apache 2.0 licensing makes derivative work easy, and the research narrative is fresh enough that any lab with spare compute can fork the project. Expect 1950, 1980, and pre-internet 1990 cutoff models within the next 90 days.

Releases of this kind tend to trigger a small avalanche, the same way the Kimi K2.6 open-weights release triggered a Chinese open-source frontier-model wave earlier this month. I would not be surprised to see a vintage-cutoff Chinese model before June, since the Chinese open-weights ecosystem moves fastest on novel research narratives.

The second-order effect is on benchmarks. Most reasoning benchmarks today implicitly assume the model has seen something close to the answer.

talkie breaks that assumption, and new benchmarks specifically designed for cutoff-locked models will arrive within six weeks. The first round will be embarrassing for at least one major commercial lab, because somebody will produce a “reasoning vs retrieval” leaderboard that exposes how much of current capability is retrieval.

The third effect is on training-data discourse. Lawsuits, ethics committees, and the broader public conversation about what AI models “know” have been hampered by an inability to measure how much of an LLM’s behaviour comes from data composition versus architecture. talkie supplies the measurement, and I would expect Stanford’s AI Index report to cite the methodology in its 2026 update.

What I am watching most closely is whether the team releases a bigger talkie before the end of the year. 13B is small by 2026 standards, and the marginal value of vintage-cutoff models grows fast with scale. A 70B talkie would let researchers test reasoning at frontier-comparable parameter counts, which would make the project the most consequential AI research release of 2026 by a wide margin.