Bottom Line: Owl Alpha is a free stealth foundation model on OpenRouter with a 1.05M token context window and zero per-token pricing. It is genuinely useful for long-context analysis and patient agentic workflows. It is the wrong choice for anything interactive because it runs at about 12 tokens per second, and the free tier logs your prompts for model improvement.

If you have spent any time inside OpenRouter in the last two weeks, you have probably noticed Owl Alpha sitting at the top of the model picker with a $0 price tag.

It is the most-clicked stealth release of late spring 2026, and most of the questions in r/JanitorAIOfficial and r/AIAgents about it are the same: is it free for real, what is the catch, and is it good.

This Owl Alpha review covers what the model really is, what the trade-offs look like in normal use, and where it fits in a stack that already has Claude, GPT-5.5, and the open-weight Chinese models.

The way I see it, Owl Alpha is one of the most useful free tools released this year, but only if you understand the speed tax and the data terms before you wire it into anything important.

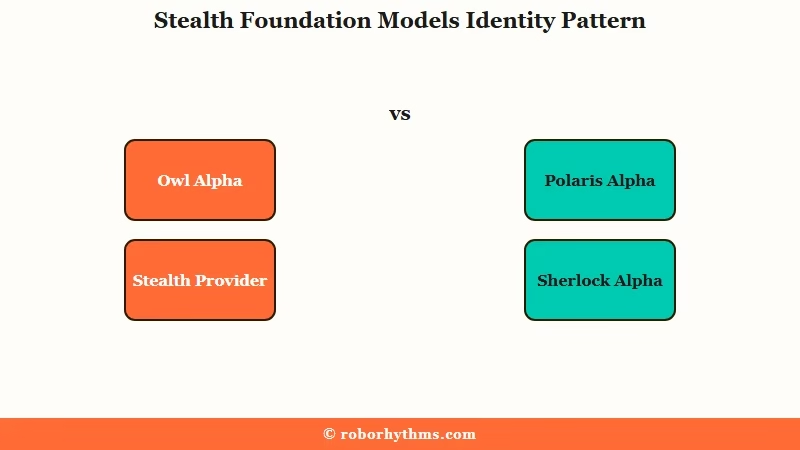

Released April 28, 2026 by an unnamed “Stealth” provider, the model is almost certainly a cloaked snapshot of an upcoming frontier release.

Recent precedent on OpenRouter points the same way: Polaris Alpha turned out to be GPT-5.1, and Sherlock Alpha turned out to be Grok 4.1. Owl Alpha fits the same template.

What Is Owl Alpha and Why Is It Free

Owl Alpha is a 1.05M-token-context foundation model routed through OpenRouter under a “Stealth” provider label, given away free during a preview window in exchange for usage data that helps the unnamed developer tune the next public release.

It is not a public model with permanent free pricing.

The “Alpha” pattern on OpenRouter has been consistent through 2026. A frontier lab quietly drops a checkpoint under a codename, leaves it free for a few weeks while the routing infrastructure logs prompts and completions, then either renames it under the lab’s brand at standard pricing or pulls it offline.

Treating Owl Alpha as a permanent free tier is the wrong mental model. Treating it as a short-window stress test of a probably-very-capable model is the right one.

From my own runs over the last week, the behavioural fingerprint is interesting. It is patient with long documents, tolerates messy structured inputs, and produces output that reads like a careful Claude 4-series response rather than a clipped GPT-5.5 one.

I would not bet money on which lab is behind it, but the prose cadence and instruction-following style narrow the field.

The cost of all this is that prompts and completions are explicitly logged. OpenRouter’s listing for Owl Alpha says so directly. If your workload involves anything sensitive, that one line of fine print is the whole story.

How Owl Alpha Performs on Real Agentic Workloads

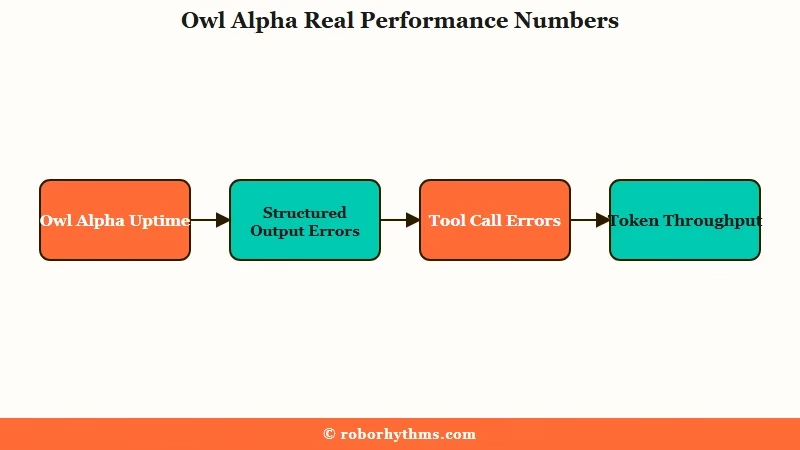

Owl Alpha hits 100% uptime with a 0.39% structured-output error rate and a 4.73% tool-call error rate, but throughput is 12 tokens per second and latency is 4.91 seconds.

That makes it strong for batch agentic work and bad for anything a human is staring at.

The reliability numbers are the headline I would pay attention to. A 0.39% structured-output error rate is genuinely strong for an agentic workflow where you are asking the model to emit valid JSON or call tools with well-formed arguments.

The 4.73% tool-call error rate is the soft spot. For one-shot tool calls it is fine. For chained agentic flows where 8 tool calls run in sequence, that compounds: roughly 32% odds that at least one call in an 8-step chain misfires.
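The compounding figure can be checked with a few lines of Python. This is a minimal sketch that assumes every tool call fails independently with the same 4.73% probability, which real agent chains will only approximate; the function name is illustrative.

```python
def chain_failure_probability(per_call_error: float, steps: int) -> float:
    """Probability that at least one of `steps` independent calls misfires."""
    return 1.0 - (1.0 - per_call_error) ** steps


# 8 sequential tool calls at the listed 4.73% per-call error rate
print(f"{chain_failure_probability(0.0473, 8):.1%}")  # 32.1%
```

The same function shows how quickly longer chains degrade, which is why the per-call rate matters more than it looks on a one-shot test.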

What I would recommend, given those error rates: pair Owl Alpha with a retry-on-malformed-tool-call wrapper. The wrapper detects when the tool arguments fail validation, sends a one-line correction prompt back to the model, and re-runs. With a single retry, that 4.73% per-call error rate drops to about 0.22% effective, which is acceptable.
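A wrapper along those lines might look like the sketch below. It is an assumption-heavy illustration, not a drop-in for any particular agent runtime: `model` is a hypothetical callable that takes a list of chat messages and returns the raw tool-argument string, and validation is reduced to "parses as a JSON object and contains the required keys". The 0.22% figure is 0.0473 squared, so it also assumes the retry fails independently of the first attempt.

```python
import json
from typing import Callable

# Hypothetical model interface: chat messages in, raw tool-argument string out.
ModelFn = Callable[[list[dict]], str]


def call_tool_with_retry(
    model: ModelFn,
    messages: list[dict],
    required_keys: set[str],
    max_retries: int = 1,
) -> dict:
    """Request tool arguments; on invalid output, send a one-line correction and re-run."""
    history = list(messages)
    for _ in range(max_retries + 1):
        raw = model(history)
        try:
            args = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
            if not isinstance(args, dict):
                raise ValueError("arguments must be a JSON object")
            missing = required_keys - set(args)
            if missing:
                raise ValueError(f"missing keys: {sorted(missing)}")
            return args
        except ValueError as err:
            # Feed the bad output and a short correction prompt back to the model.
            history = history + [
                {"role": "assistant", "content": raw},
                {"role": "user", "content": f"Invalid tool arguments ({err}). Reply with corrected JSON only."},
            ]
    raise RuntimeError(f"tool arguments still invalid after {max_retries} retries")
```

Keeping `max_retries` at 1 matches the single-retry case described above; raising it trades more slow round trips for a lower residual error rate.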

The speed picture is what kills it for interactive use. At 12 tokens per second, a 400-word response takes roughly 35 to 45 seconds to stream, depending on tokenisation, on top of the 4.91-second latency.

For a chat UI, that is bounce-rate territory. For an overnight batch agent, that is fine. The tradeoff is straightforward.
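To put a number on the wait for your own response lengths, a rough estimator is enough. The sketch below uses the 12 tokens per second and 4.91-second latency quoted above; the tokens-per-word ratio is an assumption (about 1.0 to 1.3 for English prose, depending on the tokenizer), so treat the result as a ballpark.

```python
def estimated_seconds(
    words: int,
    tokens_per_second: float = 12.0,   # throughput quoted for Owl Alpha
    latency_s: float = 4.91,           # latency quoted for Owl Alpha
    tokens_per_word: float = 1.3,      # assumption; varies by tokenizer and language
) -> float:
    """Rough wall-clock time for a response of `words` words."""
    tokens = words * tokens_per_word
    return latency_s + tokens / tokens_per_second


print(round(estimated_seconds(400), 1))                       # 48.2
print(round(estimated_seconds(400, tokens_per_word=1.0), 1))  # 38.2
```

Either way the answer lands well outside what a chat user will sit through, and well inside what a batch job will not notice.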

| Workload | Owl Alpha fit | Speed acceptable? |
|---|---|---|
| Long-context document analysis | Strong (1M context) | Yes (batch mode) |
| Code refactor across whole repo | Strong | Yes if you walk away |
| Interactive chat / roleplay | Poor | No (12 tokens per second is too slow) |
| Live tool-calling agent (Claude Code, OpenClaw) | Mixed | Use only for non-time-sensitive runs |
| Background data extraction | Strong | Yes |
| Production user-facing API | Poor | No (latency + logging) |

A worked example of the speed gap, because it is the difference between “free is great” and “free is a trap”:

Vague: “Use Owl Alpha for my agent because it is free.”

Specific: “Use Owl Alpha for the overnight document-summarisation queue where 30-second response time is fine and I want the 1M-token context to fit the whole 600-page filing into one prompt. Use Claude Sonnet or GPT-5.5 for the live chat front end where users expect a response inside two seconds.”

The second framing is what makes the free tier genuinely useful instead of a footgun.
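That split can be written down as a routing rule so it does not depend on anyone remembering it. This is a minimal sketch: the model slugs are placeholders I am assuming for illustration (check the OpenRouter listing for the real identifiers), and the context limits are the ones quoted in this review.

```python
from dataclasses import dataclass

# Hypothetical slugs and the context limits quoted in this review.
OWL_ALPHA = "openrouter/owl-alpha"
FAST_MODEL = "anthropic/claude-sonnet-4"
CONTEXT_LIMIT = {OWL_ALPHA: 1_048_576, FAST_MODEL: 200_000}


@dataclass(frozen=True)
class Workload:
    interactive: bool    # is a human waiting on the response?
    sensitive: bool      # would prompt logging be a problem?
    prompt_tokens: int


def pick_model(w: Workload) -> str:
    """Route patient, non-sensitive jobs to Owl Alpha and everything else to the paid model."""
    model = FAST_MODEL if (w.interactive or w.sensitive) else OWL_ALPHA
    if w.prompt_tokens > CONTEXT_LIMIT[model]:
        raise ValueError(f"{w.prompt_tokens} tokens will not fit {model}; chunk the input first")
    return model


print(pick_model(Workload(interactive=False, sensitive=False, prompt_tokens=800_000)))
print(pick_model(Workload(interactive=True, sensitive=False, prompt_tokens=2_000)))
```

Raising an error for oversized sensitive jobs is a deliberate choice here: silently sending them to the logged free tier would be the footgun.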

Who Should Use Owl Alpha and Who Should Skip It

Use Owl Alpha if you have a patient batch workload, no privacy constraints, and a use case that benefits from the 1M context window.

Skip it if you are building anything interactive, anything regulated, or anything that needs more than 15 tokens per second.

The audiences I would point at it:

- Solo developers running overnight Claude Code or OpenClaw agents who want free inference and do not mind run times of 30 to 60 minutes.

- Researchers and writers using the model to summarise or analyse long-form documents that would otherwise need chunking. The 1M context is the killer feature.

- Indie builders prototyping new agent designs where the per-token cost would have made them quit. Free + slow beats paid + abandoned.

- Students and hobbyists learning prompt engineering and tool-use patterns.

The audiences I would tell to stay away:

- Anyone building a chat product where users expect sub-2-second response.

- Anyone working in healthcare, legal, finance, or any other domain where prompt logging is a compliance problem.

- Anyone running production tool chains where the 4.73% tool-call error rate compounds across multiple steps.

- Anyone whose work involves protected IP, trade secrets, or unreleased product information.

For the privacy-sensitive group, the right move is to either stay on Claude (Anthropic does not train on API inputs by default) or run a self-hosted open-weight model.

The pattern in our autonomous Claude Code agent piece is set up for exactly that case. For the chat-product group, look at the comparison in our DeepSeek V4 Janitor AI coverage to see what fast and capable looks like for that use case.

Pricing Breakdown and the Free Tier Catch

The catch is data, time, and uncertainty: the model is free now, your prompts are logged now, and the free pricing will not last.

The other Alpha models on OpenRouter have all transitioned to paid pricing or been retired once the testing window closed. Owl Alpha will follow the same path.

The current pricing snapshot:

| Field | Owl Alpha | Claude Sonnet 4 | GPT-5.5 |
|---|---|---|---|
| Input price per 1M tokens | $0.00 | $3.00 | $2.50 |
| Output price per 1M tokens | $0.00 | $15.00 | $12.50 |
| Context window | 1,048,576 tokens | 200K | 400K |
| Max output | 262,144 tokens | 64K | 128K |
| Provider | Stealth (cloaked) | Anthropic | OpenAI |
| Prompt logging | Yes (model improvement) | No (API default) | No (API default) |
| Tool call error rate | 4.73% | <1% reported | <1% reported |
| Throughput (tokens per second) | 12 | ~80 | ~70 |

The math at $0 is hard to argue with for batch work. A run that reads 100K tokens and writes 100K tokens would cost $0.30 for input plus $1.50 for output on Claude Sonnet 4, or $1.80 in total, and costs nothing on Owl Alpha. For a developer running 20 of those a day, that is about $36 a day saved during the free window.
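The same arithmetic is easy to rerun for your own volumes. The sketch below uses the per-million-token prices from the table and assumes a run with 100K input tokens and 100K output tokens, which is what the $0.30 and $1.50 figures imply at Claude Sonnet 4 pricing.

```python
def run_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
) -> float:
    """Cost of one run given prices in USD per 1M tokens."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


sonnet = run_cost_usd(100_000, 100_000, 3.00, 15.00)
owl = run_cost_usd(100_000, 100_000, 0.00, 0.00)
print(f"per run: ${sonnet:.2f} vs ${owl:.2f}")     # per run: $1.80 vs $0.00
print(f"20 runs a day: ${20 * sonnet:.2f} saved")  # 20 runs a day: $36.00 saved
```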

The thing to plan for: when the window closes, your pipeline should be portable. Build against the OpenAI-compatible chat completion API that OpenRouter exposes, keep your prompts in a shape that works on any reasonable model, and have a switchover path ready when the price changes or the model goes offline.
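One way to keep that switchover path ready is to treat the model name as configuration and fall through a list. The sketch below targets OpenRouter's OpenAI-compatible chat completions endpoint using only the standard library; the model slugs are hypothetical placeholders, and `send` is injectable so the fallback logic can be exercised without network access.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
# Hypothetical slugs: first choice, then the paid fallback.
MODEL_CHAIN = ("openrouter/owl-alpha", "anthropic/claude-sonnet-4")


def build_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the given model."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str, api_key: str, models=MODEL_CHAIN, send=urllib.request.urlopen) -> str:
    """Try each model in order and return the first successful completion text."""
    last_error = None
    for model in models:
        try:
            with send(build_request(model, prompt, api_key)) as resp:
                body = json.load(resp)
            return body["choices"][0]["message"]["content"]
        except (OSError, KeyError, ValueError) as err:  # HTTP/network errors subclass OSError
            last_error = err
    raise RuntimeError(f"all models failed: {last_error}")
```

When the free window closes, the change is one line: reorder or replace the entries in `MODEL_CHAIN`, with the API key read from an environment variable rather than hard-coded.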

Our coverage of Claude finding zero-day vulnerabilities walks through how to keep a model-agnostic agent design that survives this kind of provider change.

Verdict on Whether Owl Alpha Is Worth Switching To

Owl Alpha is a strong “and”, not an “instead”: add it to your stack for batch work and long-context analysis, and keep Claude or GPT-5.5 for anything interactive or sensitive.

It is genuinely useful right now because the free tier removes the friction of trying a 1M-context model on real workloads. It will not replace your daily driver, and it should not.

Pros:

- Free input and output tokens during the testing window

- 1.05M token context window, the largest currently available in the consumer-accessible tier

- 100% uptime and 0.39% structured-output error rate, both genuinely strong

- Compatible with mainstream agent runtimes (Claude Code, OpenClaw, Hermes Agent, Kilo Code, Roo Code) via OpenAI-compatible API

Cons:

- 12 tokens per second throughput, far too slow for interactive chat

- 4.73% tool-call error rate compounds across multi-step agent chains

- Prompts and completions are logged for model improvement, which rules out any privacy-sensitive workflow

- “Stealth” provider, no transparency on developer, no guarantee of continuity

- Free pricing is a testing window, not a permanent tier

The way I see it, the most useful thing about Owl Alpha is that it gives indie developers and researchers a real chance to test 1M-token agent patterns without burning cash.

That alone earns it a slot in the toolbox for the next few weeks. Just keep your eyes open for the day the price changes, because it will.

Frequently Asked Questions

Is Owl Alpha really free or are there hidden costs?

The current price on OpenRouter is $0 per million input and output tokens. The cost is that prompts and completions are logged and likely used to improve the model. For non-sensitive workloads that is acceptable; for anything proprietary it is not.

What model is really behind Owl Alpha?

The provider is listed as “Stealth” with no developer attribution. Based on the OpenRouter pattern, where Polaris Alpha turned out to be GPT-5.1 and Sherlock Alpha turned out to be Grok 4.1, Owl Alpha is almost certainly a cloaked frontier model from one of the major labs. The exact identity has not been confirmed.

How does the 1M context window compare to Claude or GPT?

Owl Alpha’s 1,048,576-token context is roughly 5x Claude Sonnet 4’s 200K and 2.6x GPT-5.5’s 400K. For tasks like analysing whole legal filings, full codebases, or multi-document research syntheses, that is a meaningful capability gap.

Can I use Owl Alpha inside Claude Code or OpenClaw?

Yes. The model exposes an OpenAI-compatible API through OpenRouter and is listed as compatible with Claude Code, OpenClaw, Hermes Agent, Kilo Code, and Roo Code. Configure it as a custom model endpoint inside your tool’s settings.

Will the free pricing last?

No. Other Alpha models on OpenRouter have moved to paid pricing or been retired once the testing window closed. Plan your stack so it can switch providers without rewriting your prompts.

Is Owl Alpha safe for sensitive work?

No. The provider logs prompts and completions. If you are working with personal health information, regulated financial data, unreleased product details, or any other sensitive content, use a provider with a no-training data clause instead.