Slice Knowledge Honest Review

Imagine transforming dense manuals into interactive e-learning courses with a snap of your fingers. Slice Knowledge turns this into…

Picture a world where your paintbrush never runs out of color, your pen never dries up, and your…

Ever felt like you’re trying to build a skyscraper with a toy hammer? That’s what crafting a business…

Picture yourself sipping a cup of coffee, staring at a blank screen, and wrestling with writer’s block. Now,…

Picture yourself in front of your computer, staring at a blank screen. The cursor blinks, almost taunting you,…

Ever stumbled upon a tech tool so cool it made you rethink the way you do things? Well,…

Imagine stepping into a world where machines think, learn, and even make decisions. That’s the realm of Artificial…

Picture this: You wake up one morning to find that your smartphone has scheduled your day, your smart…

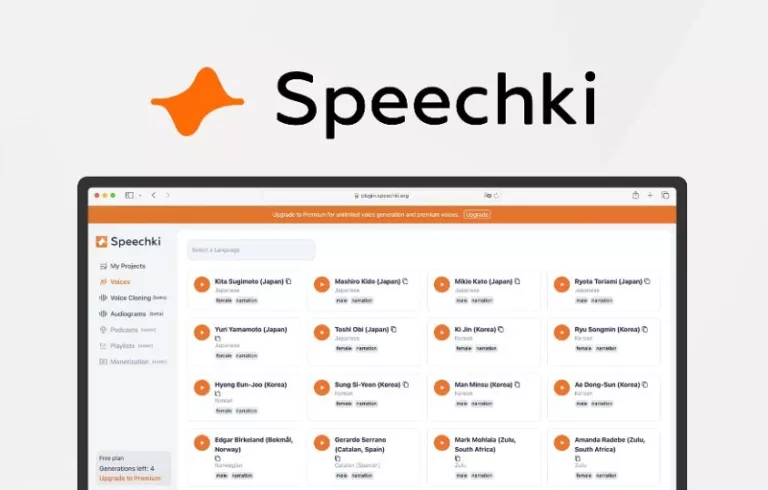

Are you tired of listening to text-to-speech outputs that sound like they’re coming straight from a vintage computer…

Ever found yourself stuck in the content creation rut, feeling like every video idea has been done to…