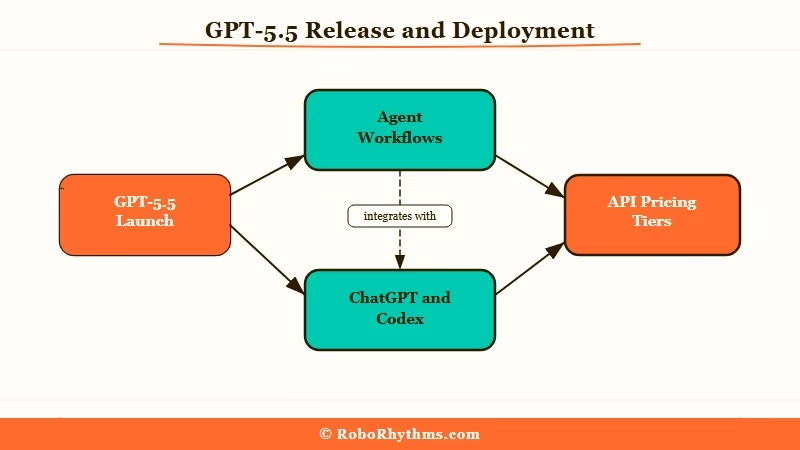

What Happened: OpenAI shipped GPT-5.5 on April 23, 2026, just six weeks after GPT-5.4, with a clear pitch around agentic workflows, multi-step task completion, and computer use. API pricing lands at $5 input and $30 output per million tokens for standard, and $30 and $180 for GPT-5.5 Pro. The real story is not the benchmark wins; it is that OpenAI is no longer selling a chat model. They are selling an agent.

OpenAI shipped GPT-5.5 today, April 23, 2026. The release came barely six weeks after GPT-5.4, and OpenAI is pitching it as “a new class of intelligence for real work.” Every major outlet is covering it as another benchmark leap, and I think that framing misses the point.

From what I have seen reading the launch material, this is not a model update. It is a positioning shift.

OpenAI has stopped selling a chat completion API and started selling an agent. The language in the announcement, the capabilities they are leading with, even the example tasks, are all agentic. The benchmark stuff is the sideshow.

If you are building with AI, running agents, or just watching where the industry is going, the GPT-5.5 release matters more than the version number suggests. Here is what shipped, why it is a bigger deal than it sounds, and what to do about it this week.

What OpenAI Released in GPT-5.5

GPT-5.5 is OpenAI’s new flagship model, available today in ChatGPT and Codex, pitched around agent workflows, computer use, and multi-step task completion rather than chat quality.

It replaces GPT-5.4 as the default on Plus, Pro, Business, and Enterprise tiers, with a GPT-5.5 Pro variant gated to the three paid tiers.

The headline capability, per OpenAI’s own announcement, is that you can “give GPT-5.5 a messy, multi-part task and trust it to plan, use tools, check its work, navigate through ambiguity, and keep going.” That is the sales pitch, in their words.

Not “it answers questions better.” Not “it writes cleaner code.” It finishes tasks without you managing every step.

The concrete capability list they lead with:

- Coding and debugging across longer contexts without losing thread

- Computer use for operating software and navigating interfaces

- Spreadsheet and document work, including real filling and manipulation

- Multi-step web research that reads, cross-references, and cites

- Tool orchestration across a session without explicit routing

The API pricing is where things get spicy. Standard GPT-5.5 is $5 per million input tokens and $30 per million output, which undercuts Claude Opus on input cost but sits above Sonnet. GPT-5.5 Pro at $30 and $180 is genuinely expensive, more than Anthropic’s Opus pricing.

The Pro tier is for serious agent work where you run long context and generate a lot of tokens per task.
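To make those numbers concrete, here is a rough sketch of what one agent task costs on each tier. The per-million-token prices are the ones quoted above; the token counts are a made-up workload, and the dictionary keys are just labels for this example, not confirmed API model IDs.

```python
# USD per million tokens, as quoted in this article.
# The keys are illustrative labels, not confirmed API model identifiers.
PRICES = {
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    "gpt-5.5-pro": {"input": 30.00, "output": 180.00},
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one task for the given token counts."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical long-running agent task: 200k tokens read, 20k tokens written.
for model in PRICES:
    print(f"{model}: ${task_cost(model, 200_000, 20_000):.2f}")
```

On that assumed workload the standard tier comes out around $1.60 per task and Pro around $9.60, a 6x gap that follows directly from the price list. Swap in your own token counts before drawing conclusions.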

Axios also reported the internal code name is “Spud.” I have no idea why. If OpenAI’s naming scheme now includes root vegetables, that is its own story.

We have also seen the GPT-5.4 release follow the same staged rollout pattern, so the infrastructure playbook is now routine.

Why Does GPT-5.5 Matter More Than Another Version Bump?

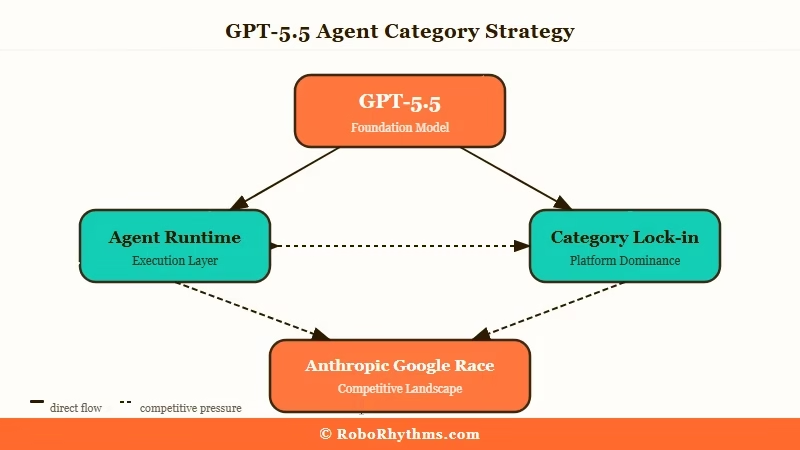

GPT-5.5 matters because it is the first OpenAI flagship positioned primarily as an agent runtime rather than a chat model.

The benchmark claims against Gemini 3.1 Pro and Claude Opus 4.5 are interesting but secondary. What changed is the product story.

In my experience reading OpenAI launches going back to GPT-4, the company has always led with quality metrics. Reasoning scores, coding benchmarks, token-level evaluations.

GPT-5.5 leads with outcomes. “It completes the task.” “It uses your tools.” “It doesn’t need you to babysit it.” That is a different market than chat completions, and it puts pressure on a different competitive set.

The rate of release compounds the point. Six weeks from GPT-5.4 to GPT-5.5 is not normal model release cadence. That is product-launch cadence.

When a frontier lab ships that fast, it is because they are racing to lock down a category, not to publish a paper. The category they are locking down, I would argue, is agents.

This also flips how Anthropic and Google have to respond. Anthropic’s Opus 4.7 leans into reasoning and long-context quality. Google’s Gemini 3.1 Pro leans into multimodal.

Neither of those framings translates to “but can it run a workflow.” If OpenAI owns agent framing for the next quarter, they will pull enterprise contracts that were up for grabs.

| Vendor | Latest flagship | Pitched as | Agent-specific framing |
|---|---|---|---|
| OpenAI | GPT-5.5 | Agent runtime | Yes, primary |
| Anthropic | Claude Opus 4.7 | Reasoning / coding | Partial, via Managed Agents |
| Google | Gemini 3.1 Pro | Multimodal quality | Limited |
| xAI | Grok 4.20 | Speed / personality | No |

What Does GPT-5.5 Mean for How You Build With AI?

If you are building products or workflows with AI, GPT-5.5 changes the economics of agent deployment but not the architecture.

The tools you use, the prompt patterns, the tool-calling loops, all still apply. What changes is how far a single model call gets you before handoff.

The way I see it, three practical shifts land today. First, if you were chaining three or four GPT-5.4 calls to get through a multi-step task, you can probably collapse that to one or two with GPT-5.5. The reliability improvements on multi-step task completion are real enough that the extra per-token cost is paid back in fewer round trips and less glue code.

Second, the Pro pricing recalibrates the “should I use Claude for this” question. At $30 input and $180 output for Pro, GPT-5.5 Pro is priced above Claude Opus 4.5 for most workloads.

If you were on Opus for quality reasons, Pro is the like-for-like swap. If you were on Opus for cost, Claude stays cheaper. This matters because Anthropic just reported $30B ARR, mostly from API customers choosing Claude for exactly that quality-at-price sweet spot.

Third, if you are running local LLMs or self-hosted agent infrastructure, this does not affect you directly, but it will affect your users’ expectations. Once people get used to a model that finishes messy tasks without prompting, the bar for any agent product goes up.

Open-source coding agents built on smaller models will feel slower by comparison even when they are objectively faster per token.
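The first shift above is really a break-even claim, so here is a minimal sketch of the arithmetic. It uses the standard GPT-5.5 prices quoted earlier and an invented per-call token profile. It deliberately does not plug in a GPT-5.4 price, since none is given here; instead it computes how cheap each call in the old chain would have to be to match the collapsed version.

```python
def call_cost(input_tokens: int, output_tokens: int,
              price_in: float, price_out: float) -> float:
    """USD cost of one call; prices are USD per million tokens."""
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


def breakeven_old_call_cost(new_calls: int, old_calls: int,
                            new_call_cost: float) -> float:
    """Per-call cost at which an old_calls-step chain matches
    new_calls calls on the newer model."""
    return new_calls * new_call_cost / old_calls


# Hypothetical profile: 30k tokens in, 3k tokens out per call,
# priced at the standard GPT-5.5 rates quoted in this article.
new_call = call_cost(30_000, 3_000, price_in=5.00, price_out=30.00)

# Collapsing a 4-call chain into 2 calls.
threshold = breakeven_old_call_cost(new_calls=2, old_calls=4,
                                    new_call_cost=new_call)

print(f"One GPT-5.5 call: ${new_call:.2f}")
print(f"Old chain breaks even at ${threshold:.2f} per call")
```

This assumes each collapsed call uses the same token counts as an old one, which is optimistic, since a call that does more work may carry more context. It also ignores retries and the glue code you get to delete, so treat the threshold as a starting point rather than a verdict.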

For RoboRhythms readers specifically, my take is this. If you are using ChatGPT daily for content, research, or coding, the 5.5 upgrade is already in your subscription. Use it.

If you are building agents, test the Pro tier on your hardest workflow before committing; the delta over Claude Opus 4.5 is real but not universal. If you are writing about AI tools, start covering the “does it really use computers” angle, because every major lab will be pitching that by summer.

What Comes Next After GPT-5.5

The next three months will test whether agentic positioning can stick or whether it resets to another capability race. Anthropic and Google both have responses queued, and I would not bet on OpenAI holding the agent narrative alone for long.

Anthropic’s likely counter is a faster Managed Agents rollout combined with Opus pricing adjustments. They already have the agent harness, and they already have the quality claim.

What they do not have is OpenAI’s distribution. Expect a Managed Agents general-availability push within six weeks if the pattern holds. As for where that leaves Cursor and the coding-agent race, the squeeze on standalone agent IDEs is going to intensify.

Google will do what Google does, which is release something impressive on the model side and undersell it. Gemini 3.2 or 3.5 is almost certainly in flight. The question is whether they can frame it as an agent story too, and their track record on product framing is not great.

The wildcard is pricing. If OpenAI undercuts on the standard tier over the next quarter, the API margin pressure on Anthropic gets real.

If they hold standard pricing and push Pro as the enterprise tier, they are betting their agent framing is strong enough to justify the premium. Either way, the race is happening now, not next year.

I will write the follow-up piece when we get the first real benchmark from someone other than OpenAI, which should be within a week. For anyone tracking how fast the frontier is moving on the agent side, this release is the one to date the shift against. The chat-era positioning is effectively over.