What Happened: North Korea’s Lazarus Group compromised the axios npm package on March 31, 2026, pushing malicious versions that deployed a remote access trojan. OpenAI’s macOS app-signing pipeline pulled the infected code, exposing code-signing certificates for ChatGPT Desktop and Codex. No user data was compromised, but all macOS OpenAI app users must update before May 8, 2026.

The way I read it, this is not a story about OpenAI’s security failing. The OpenAI axios supply chain attack is a story about the architecture of trust that every AI app on your computer rests on, and how a nation-state just proved it has a soft underbelly.

The attackers never broke into OpenAI’s systems directly. They targeted a single open-source package that OpenAI, like thousands of other companies, depends on, and let OpenAI’s own build pipeline pull it in. The damage was contained. The method was not.

One compromised developer account. One infected npm package. Potential access to the certificate that tells your Mac: this software is safe, and it comes from OpenAI. That’s the attack. And it will be used again.

What Actually Happened in the OpenAI Axios Attack?

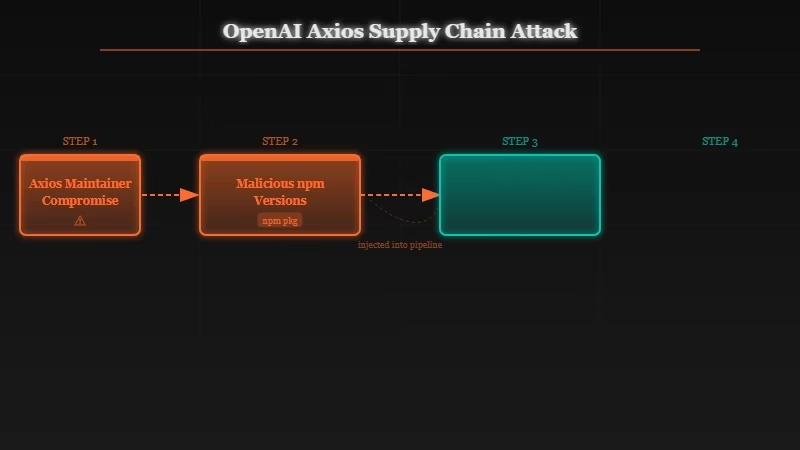

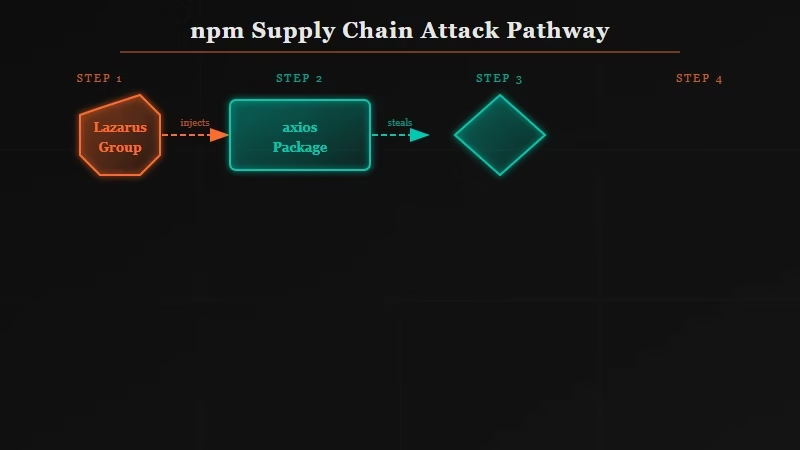

The OpenAI axios supply chain attack began on March 31, 2026, when North Korea’s Lazarus Group (BlueNoroff subgroup) socially engineered the lead axios npm maintainer, hijacking his npm and GitHub accounts to publish malicious package versions.

From what I’ve pieced together across the incident reports, the sequence was precise. Axios is not obscure. It handles HTTP requests in JavaScript and sees roughly 70 million weekly downloads.

The attackers published two compromised versions: v1.14.1 and v0.30.4. According to the Elastic Security Labs report, both versions injected a dependency called plain-crypto-js@4.2.1, which deployed a cross-platform remote access trojan dubbed the BlueNoroff RAT.
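
If you maintain a JavaScript project, those version numbers are something you can check for directly. Below is a minimal Python sketch, not an official detection tool. It assumes your project has a `package-lock.json` in npm's lockfileVersion 2 or 3 format, which lists every installed package under a top-level `packages` map. The flagged versions are the ones named above, so verify them against the official advisories before relying on them. The function names (`find_flagged`, `scan_lockfile`) are my own.

```python
import json
from pathlib import Path

# Versions named in this article. Treat them as an assumption and confirm
# against the official advisories before acting on a result.
FLAGGED = {
    "axios": {"1.14.1", "0.30.4"},
    "plain-crypto-js": {"4.2.1"},
}


def package_name(lock_key: str) -> str:
    """Return the package name from a lockfile key.

    Keys look like 'node_modules/axios' or, for nested installs,
    'node_modules/a/node_modules/@scope/b'. The name is whatever follows
    the last 'node_modules/' segment.
    """
    return lock_key.rpartition("node_modules/")[2]


def find_flagged(lock: dict) -> list[tuple[str, str, str]]:
    """Return (lockfile key, package name, version) for each flagged entry."""
    hits = []
    for key, meta in lock.get("packages", {}).items():
        if not key:  # the empty key is the root project itself
            continue
        name = package_name(key)
        version = meta.get("version", "")
        if version in FLAGGED.get(name, set()):
            hits.append((key, name, version))
    return sorted(hits)


def scan_lockfile(path: str = "package-lock.json") -> list[tuple[str, str, str]]:
    """Load a lockfile from disk and report flagged packages."""
    lock = json.loads(Path(path).read_text(encoding="utf-8"))
    return find_flagged(lock)
```

Calling `scan_lockfile()` from the project root returns an empty list when nothing matches. Older lockfileVersion 1 files use a nested `dependencies` structure instead and are not handled by this sketch. A clean result only tells you what the lockfile currently pins, not what a CI run may have installed in the past.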

OpenAI’s macOS app-signing GitHub Actions workflow pulled the infected version. That workflow had access to the code-signing certificate and notarization material for ChatGPT Desktop and Codex.

The certificate is what tells macOS this software is genuinely from OpenAI. If it had been fully exfiltrated, attackers could have signed malware your Mac would have trusted without question.

OpenAI’s incident report states there is no evidence the certificate was extracted or misused. Regardless, OpenAI rotated all macOS code-signing certificates and is requiring all Mac users to update before May 8, 2026, after which older versions stop working entirely.

Here’s the full picture of what was and wasn’t affected:

| Platform / Asset | Status | Action Required |
|---|---|---|
| ChatGPT Desktop (macOS) | Certificates rotated | Update before May 8, 2026 |
| Codex Desktop (macOS) | Certificates rotated | Update before May 8, 2026 |
| ChatGPT iOS / Android | Not affected | None |
| ChatGPT Web | Not affected | None |
| ChatGPT Windows | Not affected | None |
| User data (all platforms) | Not compromised | None |
| API keys and passwords | Not compromised | None |

Why Does an npm Supply Chain Attack Matter This Much?

An npm supply chain attack matters because it bypasses the target company’s security controls entirely, compromising the open-source foundation that their application is built on.

From what I’ve seen in the security community’s response to this incident, the precision of the targeting is what stands out. Lazarus didn’t spray malicious code into axios hoping for random collateral damage.

They identified that a specific high-value target (OpenAI) was running axios in a GitHub Actions pipeline that had access to code-signing keys.

Then they went after the maintainer. That is patient, deliberate, state-level tradecraft.

This is the same playbook the BlueNoroff subgroup has used for years against cryptocurrency exchanges. Find a developer with elevated access. Compromise them through social engineering. Walk in through their credentials.

The only thing that changed is the target shifted from wallet-draining to AI infrastructure.

The uncomfortable structural truth: OpenAI’s security team did nothing wrong. Their internal systems held. This attack succeeded purely because of how all modern software is built.

Every JavaScript application depends on a stack of npm packages, each maintained by developers who may or may not have multi-factor authentication configured. Axios happens to be one of the most-downloaded packages on the planet. That makes it a high-leverage target.

Microsoft published its own axios mitigation guidance within days of the incident surfacing, which strongly implies OpenAI wasn’t the only large company with this package running in a sensitive CI/CD pipeline. The blast radius is still being mapped.

What Does the Axios Hack Mean for AI App Users?

For macOS users, the required action is updating all OpenAI apps before May 8, 2026. The broader implication is that every AI desktop app carries inherited security risk from its dependency chain, independent of the building company’s own defenses.

If you have ChatGPT Desktop or Codex installed on a Mac, update now. OpenAI has issued new signed versions. You can update through the app itself or through the Mac App Store, depending on your installation source.

The harder question is what this means for the other AI tools on your Mac. The way I see it, Claude Desktop, Cursor, Windsurf, and similar applications all use npm packages in their build pipelines. Every one of them carries some version of this structural risk.

Not because those companies are negligent, but because they’re building on the same open-source substrate that every other software company uses.

Here’s what you can do right now:

- Update every AI desktop app you have installed. Certificate rotations only protect you if you’re running a current version.

- Stop dismissing update prompts. If an app has been asking for an update for weeks, you have spent those weeks on a version that may be missing security fixes.

- Follow security disclosure channels for the tools you depend on. OpenAI, Anthropic, and most frontier labs now publish public security advisories.

- If you work in a development team: audit which npm packages have access to code-signing keys in your CI/CD pipelines. This attack demonstrates exactly why that surface is worth mapping.
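
For that last item, here is one rough way a team might start the audit. This is a heuristic sketch in Python, not a complete scanner. It assumes your pipelines are GitHub Actions workflow files, that secrets are referenced with the usual `${{ secrets.NAME }}` syntax, and that dependencies are installed with `npm install` or `npm ci`. It flags any workflow file that both references secrets and installs npm packages without `--ignore-scripts`. The function name `audit_workflow` and the result keys are hypothetical.

```python
import re

# Matches GitHub Actions secret references such as ${{ secrets.MACOS_CERT }}.
SECRET_REF = re.compile(r"\$\{\{\s*secrets\.([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")

# Matches lines that run `npm install`, `npm i`, or `npm ci`, whether they
# appear as `- run: npm ci` or as a line inside a multi-line `run: |` block.
NPM_INSTALL = re.compile(
    r"^\s*(?:-\s*)?(?:run:\s*)?(npm\s+(?:install|ci|i)\b.*)$",
    re.MULTILINE,
)


def audit_workflow(text: str) -> dict:
    """Summarize secret references and npm installs in one workflow file."""
    secrets = sorted(set(SECRET_REF.findall(text)))
    installs = [m.group(1).strip() for m in NPM_INSTALL.finditer(text)]
    # Installs that allow package lifecycle scripts to run.
    unguarded = [cmd for cmd in installs if "--ignore-scripts" not in cmd]
    return {
        "secrets": secrets,
        "installs": installs,
        "needs_review": bool(secrets and unguarded),
    }
```

You would read each file under `.github/workflows/` and pass its text to `audit_workflow`. A `needs_review` result is a prompt to look closer, not proof of exposure. The sketch works per file rather than per job, so it will over-report when secrets and installs live in separate jobs. It will also under-report when a malicious package runs at import time rather than at install time, since `--ignore-scripts` does nothing about that. The safer design is usually to keep signing keys in a job that installs no third-party code at all.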

This incident fits a broader pattern. North Korean targeting of AI labs has been escalating, moving from model IP theft to infrastructure compromise. The supply chain approach is a natural step up: rather than fighting frontier AI companies head-on, go after the open-source layer underneath them.

Is the OpenAI Axios Attack a One-Off or a Preview?

It is a preview. State-sponsored supply chain attacks will increase as AI infrastructure becomes higher-value, and the npm ecosystem remains structurally exposed to this technique.

From my read of the security research, the Lazarus Group will run this playbook again. The axios attack confirmed that a sophisticated actor can identify high-value build pipelines, find the weakest link in the dependency chain, and compromise it without ever touching the primary target’s systems. That proof of concept now exists.

The industry response is moving in the right direction. Anthropic has been investing in proactive security research, including Claude surfacing open-source zero-days before attackers can exploit them.

npm is facing renewed pressure to mandate MFA for maintainers of high-download packages. Dependency integrity tools like Socket.dev and Snyk are gaining adoption in enterprise pipelines.

The fundamental architecture hasn’t changed. AI companies build on millions of lines of code maintained by individual developers, many of them volunteers. That will remain the attack surface that well-resourced actors probe.

Earlier this year, the Claude Code source exposure showed how even passive leaks become signals for adversarial reconnaissance. The axios attack shows what active exploitation of that reconnaissance looks like.

The question is no longer whether AI supply chain attacks will become a recurring threat category. It’s which package is next, and whether the industry notices fast enough to contain it.