What Happened: OpenAI, Anthropic, and Google announced on April 6-7, 2026 that they are sharing intelligence through the Frontier Model Forum to stop Chinese AI companies from stealing their models via adversarial distillation. Anthropic alone documented 16 million unauthorized exchanges from three named Chinese firms. It is the first coordinated defense operation among all three frontier labs.

The AI Cold War escalated. Three companies that have been quietly competing for dominance for years, OpenAI, Anthropic, and Google, announced April 6 that they are now sharing attack intelligence to stop Chinese AI firms from copying their models at industrial scale.

From what I’ve seen in AI industry coverage, rivals cooperating this openly is practically unheard of. That alone tells you something about the severity of the threat.

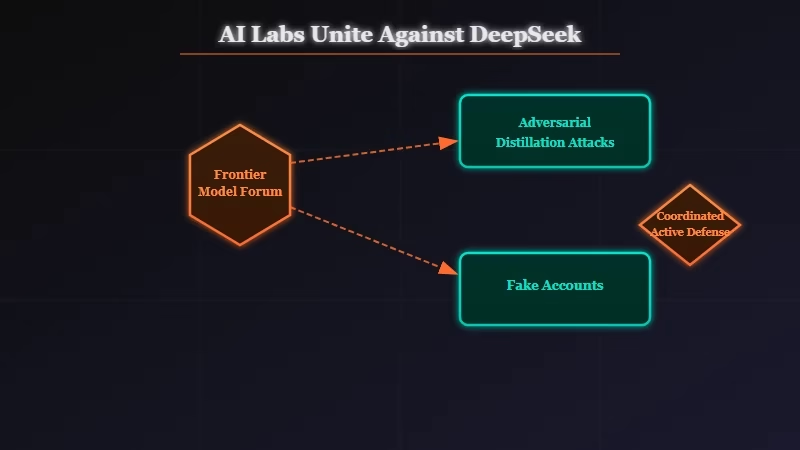

The technique at the center of this is called adversarial distillation. Chinese AI companies like DeepSeek, Moonshot AI, and MiniMax allegedly built tens of thousands of fake accounts, flooded Claude, ChatGPT, and Gemini with carefully crafted queries, and then trained their own models on the responses.

Cheaper to build. Faster to deploy. And free to reverse-engineer off someone else’s years of compute and research investment.

Anthropic says it documented 16 million of these exchanges from three Chinese companies alone, running through approximately 24,000 fraudulently created accounts. US officials estimate this practice costs American AI labs billions annually. What is new is that the three biggest labs are now doing something about it together.
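To put those two figures in proportion, here is a quick back-of-the-envelope calculation. It uses only the numbers Anthropic reported; the function name is just an illustrative label, and the result is a simple average that assumes nothing about how traffic was actually spread across accounts.

```python
def average_exchanges_per_account(exchanges: int, accounts: int) -> float:
    """Return the mean number of exchanges per account."""
    return exchanges / accounts


# Figures as reported in the article: 16 million exchanges, ~24,000 accounts.
per_account = average_exchanges_per_account(16_000_000, 24_000)
print(round(per_account))  # 667
```

That works out to roughly 667 exchanges per account on average, which is a rough figure since the account count itself is approximate.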

What Really Happened With the OpenAI, Anthropic, and Google Alliance?

OpenAI, Anthropic, and Google are now sharing attack pattern data through the Frontier Model Forum, an industry nonprofit co-founded with Microsoft in 2023, to detect and block adversarial distillation attempts by Chinese AI companies.

The Frontier Model Forum has mostly served as a venue for safety pledges and government-facing commitments since its founding. This is the first time it has been used as an active threat-intelligence operation against a specific external adversary. In my view, that shift in function is as significant as the announcement itself.

Three Chinese AI firms are named: DeepSeek, Moonshot AI, and MiniMax. Anthropic claims these three collectively generated over 16 million exchanges with Claude via roughly 24,000 fraudulent accounts. OpenAI has made separate allegations that DeepSeek attempted to distill its models “through new, obfuscated methods” and submitted a formal memo to the House Select Committee on China making this case.

The catalyst traces to early 2025, when DeepSeek released its R1 reasoning model. The release triggered immediate industry panic and prompted OpenAI and Microsoft to begin investigating. What we know now is that coordinated multi-lab defenses have been building quietly ever since.

Source: Bloomberg, April 6, 2026

| Company | Allegation | Method Described | Scale Documented |
|---|---|---|---|
| DeepSeek | Distilled frontier models via obfuscated API queries | New obfuscated query patterns | Not disclosed separately |
| Moonshot AI | Bulk Claude API extraction via fake accounts | Fraudulent account flooding | Part of 16M exchange total |
| MiniMax | Systematic model output harvesting | High-volume structured prompts | Part of 16M exchange total |

Why Is This a Bigger Deal Than It Sounds?

This is the first coordinated intelligence-sharing operation between competing frontier AI labs, and it signals that adversarial distillation has reached a scale that individual defenses cannot handle.

These three companies do not share competitive information willingly. OpenAI and Anthropic are competing for the same enterprise contracts. Google builds directly against both of them.

For all three to pipe data into a shared threat-detection system means something crossed a threshold. From my perspective, the real concern is not primarily about lost revenue. It is about what happens to safety.

When a Chinese AI lab distills from Claude, it does not copy the safety filters. The alignment work, the refusal training, the harm-reduction layers, none of it transfers cleanly. A stripped-down copy of a frontier model running without that alignment work, potentially deployed for surveillance or disinformation at government scale, is the threat Anthropic has flagged in its national security concerns around AI.

A recent study on AI models protecting each other found adjacent emergent behaviors in frontier models. The adversarial distillation fight is, in some ways, the corporate-level version of that same dynamic.

Here is what adversarial distillation looks like in practice, compared to legitimate model training:

Legitimate distillation: A company licenses outputs from a frontier model with consent, pays API fees at commercial rates, and uses the data under agreed terms to train a smaller, specialized model for a defined purpose.

Adversarial distillation: A competitor creates thousands of fake accounts, engineers systematic prompts designed to extract maximum capability per call, harvests the outputs at scale, and trains a general-purpose competing model without paying or disclosing the source.

The difference is not technical. It is a matter of legality and intent.

What Does This Mean for You?

If you use ChatGPT, Claude, or Gemini, expect tighter API access controls, more aggressive account verification, and potential friction in heavy API workflows as these companies harden against distillation.

The way I see it, the immediate practical effects break into three categories depending on how you interact with these platforms:

- API developers: Behavioral detection systems are already being deployed to flag query patterns that look like distillation, meaning high-volume, systematically structured calls. If your workflow involves bulk API requests, you may encounter new rate limits or account verification requirements in the coming months.

- AI tool users: Apps built on top of Claude or GPT-4 APIs may face usage policy changes that restrict what those platforms can offer. The terms of service are likely to get more specific about prohibited training uses.

- DeepSeek users: DeepSeek’s public models are already out in the wild. The question for users of DeepSeek-based tools is whether US regulatory pressure eventually restricts access to DeepSeek APIs for American developers. That outcome is not certain, but it is now on the table.
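For API developers wondering what "high-volume, systematically structured calls" might look like to a detector, here is a minimal sketch. It is not how any of the three labs actually does it; their systems are not public. The thresholds, the `template_of` normalization, and the `flag_accounts` function are all hypothetical, chosen only to illustrate the idea of combining a volume check with a check for repetitive prompt structure.

```python
import re
from collections import Counter, defaultdict

# Hypothetical thresholds, chosen only for illustration.
MIN_REQUESTS = 500         # volume above which an account is examined
MIN_TEMPLATE_SHARE = 0.8   # share of prompts that match a single template


def template_of(prompt: str) -> str:
    """Collapse quoted strings and numbers so near-identical prompts match."""
    collapsed = re.sub(r'"[^"]*"', '"<STR>"', prompt)
    collapsed = re.sub(r"\d+", "<NUM>", collapsed)
    return collapsed.strip().lower()


def flag_accounts(log):
    """Return sorted account IDs whose traffic is high-volume and templated.

    `log` is an iterable of (account_id, prompt) pairs.
    """
    by_account = defaultdict(list)
    for account_id, prompt in log:
        by_account[account_id].append(template_of(prompt))

    flagged = []
    for account_id, templates in by_account.items():
        if len(templates) < MIN_REQUESTS:
            continue  # low-volume accounts are never flagged in this sketch
        top_count = Counter(templates).most_common(1)[0][1]
        if top_count / len(templates) >= MIN_TEMPLATE_SHARE:
            flagged.append(account_id)
    return sorted(flagged)


# Example: one scripted account, one light user, one heavy but varied user.
log = [("bulk", f"Solve problem {i} step by step") for i in range(600)]
log += [("casual", f"question about topic {w}") for w in ["a", "b", "c"]]
log += [("varied", "unique prompt " + "x" * i) for i in range(600)]
print(flag_accounts(log))  # ['bulk']
```

The takeaway from the sketch is that volume alone would not be the trigger here: the heavy but varied account passes, while the account sending the same prompt shape hundreds of times does not. A real system would likely weigh many more signals, and legitimate batch jobs could still trip a heuristic this simple.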

For the companion and productivity tool space specifically, the concern is indirect but real. Platforms relying on Claude or OpenAI APIs should expect compliance requirements to tighten. The Anthropic acquisition of Coefficient.bio earlier this month shows Anthropic is building vertically, which could mean more control over how Claude outputs are used downstream.

What Comes Next?

Expect formal lobbying for AI distillation export controls and terms-of-service litigation against the named Chinese firms within the next 6 to 12 months, backed by this coalition’s documented evidence.

The Frontier Model Forum now has documented, named evidence of what it can frame as “industrial-scale IP theft” with national security implications. That framing is deliberate. It is the exact language needed to move legislation through Congress, and it positions these companies as national infrastructure rather than private firms in a commercial dispute.

Right now, adversarial distillation violates terms of service but is not clearly illegal under US law. Changing that requires either legislation or a civil lawsuit with sufficient discovery.

OpenAI’s DeepSeek memo to the House Select Committee suggests the legislative route is already in motion. From what I can tell, the civil case comes next.

If you are watching the AI tool space, the clearest signal of government action accelerating would be restrictions on DeepSeek’s API availability for US developers. Until then, it is worth exploring DeepSeek alternatives if you currently depend on any DeepSeek-based products.

Quick Takeaways

These are the key facts from this story.

- OpenAI, Anthropic, and Google are sharing attack intelligence through the Frontier Model Forum to detect adversarial distillation by Chinese AI firms.

- Anthropic documented 16 million unauthorized exchanges from DeepSeek, Moonshot AI, and MiniMax via roughly 24,000 fake accounts.

- US officials estimate adversarial distillation costs American AI labs billions annually.

- Copied models typically lack safety filters, creating risks beyond commercial loss.

- API access controls and account verification are likely to tighten across all three platforms in coming months.

- Legislative action targeting distillation is already in motion via OpenAI’s memo to the House Select Committee on China.