My Take: LangChain is no longer building a framework for developers. It is building a funnel toward LangSmith. The framework itself has become the free tier of a commercial product, and the community on r/LangChain is noticing. This is not a criticism of LangSmith; it is a warning about what LangChain is becoming.

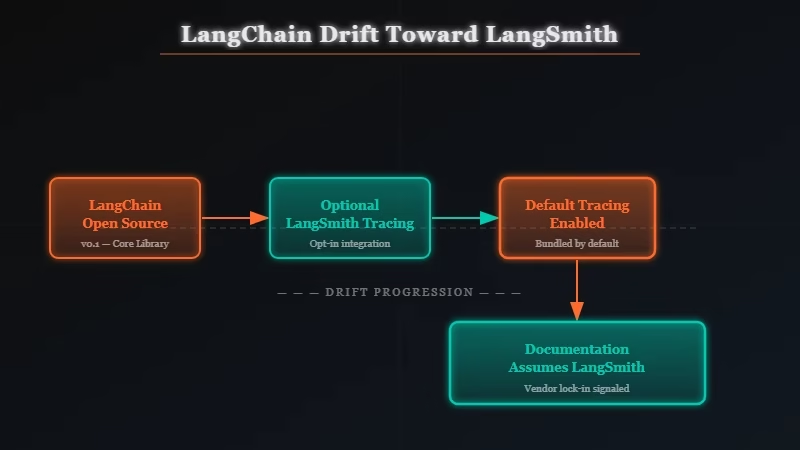

The tone of the r/LangChain community has changed over the last few months. A thread titled “LangChain feels like it’s drifting toward LangSmith” drew real engagement in March 2026: not just complaints, but a serious technical conversation about whether LangChain’s API design choices are still being made for independent developers or for LangSmith adoption.

That thread matters because the people in it are not beginners. They are experienced ML engineers who have built production systems on LangChain and are now reassessing.

The complaints are specific: changelog entries that seem to optimize for LangSmith tracing hooks, documentation that assumes LangSmith is in your stack, and core abstractions that have grown more complex over time without a proportional gain in usefulness.

I have been following LangChain since the 0.0.x days, when it was a genuinely useful glue layer for stitching together LLM calls, tools, and memory.

The framework I use today is not that framework. And the direction it is heading is not where I want to follow.

The Mainstream View and Why It Falls Short

The mainstream view, held by LangChain’s documentation and most enterprise AI consultants, is that LangChain remains the most mature, best-supported framework for building LLM applications, with LangSmith as an optional observability add-on.

This view is articulated most clearly by LangChain’s own blog and by enterprise AI consultants like McKinsey’s QuantumBlack, who have published guides recommending LangChain as the default choice for production LLM systems.

The argument goes: LangChain has the largest community, the most integrations (hundreds of tool connectors), and the deepest documentation. Switching costs are high; the ecosystem value is real.

That case is not wrong. LangChain does have the largest community and the most integrations. If you are onboarding a new hire and want them productive immediately, LangChain’s documentation advantage is genuine.

But the “optional add-on” framing for LangSmith is where the mainstream view breaks down. Nothing is truly optional when the platform’s documentation assumes it, the debugging experience is built around it, and the venture-backed company’s commercial future depends on it.

What’s Actually Happening

What is actually happening is that LangChain Inc. has made a rational business decision: the framework cannot be the commercial product, so the commercial product has to be LangSmith, and LangChain’s role is to generate demand for it.

This is not speculation. It is visible in the API design trajectory over the last 12 months. What I have watched happen:

LangChain’s RunTree and tracing APIs, which existed as lightweight debugging utilities, have been progressively integrated deeper into core chain execution. Running without LangSmith tracing now takes explicit configuration instead of being the default. The `LANGCHAIN_TRACING_V2` environment variable is set to “true” in the recommended quickstart guides.
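One way to make the tracing decision explicit, rather than inherited from a quickstart, is to set the variable at process startup before any chain is constructed. This is a minimal sketch, assuming the variable the quickstarts set is spelled `LANGCHAIN_TRACING_V2` in your installed version. The second name, `LANGSMITH_TRACING`, and the helper `set_tracing` are assumptions on my part, so check the docs for your version before relying on them.

```python
import logging
import os

logger = logging.getLogger("llm.config")

# LANGCHAIN_TRACING_V2 is the variable the quickstart guides set.
# LANGSMITH_TRACING is an assumption about newer releases; verify it for your version.
TRACING_VARS = ("LANGCHAIN_TRACING_V2", "LANGSMITH_TRACING")


def set_tracing(enabled: bool) -> dict:
    """Set every listed tracing variable explicitly and return the resulting values."""
    value = "true" if enabled else "false"
    for name in TRACING_VARS:
        os.environ[name] = value
    logger.info("LangSmith tracing explicitly set to %s", value)
    return {name: os.environ[name] for name in TRACING_VARS}


# Call this before importing or constructing any chains.
state = set_tracing(False)
```

The point is less the two lines of configuration than the log entry: the decision is now visible in your own startup output instead of living in a copied `.env` file.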

The documentation for complex chains now routinely uses LangSmith trace visualization as the primary debugging method rather than standard Python debugging or logging. For a developer who does not have LangSmith set up, the debugging experience for production issues has gotten worse over the last year, not better.

The r/LangChain thread I mentioned had a comment that landed well: “I don’t think they’re doing anything wrong. It just means LangChain isn’t the tool for me anymore.” That is the quiet churn in action. No drama, no public breakup, just developers leaving for LlamaIndex, DSPy, or raw SDK calls.

From what I have seen in the agent development space, the developers who left LangChain for raw Claude SDK calls are not coming back.

The abstraction layer that LangChain provides is useful for prototyping and genuinely helps with certain integration patterns. But for production systems, the framework’s overhead, both in complexity and in its implicit dependency on LangSmith, now outweighs its value for a meaningful subset of the developer base.

The Part Nobody Wants to Admit

The part nobody wants to admit is that the LangChain abstraction layer was always solving a temporary problem, and that problem is now mostly solved by the model providers themselves.

In 2022, you needed LangChain because building a chain of prompts, tool calls, and memory management was genuinely complex without abstractions. OpenAI had no function calling. Anthropic had no tool use. Memory was handled entirely by the developer. LangChain filled a real gap.

In 2026, that gap is substantially closed. Claude’s tool use API handles structured tool calling natively. OpenAI’s assistant threads manage conversation state. The model providers have built the primitives that LangChain was abstracting over. The abstraction no longer saves you the complexity it used to.
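To make that concrete: with native tool use, the glue a framework once supplied reduces to a schema and a dispatch function. The sketch below assumes the content-block shapes of Anthropic's Messages API (`tool_use` in, `tool_result` out) and uses a hypothetical `get_weather` tool. It runs on plain dictionaries, so no network call or SDK is needed.

```python
import json

# Tool schema in the shape accepted by the `tools` parameter of the Messages API.
# `get_weather` is a hypothetical tool used only for illustration.
TOOLS = [
    {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "input_schema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
]


def get_weather(city: str) -> dict:
    # Stand-in implementation; a real tool would call a weather service.
    return {"city": city, "forecast": "sunny"}


HANDLERS = {"get_weather": get_weather}


def run_tool(block: dict) -> dict:
    """Turn one `tool_use` content block into the matching `tool_result` block."""
    handler = HANDLERS.get(block["name"])
    if handler is None:
        return {
            "type": "tool_result",
            "tool_use_id": block["id"],
            "content": f"Unknown tool: {block['name']}",
            "is_error": True,
        }
    result = handler(**block["input"])
    return {
        "type": "tool_result",
        "tool_use_id": block["id"],
        "content": json.dumps(result),
    }


# Example block, shaped like what the model returns when it requests a tool.
example = {"type": "tool_use", "id": "toolu_01", "name": "get_weather", "input": {"city": "Oslo"}}
reply = run_tool(example)
```

The returned block goes back to the model inside the next user message. The loop around it is a few more lines, all of them yours to read and debug.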

What this means for LangChain’s value proposition: the framework is now most useful precisely where you want tight LangSmith integration, namely complex multi-agent systems where distributed tracing is genuinely valuable. In other words, LangChain has found its niche, but that niche is the enterprise LangSmith customer, not the independent developer building a side project or a small team shipping a product.

The way I see it, this is not LangChain failing. It is LangChain succeeding at the wrong thing for most of its users.

Hot Take

The actual successor to LangChain is not a framework at all. It is three things: the model provider’s native SDK, a simple prompt management file or module, and good old Python logging. Every abstraction layer between you and the model API is a liability that will confuse your next hire, generate upgrade friction every six months, and, if it is LangChain, subtly pressure you toward a paid observability product you may not need. Stop abstracting your way into framework debt. Write the API call directly.
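A minimal sketch of that three-part stack, under stated assumptions: `PROMPTS`, `render`, and `call_model` are hypothetical names, and `send` stands in for whatever single function wraps your provider's SDK call. Nothing here is tied to a particular vendor.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("llm")

# Prompt management as a plain module-level mapping (hypothetical templates).
PROMPTS = {
    "summarize": "Summarize the following text in one sentence:\n\n{text}",
}


def render(name: str, **variables: str) -> str:
    """Fill a named template; raises KeyError for an unknown name or missing variable."""
    return PROMPTS[name].format(**variables)


def call_model(send: Callable[[str], str], name: str, **variables: str) -> str:
    """Render a prompt, pass it to `send`, and log latency and sizes."""
    prompt = render(name, **variables)
    start = time.perf_counter()
    try:
        reply = send(prompt)
    except Exception:
        logger.exception("model call failed: prompt=%s", name)
        raise
    elapsed_ms = (time.perf_counter() - start) * 1000
    logger.info(
        "model call ok: prompt=%s chars_in=%d chars_out=%d ms=%.0f",
        name, len(prompt), len(reply), elapsed_ms,
    )
    return reply


# In production `send` would wrap the provider SDK; a fake keeps this sketch runnable.
def fake_send(prompt: str) -> str:
    return "A one-sentence summary."


result = call_model(fake_send, "summarize", text="Long text goes here.")
```

Passing `send` in as a parameter is a deliberate choice: swapping providers, or swapping in a fake for tests, changes one function instead of a dependency tree.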

Why This Matters for Your Stack Decisions

If you are making framework decisions for an LLM system in 2026, the choice between LangChain and a raw SDK is now also a choice between LangSmith and your own observability, and you should make that decision explicitly rather than letting it happen by default.

The developers most at risk from not noticing this shift are teams that adopted LangChain early, have it deeply embedded in their stack, and have not revisited the decision since 2023.

For those teams, the incremental drift toward LangSmith is invisible because each individual change to the framework seemed reasonable. The pattern only becomes visible when you look at the cumulative direction.

Three questions worth asking if you are currently using LangChain:

- If LangChain released a major version that required LangSmith for certain features, would your stack be able to avoid it without significant refactoring?

- Are your senior engineers using LangSmith’s trace visualization as their primary debugging method, or do they have independent observability in place?

- If LangChain the company shut down tomorrow, could your team maintain and evolve your LLM layer without it?

If the answers are uncomfortable, the migration to raw SDK calls or a simpler abstraction (DSPy, LlamaIndex’s core) is worth planning. It will not happen without effort, but the effort is finite. Framework lock-in that deepens over time is not.
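A rough way to put a number on how deeply the framework is embedded is to count which files import it at all. This is a sketch, assuming a Python codebase; `framework_imports` and `audit` are hypothetical helper names, and the prefix list is my assumption about which packages count.

```python
import ast
from collections import Counter
from pathlib import Path

# Top-level package prefixes to look for; adjust to match your dependencies.
PREFIXES = ("langchain", "langsmith")


def framework_imports(source: str) -> list[str]:
    """Return the imported module names in `source` whose top-level package matches PREFIXES."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        found.extend(n for n in names if n.split(".")[0].startswith(PREFIXES))
    return found


def audit(root: str) -> Counter:
    """Count matching imports per file under `root`, skipping files that fail to parse."""
    counts = Counter()
    for path in Path(root).rglob("*.py"):
        try:
            hits = framework_imports(path.read_text(encoding="utf-8"))
        except (SyntaxError, UnicodeDecodeError):
            continue
        if hits:
            counts[str(path)] = len(hits)
    return counts
```

Running `audit(".").most_common(20)` from the repository root lists the most entangled files first, which is a reasonable place to start scoping a migration.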