What’s Changed: JanitorAI switched JLLM to a new base architecture on April 20, 2026, and the upgrade broke grammar for a large number of users. Repetition loops, random capitalization, character drift, and the bot writing your actions are all tied to this model swap. Fixes exist, but they require prompt-level changes on your end.

JanitorAI JLLM grammar problems started spiking the week of April 20, 2026. The platform quietly swapped JLLM to a new base architecture tuned on Gemini and Opus data, and the side effects hit users immediately.

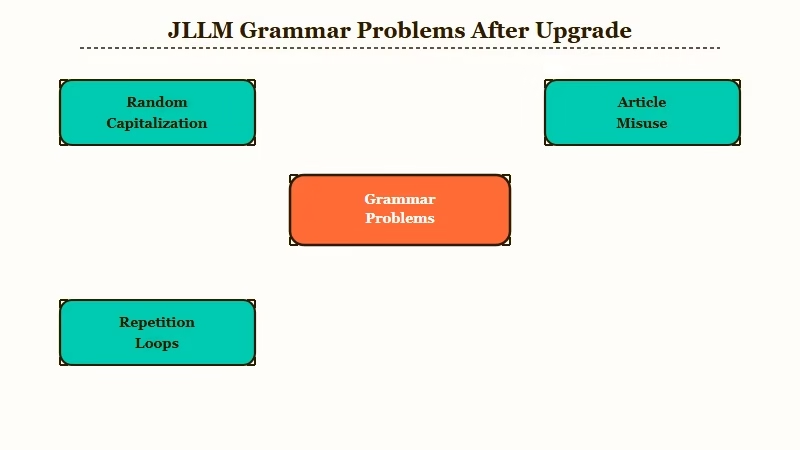

Random capitalization mid-sentence. The word “a” appearing before vowels instead of “an.” Adverbs stacked three deep in a single paragraph. Phrases that echo themselves almost word for word after 30 or 40 messages.

If your JLLM bot suddenly started producing output that reads like a rough draft with no editor, you are not imagining it. The model changed underneath you, and JanitorAI’s team has confirmed they are still patching the results. The way I see it, the architecture swap was necessary, but the rollout left users holding the bag on quality.

What Changed With JanitorAI JLLM in April 2026

JanitorAI replaced JLLM’s base architecture on April 20, 2026, retraining it on Gemini and Opus data to improve logic and coherence, but the upgrade introduced new grammar and repetition problems.

The writing style, pacing, and personality of JLLM bots shifted overnight.

What is JLLM: JanitorAI’s built-in language model that powers free chat without requiring an external API key. It runs on JanitorAI’s own GPU infrastructure and is the default model for most of the platform’s roughly 130 million monthly visits.

The JanitorAI changelog shows four model-level changes in the first four months of 2026. The January 26 update rolled out FP8 quantization for H20 GPUs and expanded context handling to 16,384 tokens. The February 25 update introduced a reasoning layer where roughly 1 in 5 requests route through a “think before responding” pipeline.

Then came April 20. The full base model swap landed with a single changelog note acknowledging that “writing style, pacing, and personality might feel a bit different.” The phrase “a bit different” undersells the reality by a wide margin.

The team confirmed they are working on three specific fixes: solving repetition loops, increasing response variety, and improving gender locking on characters. None of these are resolved as of early May 2026.

What Are the Specific JLLM Grammar Problems

JLLM grammar issues fall into four categories: random capitalization, article misuse, repetition loops, and character drift that worsens over long conversations.

Each has a different root cause and a different workaround.

Here is how each problem shows up in practice:

| Symptom | What it looks like | Likely cause | Fix |
|---|---|---|---|
| Random capitalization | Words capitalized mid-sentence for no reason | Tokenizer mismatch in new architecture | Regenerate the response, or add “never capitalize random words” to your system prompt |
| Article misuse | “A elephant” instead of “an elephant” | Grammar layer regression in base model | Add explicit grammar instruction to persona card |
| Repetition loops | Phrases doubling, tokens looping until cutoff | Known model-level limitation, confirmed by team | Set repetition penalty in generation settings to 1.05-1.10 |
| Character drift | Bot writes your actions, personality fades after 30+ messages | Context window overflow pushing persona data out | Restart the conversation or trim message history regularly |
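
The first two symptoms in the table can be spotted mechanically if you paste a response into a script. The sketch below is a rough heuristic of my own, not anything JanitorAI ships: `flag_grammar_symptoms` and both regexes are hypothetical names, and the checks are spelling-based, so they will misfire on words like "university" and on proper nouns.

```python
import re

# Heuristic: "a" followed by a word that starts with a vowel letter.
# Spelling-based, so "a university" is a false positive.
ARTICLE_RE = re.compile(r"\b[Aa]\s+([aeiouAEIOU]\w*)")

# Heuristic: a capitalized word right after a lowercase letter, comma, or
# semicolon plus a space, i.e. not at the start of a sentence.
# Proper nouns get flagged too.
MID_CAPS_RE = re.compile(r"(?<=[a-z,;] )([A-Z][a-z]+)\b")


def flag_grammar_symptoms(text: str) -> dict[str, list[str]]:
    """Return suspicious words for two of the symptoms in the table."""
    return {
        "article_misuse": ARTICLE_RE.findall(text),
        "mid_sentence_caps": MID_CAPS_RE.findall(text),
    }


if __name__ == "__main__":
    sample = "She saw a elephant near the Old barn. It was huge."
    print(flag_grammar_symptoms(sample))
```

A non-empty result is a cue to regenerate the response, not proof that the grammar is wrong.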

From my testing, the repetition loop is the most disruptive. JLLM gets stuck on a phrase and repeats it with minor variations until the response hits the token limit.

The platform now shows a “bot got stuck repeating itself” notice and cuts the response short instead of filling it with garbage, but that is a band-aid, not a fix.

Before: “She walked toward the door, her hand reaching for the handle as she walked toward the door, her hand reaching for the handle as she walked toward the door”

After (with repetition penalty at 1.08 and explicit anti-loop instruction): “She walked toward the door and paused, fingers hovering over the handle. Something in the hallway made her reconsider.”
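
To make the before and after concrete, here is a minimal sketch of how a loop like this could be detected by counting repeated word sequences. It is an illustration only: I do not know how JanitorAI's own "stuck repeating" notice works, and `find_repeated_phrases` and its default thresholds are assumptions.

```python
from collections import Counter


def find_repeated_phrases(text: str, n: int = 5, min_count: int = 2) -> list[str]:
    """Return word n-grams that occur at least `min_count` times in `text`."""
    words = text.lower().split()
    # Slide a window of n words across the text and count each window.
    ngrams = (" ".join(words[i:i + n]) for i in range(len(words) - n + 1))
    counts = Counter(ngrams)
    return [phrase for phrase, count in counts.items() if count >= min_count]


if __name__ == "__main__":
    before = (
        "She walked toward the door, her hand reaching for the handle as "
        "she walked toward the door, her hand reaching for the handle as "
        "she walked toward the door"
    )
    after = (
        "She walked toward the door and paused, fingers hovering over the "
        "handle. Something in the hallway made her reconsider."
    )
    print(len(find_repeated_phrases(before)), "repeated phrases before")
    print(len(find_repeated_phrases(after)), "repeated phrases after")
```

The looping text returns several repeated five-word phrases, while the fixed text returns none.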

The character drift problem is different. It happens because JLLM’s context window is currently around 8,000 to 9,000 tokens. Once a conversation exceeds that, older messages get pushed out, including the persona card data that defines how the character behaves.

The bot starts writing your character’s actions and loses the personality you set up.
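
The overflow described above can be modeled with a toy sliding window. This is a sketch under loud assumptions: one token per word is a crude stand-in for a real tokenizer, and `naive_window` and `pinned_window` are hypothetical helpers, not a description of how JanitorAI actually assembles context. It only shows why a persona that sits at the start of the history is the first thing to fall out, and why reserving room for it avoids that.

```python
def approx_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def naive_window(items: list[str], budget: int) -> list[str]:
    """Keep the most recent items that fit in `budget`, dropping the oldest."""
    kept: list[str] = []
    used = 0
    for item in reversed(items):
        cost = approx_tokens(item)
        if used + cost > budget:
            break
        kept.append(item)
        used += cost
    return list(reversed(kept))


def pinned_window(persona: str, messages: list[str], budget: int) -> list[str]:
    """Reserve room for the persona first, then fill with recent messages."""
    remaining = budget - approx_tokens(persona)
    return [persona] + naive_window(messages, remaining)


if __name__ == "__main__":
    persona = "persona: stoic knight"
    messages = [f"message {i} " + "word " * 8 for i in range(10)]
    print(persona in naive_window([persona] + messages, budget=50))  # persona lost
    print(pinned_window(persona, messages, budget=50)[0])            # persona kept
```

Restarting the chat or trimming history has the same effect as the pinned version: it keeps the persona inside the budget.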

How to Fix JLLM Grammar Issues Right Now

The most effective fix is a combination of generation settings adjustments and prompt-level instructions that compensate for the model’s current weaknesses.

The platform team is working on model-level patches, but you can improve output quality today.

From what I’ve seen, these steps in order give the best results:

- Open your bot’s generation settings and set the repetition penalty between 1.05 and 1.10. Higher values (1.15+) make the model avoid repeating even necessary words, which produces incoherent output.

- Add this line to your system prompt or persona card: “Write with correct English grammar. Never capitalize words randomly. Use ‘an’ before vowels. Do not write actions for {{user}}. Stay in character at all times.”

- Set temperature between 0.7 and 0.85. The new architecture produces more varied output at higher temperatures, but above 0.9 it starts hallucinating style choices.

- If you are in a conversation longer than 40 messages, start a new chat. The context window cannot hold that much history without losing persona data.

- Use the “regenerate” button aggressively. The reasoning layer (active on roughly 20% of requests) produces noticeably better grammar when it triggers, so each regeneration is another chance to land on it.
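
The regenerate advice comes down to simple arithmetic. The sketch below assumes each request has an independent 20% chance of hitting the reasoning layer. The changelog figure is "roughly 1 in 5", and the independence is my assumption, so treat the output as a ballpark. `chance_of_reasoning_pass` is a hypothetical helper.

```python
def chance_of_reasoning_pass(regenerations: int, rate: float = 0.2) -> float:
    """Probability that at least one of `regenerations` attempts is routed
    through the reasoning layer, assuming each attempt is independent."""
    if regenerations < 0:
        raise ValueError("regenerations must be non-negative")
    # Complement of "every attempt misses the reasoning layer".
    return 1.0 - (1.0 - rate) ** regenerations


if __name__ == "__main__":
    for attempts in (1, 3, 5):
        print(attempts, f"{chance_of_reasoning_pass(attempts):.0%}")
```

Under those assumptions, three attempts give you about a 49% chance of at least one reasoning-routed response, and five attempts about 67%.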

I’d recommend testing these settings on a simple conversation first before applying them to a bot you care about. The interaction between repetition penalty and temperature is different on the new architecture than it was on the old one.
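
If you keep settings for several bots, it can help to write the recommended ranges down once and check against them. The following is a sketch for your own notes, not a JanitorAI API: `GenerationPreset` and its field names are hypothetical, and the defaults are just midpoints I picked inside the ranges listed above.

```python
from dataclasses import dataclass

# The prompt line from the steps above, with straight quotes for code.
GRAMMAR_INSTRUCTION = (
    "Write with correct English grammar. Never capitalize words randomly. "
    "Use 'an' before vowels. Do not write actions for {{user}}. "
    "Stay in character at all times."
)


@dataclass(frozen=True)
class GenerationPreset:
    repetition_penalty: float = 1.08
    temperature: float = 0.8
    system_prompt: str = GRAMMAR_INSTRUCTION

    def warnings(self) -> list[str]:
        """Return a note for each value outside the ranges suggested above."""
        notes = []
        if not 1.05 <= self.repetition_penalty <= 1.10:
            notes.append("repetition_penalty outside 1.05-1.10")
        if not 0.7 <= self.temperature <= 0.85:
            notes.append("temperature outside 0.7-0.85")
        return notes


if __name__ == "__main__":
    print(GenerationPreset().warnings())
    print(GenerationPreset(repetition_penalty=1.2, temperature=0.95).warnings())
```

Changing one value at a time and rechecking makes it easier to see which setting caused a change in output.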

For anyone who has been troubleshooting JanitorAI roleplay problems, the grammar fixes overlap significantly with the prompt-level solutions that work for roleplay quality issues.

When Will JanitorAI Fix JLLM Permanently

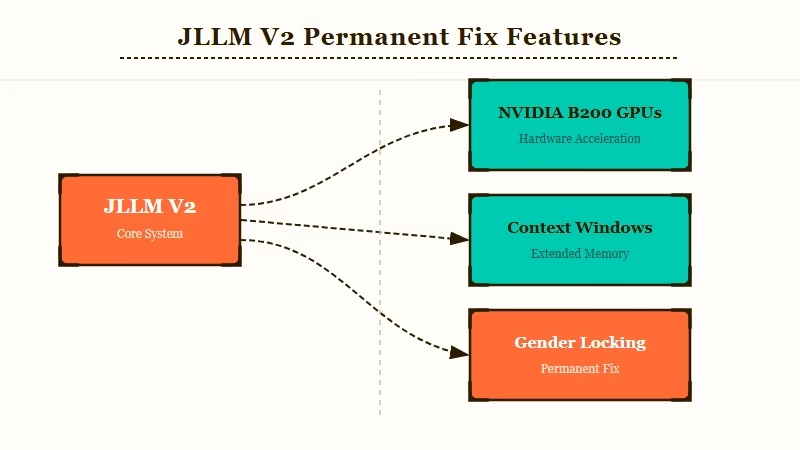

JanitorAI has announced JLLM V2 as the permanent fix, powered by a new NVIDIA B200 GPU cluster that will bring larger context windows, creator-chosen formatting, and more granular personality controls.

No release date has been confirmed.

The way I see it, the timeline is the second half of 2026 at the earliest. The B200 cluster is not operational yet, and the team has tied JLLM V2’s launch to that infrastructure being online.

What V2 promises to fix:

- Expanded context window (the current 8K-9K token limit is the root cause of character drift)

- Model optionality so users can pick which version of JLLM to use mid-conversation

- Creator-chosen formatting controls so bot makers can set how the model structures its responses

- Better gender locking (the current model frequently misgenders characters)

Until V2 ships, the prompt-level workarounds from the section above are the only reliable path. The JanitorAI team has been responsive about acknowledging the problems in their changelog, which is more transparency than most platforms offer.

If the grammar issues are a dealbreaker and you need a working bot today, the two realistic options are switching to an external API (GPT-5 or Claude via proxy, which costs money) or trying a different platform entirely.

The broader JanitorAI issues breakdown covers whether the platform is worth staying on given the current state.

What Alternatives Work If JLLM Is Not Good Enough

If JLLM’s grammar and repetition problems are breaking your experience, switching to an API-backed model on JanitorAI or moving to a platform with stronger built-in models are both viable paths.

The right choice depends on whether you want to stay on JanitorAI or leave entirely.

Staying on JanitorAI with an external API key (OpenAI or Claude) bypasses JLLM entirely, so the JLLM-specific problems go away. The grammar is clean, the repetition loops stop, and the context window is dramatically larger.

The cost is roughly $5 to $20 per month depending on usage, and you need to handle API key setup yourself.

If you want to leave JanitorAI entirely, the platforms with the strongest built-in models in 2026 are Candy AI for memory quality and image generation, and Nectar AI for creative roleplay without content restrictions. Neither requires an API key.

From my experience, Candy AI is the better fit for users who care about conversation quality and long-term memory. The model remembers context across sessions, which is the single biggest thing JLLM cannot do.

Nectar AI is worth considering if your priority is creative freedom and you want a platform that does not filter your conversations.

For a full comparison of what is available outside JanitorAI, the JanitorAI alternatives guide covers every major platform with pricing and feature breakdowns.

Frequently Asked Questions

Why is my JanitorAI bot suddenly writing badly?

JanitorAI swapped JLLM to a new base architecture on April 20, 2026. The model was retrained on Gemini and Opus data, which improved logic but introduced grammar regressions including random capitalization, article misuse, and repetition loops. The team is actively patching these issues.

How do I stop JLLM from repeating itself?

Set the repetition penalty in your bot’s generation settings to a value between 1.05 and 1.10. Add an explicit anti-repetition instruction to your system prompt. Values above 1.15 cause the model to avoid even necessary word reuse and make output worse.

Does switching to an API fix the grammar problems?

Yes. Using an external API key (GPT-5 or Claude) on JanitorAI bypasses JLLM entirely. Grammar, repetition, and character drift issues disappear because you are using a different model. The trade-off is cost, typically $5 to $20 per month depending on usage.

When is JLLM V2 coming out?

JanitorAI has announced JLLM V2 will launch when their NVIDIA B200 GPU cluster is operational. No official date has been set. Community estimates point to the second half of 2026, but this is speculation based on infrastructure timelines.

Is JanitorAI still worth using with these problems?

For free users who adjust their generation settings and prompts, JanitorAI remains usable. The platform draws roughly 130 million monthly visits, and the team is transparent about fixes in their changelog. If grammar quality is critical to your experience and you do not want to pay for an API, consider Candy AI or Nectar AI as alternatives with stronger built-in models.