What’s Changed: JanitorAI’s JLLM quality has fallen sharply across May 8 to 9, 2026, with users reporting repetition loops, homogenized character voice, and content refusals. Root cause is the April 20 architecture swap plus a dead GPU endpoint that was removed on May 8. The “proper” fix is JLLM V2 in training.

If your JanitorAI bots have been writing like they’re suddenly bored, refusing turns they wouldn’t have refused last week, or looping the same sentence three times before the response cuts off, you’re not imagining it.

The May 8 to 9 quality drop is real, the changelog confirms the architecture-level cause, and the immediate fix is mostly workarounds while the team trains JLLM V2.

I’d been watching this slow-motion regression since April when the base model swapped, and the May 8 spike on r/JanitorAI_Official (six different complaint posts in 24 hours, dozens of comments per thread) is the point where it became impossible to chalk up to individual bot quality. The pattern is a model-level issue, not a per-character problem.

This piece covers what changed, why your specific symptoms map to which root cause, and what to do today while the new model is in training.

What’s Changed Across May 8 and 9

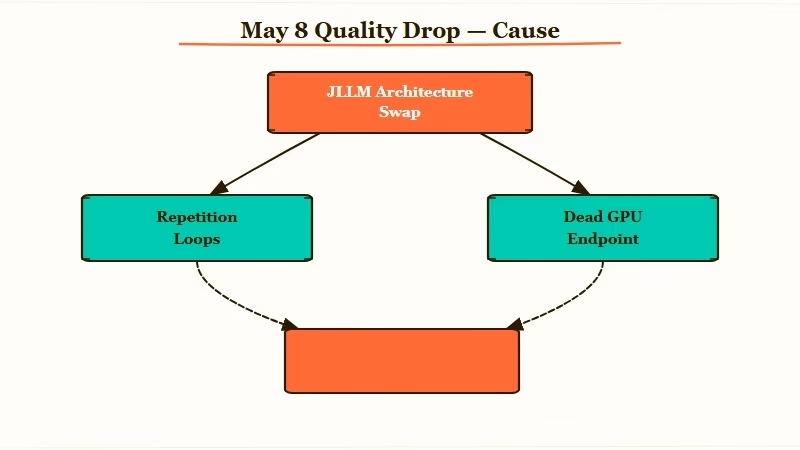

JLLM transitioned to a new base architecture on April 20, 2026, tuned on Gemini and Opus data, and a dead GPU endpoint was finally removed on May 8.

The combination is what’s producing the visible quality drop today.

Three things happened in sequence:

- April 20 architecture swap. JLLM moved to a new base model architecture, retrained with Gemini and Opus output as the tuning data. Writing style, pacing, and character personality all shifted. The team’s own changelog acknowledges users may find the new model “different” from the prior version.

- Repetition loops as architectural side effect. The new architecture has a known tendency to loop. Phrases double, single tokens repeat until the response cuts off. The team’s framing: this is “baked into the architecture” and not solvable through parameter tuning alone.

- May 8 dead-GPU removal. A GPU endpoint that had been failing silently for weeks was incorrectly still receiving traffic. The team removed it on May 8 to restore healthy traffic flow. This is what made the symptoms suddenly visible to users who’d been on the slow-degradation curve.

The on-the-ground experience: bots that worked fine on May 5 started producing flat, repetitive, occasionally refusing replies on May 8.

The architecture issue had been there since April 20, but the dead-GPU traffic was masking the worst cases by serving them on healthy hardware. Once that was removed, traffic redistributed and the architectural limitations became unavoidable.

Why Your Specific Symptoms Map to Which Cause

Each symptom maps to a specific layer of the regression. Knowing which is which tells you whether the workaround helps or whether you’re stuck waiting for V2.

| Symptom | Likely cause | Workaround that helps |

|---|---|---|

| Bot loops the same phrase or doubles tokens | New architecture limitation | Reroll the response as soon as you see the “bot got stuck” notice |

| All bots suddenly sound the same | Homogenized output from the new tuning data | Bump temperature to 0.85-0.95 for variety; lower repetition penalty |

| Bot refuses turns it accepted last week | Tuning shift toward Gemini/Opus safety patterns | Rephrase the prompt; less direct framing helps |

| Long arcs forget character details | “Memory Budget” exhaustion from bloated permanent tokens | Trim character permanent tokens; prefer simpler bots |

| Slow generations or failed calls | Pre-May 8 dead GPU endpoint | Should be resolved as of May 8; if persists, check status page |

The repetition loops are the most universal symptom and the hardest to fix client-side. The team’s interim mitigation is detecting the loop and stopping early instead of filling the response with garbage. That keeps the chat readable but doesn’t generate a usable turn. You still need to reroll.

The homogenization is the second-most-cited complaint on the May 8-9 threads. Rerolls don’t help much because the issue is in the tuning data, not the random seed. The architectural variety that makes characters feel distinct will not return until V2 ships.

The refusals are the most fixable today. The new tuning data leans toward more conservative content patterns.

Rephrasing the prompt to be less direct, or adjusting the bot’s character card to lean more explicit in its system prompt, gets past most of the new refusal patterns. The earlier JLLM grammar fix piece covers the related grammar regression and the parameter tuning that helps.

What to Do Today While V2 Is in Training

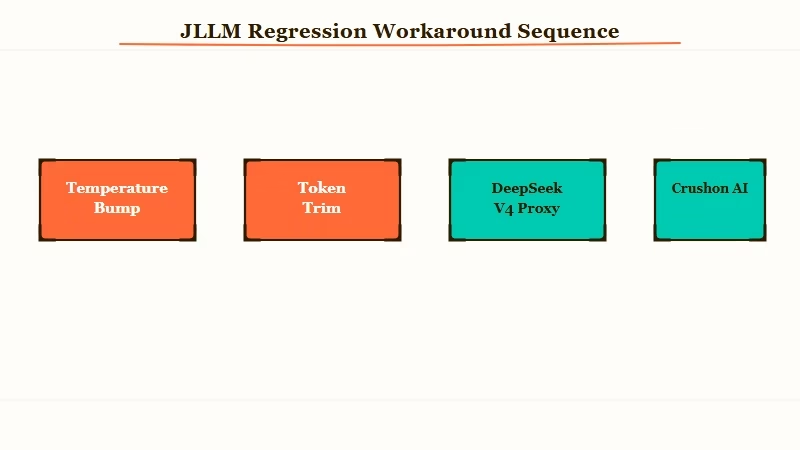

The realistic move is a combination of parameter tuning, lighter character cards, and selective use of the proxy. None of these are full fixes; they raise the floor while the model team works on V2.

Here’s the sequence I’d run today:

- Update your generation settings. Bump temperature to 0.85 for variety. Drop repetition penalty to 1.05 (the new architecture is more sensitive to penalty values). If the bot starts looping anyway, reroll, don’t try to push through.

- Trim character permanent tokens. The “Memory Budget” issue compounds with the architectural regression. A character card with 4000+ tokens of permanent context is more likely to hit memory issues on the new model. Aim for 1500-2500 token cards for the next two to four weeks.

- Test the proxy if the JLLM-only experience is unusable. DeepSeek V4 Pro through OpenRouter is the most popular workaround for users who can’t wait for V2. The DeepSeek V4 Pro evaluation covers the breakeven math at $0.60/$2.50 per million tokens, and the DeepSeek V4 Flash piece covers the cheaper alternative if Pro is overkill.

- Consider an alternative platform if your daily usage is heavy. Crushon AI’s 16K context window handles long anime arcs and OCs without the memory budget issues. Nectar AI is the secondary recommendation if you specifically want long-term character memory persistence.

- Wait for V2 if you’re a casual user. The team has confirmed JLLM V2 is in training. No public release date yet, but the changelog suggests “weeks not months.” For users with low daily volume, riding out the regression is probably the right call.

The one thing I’d avoid is fighting the loop manually. Sending the same prompt three times to “force” past the repetition is a known anti-pattern on the new architecture. The model’s tendency to loop is per-prompt, not per-session, so re-rolling fresh is more effective than retrying.

When JLLM V2 Ships and What to Expect

JLLM V2 is being trained on a new NVIDIA B200 GPU cluster with a larger context window and creator-chosen formatting. No release date confirmed, but the cluster is operational and the team has signaled it’s the structural fix.

The known V2 features per the changelog:

- Larger context window. The current 8K to 9K JLLM ceiling is tight for long roleplay. V2 is targeting 16K minimum and 32K aspirationally.

- Model optionality. V2 will let users choose between model versions in chat settings rather than forcing the latest. This addresses the “I want the old JLLM back” complaint that’s dominated April and May threads.

- Creator-chosen formatting. Per-character formatting controls so a creator can lock dialogue patterns the model otherwise homogenises.

- Better gender locking. The new tuning data caused regressions in character/user gender consistency. V2 is attempting an architectural fix.

- Reasoning layer toggle. The new “Show Thinking” feature lets users see or hide the model’s reasoning process. Useful for debugging bot behavior, breaks immersion if left visible during roleplay.

The reasoning layer is genuinely new and worth knowing about. JLLM V2 will run a “thinking” pass before producing the final response, similar to o1-style architectures. The Show Thinking toggle exposes that reasoning. For roleplay, hide it. For figuring out why a bot is refusing, show it briefly to see what the model thinks the prompt is asking for.

Frequently Asked Questions

Why did all my JanitorAI bots suddenly start sounding the same?

The April 20 architecture swap retrained JLLM on Gemini and Opus tuning data. That data is more uniform than the prior tuning corpus, so distinct character voices collapse into a shared “natural-sounding” baseline. The fix is JLLM V2, in training. Temperature 0.85 plus simpler character cards helps marginally in the meantime.

Is the JLLM repetition loop a bug I can fix in my settings?

No. The loop is architectural, not a tuning issue. The team explicitly said parameter changes won’t fully solve it. The interim mitigation is the system stopping early when it detects a loop, plus rerolling fresh whenever you see the “bot got stuck” notice. JLLM V2 is the proper fix.

What’s the May 8 dead GPU thing the changelog mentioned?

A GPU endpoint that had been failing silently for weeks was still receiving traffic. The team removed it on May 8, which redistributed load to healthy hardware. This is why the quality drop felt sudden on May 8 to 9 even though the architecture swap was April 20.

Should I switch to DeepSeek V4 Pro through the proxy?

Maybe. DeepSeek V4 Pro at $0.60/$2.50 per million tokens through OpenRouter is the most-tested workaround. The breakeven on Pro vs. JLLM-free is roughly 60 turns per session, so heavy users come out ahead, casual users don’t. Setup friction is meaningful.

When will JLLM V2 ship?

The team has confirmed training on the new B200 GPU cluster but not committed to a public date. Based on JanitorAI’s prior model upgrade cadence, “weeks” rather than “months” is the framing in the changelog. Expect the larger context window and the model-version selector to ship first.

Is JLLM still safe to use for sensitive roleplay scenarios?

The new tuning data leans more conservative on safety patterns, so refusals are up. JLLM is still functional for unfiltered roleplay, but you’ll hit more turn-level refusals than in March. Rephrasing prompts less directly works around most of the new refusal patterns.