My Take: Human coding is not over. Solo coding as the default way software gets built is. That distinction matters enormously, and most of the people saying “it’s over” are conflating two very different things.

The r/singularity post titled “The era of human coding is over” picked up nearly 3,000 upvotes this week. I read through all 716 comments.

The thread is a useful artifact of where developer opinion sits right now: equal parts genuine anxiety, cope, and people who have not opened a code editor since GPT-4 launched and are pronouncing the profession dead from the sidelines.

Here is the case for and against, and my honest read on which side is right.

What the “Coding Is Over” Crowd Is Actually Seeing

The evidence that something significant is ending is real. AI tools are now completing tasks that took junior engineers weeks. The question is whether that means coding is over or that a specific mode of coding has been replaced.

The SF Standard ran a piece in February 2026 documenting software engineers at major companies describing weeks-long projects being completed in hours. That is not hype. That is a real shift in how production software gets written. From what I’ve seen using Claude Code and similar tools daily, the acceleration is genuine.

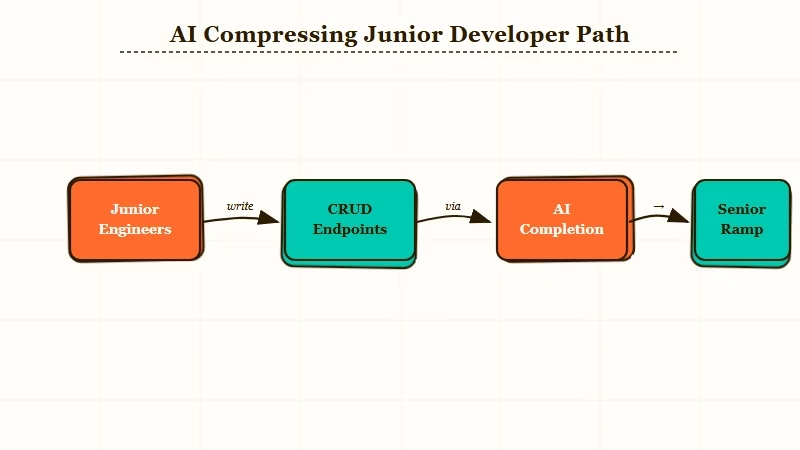

The posts that resonated most in that Reddit thread were from junior engineers who have spent the past year watching AI handle the work they used to get paid to do. That is a real economic signal. Entry-level implementation work (the CRUD endpoints, the config plumbing, the boilerplate scaffolding) is now a prompt, not a career path.

Stack Overflow’s 2025 research on AI and junior developer careers documented this clearly: AI has compressed the entry ramp. Work that used to take a new graduate six months to learn now gets delegated to a model on day one.

What the “Coding Is Not Over” Crowd Is Actually Seeing

The counterargument is also real: AI does not consistently produce production-ready software from a single prompt, and the demand for engineers who can direct AI effectively has not dropped.

The pattern I keep seeing is that AI is excellent at the parts of programming that look like pattern matching: standard endpoints, familiar architectures, documented libraries. It is significantly less reliable at the parts that require judgment: novel constraints, performance under edge conditions, security implications of design decisions.

What changes is not the volume of coding work, but the nature of the work that has value. The engineer who can write a working REST endpoint from scratch is competing with a free model.

The engineer who can specify a system architecture, catch the security hole in the AI’s output, and decide when the model’s confident wrong answer will cause a production incident: that person is not replaceable by current tooling.
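
To make “catch the security hole” concrete, here is a minimal sketch of the kind of mistake I mean. It is an illustration, not code from any real project: the `users` table, both function names, and the payload string are hypothetical, and it uses only Python's built-in `sqlite3` module. The first function is the sort of thing that looks fine in a quick review; the second is what a reviewer with judgment would insist on.

```python
import sqlite3


def find_user_unsafe(conn, name):
    # Plausible-looking code: builds the SQL by string interpolation,
    # so whatever is in `name` becomes part of the query itself.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the driver passes `name` as data, not as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


def demo():
    # Hypothetical in-memory table, for illustration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)]
    )
    payload = "nobody' OR '1'='1"  # classic injection string
    return find_user_unsafe(conn, payload), find_user_safe(conn, payload)


if __name__ == "__main__":
    unsafe_rows, safe_rows = demo()
    print("unsafe:", unsafe_rows)  # every row in the table comes back
    print("safe:", safe_rows)      # no user has that literal name
```

Both versions pass a happy-path test with a normal username. Only someone who knows what correct looks like notices that the first one hands the whole table to anyone who types a quote character.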

Anthropic, Google DeepMind, and OpenAI are all hiring senior engineers aggressively. The companies saying AI will replace coders are also paying senior engineers more than ever. That contradiction is informative.

What Is Actually Ending and What Survives

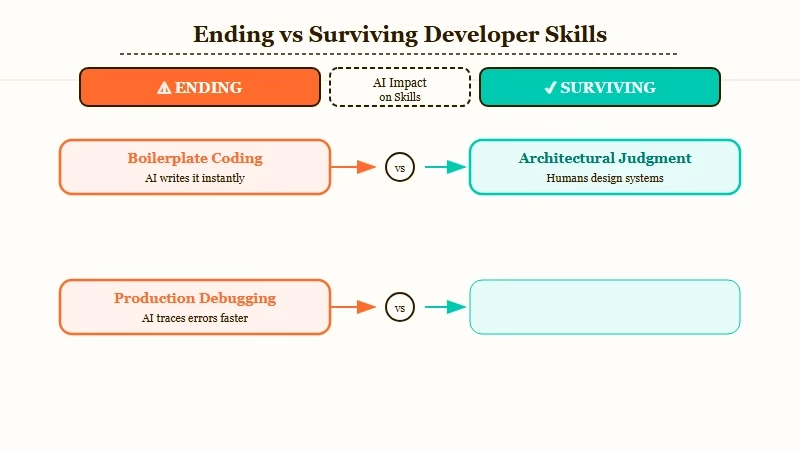

What is ending: coding as a solo craft, one person alone with a text editor. What survives: engineering judgment, system thinking, and the ability to direct and verify AI output at scale.

Here is the clearest way I can frame what has changed versus what has not:

| Coding work | Status in 2026 | Reason |
|---|---|---|
| Boilerplate implementation | Ending | AI handles this at near-zero marginal cost |
| Junior implementation ramp | Compressed | AI completes tasks in hours that took weeks |
| System architecture decisions | Survives | Requires domain judgment AI cannot fake reliably |
| Production debugging | Survives | Context-specific, needs knowledge of the actual system |
| Security review of AI output | Growing | AI makes confident mistakes; humans catch them |
| Novel constraint engineering | Survives | Not in training data; model has no reference pattern |

- Ending: Writing boilerplate alone. The junior role where you spend 40 hours implementing a feature a senior described in 5 minutes is functionally gone.

- Ending: Learning by doing trivial implementation. The traditional path of “write lots of code to build intuition” is compressed. You get that feedback from prompting and reviewing AI output now.

- Surviving: Knowing what the right architecture is. AI can implement whatever you specify. Knowing what to specify requires domain knowledge that cannot be prompted.

- Surviving: Production judgment. The models make confident mistakes. Catching them before they hit prod requires understanding what correct looks like.

- Surviving: Context humans have that models do not. The business constraint that was decided in a meeting three months ago and never written down anywhere. The regulation that changed last quarter. The legacy code that has surprising behavior at scale.

The tools that accelerate this shift are already in daily use. Take building an AI orchestration layer without LangChain, or automating a 24/7 Claude-powered station using CLI tools. Three years ago, projects like these required a team. Today they require one person who knows how to direct AI effectively. That person is not doing less work. They are doing different work at higher leverage.

The People Who Are Actually Losing in This Shift

The developers most at risk are not senior engineers. They are mid-career engineers who specialized narrowly in implementation and never developed systems thinking, and junior engineers who expected a traditional learning ramp.

The narrow specialist who spent 10 years becoming the person who writes the most efficient SQL queries is in a worse position than they were two years ago. Not because SQL is irrelevant, but because that skill no longer commands the premium it once did now that AI can write competent SQL from a description.

The junior who expected to follow the traditional path of “write lots of code, get promoted, eventually get architectural authority” is also in a harder position. That path still exists but it is narrower and faster. You either develop judgment quickly or you get outcompeted by AI-augmented engineers who have it.

What I find more troubling than the job market question is the learning pipeline. If juniors never spend years doing the implementation work that historically built the intuition for what goes wrong at scale, where does the next generation of people with genuine systems judgment come from? That is an unsolved problem, and even the best articles on Claude Code skills do not answer it.

My Actual Take on Where This Goes

Human coding is not over. The craft of software is being restructured around human judgment as the scarce input and AI capability as the abundant one. That is a significant restructuring, and some careers will not survive it. But the profession does.

The “human coding is over” framing is emotionally satisfying because it is binary. It is also wrong in the way most binary framings of complex transitions are wrong.

What is happening is more like what happened to manufacturing when power tools arrived. The individual craftsman who built furniture by hand with chisels and saws did not disappear overnight. The market for handmade furniture that only a master craftsman could produce did narrow dramatically. A different population of people with different skills built the furniture industry that followed.

AI tools are the power tools. The engineers who thrive are the ones who know what they are building, understand the material they are working with well enough to catch the tool’s mistakes, and can specify outcomes precisely enough to get useful output. That is not nothing. That is not “over.” It is a different craft than it was.

The people posting “it is over” from outside the industry are mourning a version of programming they romanticized. The engineers inside the industry who are adapting are, in my experience, mostly doing more interesting work than they were two years ago.