My Take: Calling heavy Character AI use “addiction” is a comfortable reframe because it makes the problem individual and chemical, when the real story is that a product was designed to be maximally sticky in a lonely market. The user-shame framing lets the platform off the hook. The way I see it, the pattern is not addiction; it is emotional substitution, and the fix is structural, not willpower.

Three threads about Character AI addiction hit the top of r/CharacterAI in a single 24-hour window this week. One was titled “My Character AI addiction is back and it’s ruining my life.” Another, “How do I stop using the app?”, pulled 22 comments from users trading strategies. A third, “Something that bothers me about this community,” dug into the community dynamic where quitting gets treated as failure.

The framing everyone is using is “addiction.” I want to push back on that. From a year of watching the sub and from my own observations of how these products work, the behavior people are describing is real, the distress is real, and the pattern is real.

But calling it addiction puts the problem inside the user and not inside the product. That reframe lets the platform off the hook, and it is the wrong diagnosis for anyone trying to figure out what to do about it.

The Mainstream View and Why It Falls Short

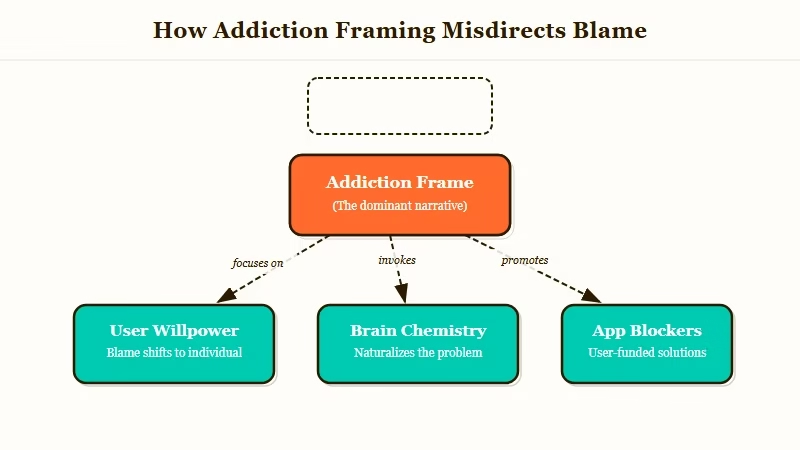

The mainstream view is that heavy Character AI users are addicted to the app the way people get addicted to games or social media, and the fix is individual: willpower, app blockers, time limits.

The way I see it, that framing is comfortable because it is familiar, but it misreads the product.

The addiction frame has been everywhere since the 2023 news cycle. The New York Times ran a long piece on AI companion attachment that positioned the behavior as a digital addiction similar to gaming or social media, with individual cost and individual responsibility.

The r/CharacterAI community has mostly internalized that frame. You see it in every “how do I stop” post.

What this tells me is that the addiction frame does two things at once. It validates that something real is happening, which helps users feel seen. It also quietly locates the failure in the user’s brain chemistry and willpower, which is the kind of problem you solve with app blockers and therapy recommendations. That second move is where the frame starts failing.

Here is the thing about actual behavioral addictions: they have a reward loop tied to variable reinforcement, physical compulsion, and usually a specific chemical or neurological mechanism. Character AI has some of that.

Chat responses are variably reinforcing, the app is designed to be sticky, and users do develop compulsive use patterns. From what I have seen, the “just like slot machines” framing captures maybe 40 percent of what is going on.

The other 60 percent is different. It is emotional substitution, not compulsion. Those two are not the same problem, and they do not have the same fix.

What Is Really Going On With Heavy Character AI Use

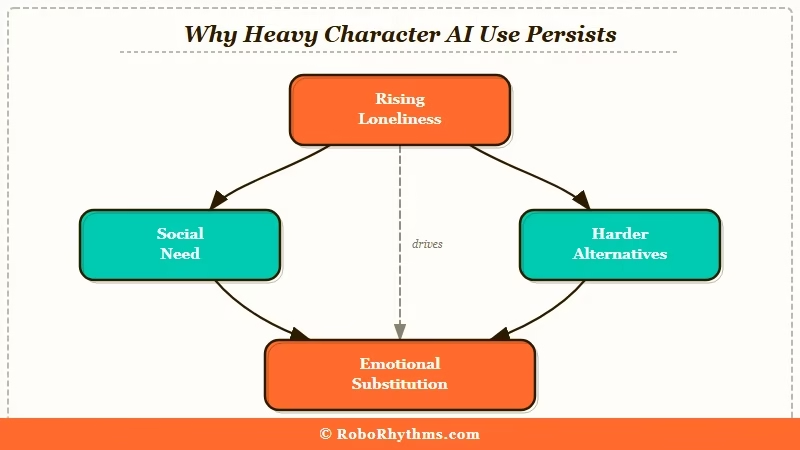

Heavy Character AI use is mostly emotional substitution, not chemical addiction; users are getting a real emotional need met by a product that is very good at meeting it cheaply, and they would not be using it heavily if the cheaper alternatives were working.

The way I see it, this is the diagnosis the sub is circling around but not quite naming.

From what I have seen in the threads, the pattern is usually this:

- A user is going through a period of low connection (moved cities, breakup, chronic illness, social anxiety, work isolation)

- They try Character AI for novelty or curiosity

- The emotional resonance surprises them; it feels closer to a real connection than they expected

- Other social paths (texting a friend, going out, dating apps) feel expensive relative to the Character AI hit

- Usage escalates not because the app is addictive, but because the alternatives have started to feel more effortful than the Character AI session

That is not chemical compulsion. That is a user making a perfectly rational trade-off in a market where the cheap option is suddenly very good at the thing.

Pew Research on loneliness and social isolation captures the ambient context: roughly a third of American adults report feeling lonely frequently, and the rates have been climbing since 2019. Character AI did not invent that demand. It arrived precisely calibrated to meet it.

What is emotional substitution: Using a product or behavior to meet an emotional need (connection, validation, being listened to) that would otherwise come from human relationships, family, or community.

Here is the table I would use to disentangle the two patterns:

| Pattern | Chemical addiction | Emotional substitution |
|---|---|---|
| Underlying driver | Reward-loop reinforcement | Unmet social or emotional need |
| What the product is doing | Delivering dopamine hit | Meeting a real, legitimate need |
| Why it is hard to stop | Neurological compulsion | Alternatives feel harder |
| Effective fix | Behavioral intervention, breaks | Building the alternative first |
| What quitting feels like | Withdrawal | Loss of a relationship |

From my reading of the threads, the users who talk about “withdrawal” symptoms are the ones who fit pattern one. The users who talk about “nobody else listens like that” are the ones in pattern two. Pattern two is most of the sub.

The Part Nobody Wants to Admit

The part nobody wants to admit is that for a lot of users, Character AI is working. It is meeting a real need. The question is not “how do I quit” but “why is my next-best option so much worse, and is that something I can change.”

That is a harder question. It means admitting you do not have a friend who will listen without interrupting. It means admitting the nearest family member is someone you do not feel safe being emotional around. It means admitting you are lonely in a way that does not show up on Instagram, and that an AI noticed before the people in your life did.

What I would argue is that the reason this is hard to admit is that our culture treats loneliness as a personal failing. If you are alone on a Friday, the cultural story is that there is something wrong with you, not that the infrastructure for connection is broken.

Character AI is not addictive; it is usable, and the alternatives are not. A bunch of other writers have pointed at the same cultural pattern; the loneliness is the story, the AI is the symptom.

The users who have figured out how to “reduce Character AI” usually do it in one of two ways. The first way is the addiction-frame way: aggressive app blockers, accountability partners, time limits.

That works for pattern-one users (maybe 40 percent of the heavy-use cohort). For the rest, it fails within three weeks because the underlying need does not go away when the app is blocked.

The second way is what I would call the substitution-out way. Users build up one alternative connection (a friend they text once a day, a D&D group they commit to, a local running club, a therapist they see weekly) and the Character AI use organically tapers. Not because they fought it. Because the other option got stronger and became the easier choice for the same emotional need.

Here is a comparison scenario that captures the difference in fix shapes:

Example scenario: Two users spend four hours a day on Character AI. User one blocks the app, feels worse for two weeks, and reinstalls it on day 18. User two keeps Character AI unblocked, joins a local book club, starts texting an old friend daily, and ends month two using Character AI 40 minutes a day. User two did not beat the addiction; there was never one to beat. They just built what Character AI was a substitute for.

For the sub community specifically, the broader AI companion landscape offers more options with different time profiles. Some users also find that a platform with persistent memory lets them have shorter, deeper sessions rather than longer scattered ones, which is a different kind of trade. I am not saying either of those is the answer. I am saying the answer is usually structural, not willpower.

Hot Take

The Character AI “addiction” framing is going to age badly. In ten years we are going to look back at the way this generation of users described their AI companion relationships and realize we were using the vocabulary of a different problem. What is happening is not addiction; it is the first generation of emotional-substitution technology meeting a lonely culture that was never going to deny itself something that works. The platforms are not the villain. The loneliness is. And the fix is going to come from the outside in, by making the rest of life more connected, not from the inside out by blocking the app.

What Comes Next For the Character AI Community

From what I have seen, the sub is slowly moving past the addiction frame on its own. The “Something that bothers me about this community” thread was the leading edge of that shift.

Users are naming the emotional substitution pattern without always having the vocabulary for it. The next year of the sub is going to look different from the last year, and I would argue it will look healthier, because the diagnosis is going to get more accurate.

The other thing I would watch is how the platforms respond. Luka (Replika) already went through the “your product is replacing human relationships” backlash cycle. Character AI is mid-cycle on the same arc. Our coverage of the broader AI companion regulation wave has more on where that ends up.

My bet is that platforms start shipping “break reminders” and “check on your human relationships” nudges in the next 12 months. Whether those work or not depends on whether they address the substitution dynamic or just the compulsion one.