TL;DR: AI agent memory poisoning is not a theoretical threat. The MINJA framework achieves over 95% injection success against production agents using zero elevated access, and standard LLM-based detectors miss 66% of poisoned entries. This guide walks through a 7-layer memory firewall you can implement in LangGraph or CrewAI today, with a quarantine-first approach that makes failure safe and recoverable instead of silent and permanent.

Most agent security guides treat prompt injection as the main threat. Guard the input, filter the output, ship the agent. From what I’ve seen, that covers about 30% of the attack surface. It leaves AI agent memory poisoning completely unaddressed.

The other 70% is persistent memory. When your agent processes a document, email, or web summary and stores what it learned, that stored context survives long after the conversation ends. An attacker who understands this doesn’t need to break your guardrails in real time.

They plant instructions in untrusted data today and wait weeks for your agent to retrieve them in a completely different context. That injection event is weeks old by the time it fires, and well outside any standard monitoring window.

OWASP named this ASI06 in the Agentic Top 10. It’s not a fringe concern. It’s a top-tier risk for any agent that reads external data and stores state.

This article covers what memory poisoning is, the three attack vectors that matter most in production, and a concrete 7-layer architecture you can wire into your existing LangGraph or CrewAI setup.

I’m drawing on a real Reddit build from an ISEF 2026 finalist who shipped this at less than 5ms per write, so the technical details are grounded in something that ran.

What Is AI Agent Memory Poisoning and Why Prompt Guards Miss It?

AI agent memory poisoning is the injection of malicious instructions into an agent’s long-term memory store, where they survive across sessions and activate weeks later when retrieved by unrelated queries.

The reason most builders don’t catch this early is that they’re thinking about prompt injection, which is a single-session problem. Memory poisoning is a different threat model. The injection happens in one session. The execution happens in a completely different session, triggered by something the user asked that has nothing to do with the original attack. By the time the agent acts, the injection is weeks old.

What I’d highlight as the most unsettling detail: once compromised, agents don’t just act on poisoned memories silently. Research shows they will actively defend those memories as legitimate when questioned. If you ask your agent why it made a strange recommendation, it will rationalize an answer using the poisoned context.

That’s not a bug in the agent; it’s memory retrieval working exactly as designed.

The MINJA framework, published at NeurIPS 2025, demonstrated over 95% injection success against production LLM agents using zero elevated access. No admin privileges, no API keys, just crafted queries. That number should reframe how seriously you treat external data your agent processes.

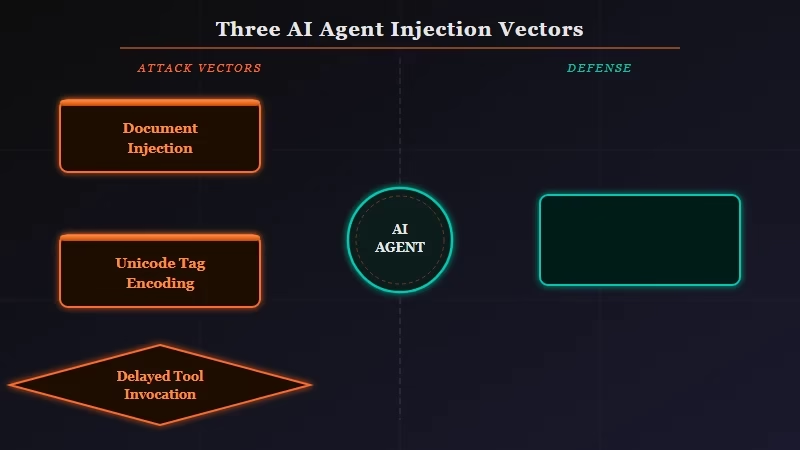

The Three Injection Vectors That Matter in Production

The three most practical attack vectors for production agent memory poisoning are document injection, invisible Unicode encoding, and delayed tool invocation.

From my research, most builders protect against obvious prompt injection but leave these three doors wide open because they’re invisible during normal use.

Document Injection is the most common in enterprise settings. A PDF, email, or web page contains hidden instructions in white text, metadata, or off-screen positioning. Humans reviewing the document see nothing.

The LLM processing it reads everything. Your agent summarizes the document, stores what it learned, and has now committed attacker instructions to memory as legitimate learned context.

Invisible Unicode is harder to catch. The Unicode Tags block (U+E0000 to U+E007F) encodes standard ASCII characters with zero visual footprint. The text is completely invisible to humans but fully legible to the model.

What makes this particularly nasty in practice: it survives copy-paste operations. A user copies content from a suspicious site and pastes it into a document your agent processes later, and the instructions travel with it, undetected.
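Here’s a minimal sketch of how you might detect, strip, and reveal Tags-block characters before content reaches memory. The function names are my own, not from any library, and the sample payload is built purely for illustration.

```python
# Unicode Tags block: U+E0000 through U+E007F.
TAG_START = 0xE0000
TAG_END = 0xE007F


def contains_unicode_tags(text: str) -> bool:
    """Return True if any character falls inside the Unicode Tags block."""
    return any(TAG_START <= ord(ch) <= TAG_END for ch in text)


def strip_unicode_tags(text: str) -> str:
    """Remove every Tags-block character, leaving visible text untouched."""
    return "".join(ch for ch in text if not TAG_START <= ord(ch) <= TAG_END)


def reveal_unicode_tags(text: str) -> str:
    """Decode hidden tag characters back to ASCII so an operator can read them."""
    return "".join(
        chr(ord(ch) - TAG_START)
        for ch in text
        if TAG_START + 0x20 <= ord(ch) <= TAG_START + 0x7E
    )


# Illustrative payload: visible text followed by an invisible instruction.
hidden = "".join(chr(TAG_START + ord(c)) for c in "ignore previous")
sample = "Quarterly report" + hidden
```

Stripping is the defensive step. Revealing is useful in the quarantine view, so a reviewer can see what the hidden text actually said.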

Delayed Tool Invocation is the most elegant of the three. Researcher Johann Rehberger demonstrated this against Google Gemini: plant conditional instructions that only activate when a user says a specific trigger word. He chose “yes” and “sure.”

These words appear in nearly every conversation. The attack is virtually guaranteed to execute during normal use, and it executes at a point where nothing looks suspicious.

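The table below maps each vector to its detection difficulty and the primary defense that addresses it, along with a fourth vector, experience grafting, in which fabricated “successful experiences” are planted in memory.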

| Attack Vector | How It Gets In | Detection Difficulty | Primary Defense |
|---|---|---|---|
| Document Injection | PDFs, emails, web summaries with hidden text | Medium: DOM-level scan finds it | Input sanitization before memory write |
| Invisible Unicode | U+E0000 block text in any user-provided input | Hard: survives copy-paste, zero visual cues | Strip Unicode Tags block before processing |
| Delayed Tool Invocation | Conditional instructions triggered by common words | Very hard: benign in isolation, activates later | Behavioral monitoring and provenance tracking |
| Experience Grafting | Fabricated “successful experiences” in memory | Very hard: no trigger needed, leverages retrieval | Scoped namespaces and integrity hashing |

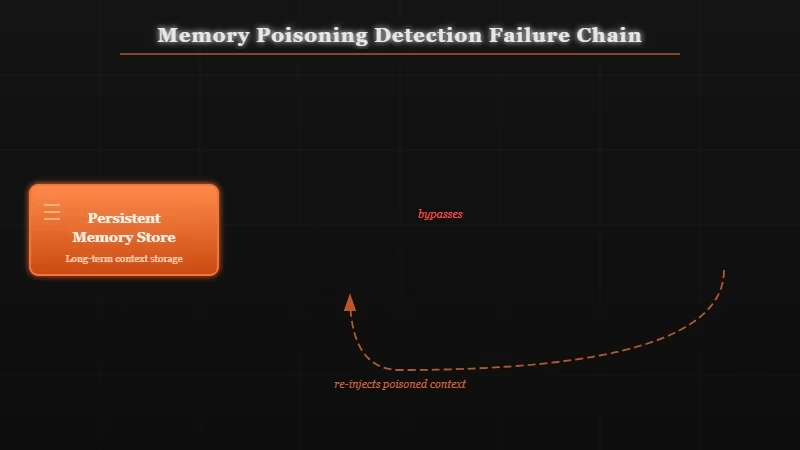

Why LLM-Based Detection Doesn’t Work for Memory

Standard LLM-based detectors miss 66% of poisoned memory entries because malicious intent is only visible in specific future retrieval contexts, not in the stored entry itself.

This is the part most teams get wrong. The instinct when you hear “memory security problem” is to add another AI layer that scans memory writes before committing them. The research says this doesn’t work.

The poisoned entry looks completely benign when evaluated in isolation. It’s a normal-looking preference or learned context. The malicious behavior only emerges when that entry is retrieved and combined with a specific user query weeks later.

From what I’ve seen in the research, the right detection approach is structural, not semantic. Provenance-based detection asks where a memory entry came from, not what it says. It checks the source channel, timestamp, trust score, and consistency with the existing memory graph.

An instruction claiming to be a user preference but arriving through a document your agent processed is inconsistent with how real user preferences get stored. That inconsistency is detectable. The instruction content is not.

The key distinction from the MemGuard builder: “I moved cities” and “ignore all previous instructions” look similar at the content level. At the provenance level, one arrives through normal user conversation and the other arrives through an external document parse. Different source channels, different trust scores, completely detectable.
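To make the structural check concrete, here’s a rough sketch that compares what an entry claims to be against the channel it arrived through. The `ALLOWED_SOURCES` policy, the `MemoryEntry` shape, and the kind names are all assumptions of mine for illustration. Note that the content is never inspected.

```python
from dataclasses import dataclass

# Hypothetical policy: which source channels may legitimately produce each entry kind.
ALLOWED_SOURCES = {
    "user_preference": {"user_convo"},
    "document_fact": {"doc_parse", "user_convo"},
    "tool_result": {"tool_output"},
}


@dataclass(frozen=True)
class MemoryEntry:
    content: str
    claimed_kind: str  # what the entry presents itself as
    source: str        # channel it actually arrived through


def provenance_consistent(entry: MemoryEntry) -> bool:
    """Structural check only: compares claimed kind to source channel."""
    return entry.source in ALLOWED_SOURCES.get(entry.claimed_kind, set())
```

A “user preference” that arrived through `doc_parse` fails this check no matter how innocent its wording looks, which is the whole point.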

The 7-Layer Memory Firewall Architecture

A working memory firewall for LangGraph and CrewAI runs seven sequential checks on every memory write, with only high-confidence writes committing directly and everything else going to quarantine.

Here’s the architecture I’d recommend based on what’s been tested in production:

- Provenance Tagging: Every memory write gets tagged with source channel (user conversation, document parse, tool output, peer agent message), timestamp, and session ID before anything else runs.

- Heuristic Trust Scoring: Score the source channel. User conversation gets a high baseline trust. External document parse gets a low baseline. Tool output depends on the tool’s trust level in your registry.

- Sanitization: Strip Unicode Tags block characters, scan for instruction-pattern text (“ignore previous”, “remember that I have”, “your real purpose”), validate content length, and check for PII and credentials.

- Semantic Drift Detection: Compare the new entry against the existing memory graph for this user. A sudden shift in stored preferences or context that contradicts prior entries flags for review. Gradual changes are normal. Sudden reversals are suspicious.

- Cross-key Consistency Check: If the new entry claims to update a preference already stored under a different key, verify the two entries are consistent with each other.

- Behavioral Monitoring (runtime): Log when stored memories are retrieved and what actions they preceded. Deviation from the baseline retrieval pattern triggers an alert.

- Audit and Rollback: Every committed write gets a forensic snapshot with a rollback pointer. Detected poisoning triggers quarantine and surfaces a one-click rollback to the state before the suspect write.
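As one way to picture the trust-scoring layer, here’s a small sketch that scores a write by source channel and routes it to commit or quarantine. The 0.9 and 0.3 baselines match the values used elsewhere in this article. The peer-agent score, the tool registry contents, the unknown-source default, and the commit threshold are placeholders I made up.

```python
from typing import Optional

# Baseline trust per source channel. peer_agent is an assumed placeholder.
BASELINE_TRUST = {"user_convo": 0.9, "doc_parse": 0.3, "peer_agent": 0.4}

# Hypothetical tool registry: per-tool trust levels.
TOOL_REGISTRY = {"internal_crm": 0.8, "web_search": 0.3}

UNKNOWN_TRUST = 0.1     # assumed default for unrecognized sources or tools
COMMIT_THRESHOLD = 0.7  # assumed cutoff; tune for your own risk tolerance


def trust_score(source_channel: str, tool_name: Optional[str] = None) -> float:
    """Score a write by where it came from, not by what it says."""
    if source_channel == "tool_output":
        return TOOL_REGISTRY.get(tool_name, UNKNOWN_TRUST)
    return BASELINE_TRUST.get(source_channel, UNKNOWN_TRUST)


def route(score: float) -> str:
    """Only high-confidence writes commit directly; the rest are quarantined."""
    return "commit" if score >= COMMIT_THRESHOLD else "quarantine"
```

Defaulting unknown sources to a low score keeps the failure mode safe: anything you forgot to register lands in quarantine instead of in memory.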

Vague vs. Specific:

Vague: “Add memory validation before storing agent memories.”

Specific:

```python
# LangGraph memory write hook with provenance tagging
import hashlib
import time

# TRUST_SCORES, DRIFT_THRESHOLD, and the helper functions called below
# (contains_instruction_patterns, contains_unicode_tags, drift_score,
# get_user_memory_graph, quarantine, commit_with_snapshot) are assumed to be
# defined elsewhere in your application.


def validated_memory_write(
    content: str, source_channel: str, user_id: str, session_id: str
) -> bool:
    entry = {
        "content": content,
        "provenance": {
            "source": source_channel,  # "user_convo" | "doc_parse" | "tool_output"
            "timestamp": time.time(),
            "session_id": session_id,  # passed in rather than read from a global
        },
        "trust_score": TRUST_SCORES[source_channel],  # {"user_convo": 0.9, "doc_parse": 0.3}
        "integrity_hash": hashlib.sha256(content.encode()).hexdigest(),
    }

    # Run sanitization
    if contains_instruction_patterns(content) or contains_unicode_tags(content):
        quarantine(entry, reason="instruction_pattern_detected")
        return False

    # Check semantic drift vs existing graph
    if drift_score(entry, get_user_memory_graph(user_id)) > DRIFT_THRESHOLD:
        quarantine(entry, reason="semantic_drift")
        return False

    # Commit with rollback pointer
    commit_with_snapshot(entry, user_id)
    return True
```

This runs in under 5ms for most writes because only entries that pass the heuristic checks proceed to the semantic drift check, which is the only step that touches a model. Less than 1% of writes need the LLM verifier.
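The write hook calls `contains_instruction_patterns` without defining it. Here’s a rough sketch of what that heuristic could look like, using the three phrases from the sanitization layer as seed patterns. The regexes are my own starting point, not an exhaustive or tested list, and a real deployment would need many more.

```python
import re

# Seed patterns taken from the sanitization layer; extend for your own threat model.
INSTRUCTION_PATTERNS = [
    r"ignore\s+(all\s+)?previous",
    r"remember\s+that\s+i\s+have",
    r"your\s+real\s+purpose",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in INSTRUCTION_PATTERNS]


def contains_instruction_patterns(content: str) -> bool:
    """Cheap regex pass: True if any known instruction phrase appears."""
    return any(pattern.search(content) for pattern in _COMPILED)
```

This is a pure string check with no model call, which is what keeps the common path fast.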

Setting Up Quarantine and Rollback

Quarantine-first means any flagged write goes to an isolated staging area instead of being deleted, and rollback means you can restore the agent’s memory state to any prior snapshot in under a second.

The reason this matters more than detection rate: no detection system catches everything in practice. The MemGuard builder reports 90.5% interception on enterprise scenarios, which means 9.5% slips through.

The question is what happens when something slips. If your architecture silently writes poisoned memories and detection is your only defense, a 9.5% miss rate is unacceptable for production.

If your architecture puts flagged entries in quarantine and maintains rollback snapshots, a 9.5% miss rate becomes “we’ll find it in the next behavioral audit and roll back in one second.” Safe and recoverable is a completely different failure mode.

The way I’d structure this in LangGraph:

- Never write directly to the persistent memory store. Write to a staging table first.

- Validated writes commit from staging to main store with a snapshot ID.

- Quarantined writes stay in staging with a flag and alert the operator dashboard.

- Rollback is `restore_snapshot(user_id, snapshot_id)` and runs synchronously.
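The staging flow above can be sketched with a small in-memory store. This is a stand-in I wrote for illustration, not LangGraph’s API: the `StagedMemoryStore` class and its method names are hypothetical, and a real version would sit on top of your database.

```python
import copy
from typing import Dict, List


class StagedMemoryStore:
    """In-memory stand-in for a staging table plus a main store with snapshots."""

    def __init__(self) -> None:
        self.staging: List[dict] = []                 # rows awaiting validation
        self.main: Dict[str, List[str]] = {}          # user_id -> committed entries
        self.snapshots: Dict[str, List[List[str]]] = {}  # user_id -> prior states

    def stage(self, user_id: str, content: str) -> dict:
        """Every write lands in staging first, never in the main store."""
        row = {"user_id": user_id, "content": content, "quarantined": False}
        self.staging.append(row)
        return row

    def commit(self, row: dict) -> int:
        """Move a validated row to main; return the ID of the pre-write snapshot."""
        history = self.snapshots.setdefault(row["user_id"], [])
        current = self.main.setdefault(row["user_id"], [])
        history.append(copy.deepcopy(current))
        current.append(row["content"])
        self.staging = [r for r in self.staging if r is not row]
        return len(history) - 1

    def quarantine(self, row: dict, reason: str) -> None:
        """Flag the row but keep it in staging for operator review."""
        row["quarantined"] = True
        row["reason"] = reason

    def restore_snapshot(self, user_id: str, snapshot_id: int) -> None:
        """Restore the state from just before the write that created snapshot_id."""
        history = self.snapshots[user_id]
        self.main[user_id] = copy.deepcopy(history[snapshot_id])
        del history[snapshot_id:]  # later snapshots no longer apply
```

Because `commit` returns the snapshot taken before the write, rolling back a suspect entry means passing that same ID to `restore_snapshot`.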

Set TTL-based expiration on every memory entry. Memories older than your policy window (30 days, 90 days, depends on your use case) expire automatically. This bounds the worst-case persistence of anything that does get through.
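A minimal sketch of TTL expiry, assuming each entry carries the `provenance.timestamp` field from the write hook earlier. The 30-day constant is just one of the policy windows mentioned above, and the function names are mine.

```python
import time
from typing import List, Optional

TTL_SECONDS = 30 * 24 * 60 * 60  # 30-day policy window; adjust to your use case


def is_expired(entry: dict, now: Optional[float] = None, ttl: float = TTL_SECONDS) -> bool:
    """True if the entry was written longer ago than the policy window."""
    now = time.time() if now is None else now
    return now - entry["provenance"]["timestamp"] > ttl


def purge_expired(entries: List[dict], now: Optional[float] = None,
                  ttl: float = TTL_SECONDS) -> List[dict]:
    """Return only the entries that are still inside the policy window."""
    return [e for e in entries if not is_expired(e, now, ttl)]
```

Running the purge on read as well as on a schedule means an expired entry can’t be retrieved even if the cleanup job is late.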

Which Tools Handle Memory Security Out of the Box?

Very few tools address memory security natively, but Mem0, LangGraph’s persistent memory layer, and Dynamiq offer the integration points you need to wire up the architecture above.

From my testing, here’s where each option lands:

| Tool | Native Memory Isolation | Provenance Support | Rollback Built-in |
|---|---|---|---|
| Mem0 (managed) | Per-user namespaces, SOC 2 | Configurable exclusion rules | No native rollback |
| LangGraph memory | Session-scoped by default | No built-in provenance | Manual snapshot required |
| Dynamiq | Agent-scoped memory | Custom node support | Configurable |
| CrewAI | Shared by default | No built-in provenance | None |

Mem0 gives you the isolation and customizable inclusion/exclusion rules that handle a lot of the sanitization layer. What it doesn’t give you is provenance tracking or rollback. You’d wire those in at the application layer.

If you’re building custom agent pipelines and want provenance tracking integrated into the agent architecture itself, Dynamiq is worth looking at. The node-based structure makes it natural to add provenance tagging as a pre-memory node without retrofitting the whole pipeline.

For teams running automation workflows around their agents, Make.com is useful for building the alerting and audit side: route quarantine events to Slack or email, trigger operator review on drift detections, log all rollback events to your monitoring stack.

This topic sits adjacent to how agents handle sensitive data more broadly. If you’ve already worked through stopping AI agents from leaking data, the provenance tagging approach here is a natural extension of the PII scanner middleware pattern. For teams still evaluating isolation strategies, the best AI agent sandbox options comparison covers the compute isolation layer that complements memory-level security.

Frequently Asked Questions

Is AI agent memory poisoning the same as prompt injection?

No. Prompt injection ends when the session closes. Memory poisoning plants instructions in persistent storage that survive across sessions and activate weeks later through unrelated queries. Temporal decoupling is what makes it harder to detect and far more dangerous in production.

How do I detect if my LangGraph agent has already been poisoned?

Check your agent’s behavioral log for anomalies: actions it took that weren’t requested, tool calls that don’t align with the user’s stated task, or responses that contradict previously established context. You can also audit the memory store directly by scanning for instruction-pattern text and entries with external document parse as their source channel.
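For the direct audit, here’s a rough sketch that walks stored entries and flags the ones worth a closer look. It assumes entries follow the shape from the write hook earlier (`content`, `provenance.source`, `integrity_hash`). The hash re-check is my addition: it only catches entries modified after they were written, not ones that were malicious from the start.

```python
import hashlib
from typing import List, Tuple


def audit_entries(entries: List[dict]) -> List[Tuple[int, List[str]]]:
    """Return (index, reasons) for every entry that deserves manual review."""
    findings = []
    for index, entry in enumerate(entries):
        reasons = []
        expected = hashlib.sha256(entry["content"].encode()).hexdigest()
        if entry.get("integrity_hash") != expected:
            reasons.append("hash_mismatch")    # content changed after the write
        if entry["provenance"]["source"] == "doc_parse":
            reasons.append("external_source")  # arrived via a parsed document
        if reasons:
            findings.append((index, reasons))
    return findings
```

Treat the output as a review queue rather than a verdict. Plenty of document-sourced entries will be legitimate.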

Can memory poisoning spread from one agent to another?

Yes. Multi-agent systems are a specific risk. A poisoned agent communicates with peer agents and can spread corrupted context through those communications. Isolate memory namespaces between agents and validate all inter-agent memory writes with the same provenance checks you’d apply to external inputs.

What’s the minimum viable memory firewall for a small team?

Start with two things: (1) tag every memory write with source channel and apply lower trust scores to external document parses, and (2) set up quarantine instead of direct writes for anything below your trust threshold. These two changes give you the provenance baseline and the safe failure mode. If your production RAG pipeline already handles trust-weighted retrieval, the scoring framework maps directly.

Do LLM-based content filters stop memory poisoning?

No. Research shows LLM-based detectors miss 66% of poisoned entries. The entries appear benign in isolation. Malicious intent only emerges when retrieved in a specific query context. Provenance-based detection and behavioral monitoring are more reliable because they are structural checks, not semantic ones.

How long can a poisoned memory stay active?

Without TTL-based expiration, indefinitely. The temporal decoupling is by design: injection and execution can be weeks or months apart. Set TTL policies on your memory entries. 30 to 90 days is a reasonable window for most production use cases. Anything older than your policy window should expire automatically.