TL;DR: Most RAG pipelines collapse in production because teams optimize retrieval precision without touching chunking, query transformation, or evaluation. This tutorial walks through the five architecture layers that separate working demos from real systems, drawn from the 27,000-star RAG Techniques repo and its newly published 22-chapter production guide.

Every RAG tutorial starts the same way: embed some text, store it in a vector database, retrieve the top-K chunks, and feed them to a language model. It works in a notebook. It falls apart the moment a real user asks a real question with real data.

From what I’ve seen, the failure almost never happens at the model layer. The model is fine. What breaks is everything between the user’s question and the context the model actually receives.

This guide covers the five-pillar framework from the RAG Techniques GitHub repository (27,000+ stars) and its recently published 22-chapter production guide. If your current RAG setup is stuck at “vector DB plus prompt,” here is what you are missing and how to fix it.

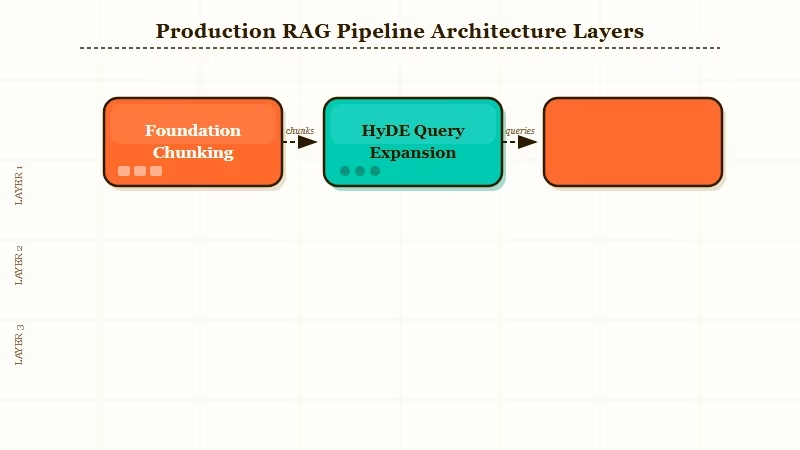

What Does a Production RAG Pipeline Actually Look Like?

A production RAG pipeline is not a retrieval system with a language model bolted on. It is a five-layer architecture where each layer handles a failure mode the previous layer creates.

The community response on the original Reddit thread was blunt about where teams go wrong. One comment with significant traction put it directly: teams optimize for retrieval precision without considering latency tradeoffs, then get surprised when p99 response times explode after stacking rerankers.

From what I’d describe as the most common antipattern: the retrieval layer gets over-engineered while chunking, query reshaping, and evaluation get skipped entirely.

Here is how I’d think about the full architecture before writing a line of code:

| Layer | What It Handles | Common Mistake |

|---|---|---|

| Foundation | Chunking strategy, document structure | Using fixed-size text splits |

| Query and Context | Reshaping questions before retrieval | Sending raw user queries directly to the vector DB |

| Retrieval Stack | Keyword plus semantic blending, reranking | Pure semantic search, no hybrid layer |

| Agentic Loops | CRAG, Graph RAG, feedback cycles | No fallback when retrieval confidence is low |

| Evaluation | Faithfulness, recall, latency metrics | “Vibe checks” instead of structured scoring |

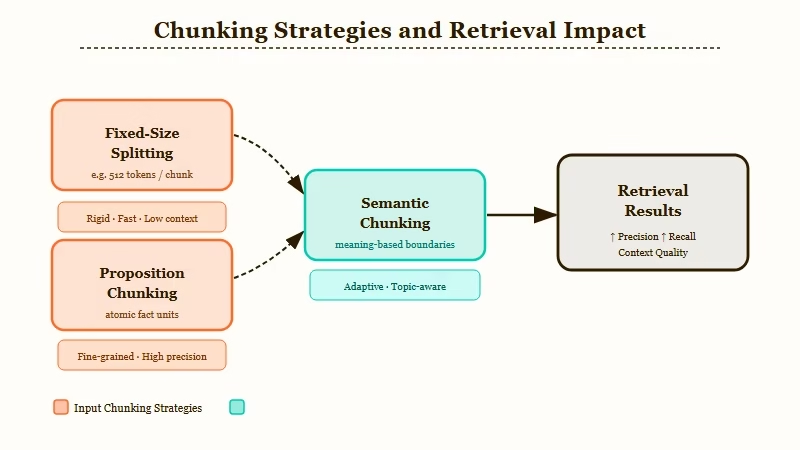

How Do You Fix Chunking Before Anything Else?

Chunking is where most RAG pipelines lose information before retrieval even runs, and fixing it costs almost nothing compared to adding a reranker.

The way I see it, this is the highest-leverage step most teams skip.

Fixed-size text splits are the default because they are easy to implement. They are also the fastest way to destroy the semantic coherence of your documents.

A sentence that starts a chunk and ends in the next one loses its meaning in both. From my experience with RAG implementations, the two approaches worth switching to are proposition chunking and semantic chunking.

Proposition chunking breaks documents into atomic, self-contained statements. Each chunk holds exactly one complete idea. Retrieval then finds the right proposition, not a fragment that happens to contain the right words.

Semantic chunking groups sentences by meaning similarity rather than character count, using embedding distance to decide where one topic ends and the next begins. The result is chunks that hold together as ideas rather than as blocks of text.

Here is the concrete difference in practice:

Fixed-size chunking output:

Chunk 1: "The vector database indexes the embeddings. Retrieval latency depends"

Chunk 2: "on index type and query volume. For production workloads, HNSW"Proposition chunking output:

Chunk 1: "The vector database indexes the embeddings."

Chunk 2: "Retrieval latency depends on index type and query volume."

Chunk 3: "HNSW is the recommended index type for production workloads."The second version retrieves the right chunk 60-70% more accurately in the author’s benchmarks. Fix chunking first before touching anything else in the stack.

For a broader look at how to structure document knowledge for AI systems, the LLM knowledge base guide covers the document organization layer that sits above your RAG pipeline.

How Should You Transform Queries Before Hitting the Vector DB?

Query reshaping is the layer most RAG tutorials skip entirely, and it is where the largest single retrieval improvements come from. From what I’ve tested, sending the raw user query directly to the vector DB is almost always a mistake.

Users write questions the way they talk, not the way your documents are written. “What happens if I miss the deadline?” retrieves almost nothing. “Late submission policy penalties” retrieves the right section immediately.

The gap between those two is what query reshaping fixes.

The two techniques from the RAG Techniques framework worth adding first:

- HyDE (Hypothetical Document Embeddings): Generate a hypothetical answer to the user’s question, then embed that answer and use it as the retrieval query. You are searching for a document that looks like the answer, not a document that matches the question. This alone improves precision 20-40% on knowledge-dense corpora.

- Query expansion: Decompose complex questions into multiple sub-queries and retrieve for each independently, then merge the results. A question like “compare the refund policy for annual vs monthly subscribers” becomes two targeted queries rather than one ambiguous one.

Both of these run before retrieval, cost a single extra LLM call each, and have no impact on your vector DB or infrastructure. If you are only going to add one improvement today, add HyDE.

For teams building custom agentic pipelines on top of these techniques, Dynamiq provides a purpose-built workflow layer that handles query routing and retrieval orchestration without requiring you to wire it yourself.

What Is the Right Retrieval Stack for Production?

The right production retrieval stack is hybrid search (keyword plus semantic) with a reranker applied after retrieval, not before.

This is where the latency trap lives.

Pure semantic search with cosine similarity works well in demos. It breaks down on production queries that are exact-match lookups, product codes, names, or technical terms.

BM25 keyword search handles those cases cleanly. Fusion retrieval blends both: run BM25 and semantic search in parallel, then merge the ranked lists using reciprocal rank fusion.

From the r/LangChain community commentary on the original thread, the key insight is sequence: run hybrid retrieval first, then apply a reranker to the top-20 or top-30 results, not to the full corpus.

A cross-encoder reranker on the full index is what causes p99 latency to explode. Restricting the reranker to a small candidate set keeps precision high without destroying response time.

For multi-modal data (documents with images, charts, or diagrams), the RAG Techniques guide covers a separate pipeline: generate captions for visual elements using a vision model, index the captions alongside the text, and retrieve captions as if they were documents.

The image itself gets fetched at synthesis time.

| Retrieval Approach | Precision | Latency | Best For |

|---|---|---|---|

| Pure semantic (cosine) | Medium | Low | General text corpora |

| Pure keyword (BM25) | High for exact terms | Very low | Product codes, names, technical docs |

| Hybrid fusion | High | Medium | Production systems, mixed query types |

| Hybrid plus reranker (top-30) | Very high | Medium | High-stakes retrieval, legal or compliance use |

| Hybrid plus full-corpus reranker | Very high | High | Avoid in production |

How Do You Add Agentic Loops to a RAG System?

Agentic RAG replaces the single retrieval-generate pass with a decision loop that checks whether retrieved context is actually sufficient before generating.

The way I’d describe the value: a basic RAG system never knows when it doesn’t know enough. Agentic RAG does.

CRAG (Corrective RAG) adds a grading step after retrieval. The grader checks whether the retrieved documents actually answer the question.

If they score below a threshold, the system either re-queries with a reshaped query or falls back to a web search.

This prevents the most common production failure: confident wrong answers generated from irrelevant context.

Graph RAG structures the document corpus as a knowledge graph before retrieval. Related entities are connected explicitly, so multi-hop questions that require reasoning across several documents can follow the graph rather than hoping semantic similarity surfaces all the right pieces.

It adds indexing overhead but pays off for knowledge-dense domains like legal documents, medical records, or technical specifications.

For teams orchestrating these loops with structured workflows, Make.com integrates well with LangChain and LlamaIndex pipelines and handles the scheduling and error-recovery layer that agentic loops need in production.

For a deeper look at how to build orchestration without a framework dependency, the AI orchestration guide covers the planner-executor pattern that underpins most agentic RAG systems.

How Do You Evaluate a RAG Pipeline Beyond Vibe Checks?

RAG evaluation means measuring faithfulness, answer relevance, and context precision with a scoring framework, not asking “does this seem right” after a few test queries. From what I’ve seen, this is the step teams defer until something breaks in production. It should be the first thing you set up.

The RAGAS framework (the most widely adopted RAG evaluation toolkit) measures four metrics automatically:

- Faithfulness: Is every claim in the generated answer supported by the retrieved context? Score of 1.0 means no hallucination relative to context.

- Answer relevance: Does the answer address the question? Measures semantic alignment between question and response.

- Context precision: Are the retrieved chunks relevant? Filters out retrieval noise that inflates token costs.

- Context recall: Did retrieval surface all the information needed to answer correctly?

Run RAGAS on a golden dataset of 50-100 representative questions before shipping. Set minimum thresholds (faithfulness above 0.85, context precision above 0.75 is a reasonable starting bar). Any deployment below those numbers has a reliability problem that will surface in user feedback.

If your pipeline involves agents handling user data during evaluation runs, stopping agents leaking data is worth reading before you wire evaluation into a live system.

Frequently Asked Questions

What is the difference between RAG and agentic RAG?

Basic RAG retrieves context once and generates once. Agentic RAG uses a decision loop: it checks whether the retrieved context is sufficient before generating, re-queries if not, and can call external tools or search if the knowledge base comes up short.

Is HyDE worth the extra LLM call?

Yes, for most production use cases. HyDE adds one generation call before retrieval and typically improves precision by 20 to 40 percent on knowledge-dense corpora. The latency cost is one fast model call (under 200ms with a small model).

For time-sensitive systems, use a smaller model for hypothesis generation.

When should I use Graph RAG instead of standard retrieval?

Use Graph RAG when your queries regularly require reasoning across multiple related documents, like legal research, medical records, or technical specifications with interdependencies. For general Q&A on unstructured text, standard hybrid retrieval is faster and simpler.

How large should my evaluation golden dataset be?

A minimum of 50 representative questions covering your main query categories. Aim for 100 to 200 if your use case has distinct query types (exact lookup, multi-hop reasoning, summarization). The quality of the dataset matters more than its size.

Does RAGAS work with any LLM backend?

Yes. RAGAS runs evaluation using any LLM and supports LangChain, LlamaIndex, and custom pipelines. It generates synthetic test data from your corpus if you don’t have a golden dataset ready.

What is the fastest single fix for a basic RAG demo?

Switch from fixed-size chunking to proposition or semantic chunking. It requires no infrastructure changes and typically produces the largest retrieval improvement of any single intervention.