TL;DR: Building AI orchestration without LangChain takes less code than you think. The key pattern treats the Planner LLM and Executor as separate trust zones, with your own validation layer in between. This tutorial shows the exact architecture from a working production system, no framework required.

I used LangChain for about six months. Every time I needed to debug something, I ended up reading three layers of abstraction instead of my own code.

A thread on r/LangChain put it clearly: “I ripped LangChain out and replaced it with 80 lines of Python and it works better.”

That post had 87 upvotes and 28 comments, most of them agreeing. The pattern the developer shared is worth documenting properly, because it solves the one thing LangChain makes difficult: knowing what your LLM actually decided to do before it does it.

This is that tutorial.

Why Developers Are Dropping LangChain for Custom Orchestration

LangChain adds abstraction cost that outweighs its value once you understand the underlying pattern it implements.

The framework does real things: it handles prompt templates, manages memory, connects to tools, and wraps the Anthropic and OpenAI APIs. Once you have built a few agents, you realize those are not the hard parts. The hard part is knowing when to trust what comes out of the LLM.

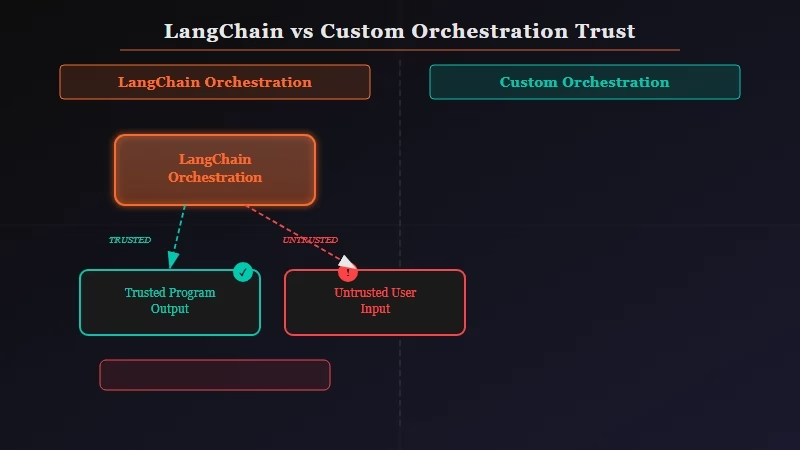

LangChain treats LLM outputs as program output. The pattern emerging across the community in 2026 treats them as untrusted user input. That distinction changes everything about how you structure the code.

My own shift happened when a LangChain agent called a DELETE endpoint I had not intended to expose. The framework passed the LLM’s tool selection directly to the executor with no validation layer I could hook into without fighting the abstraction stack. That was the last time I used it for anything production-facing.

The broader community shift away from LangChain is documented if you want the full picture. What this tutorial gives you is the replacement pattern.

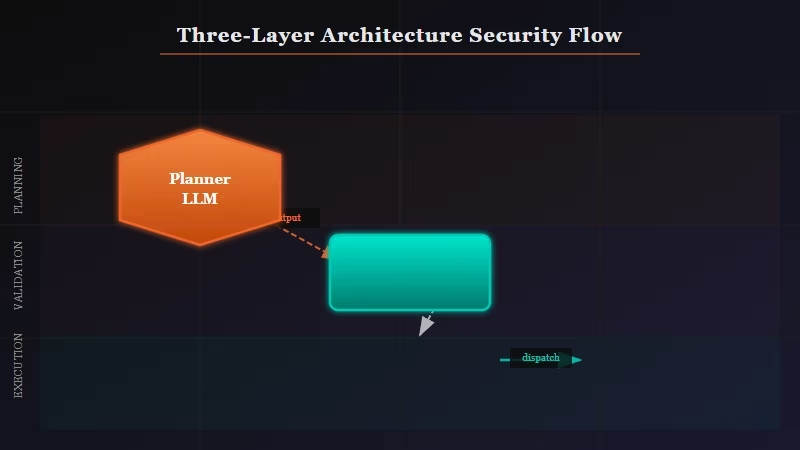

How the Three-Layer Architecture Works

The architecture has three layers: a Planner LLM that decides what to do, a trust boundary that validates the decision, and an Executor that carries it out.

The layer most developers skip is the middle one. Without it, you are trusting the LLM to correctly name a tool, correctly format the arguments, and stay within the scope you intended. Three things that all fail in production at some point.

Here is the minimal skeleton:

class OrchestratorEngine:

def __init__(self, available_tools):

self.tools = {t.__name__: t for t in available_tools}

def run(self, user_query: str) -> str:

plan = self._call_planner(user_query) # LLM decides

validated = self._validate_plan(plan) # you check it

return self._execute(validated) # then it runsThe trust boundary is just a function that lives between the LLM call and the tool call. It is not a framework concept. It is a function you write once and never have to think about again.

Building the Planner LLM

The Planner LLM’s only job is to produce a structured JSON decision, never to take any action directly.

Constrain the output format in your system prompt. The planner sees the list of available tools and picks one. It never runs anything.

import anthropic, json

client = anthropic.Anthropic()

def _call_planner(user_query: str, tool_names: list) -> dict:

system_prompt = f"""

You are a planning agent. Given a user request, output a JSON object with:

- "tool": one name from this exact list: {tool_names}

- "args": a dict of arguments for that tool

- "reasoning": one sentence explaining your choice

Output ONLY valid JSON. No prose before or after.

"""

response = client.messages.create(

model="claude-haiku-4-5-20251001",

max_tokens=512,

system=system_prompt,

messages=[{"role": "user", "content": user_query}]

)

return json.loads(response.content[0].text)The Anthropic Messages API has the full parameter reference if you want to adjust temperature or add caching.

For faster and cheaper inference on the planner step, running a quantized local model can cut your per-call cost to near zero once you are past the prototype stage.

If the JSON parse fails, the LLM went off-script. Catch it at parse time, not at execution time. That parse error is your signal to retry with a more constrained prompt or fall back to a default action.

The Trust Boundary Explained

The trust boundary is the function that makes this architecture production-safe.

Here is the expanded version using Pydantic for structure validation plus an explicit allowlist check:

from pydantic import BaseModel, ValidationError

class ToolCall(BaseModel):

tool: str

args: dict

reasoning: str

def _validate_plan(self, plan: dict) -> dict:

try:

call = ToolCall(**plan)

except ValidationError as e:

raise ValueError(f"Malformed plan: {e}")

if call.tool not in self.tools:

raise ValueError(f"Tool '{call.tool}' not in allowlist: {list(self.tools.keys())}")

return call.dict()Pydantic handles the structure check. Your allowlist handles the scope check. Any LLM hallucination that tries to call a nonexistent tool or pass malformed arguments fails loudly here, not silently downstream.

The trust problem with AI systems in production almost always traces back to skipping this validation step. The LLM is not your code. Its output is input.

How to Wire It All Together

A complete working orchestrator in about 80 lines of Python handles the most common single-hop agent pattern with no framework dependency.

Setting up the full pattern takes five steps:

- Define your tool functions with clean signatures and docstrings.

- Register them in a dict keyed by function name.

- Write the Planner call with a JSON-constrained system prompt listing available tools.

- Add the allowlist check and Pydantic validation in the trust boundary function.

- Wire the three pieces into a

run()method that logs each step for debugging.

Here is a complete example with two tools:

import json, anthropic

from pydantic import BaseModel, ValidationError

client = anthropic.Anthropic()

# Step 1: Define tools

def get_weather(city: str) -> str:

return f"Weather in {city}: 72F, sunny" # replace with real API call

def search_docs(query: str) -> str:

return f"Found 3 docs matching: {query}" # replace with real search

# Step 2: Register tools

TOOLS = {"get_weather": get_weather, "search_docs": search_docs}

class ToolCall(BaseModel):

tool: str

args: dict

reasoning: str

def run_agent(user_query: str) -> str:

# Step 3: Plan

response = client.messages.create(

model="claude-haiku-4-5-20251001",

max_tokens=256,

system=f"Output JSON only: {{tool: one of {list(TOOLS.keys())}, args: dict, reasoning: str}}",

messages=[{"role": "user", "content": user_query}]

)

plan = json.loads(response.content[0].text)

# Step 4: Validate

try:

call = ToolCall(**plan)

except ValidationError as e:

return f"Plan validation failed: {e}"

if call.tool not in TOOLS:

return f"Unknown tool: {call.tool}"

# Step 5: Execute

result = TOOLS[call.tool](**call.args)

print(f"[DEBUG] Used {call.tool}: {call.reasoning}")

return result

print(run_agent("What is the weather in Tokyo?"))

# Output: Weather in Tokyo: 72F, sunnyThis is production-deployable as-is. Add retry logic on the Anthropic call, structured logging to your observability stack, and you have a complete agent runtime you fully control.

For multi-agent setups with shared memory between steps, Dynamiq builds on this exact pattern and adds the persistence layer that raw Python does not handle cleanly.

When to Add a Framework Back

Frameworks earn their complexity cost at two specific points: when you need multi-agent memory that persists across sessions, or when you need observability tooling you do not want to build yourself.

The raw Python approach handles most production cases. Single-hop agents, simple tool routing, and basic retry logic all work cleanly without any dependency.

Where it starts to struggle is multi-agent setups where Agent A needs to know what Agent B decided three steps ago.

| Your situation | Recommended approach |

|---|---|

| Single agent, fewer than 5 tools | Raw Python with trust boundary |

| Multi-step chain, same session | Raw Python, pass messages list forward |

| Multi-agent with shared memory | Dynamiq or LangGraph (not LangChain) |

| Production observability required | LangSmith or Langfuse on top of raw Python |

| Prototype or proof of concept | Raw Python, always |

LangGraph handles the stateful multi-agent case better than the original LangChain. It gives you the graph structure without the abstraction layers that made debugging painful.

For the other 80% of agent use cases, the pattern in this tutorial is all you need.

Quick Takeaways

- The trust boundary pattern treats LLM outputs as untrusted input, validated before any tool runs

- Three layers: Planner LLM (decides) > Trust Boundary (validates) > Executor (acts)

- A working single-hop orchestrator takes about 80 lines of Python with no framework dependency

- Pydantic covers structure validation; your tool allowlist covers scope validation

- Add a framework only when you need persistent multi-agent memory or external observability tooling