TL;DR: You can build a Reddit-scanning AI agent in n8n that reads target subreddits, extracts pain points, scores them for product potential, and sends you a daily digest. The full workflow takes about 90 minutes to set up and costs roughly $0.03 per run. This guide covers the exact node sequence and prompts that work in production.

The most reliable way to find product ideas is to watch what people complain about publicly and repeatedly. Reddit is the largest database of publicly documented frustrations on the internet.

Scanning it manually is tedious and slow.

From what I’ve seen, the builders who have cracked product-market fit fastest in 2026 are not running surveys or doing customer interviews first.

They are building lightweight AI agents to monitor Reddit communities at scale, then validating only the highest-signal complaints before they write a single line of product code.

This tutorial walks through the exact n8n workflow I’d build to do this. I’ll show you the node configuration, the prompt that consistently extracts signal from noise, and the scoring logic that separates “worth investigating” from “someone just venting.”

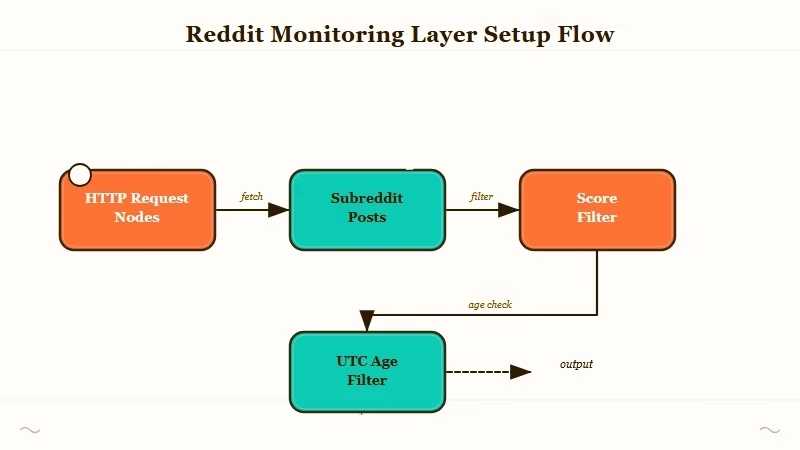

How Do You Set Up the Reddit Monitoring Layer?

The Reddit monitoring layer uses n8n’s HTTP Request node to pull from Reddit’s public JSON API on a daily schedule, then filters posts to only those describing a specific frustration or unmet need.

What is n8n: An open-source workflow automation platform with a visual node editor. It runs locally or on a cloud instance and connects AI models, APIs, and data sources without custom code.

Before you write any AI logic, you need a reliable feed. Here is what I’d build as the input layer:

- Schedule Trigger node, set to run daily at 7am. This is the heartbeat of the whole workflow.

- HTTP Request node (x3, run in parallel), one per subreddit. Point each at the Reddit JSON API:

GET https://www.reddit.com/r/{subreddit}/new.json?limit=50&sort=newSet a custom User-Agent header (Mozilla/5.0) or Reddit will return a 429. I’d start with r/Entrepreneur, r/smallbusiness, and r/SideProject for general product research.

- Merge node, combines the three feeds into a single array.

- Filter node, keeps only posts where

score >= 5andnum_comments >= 3. Posts with no engagement are usually noise.

Vague: “Filter Reddit posts for quality.” Specific: Set the Filter node to: {{$json.data.score}} >= 5 AND {{$json.data.num_comments}} >= 3. This removes zero-engagement posts before the AI ever sees them.

One thing I learned from testing: also filter by created_utc to keep only posts from the last 24 hours. Otherwise the agent re-processes old posts every run and your AI costs stack up.

| Node | Type | Purpose |

|---|---|---|

| Schedule Trigger | Built-in | Fires daily at 7am |

| HTTP Request (x3) | Core | Pulls new posts from each subreddit |

| Merge | Built-in | Combines feeds into one array |

| Filter | Built-in | Removes low-signal posts |

| Code | Built-in | Normalizes post fields |

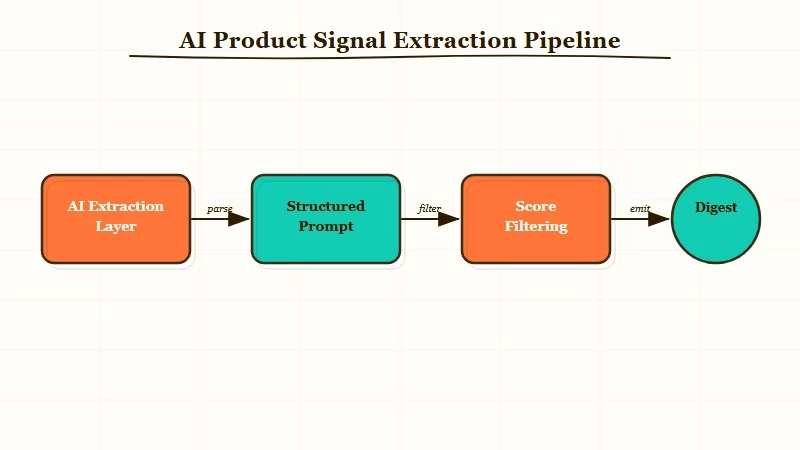

How Do You Extract Product Signals with AI?

The AI extraction layer passes each filtered post to a language model with a structured prompt that scores the post across three dimensions: problem clarity, frequency signal, and monetization fit.

This is where most tutorials go wrong. They use a generic “summarize this post” prompt and get generic output. From my experience, the signal-to-noise ratio collapses immediately when you do not give the model a specific evaluation framework.

It is the same root cause behind why most AI agents fail in production. A vague output contract means downstream logic has nothing reliable to parse.

Here is the prompt that works. Drop it directly into an n8n AI Agent or OpenAI node:

You are a product opportunity analyst. Evaluate this Reddit post and return valid JSON only.

Post title: {{$json.title}}

Post body: {{$json.selftext}}

Subreddit: {{$json.subreddit}}

Return this exact JSON structure:

{

"problem": "one sentence describing the core problem",

"is_recurring": true or false (is this a pattern vs a one-off?),

"diy_workaround": "what the user is currently doing to solve it, or null",

"monetization_fit": "low" | "medium" | "high",

"score": integer 1-10,

"reason": "one sentence explaining the score"

}

Scoring criteria:

- 8-10: Clear pain, no good existing solution, user is willing to pay

- 5-7: Real pain, existing solutions exist but are clunky or expensive

- 1-4: Vague complaint, already well-solved, or unlikely to monetizeConnect this node to a Code node that filters for score >= 7 only. Everything below that threshold gets discarded.

The output from high-scoring posts is what feeds your daily digest. From what I’ve seen, a 50-post input typically yields 3-6 high-signal items per run, which is exactly the right volume to action without overwhelm.

Which AI Model Should You Use for This?

Claude Sonnet 4.6 or GPT-4o Mini both work well for this structured extraction task. I’d default to GPT-4o Mini for cost and Claude for accuracy if the posts are nuanced or context-heavy.

Here is how the cost math works out for daily runs across three subreddits, based on OpenAI’s published API pricing:

| Model | Input cost (per 1M tokens) | Output cost | Est. daily cost (150 posts) |

|---|---|---|---|

| GPT-4o Mini | $0.15 | $0.60 | ~$0.02 |

| Claude Haiku 4.5 | $0.25 | $1.25 | ~$0.03 |

| Claude Sonnet 4.6 | $3.00 | $15.00 | ~$0.35 |

| GPT-4o | $2.50 | $10.00 | ~$0.30 |

For this use case, GPT-4o Mini hits the right balance. The extraction task is structured and well-defined, so you do not need a frontier model. Save Sonnet or GPT-4o for the validation step if you decide to dig deeper into a specific opportunity.

How Do You Build the Scoring and Digest Output?

The scoring layer aggregates today’s high-signal posts, deduplicates by topic cluster, and sends a formatted daily digest via email or Slack.

After the AI extraction node, I’d wire up the following sequence:

- Aggregate node, collect all JSON objects from the AI node into a single array.

- Sort node, sort by

scoredescending so the best opportunities surface at the top. - Code node, deduplicate by checking if two posts describe the same underlying problem. Use a simple keyword overlap check on the

problemfield. - Gmail / Slack node, format and send. Use Make.com’s automation tools if you want to also log results to a Google Sheet or Notion database in the same workflow.

The digest format that I find most useful is:

Subject: Reddit Product Opportunities, April 10, 2026

HIGH SIGNAL (score 8+):

1. [r/Entrepreneur] Score: 9/10

Problem: No affordable tool for freelancers to automate follow-up emails with Stripe data

Current workaround: Manually copying invoice data into Gmail drafts

Monetization fit: HIGH

Thread: https://reddit.com/r/...

2. [r/SideProject] Score: 8/10

Problem: ...Plain text, no fluff. Each item links back to the original thread so you can read the comments before deciding whether to pursue it.

Here is a concrete before/after of the output quality difference between a vague prompt and the structured one above:

Vague prompt output: “The user is having trouble with email follow-ups and wants a better solution.”

Structured prompt output: {"problem": "No affordable tool for freelancers to automate follow-up emails using Stripe invoice data", "isrecurring": true, "diyworkaround": "manually copying invoice IDs into Gmail drafts", "monetization_fit": "high", "score": 9, "reason": "Clear pain point with specific manual workaround and obvious SaaS angle"}

The second output is directly actionable. The first is noise dressed up as insight.

Should You Use n8n or Make.com for This?

For this specific workflow, n8n is the better choice because it handles the parallel subreddit fetches cleanly and gives you more control over the AI node configuration. Make.com is the better option if you want to connect the output to a CRM or Google Sheet without writing code.

From my testing, n8n’s AI Agent node is better suited for structured JSON extraction tasks. Make.com’s OpenAI integration is more straightforward but less flexible when you need custom JSON schemas in the response.

The how to build AI orchestration pattern I’d apply here is the planner-executor split: the scheduling and filtering logic lives in n8n, and the AI call is a single, stateless extraction step. Keep them separate.

A few things worth knowing before you choose:

- n8n self-hosted is free and gives you full control. n8n Cloud starts at $20/month for production use.

- Make.com charges by operations. A 150-post daily run with AI costs roughly 450 operations, which fits in the free tier for light use.

- LangGraph is the right call if you need the agent to loop, maintain memory across runs, or handle multi-step reasoning. For this workflow, it is overkill.

If you are already using Make.com for other automation and just want to add Reddit scanning as one more workflow, use Make. If you are starting fresh and want the most control over the AI logic, use n8n.

For a broader comparison of AI agent tools in 2026, the differences come down to where you need control and where you want convenience.

Frequently Asked Questions

The most common questions about Reddit-scanning AI agents cover API limits, cost, and what to do with the output.

Does Reddit block automated access to its JSON API?

Reddit’s public JSON API (/r/{sub}/new.json) does not require authentication and is not rate-limited for light use. Keep requests under 60 per minute and include a descriptive User-Agent header. Running once daily on 3-5 subreddits is well within acceptable use.

How much does this workflow cost to run daily?

At 150 posts per day using GPT-4o Mini for extraction, expect roughly $0.02 to $0.05 per day. Running on n8n self-hosted, the only variable cost is the AI API call. Monthly cost is under $2 for most users.

Can I scan competitor subreddits instead of general ones?

Yes, and that is often more valuable. If you are building a developer tool, scan r/webdev and r/devops. If you are building a productivity app, scan r/productivity and r/ADHD. The more specific the subreddit, the higher the signal density.

What should I do with the high-scoring opportunities?

Do not build immediately. Validate first. Read the full thread and comments for the top-scoring items. Check if a solution already exists with a Google search for the problem description. If you find nothing good, post a question in the subreddit asking about the problem before you build. Confirm demand in public before you write code.

Does this workflow work for finding content ideas too?

Yes. Replace the product-scoring prompt with a content-scoring prompt that evaluates whether a post represents a question many people are asking but few articles answer well. The node structure is identical.