What Happened: xAI quietly pushed Grok 4.3 to its API on April 30, 2026 at $1.25 input and $2.50 output per million tokens, with a 1M token context window and 207 tokens per second output speed. It scores 53 on Artificial Analysis’s Intelligence Index, behind GPT-5.5 (60) and Claude Opus 4.7 (57). It is a price and speed play, not a frontier model.

The Grok 4.3 release in April 2026 landed almost without a press release. xAI flipped the switch on the API on April 30, 2026, and the only obvious signal was a tiny banner in the developer console telling existing customers to migrate from grok-4.20 to grok-4.3.

I went in expecting another “Opus level” promise from Elon. What I got was a model that prices like a budget option, runs at the speed of a sports car, and quietly trails the actual frontier models on every benchmark that matters.

If you have been waiting for the Grok release that finally beats Anthropic and OpenAI on raw intelligence, this is not it. What it is, in my read, is a very smart re-positioning of xAI as the cheap-and-fast lane for production workloads, not the smart-money lane for hard reasoning. That distinction matters more than the number on the model card.

What Actually Happened

Grok 4.3 is xAI’s flagship API model as of April 30, 2026, available at $1.25 per million input tokens and $2.50 per million output tokens with a 1 million token context window.

xAI’s official model docs were updated overnight on April 30 to recommend all API callers move to grok-4.3. The early developer reception has skewed cool, with users questioning whether this is the long-promised “Opus level” Grok or something quieter.

The release date is not the only fresh signal. Grok 4.3 Beta first appeared in the model selector on April 17, 2026, locked behind the new SuperGrok Heavy tier at $300 per month.

Roughly two weeks of paid beta, then a quiet API push. That is a different cadence from the launch-event-and-tweetstorm playbook xAI has used for every Grok release since 2.0.

The release also coincides with Elon Musk’s testimony in the OpenAI versus xAI trial, where Musk acknowledged that xAI’s earlier models were partially trained on OpenAI tech, per the TechCrunch testimony report. My read on the same-day timing: nobody at xAI thought the release deserved a louder push than the lawsuit news. That itself is the story.

Why Grok 4.3 Isn’t the Opus 4.7 Killer Elon Promised

Grok 4.3 is not the top frontier model right now: on the Artificial Analysis Intelligence Index as of release day, it ranks fourth, behind GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro Preview.

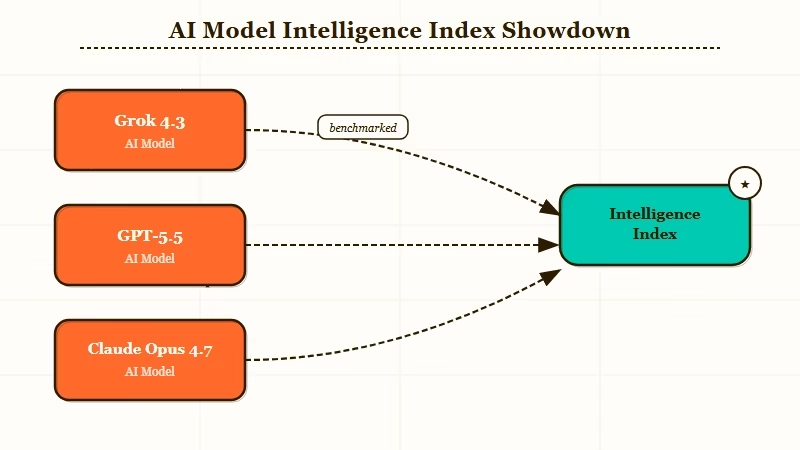

The independent Artificial Analysis benchmarks put Grok 4.3 at a score of 53, well above the 34 median for its price tier but a step behind GPT-5.5 at 60, Claude Opus 4.7 at 57, and Gemini 3.1 Pro Preview also at 57. The way I see it, that is not a trivial gap. A 7-point Intelligence Index swing usually translates to a noticeably worse experience on multi-step reasoning, code, and verification.

The mood among early developer testers has crystallised around one question, neatly summed up by an early r/singularity reply: “Is this supposed to be the model Elon promised to be ‘opus level’?” The honest answer from the responses underneath is no.

Grok 4.3 is competent. It is not a frontier model.

What surprised me was how candid the official xAI docs are about this. xAI does not market 4.3 as “the smartest”. It markets it as the fastest and cheapest of its serious-tier models. That is a quieter pitch than what the X account usually broadcasts, and it reads to me like an admission of where the model fits in the lineup.

How Does Grok 4.3 Compare to GPT-5.5 and Claude Opus 4.7

On intelligence Grok 4.3 trails both, and on speed it crushes both. On price it costs half of GPT-5.5 and a quarter of Opus 4.7 for input tokens, and a quarter and a tenth respectively for output tokens.

Here is how the three models stack on the dimensions that matter for production:

| Metric | Grok 4.3 | GPT-5.5 | Claude Opus 4.7 |
|---|---|---|---|
| Intelligence Index | 53 | 60 | 57 |
| Output speed (tokens/s) | 207 | 87 | 59 |
| Input price (per 1M tokens) | $1.25 | $2.50 | $5.00 |
| Output price (per 1M tokens) | $2.50 | $10.00 | $25.00 |
| Context window (tokens) | 1M | 256K | 1M |
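If you want to check the price ratios yourself, a few lines over the list prices in the table are enough. This is a sketch that covers list prices per million tokens only; it ignores verbosity, caching, and batch discounts.

```python
# List prices in USD per 1M tokens, copied from the comparison table.
PRICES = {
    "Grok 4.3": {"input": 1.25, "output": 2.50},
    "GPT-5.5": {"input": 2.50, "output": 10.00},
    "Claude Opus 4.7": {"input": 5.00, "output": 25.00},
}


def price_ratio(model: str, baseline: str, kind: str) -> float:
    """Return model's list price as a fraction of baseline's ('input' or 'output')."""
    return PRICES[model][kind] / PRICES[baseline][kind]


for rival in ("GPT-5.5", "Claude Opus 4.7"):
    for kind in ("input", "output"):
        ratio = price_ratio("Grok 4.3", rival, kind)
        print(f"Grok 4.3 {kind} price vs {rival}: {ratio:.2f}x")
```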

What I’d flag is the speed asymmetry. Once output starts, Grok 4.3 streams a typical 1,000-token answer roughly 2.4x faster than GPT-5.5 and 3.5x faster than Opus 4.7. For voice agents, real-time chat surfaces, and any product where latency is the customer-experience bottleneck, that is not a small edge.
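The 2.4x and 3.5x figures are simply ratios of the measured output speeds. A minimal sketch, assuming a constant streaming rate and deliberately ignoring time-to-first-token:

```python
# Output speeds in tokens per second, from the comparison table.
SPEED_TPS = {"Grok 4.3": 207, "GPT-5.5": 87, "Claude Opus 4.7": 59}


def streaming_seconds(model: str, output_tokens: int) -> float:
    """Time to stream output_tokens at a constant rate, excluding time-to-first-token."""
    return output_tokens / SPEED_TPS[model]


for model in SPEED_TPS:
    print(f"{model}: {streaming_seconds(model, 1_000):.1f}s to stream 1,000 tokens")

# Relative speed-up of Grok 4.3 for the same output length.
print(round(SPEED_TPS["Grok 4.3"] / SPEED_TPS["GPT-5.5"], 1))  # 2.4
print(round(SPEED_TPS["Grok 4.3"] / SPEED_TPS["Claude Opus 4.7"], 1))  # 3.5
```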

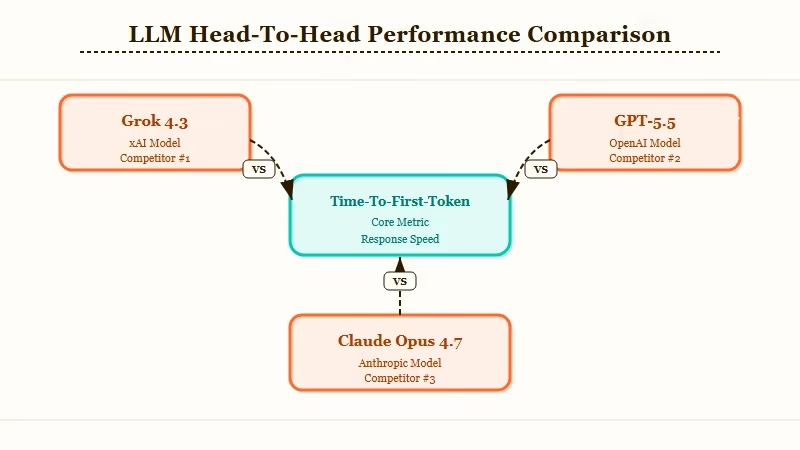

The catch is one I did not expect. Grok 4.3 has the highest time-to-first-token (TTFT) of any model at its tier, 12.65 seconds versus a 2.82s median, per Artificial Analysis.

It thinks for a long time, then talks fast. The end-to-end latency for short responses is worse than GPT-5.5; the speed advantage only shows up on long outputs. That is a counter to the headline pitch and worth knowing before you put it behind a chat UI.
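To see where the crossover sits, here is a rough latency model: end-to-end time is TTFT plus output tokens divided by output speed. The numbers here give no TTFT for GPT-5.5, so the rival in this sketch is hypothetical: the 2.82s tier-median TTFT paired with GPT-5.5's 87 tokens/s. Swap in measured values before trusting the break-even point.

```python
def end_to_end_seconds(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Rough latency model: wait for the first token, then stream at a constant rate."""
    return ttft_s + output_tokens / tokens_per_s


def break_even_tokens(ttft_a: float, tps_a: float, ttft_b: float, tps_b: float) -> float:
    """Output length where model A (slow start, fast stream) catches model B.

    Solves ttft_a + n / tps_a == ttft_b + n / tps_b for n; assumes tps_a > tps_b.
    """
    return (ttft_a - ttft_b) / (1 / tps_b - 1 / tps_a)


GROK_TTFT_S, GROK_TPS = 12.65, 207
# Hypothetical rival: the 2.82 s tier-median TTFT paired with GPT-5.5's 87 tok/s.
RIVAL_TTFT_S, RIVAL_TPS = 2.82, 87

for n in (200, 1_000, 5_000):
    grok = end_to_end_seconds(GROK_TTFT_S, GROK_TPS, n)
    rival = end_to_end_seconds(RIVAL_TTFT_S, RIVAL_TPS, n)
    print(f"{n:>5} tokens: Grok 4.3 {grok:.1f}s vs rival {rival:.1f}s")

crossover = break_even_tokens(GROK_TTFT_S, GROK_TPS, RIVAL_TTFT_S, RIVAL_TPS)
print(f"Grok 4.3 pulls ahead after ~{crossover:.0f} output tokens")
```

Under those assumptions Grok 4.3 only pulls ahead past roughly 1,500 output tokens, which is why a short chat reply can feel slower even though the stream itself is faster.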

What Does Grok 4.3 Really Cost to Run

At sticker price Grok 4.3 looks like a bargain, but the model is verbose enough that real per-task spend lands closer to mid-tier than the price card suggests.

The detail buried in the Artificial Analysis test: Grok 4.3 used 88 million tokens to complete the same Intelligence Index evaluation that the median model finished in 35 million. That is roughly 2.5x verbosity. From my read, that means the headline $2.50 per million output tokens should be mentally adjusted up to an effective rate closer to $6.30 per million, compared with a median-verbosity competitor charging the same nominal rate.
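The $6.30 figure is the list price scaled by the verbosity ratio. This sketch assumes, as a simplification, that the 88M and 35M counts are all billed at the output rate; the function name is illustrative.

```python
def effective_output_price(list_price_per_m: float, tokens_used: float, median_tokens: float) -> float:
    """Scale a per-1M-token list price by how many more tokens the model spends than the median."""
    return list_price_per_m * tokens_used / median_tokens


# Intelligence Index run: 88M tokens for Grok 4.3 vs a 35M median (Artificial Analysis).
verbosity = 88 / 35
print(f"verbosity: {verbosity:.2f}x")  # 2.51x
print(f"effective output price: ${effective_output_price(2.50, 88, 35):.2f} per 1M")  # $6.29
```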

Here is a worked cost example for a single typical agent task that requires 50K input tokens and an extended reasoning trace:

Before (assuming median verbosity, ~100K output tokens): Grok 4.3 = $0.0625 (50K input at $1.25/1M) + $0.25 (100K output at $2.50/1M) = ~$0.31 per task

After (actual Grok 4.3 verbosity, 2.5x, ~250K output tokens): Grok 4.3 = $0.0625 (50K input) + $0.625 (250K output) = ~$0.69 per task

Compare that to GPT-5.5 on the same task with median verbosity, around $1.13 ($0.125 for 50K input at $2.50/1M plus $1.00 for 100K output at $10.00/1M), and the gap is real but smaller than the price card suggests.

The way I read it, verbosity eats roughly half of Grok 4.3’s per-task price advantage on long-form generation: the saving versus GPT-5.5 shrinks from about $0.81 to about $0.44.
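The per-task figures in this worked example can be reproduced with a small calculator. The 100K-token median output is an assumption: it is the output size that yields the ~$0.31 and ~$0.69 Grok totals. GPT-5.5 is priced from the comparison table, and the function name is illustrative.

```python
# USD per 1M tokens (input, output), from the comparison table.
PRICES_PER_M = {
    "Grok 4.3": (1.25, 2.50),
    "GPT-5.5": (2.50, 10.00),
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one task: tokens / 1M times the per-million list price."""
    in_price, out_price = PRICES_PER_M[model]
    return input_tokens / 1e6 * in_price + output_tokens / 1e6 * out_price


INPUT = 50_000
MEDIAN_OUTPUT = 100_000                 # assumed median-verbosity reasoning trace
GROK_OUTPUT = int(MEDIAN_OUTPUT * 2.5)  # Grok 4.3's ~2.5x verbosity

grok_sticker = task_cost("Grok 4.3", INPUT, MEDIAN_OUTPUT)
grok_actual = task_cost("Grok 4.3", INPUT, GROK_OUTPUT)
gpt = task_cost("GPT-5.5", INPUT, MEDIAN_OUTPUT)
print(f"Grok 4.3, median verbosity: ${grok_sticker:.4f}")
print(f"Grok 4.3, 2.5x verbosity:   ${grok_actual:.4f}")
print(f"GPT-5.5, median verbosity:  ${gpt:.4f}")

# Fraction of Grok's per-task saving vs GPT-5.5 that verbosity wipes out.
saving_lost = 1 - (gpt - grok_actual) / (gpt - grok_sticker)
print(f"saving lost to verbosity: {saving_lost:.0%}")
```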

For batch workloads xAI offers a 50% discount, and the Batch API now includes image generation at $0.01 per standard image and video generation at $0.025 per second. That is the part of the release I’d pay close attention to if I were running a media pipeline.
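A hypothetical batch job shows how those pieces might combine. Two assumptions here are mine, not xAI's: that the 50% discount applies to token pricing, and that the $0.01 image and $0.025-per-second video prices are already the Batch API rates, so they are not discounted again. The function name and job sizes are illustrative.

```python
BATCH_DISCOUNT = 0.50       # stated batch discount, assumed here to apply to token pricing
IMAGE_PRICE = 0.01          # USD per standard image, assumed to already be the batch rate
VIDEO_PRICE_PER_S = 0.025   # USD per second of video, assumed to already be the batch rate


def batch_job_cost(input_tokens: int, output_tokens: int, images: int = 0,
                   video_seconds: float = 0.0, in_price: float = 1.25,
                   out_price: float = 2.50) -> float:
    """Estimate a hypothetical Batch API job: discounted tokens plus media line items."""
    token_cost = (input_tokens * in_price + output_tokens * out_price) / 1e6
    media_cost = images * IMAGE_PRICE + video_seconds * VIDEO_PRICE_PER_S
    return token_cost * (1 - BATCH_DISCOUNT) + media_cost


# Illustrative job: 10M input tokens, 20M output tokens, 5,000 images, 10 minutes of video.
print(f"${batch_job_cost(10_000_000, 20_000_000, images=5_000, video_seconds=600):.2f}")  # $96.25
```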

Should You Switch to Grok 4.3 Right Now

Switch if you run high-volume voice products, batch creative workloads, or care about cost-per-token over peak intelligence. Stay on Opus 4.7 or GPT-5.5 if your workloads are reasoning-heavy or memory-dependent.

| Use case | Best fit | Why |
|---|---|---|
| Voice agents | Grok 4.3 | TTS at $4.20/1M chars, ~90% cheaper than ElevenLabs |
| Multi-step reasoning | Opus 4.7 or GPT-5.5 | 4- to 7-point Intelligence Index lead on hard tasks |
| Long-context document RAG | Grok 4.3 or Opus 4.7 | Both offer 1M context, but Grok wins on price |
| Persistent project memory | GPT-5.5 or Opus 4.7 | Grok 4.3 still has no persistent memory between sessions |
| Batch image/video generation | Grok 4.3 | New Batch API discount + $0.01 image pricing |

In my experience the place this lands cleanly is voice. xAI has undercut ElevenLabs and OpenAI on per-character TTS pricing by 86% to 92%, depending on the comparison.

That is not incremental. That is a “build your voice product on Grok now or eat the difference for the next year” moment for anyone who has been pricing those workloads.
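For a sense of scale, the 86% to 92% range can be turned back into implied rival prices. These are back-calculated from the percentages quoted here, not taken from ElevenLabs' or OpenAI's rate cards, so treat them as rough implied figures; the helper name is illustrative.

```python
GROK_TTS_PER_M_CHARS = 4.20  # USD per 1M characters, from the use-case table


def implied_rival_price(own_price: float, savings: float) -> float:
    """Back out a rival's price if own_price is `savings` (0-1) cheaper than it."""
    return own_price / (1 - savings)


for savings in (0.86, 0.92):
    rival = implied_rival_price(GROK_TTS_PER_M_CHARS, savings)
    print(f"{savings:.0%} cheaper implies a rival at ~${rival:.2f} per 1M chars")
```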

Where it does not work for me is anything memory-dependent. Grok 4.3 still has no persistent memory between sessions, a feature ChatGPT has had since 2024 and Anthropic added through the Mythos memory add-on earlier this year.

At $300 per month for SuperGrok Heavy, that absence is hard to defend. For long-running agents and project-style use, the lack of memory is a real handicap, not a footnote.

What Comes Next for xAI

xAI is reportedly training a 1 trillion parameter Grok variant, so 4.3 is likely the price-tier release, not the frontier release.

Independent reports put Grok 4.3 at roughly 0.5T parameters, with a 1T checkpoint nearing completion. If that lands as a “Grok 5” or “Grok 4.3 Heavy” later in 2026, it would be the real head-to-head with Opus 4.7 and GPT-5.5. The 4.3 release reads to me as the budget tier shipping early, and the frontier model arriving later.

The other shoe to drop is the lawsuit. With Musk on the stand acknowledging earlier Grok models were partially trained on OpenAI output, the timing of an extremely quiet 4.3 release on the same day starts looking less like a coincidence. For broader context on the AI lab landscape right now, the Anthropic OpenAI valuation story and the Cursor coding race piece cover the surrounding arguments.

Three things I’d watch over the next 30 days, ranked by how much they change the calculus:

- Whether the 1T checkpoint ships at all, since that is the only path to a real frontier-model Grok.

- Whether SuperGrok Heavy picks up enough developer mindshare to keep API pricing this aggressive.

- Whether the verbosity issue gets quietly tuned down, which would close the gap between sticker price and real per-task spend.

Any one of those moves changes the migration math. For the older Grok baseline, the Grok 4.20 comparison piece sets the reference.

For now the honest read is this: Grok 4.3 is the cheapest credible frontier-adjacent model on the market, and that is a real product. It is not the smartest, and Elon’s “Opus level” framing is not what shipped. Both of those things can be true, and both matter for what you build with it.

First time on the site, that article had a strong Opus or Sonnet vibe. I think it also tripped itself up on the cost to run section. Artificial Analysis showed the opposite, that the cost reduction more than offsets its loss in token efficiency.