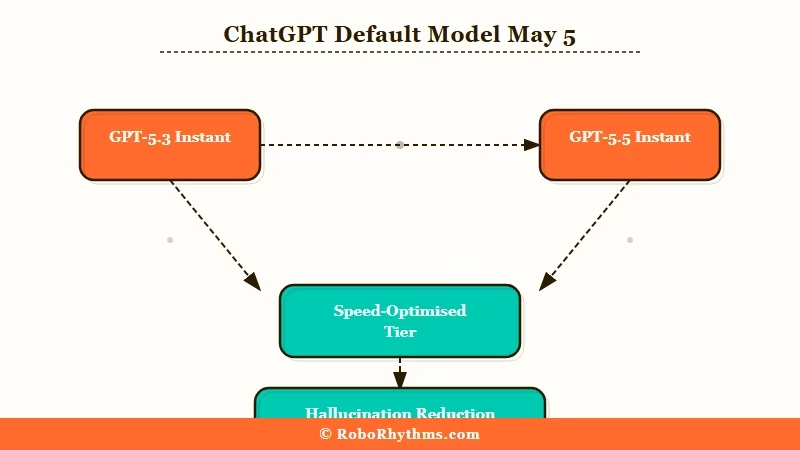

What Happened: OpenAI rolled out GPT-5.5 Instant on May 5, 2026 as the new default ChatGPT model, replacing GPT-5.3 Instant. The pitch is 52.5% fewer hallucinations on high-stakes prompts, 37.3% fewer factual errors on flagged conversations, and a tighter response style with fewer emojis. The catch is a new memory layer that can search across past conversations, files, and Gmail.

If you opened ChatGPT yesterday or today and felt like the responses were a little colder, a little shorter, and weirdly less prone to dropping random emojis, you were not imagining it.

OpenAI swapped out the default model on May 5, 2026 and pushed GPT-5.5 Instant to everyone, replacing GPT-5.3 Instant in a phased rollout that landed exactly two months after the previous default switch.

The headline numbers OpenAI is pushing are real, though they come from its own internal evaluations. Those evaluations show GPT-5.5 Instant produced 52.5% fewer hallucinated claims than its predecessor on high-stakes prompts in medicine, law, and finance, and 37.3% fewer inaccurate claims on conversations that users had flagged for factual errors.

Math benchmarks moved too: AIME 2025 went from 65.4 to 81.2, and MMMU-Pro multimodal reasoning climbed from 69.2 to 76.
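To put those benchmark moves in perspective, here is a minimal Python sketch that turns the before-and-after scores quoted above into point gains and relative gains. The scores are the ones cited in this article; the function name and the output layout are my own, for illustration only.

```python
# Scores as quoted in the article: (before, after).
BENCHMARKS = {
    "AIME 2025": (65.4, 81.2),
    "MMMU-Pro": (69.2, 76.0),
}


def benchmark_gain(before: float, after: float) -> tuple[float, float]:
    """Return (point gain, relative gain in percent), each rounded to 1 decimal."""
    points = after - before
    relative = points / before * 100
    return round(points, 1), round(relative, 1)


for name, (before, after) in BENCHMARKS.items():
    points, relative = benchmark_gain(before, after)
    print(f"{name}: +{points} points ({relative}% relative gain)")
```

The AIME jump is the larger move in both absolute and relative terms, which is worth keeping in mind when the two numbers are quoted side by side.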

What I find more interesting is what is not in the press release. The new default model can now reach into your Gmail, your past conversations, and your uploaded files to personalise its answers, and the older personality you may have liked is being engineered out. Both trade-offs deserve a closer look before you decide whether the upgrade is good news.

What Actually Happened on May 5

GPT-5.5 Instant is the new default ChatGPT model as of May 5, 2026, replacing GPT-5.3 Instant for free, Plus, and Pro users.

OpenAI announced the rollout with a blog post and a phased launch starting that day. Users on paid tiers will keep access to GPT-5.3 Instant for three months before it is fully retired.

Two important framing notes from the announcement. First, the model is positioned squarely as the fast everyday driver, not the heavyweight. OpenAI launched GPT-5.5 Thinking and Pro the previous month for what it calls “real work”, which means coding, deep research, and long reasoning chains.

Instant is what most casual ChatGPT users interact with by default, and that is the layer that just changed under them.

Second, according to OpenAI, the model maintains the same low latency as its predecessor. Speed should feel identical, while the underlying responses are tuned differently. The most visible behavioural change for everyday users is what OpenAI calls a reduction in “gratuitous emojis” and “verbosity”, which the company frames as an intelligence upgrade rather than a stylistic one.

What is GPT-5.5 Instant: OpenAI’s fast-response default ChatGPT model, optimised for low latency and conversational use rather than long-chain reasoning, which is handled by the separate GPT-5.5 Thinking and Pro tiers.

The announcement was covered live by TechCrunch, Axios, and 9to5Mac, all of whom confirmed the same numbers and timeline. Nothing in the rollout requires you to opt in. If you use ChatGPT, you are already using the new model.

Why Is This Update a Bigger Deal Than It Looks

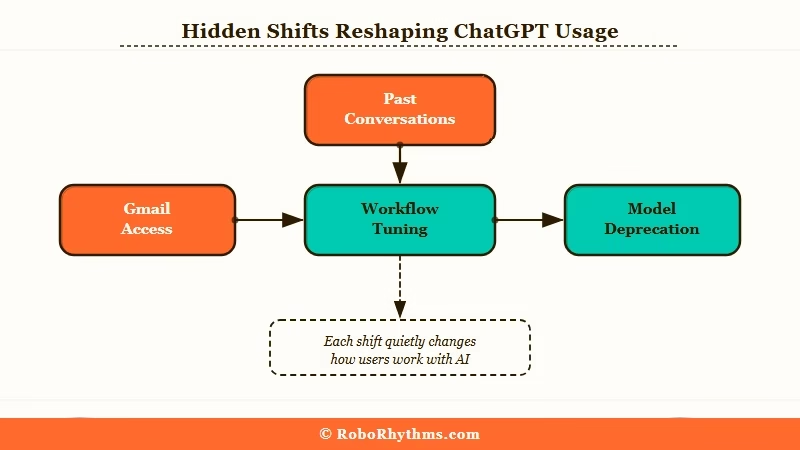

The hidden story is that OpenAI extended ChatGPT’s memory into your Gmail and files, and accelerated the deprecation cycle for older models to roughly three months.

Neither of those changes shows up in the headline benchmarks, and both have real consequences for how you use the product.

The personalisation expansion is the part most users will not notice until it surprises them. Plus and Pro users on the web now get a search tool that can refer back to past conversations, uploaded files, and Gmail to give more personalised answers. Mobile is coming “soon”, and free, Go, Business, and Enterprise users will get it in the “coming weeks”.

The way I read this is straightforward. The moment ChatGPT starts pulling in last week’s email thread to answer a casual question is the moment a lot of people quietly turn the feature off.

The model deprecation cadence is the second story. Paid users only have three months of access to GPT-5.3 Instant before it is gone. That is a tighter window than Anthropic’s typical legacy-model timelines.

It forces anyone who has tuned a workflow, a custom GPT, or a prompt library against a specific model to rebuild every quarter. For hobbyists this is mild annoyance. For anyone running production work on the API, it is a forced upgrade treadmill.

There is also the personality angle that the press release studiously avoids. OpenAI explicitly reduced what it calls “gratuitous emojis” and engineered for tighter, more to-the-point responses. The official quote is that the model keeps “the warmth and personality that makes ChatGPT enjoyable to use”, but the design direction is plainly toward less expressive output.

The 2026 retirement of GPT-4o, which prompted petitions from users who described the model as their “best friend”, is the recent precedent, and it is the style OpenAI is choosing to walk away from. The way I read the trade, precision is up and charm is down.

What This Means for You

Most ChatGPT users will see a faster, more accurate model with a quieter personality, and Plus and Pro users will need to decide how comfortable they are with ChatGPT reading their Gmail. Here is how the change lands by user type.

If you are on the free tier, the upgrade is mostly upside. You get the accuracy boost and the response cleanup at no cost. The Gmail and file personalisation feature is gated to Plus and Pro on the web for now, so you will not have to think about it yet.

The only thing to know is that any custom GPTs or prompts you built around GPT-5.3 Instant’s quirks (longer responses, more conversational filler, more emoji use) may now produce different output. Test your saved prompts before assuming they still work the same.

A simple before-and-after to feel the change yourself. Send the same prompt under both models if you still have access:

Before: Ask GPT-5.3 Instant “Plan me a healthy weeknight dinner for two.” The response usually opened with an emoji, listed options with friendly subheaders, and ended with a follow-up question.

After: The same prompt to GPT-5.5 Instant now returns a tighter list with fewer emoji subheaders, and often skips the closing question entirely.

If you are on Plus or Pro, you get the personalisation feature first. The quick checklist before you let ChatGPT into your inbox is straightforward.

- Open ChatGPT settings and find the new memory and personalisation panel.

- Decide whether to grant Gmail and file access. The default tends to be off until you connect the integration, but verify before assuming.

- Review the new “memory sources” view. ChatGPT now shows where any given answer was generated from, and you can delete or correct individual memory entries.

- Note that memory sources stay hidden when you share a chat with someone else, so a shared conversation will not leak which past chats or files were referenced.

- If you do not want the integration at all, leave it disconnected. Nothing in the rollout forces it on you.

If you are an API developer, the new model is available as chat-latest. GPT-5.3 Instant remains available for three months, which gives you a reasonable window to A/B test outputs against your existing pipelines.

The hallucination drop is real and material if you are building anything in regulated domains, but the response shape is also different. Anything that depends on a specific verbosity level, emoji frequency, or formatting habit will need to be revalidated.
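One way to revalidate response shape is to measure it directly. The sketch below is a rough Python check, not an official tool: it counts words, counts emoji with an approximate Unicode-range pattern, and notes whether a response ends on a question. The two sample strings are invented for illustration and are not real model output; in practice you would feed in responses captured from your own pipeline.

```python
import re
from dataclasses import dataclass

# Approximate emoji matcher: covers the main pictograph blocks, not every emoji.
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")


@dataclass
class ResponseShape:
    words: int
    emojis: int
    ends_with_question: bool


def measure_shape(text: str) -> ResponseShape:
    """Summarise the surface shape of a single response."""
    stripped = text.strip()
    return ResponseShape(
        words=len(stripped.split()),
        emojis=len(EMOJI_RE.findall(stripped)),
        ends_with_question=stripped.endswith("?"),
    )


def shape_drift(old: str, new: str) -> dict:
    """Compare two responses to the same prompt (new minus old)."""
    a, b = measure_shape(old), measure_shape(new)
    return {
        "word_delta": b.words - a.words,
        "emoji_delta": b.emojis - a.emojis,
        "closing_question_dropped": a.ends_with_question
        and not b.ends_with_question,
    }


# Invented sample responses, for illustration only.
OLD_SAMPLE = (
    "🥗 Great question! Here are two ideas: 🐟 salmon bowls "
    "or 🍝 veggie pasta. Want a shopping list?"
)
NEW_SAMPLE = "Two options: salmon bowls or veggie pasta."

print(shape_drift(OLD_SAMPLE, NEW_SAMPLE))
```

Running this over your top prompts gives you numbers to set thresholds against, rather than a general impression that the output changed.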

The accuracy improvements are the part I would not undersell. A 52.5% drop in hallucinated claims on medical, legal, and financial prompts matters for the users who have been burned by confident wrong answers from earlier models. If that is your use case, GPT-5.5 Instant is a better tool, even with the personality trade-off baked in.

| Symptom | Likely cause | Fix |
|---|---|---|
| Responses feel shorter and colder | New tighter response style, deliberate design choice | Add a one-line personality preamble in custom instructions |
| Custom GPT outputs changed shape | GPT-5.5 Instant interprets prompts differently | Re-test your top 5 prompts and tune wording |
| ChatGPT references old emails unexpectedly | Gmail integration was enabled, often during onboarding | Open settings, disconnect Gmail under personalisation |
| API responses regressed in your app | Default API model now chat-latest (GPT-5.5) | Pin to GPT-5.3 explicitly for the next 3 months |
| Math or factual quality improved noticeably | Hallucination rate dropped 52.5% on high-stakes prompts | Lean into it; revisit any disclaimers you added for older models |

How Does GPT-5.5 Instant Compare to Thinking and Pro

GPT-5.5 Instant is the speed-optimised default; GPT-5.5 Thinking and Pro are the heavier reasoning models OpenAI launched the month before.

Most public coverage treats the May 5 update as “the newest model”, but that is not quite right. Instant is the everyday driver, and the heavier siblings sit above it for harder work.

| Model | Best for | Who gets it | Latency |
|---|---|---|---|
| GPT-5.5 Instant | Everyday chat, search, casual questions | All ChatGPT users, default | Low, same as 5.3 Instant |
| GPT-5.5 Thinking | Complex reasoning, multi-step problems | Plus, Pro | Higher, deliberate |
| GPT-5.5 Pro | Coding, deep research, long workflows | Pro only | Highest, slow but thorough |
| GPT-5.3 Instant (legacy) | Backwards compatibility for prompts tuned to old style | Paid users, 3-month window | Low |

The way I read the lineup, OpenAI has split the value equation in two. Instant carries the high-volume conversational load with a tighter personality and better factual accuracy. Thinking and Pro absorb the genuine intellectual work for a smaller slice of users.

The April release of Thinking and Pro plus the May release of Instant means OpenAI shipped a complete refresh of its consumer stack in five weeks.

What I would watch is whether the API “chat-latest” tag continues to shift underneath you. If you are building production work, pinning to a specific model snapshot rather than chat-latest is the safer move now that the deprecation window is three months and the model behaviour is changing this aggressively.
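A lightweight guard in your own configuration code can enforce that habit. This is a minimal sketch that makes no API calls: "chat-latest" is the alias named above, while the pinned identifier "example-model-2026-05-05" and the resolve_model helper are hypothetical placeholders, so substitute whatever snapshot names the provider's model list actually shows.

```python
# Floating aliases that may change behaviour without notice.
# "chat-latest" is the alias named in the article; extend this set as needed.
FLOATING_ALIASES = {"chat-latest"}


def resolve_model(configured: str, environment: str) -> str:
    """Return the configured model name, refusing floating aliases in production."""
    if environment == "production" and configured in FLOATING_ALIASES:
        raise ValueError(
            f"'{configured}' is a floating alias; pin a dated snapshot for production"
        )
    return configured


# Hypothetical snapshot name, used only to show the happy path.
print(resolve_model("example-model-2026-05-05", "production"))
# Floating aliases remain fine for experiments outside production.
print(resolve_model("chat-latest", "staging"))
```

Failing at startup is a deliberate choice here: a loud configuration error is cheaper than discovering a silent behaviour change in production output.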

The continuing GPT-5 backlash over earlier transitions tells you how users react when they cannot pin a model to a workflow.

What Comes Next

Expect the Gmail and file personalisation to expand to free, Go, Business, and Enterprise users in the coming weeks, with mobile rollouts trailing the web release.

OpenAI was explicit about both timelines in the launch notes. The bigger open question is what the next default switch looks like.

If the two-month cadence holds, GPT-5.6 or GPT-5.7 Instant could land in early July 2026. The pattern of releasing Thinking and Pro a month before the new Instant default also looks deliberate. From what I have seen of the broader ChatGPT roadmap, each refresh cycle has gotten faster, and the heavy-tier launches keep pulling forward.
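The date arithmetic behind that guess is simple enough to check. This sketch uses only Python's standard library; the add_months helper is my own, and treating the three-month GPT-5.3 Instant window as exactly three calendar months from May 5 is an assumption, since no exact retirement date is given here.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping the day to the target month's length."""
    total = start.month - 1 + months
    year = start.year + total // 12
    month = total % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


LAUNCH = date(2026, 5, 5)  # rollout date stated in the article

next_default_guess = add_months(LAUNCH, 2)   # if the two-month cadence holds
legacy_window_end = add_months(LAUNCH, 3)    # assumed end of the 5.3 Instant window

print("Possible next default switch:", next_default_guess.isoformat())
print("Assumed GPT-5.3 Instant cutoff:", legacy_window_end.isoformat())
```

If both estimates hold, the next default could arrive about a month before the legacy window closes, which leaves little slack for anyone still validating against the old model.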

The personality angle is the one I would watch most closely. OpenAI has now publicly committed to engineering away “gratuitous” emoji use and verbose responses. The fact that this is being marketed as an upgrade rather than a quiet adjustment means the company is signalling its long-term direction.

If you preferred the warmer, chattier style of the GPT-4o era, the next two years of ChatGPT updates are likely to keep moving in the opposite direction.

What is harder to predict is how the Gmail integration plays out at scale. Per Pew Research on data privacy, about 81% of Americans say the risks of how companies use their data outweigh the benefits.

That is the audience OpenAI is now asking to plug their inbox into a model that just changed under them. The take-up rate over the next quarter will tell you a lot about whether ChatGPT becomes a personal information hub or stays a stateless chat product for most users.

The bigger question for users is which version of ChatGPT they were attached to. The shift toward more conservative outputs has been a running theme since the GPT-5 transition began, and GPT-5.5 Instant continues that direction.

If you want to feel out the difference quickly, ask the same question you asked ChatGPT a week ago. The response shape will tell you more than any benchmark.