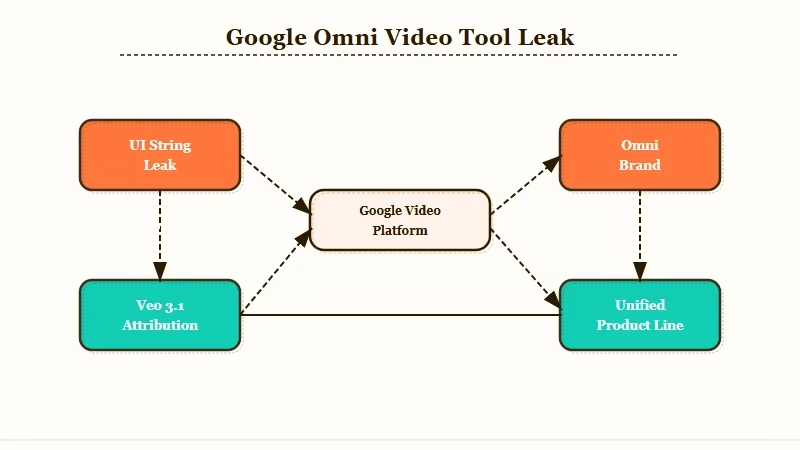

What Happened: Google is testing a new Gemini video-generation tool called Omni, surfaced through UI string leaks ahead of I/O 2026 on May 19-20. The leak suggests Google is bundling video and image generation into one consumer-facing model, a structurally different bet from OpenAI’s standalone Sora. I think this is the most strategically loaded leak Google has had this year.

Google Gemini Omni surfaced through UI string leaks today, sixteen days before Google I/O 2026 opens at Shoreline Amphitheatre on May 19.

A leaked screenshot of the Gemini video generation interface shows a new product line with the tagline “Start with an idea or try a template. Powered by Omni,” and the placement of that wordmark inside the consumer UI is what tells me this is bigger than a code name swap.

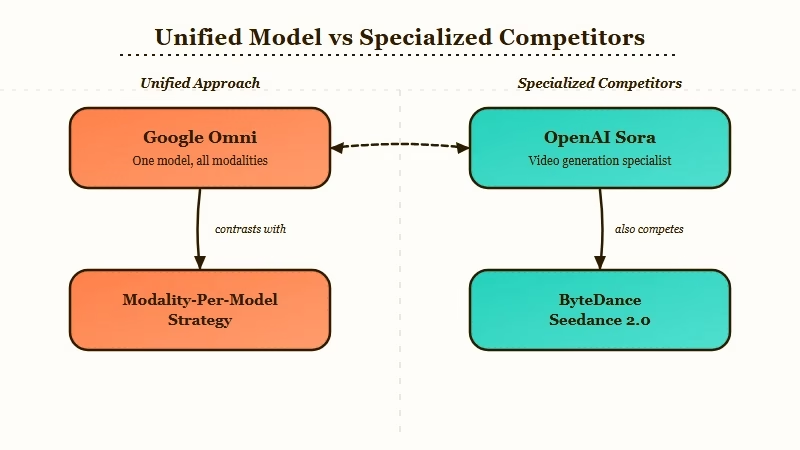

If the leak holds up at I/O, Google is about to ship the first top-tier omni-model that handles video and images inside one system. That is a different shape of product from OpenAI’s Sora, and a different shape from Veo 3.1, the model currently powering Gemini’s video tool.

What follows is what the leak really contains, why I think the strategy behind it matters more than the screenshot, and what the timing means if you build with Gemini, post on YouTube, or have been watching the AI video space tighten up around ByteDance and OpenAI.

What Actually Happened

Google leaked a Gemini video tool called Omni through UI strings ahead of I/O 2026, with the consumer-facing wordmark appearing inside the video generation interface itself.

The original leak was published by TestingCatalog and amplified across the AI community earlier today. The screenshot shows the Gemini video generator with the bold wording “Powered by Omni,” replacing the previous Veo 3.1 attribution that the same UI carried in late April.

Google currently runs a split-model strategy on the consumer side. Veo 3.1 powers video, while the Nano Banana Pro and Nano Banana 2 lines power image generation, with Nano Banana 2 sitting on top of a Gemini 3.1 Flash Image checkpoint.

Omni breaks that split. The leak frames the new tool as a single brand for both modalities, which is a meaningful shift away from the modality-per-model layout that Google and OpenAI have both used through 2025 and 2026.

The leak does not tell us prices, token costs, Vertex AI availability, output durations, or whether Omni is a brand-new model or a wrapper that sits on top of Veo 4 and the Nano Banana line. The UI string is the only confirmed artifact. Everything else is being inferred from the placement, the absence of Veo branding, and Google’s pre-I/O communications cadence over the last three weeks.

For context on Google’s current video stack, the Veo 3 marketing video walkthrough covers what the existing tool can already do and where the quality ceiling sits today.

Why This Is a Bigger Deal Than It Sounds

The Omni leak is significant because Google appears to be replacing its modality-per-model strategy with a single consumer brand, which closes the structural gap with OpenAI on consumer naming and reopens the gap on capability surface.

OpenAI has been winning the consumer naming war for two years. ChatGPT became the catch-all for text, DALL-E sat under it for image, and Sora sat next to it for video.

Each one had a clean noun a non-technical user could repeat to a friend, and each one had its own front door. Google’s equivalent lineup, as actually shipped, was Gemini for chat, Imagen and Nano Banana for image, and Veo for video.

That is four names spread across three jobs, and you can feel the friction every time you try to explain where Google’s video generator lives.

Omni collapses that. If the leak is what it looks like, Google is going to walk on stage at I/O with one consumer-facing video and image product instead of two, and it is going to point Android, Workspace, and YouTube users at the same noun.

The way I see it, that is the first time Google has had a cleaner naming story than OpenAI in the generative-media category since Sora launched.

The capability angle is the second half. ByteDance’s Seedance 2.0 currently tops the public AI video benchmarks, and Kling, Hailuo, Runway, Luma, and Pika are all running ahead of Veo 3.1 on at least one dimension of motion realism or duration.

A unified Omni model lets Google trade some specialization in video for cross-modal reasoning, the thing Google’s TPU stack has been optimized for from the start. That is the bet I read in this leak.

There is a specific risk in that bet. The “one-model-to-rule-them-all” approach makes specialized video models like Sora look obsolete only if Omni’s video output is good enough.

If it is not, Google ships a unified brand running on a model that is still a step behind Seedance and Sora, and the brand consolidation costs more than it earns. I think this is why the LMSYS Arena Gemini 3.2 Flash sightings matter so much: the Flash variant tells us the underlying model line is improving fast enough to support a unified product.

What This Means for You

If you generate video for content, build on the Gemini API, or run a YouTube channel, the Omni leak changes your three-month planning window because Google’s pricing, distribution, and feature surface are about to move at I/O.

For content creators using AI video right now, the most useful thing the leak tells me is that the friction of switching tools at the end of May is going to be lower than at any time this year. Omni inside the consumer Gemini app means you can stay in one tool for the storyboard, the script, the still images, and the video output.

That kills the export-import-rerender chain a lot of people are running today. Even if Omni’s quality lands a hair below Sora at launch, the speed of iteration in one app is a real productivity gain.

A concrete way to think about how Omni would change a single workflow:

Before: Storyboard in Midjourney, script in Claude, still frames in DALL-E or Imagen, then export every asset and re-import them into Sora or Runway to render the final clips. Each tool boundary is its own friction tax.

After: Drop one prompt into Omni, watch it produce the storyboard frames, the still hero, and the 30-second clip in the same panel. One brand, one set of credits, one consistency layer for character and style.

For developers building on the Gemini API, the gap is the API access tier. Right now the consumer Gemini app and the Vertex AI side of the house move on different release cadences, and the leak gives no information on how Omni will be exposed to Vertex AI, how its tokens will price, or whether developer access lands on day one or trails by a month.

I would not lock in any production video work to Veo 3.1 right now without budgeting the chance of an API rename or a deprecation timeline.
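One way to budget for a rename is to keep the vendor's model identifier out of your call sites and resolve it through a small alias table. The sketch below is only an illustration of that idea, not Google's API: `ModelBinding`, `REGISTRY`, `resolve_model`, and the identifier strings are all hypothetical names, and the leak says nothing about what Omni will be called on the developer side.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelBinding:
    """Maps a stable internal alias to a vendor-side model identifier."""
    model_id: str                    # placeholder string, not a real API model name
    successor: Optional[str] = None  # alias to fall through to once this one is retired
    retired: bool = False


# Hypothetical registry: both identifiers are placeholders for illustration.
REGISTRY = {
    "video-default": ModelBinding("veo-3.1-placeholder", successor="video-next"),
    "video-next": ModelBinding("omni-placeholder"),
}


def resolve_model(alias: str, registry: dict = REGISTRY) -> str:
    """Follow successor links until a non-retired binding is found."""
    seen = set()
    while True:
        if alias in seen:
            raise ValueError(f"cycle in model registry at {alias!r}")
        seen.add(alias)
        binding = registry[alias]
        if not binding.retired:
            return binding.model_id
        if binding.successor is None:
            raise LookupError(f"{alias!r} is retired with no successor")
        alias = binding.successor
```

With this in place, production code asks for `resolve_model("video-default")`, and a rename or deprecation becomes a one-line change to the registry instead of a search through every call site.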

For YouTube creators, the Android plus YouTube plus Google Workspace integration story is the part that should pull your attention. OpenAI does not have a native mobile distribution channel for Sora that competes with Google’s footprint.

If Omni ships with Shorts and YouTube Studio integration on day one, the gap between AI video generation and the platform you publish on closes by a year. That is not the kind of feature OpenAI or Anthropic can match on an I/O timeline.

The unanswered questions I would track between now and May 19 are the SynthID watermarking story for deepfake prevention, the developer API exposure path through Vertex AI, and whether Omni includes any concession on training data, given how much of YouTube’s creator catalog Google has access to.

Statista’s generative AI market reporting puts the addressable market for AI video tools above 12 billion USD by 2027, so the answer to the training data question is going to land on a growing pile of money.

If you want background on where Google’s Veo line stands against the competition right now, the Wan 2.2 vs Google Veo 3 breakdown walks through where each model wins, and the Sora 2 social video app review covers what OpenAI shipped on the consumer side last quarter.

| Question | What we know now | What I expect at I/O |
|---|---|---|
| Is Omni a new model or a Veo wrapper? | Unknown, only UI string leaked | New unified model with shared backbone |
| Pricing on the consumer Gemini app | Unknown, current Veo 3.1 sits inside Gemini Advanced | Bundled with existing Gemini Advanced tiers |
| Vertex AI developer access | No information in the leak | Trailing release of two to four weeks |
| Output duration and resolution | Unknown | 30s to 60s clips at 1080p, parity with Veo 3.1 |
| SynthID watermarking | Not mentioned in the leak | Default-on for all Omni outputs |
| Distribution surface | Gemini consumer app confirmed | YouTube Shorts, Workspace, Android day one |

What Comes Next

The Omni leak compresses the I/O 2026 keynote agenda, because Google now has to either confirm and ship the model on May 19 or live with a leaked product that competitors get sixteen days to position against.

I have watched Google leak a video tool ahead of I/O before. The Toucan code name surfaced ahead of I/O 2025 and the product that shipped was Veo 2 under a different name.

The Omni leak feels different to me because the wordmark is inside the consumer UI string, not in a private alpha tester’s screenshot or a server-side log file. That suggests Google was within days of a public rollout, and the leak just shortened the runway.

The realistic outcome is that Google announces Omni on the I/O 2026 main stage, ties it to Gemini 3.2 or 3.5 on the model side, and pushes Workspace and Android distribution alongside it.

The risk case, the one I would not bet on but would not dismiss, is that Google delays the announcement to coincide with the next earnings cycle and uses the I/O slot for an upgraded Veo 4 instead. Either way, the leak just told us Google has a unified video and image brand it is willing to ship.

The competitive reaction from OpenAI and Anthropic is the other thing to watch. Anthropic is reportedly red-teaming a model called Jupiter-v1-p ahead of its May 6 developer conference, which lands thirteen days before Google I/O. If Anthropic has anything in the multimodal video space, May 6 is when we will know.

For now, the move I would make is simple, and it breaks down into three steps:

- Pause any new AI video commitments that lock you to Veo 3.1 pricing or branding for the next four weeks.

- Pre-sketch the prompts and storyboards you would want to test on Omni so you can run them within an hour of the I/O keynote on May 19.

- Decide in advance whether you would migrate to Omni from Sora, Runway, or Kling on a quality threshold or on a price threshold. The two answers lead to very different launch-week behavior.
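The third step can be written down as a rule before launch week so the decision is not made in the moment. This is a rough sketch under stated assumptions: the `Candidate` fields, the default thresholds, and every number in the usage example are made up for illustration, and the quality score is whatever you get from your own side-by-side prompt tests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    quality: float        # your own 0-1 score from side-by-side prompt tests
    cost_per_clip: float  # your own estimate, in whatever currency you budget in


def should_migrate(current: Candidate, new: Candidate, rule: str,
                   min_quality_gain: float = 0.05,
                   min_cost_saving: float = 0.20) -> bool:
    """Return True if `new` clears the threshold you committed to in advance.

    rule="quality": migrate only if the quality score rises by min_quality_gain.
    rule="price":   migrate only if cost per clip falls by the fraction min_cost_saving.
    """
    if rule == "quality":
        return new.quality - current.quality >= min_quality_gain
    if rule == "price":
        if current.cost_per_clip <= 0:
            raise ValueError("current cost per clip must be positive")
        saving = (current.cost_per_clip - new.cost_per_clip) / current.cost_per_clip
        return saving >= min_cost_saving
    raise ValueError(f"unknown rule: {rule!r}")


# Illustrative numbers only: slightly better quality, much cheaper.
current_tool = Candidate("current-tool", quality=0.80, cost_per_clip=1.00)
omni_guess = Candidate("omni", quality=0.82, cost_per_clip=0.60)

migrate_on_quality = should_migrate(current_tool, omni_guess, rule="quality")
migrate_on_price = should_migrate(current_tool, omni_guess, rule="price")
```

With these invented inputs the quality rule says stay and the price rule says move, which is the divergence in launch-week behavior described above.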

The cost of two weeks of patience is small, the upside of catching Omni at launch with first-mover usage data is large, and the model line you are about to be priced against is shifting under your feet.