TL;DR: The strongest free-tier AI agent stack in 2026 is Gemini Flash for the heavy reasoning loop, Groq or Cerebras for any latency-critical hop, OpenRouter for fallback routing, and Mistral or Cohere for embedding and rerank. Real practical ceiling is roughly 5,000 requests per day per provider, and your real bottleneck is prompt portability, not the rate limits.

A real working agent on zero spend is finally possible in 2026, and the question stopped being “is there a free tier” and became “which free tiers stack into a coherent runtime.” That is the question I wanted to answer with an actual tool list, real limits, and one routing pattern that holds up.

The headline numbers got large enough this year that prototyping costs nothing, and small production workloads can hide inside the free quotas if you route carefully. Gemini 2.5 Flash gives you 1,500 daily requests on a 1M-token context window for $0, Groq lets you run Llama 3.3 70B at 300+ tokens per second for $0, and Cerebras pushes 2,000 tokens per second on Llama 3.3 70B with 1M free tokens daily.

What the existing roundups miss is the operational layer, the part where you decide which provider handles which step and what happens when one of them throws a 429. That gap is the actual reason most free-tier agent builds die before they ship.

This walkthrough is opinionated: a recommended stack, a routing pattern, the exact rate limits I would budget against, and the three failure modes I would design around from day one.

Why a Free Tier AI Agent Stack Even Works in 2026

A free tier AI agent stack works because frontier-class models and 1M-token context windows are now available across at least four providers at zero cost, with daily quotas large enough to run real workloads.

The math has shifted: a properly routed agent can stay inside free limits for 4,000-5,000 requests per day before any single provider taps out.

The thing nobody talks about is how recent this is. In early 2025 the only credible free tier was Gemini 1.5 Flash at 15 requests per minute. By May 2026, Gemini Flash sits at 1,500 daily requests, Groq adds 30 RPM on Llama 3.3 70B with no card required, Cerebras adds 1M free tokens per day at unreal speed, and Mistral throws in 1 billion tokens per month on the Experiment tier.

The way I see it, the inflection point was when Cerebras and Groq made fast Llama 3.3 70B free at the same time Google made Gemini Flash effectively unlimited for prototyping. That meant your reasoning loop, your fast hops, and your wide-context analysis no longer needed the same model or the same provider. You could route them.

Stacking is the lever that turns five mediocre free tiers into one production-ish stack. The trick is that the limits do not stack additively in any meaningful sense if you treat each provider as a primary, only if you route by capability and use the others as fallbacks.

The Recommended 2026 Free Tier Agent Stack

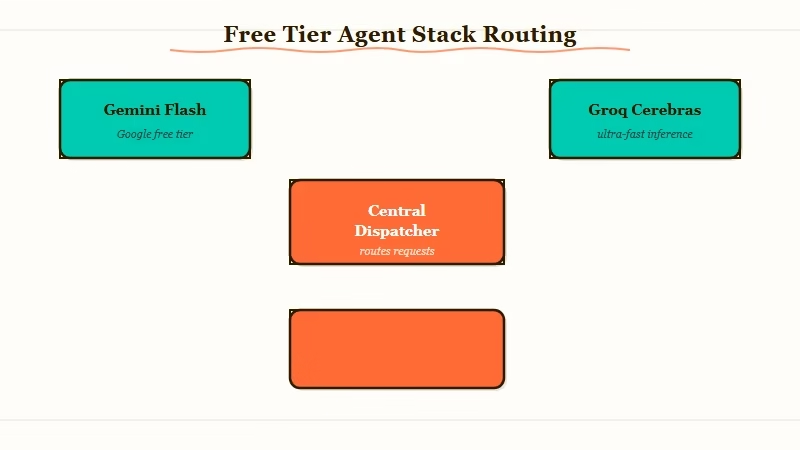

The strongest 2026 free-tier stack uses Gemini Flash as the primary reasoning model, Groq or Cerebras as the latency-critical hop, OpenRouter as the fallback gateway, and Mistral or Cohere for embeddings and rerank.

Each provider is doing one job it is uniquely good at, not competing for the same job.

Here is the role split with exact free-tier ceilings.

| Role in the stack | Provider | Free-tier ceiling | Why pick it for this role |

|---|---|---|---|

| Main reasoning loop | Gemini 2.5 Flash | 15 RPM, 1,500 RPD, 1M-token context, multimodal in | Biggest daily quota, biggest context window, no card |

| Latency-critical step | Groq (Llama 3.3 70B) or Cerebras | Groq 30 RPM, 1,000 RPD, 6K TPM; Cerebras 30 RPM, 1M tokens/day | 300 to 2,000 tokens per second, the fastest free path |

| Fallback gateway | OpenRouter (free models with :free suffix) | 20 RPM, 50 RPD ($0 balance) or 1,000 RPD ($10 balance) | Routes to 30+ community-funded free variants, one API surface |

| Embeddings + rerank | Cohere (Embed 4, Rerank 3.5) | 20 RPM, 1,000 requests per month | Best-in-class rerank, no card, non-commercial only |

| Long-context analysis | Mistral (Experiment tier) | 2 RPM, 500K TPM, 1B tokens/month | Largest monthly token budget, EU-hosted, GDPR-friendly |

| Open-source variety | Hugging Face Serverless Inference | Per-model rate limits, 30+ second cold starts | 300+ models under 10B params for experimentation only |

| One-time credit boost | xAI Grok | $25 signup credit; $150/mo via opt-in data sharing after $5 spend | Useful for spike capacity, not a steady-state primary |

The pieces I would NOT lean on: OpenAI does not offer an indefinite free API tier, Anthropic only ships a one-time $5 trial credit, and Together AI requires a $5 credit purchase to start. They all have a place in a paid stack; none of them anchor a true zero-spend build.

For a sense of what the broader category looks like once you start paying, the Anthropic pricing breakdown covers the doubled Claude Code limits, the multi-agent distributed pattern walkthrough covers orchestration once you outgrow the free tier, and the agent production infrastructure patterns piece covers the idempotency and state work you should be doing whether free or paid.

How To Wire the Stack Into a Real Working Agent

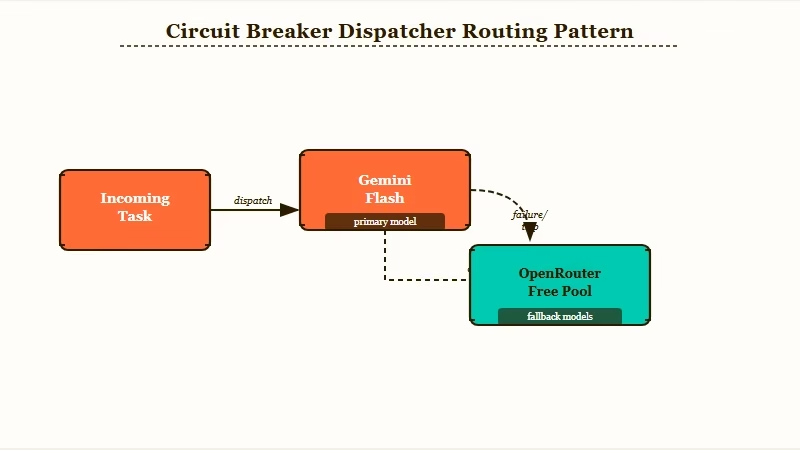

The routing pattern is: a small dispatcher decides which provider to call based on task type, with a circuit breaker that fails over when any provider returns a 429.

Build the dispatcher first, then plug in providers behind it. Without it, every model swap means rewriting prompts.

Here is the sequence I would actually walk through to set this up, in order:

- Sign up for the four no-card providers in one sitting. Gemini AI Studio, Groq, Mistral La Plateforme (Experiment tier), and OpenRouter. Each gives you an API key in under 5 minutes. Store all four keys in a single

.envfile and never commit it. - Build a thin dispatcher layer in front of the keys. Map task types (“reasoning”, “fast_summary”, “embed”, “rerank”) to providers. Every call to your agent goes through this dispatcher, never directly to a provider SDK. This is the single most important architectural decision in the whole stack, because it isolates your prompts from the underlying model.

- Add a circuit breaker on 429s and 503s. When Groq returns a rate limit, the dispatcher should fail over to OpenRouter’s Llama 3.3 70B free route for the same task type. When Gemini hits its daily cap, fail over to Mistral. The breaker should auto-close after a 60-second cooldown.

- Use Cohere only for the embed and rerank passes. The 1,000 monthly request cap kills it as a general-purpose model, but for RAG it is more than enough if you batch embeddings at index time and only rerank top-50 results at query time.

- Cap your own daily usage at 80 percent of each provider’s quota. If Gemini Flash gives you 1,500 RPD, treat 1,200 as your real ceiling and route the rest to OpenRouter or Groq. Provider-side throttling is harsher than the documented numbers; the soft limit is the one that protects you.

Here is what the dispatcher logic looks like in practice on a single agent task:

Before (single-provider, brittle): Your agent calls Gemini Flash for everything. Around request 1,400 of the day, Google starts returning 429s. The agent throws errors at users until midnight when the quota resets. You paid nothing, but you also shipped nothing usable.

After (dispatcher, routed): The same agent calls the dispatcher with task type “reasoning”. Dispatcher checks if Gemini Flash is within budget today. If yes, route there. If no, route to OpenRouter’s

meta-llama/llama-3.3-70b-instruct:freewith the same prompt. The agent never sees a 429; it just sees slightly slower responses for the last 10 percent of the day’s traffic.

You can hand-roll this dispatcher in Python in about 80 lines, or use n8n’s AI agent node which handles provider routing inside a no-code workflow if you would rather not own the failover code yourself.

What Breaks First and How To Plan Around It

The three failure modes that kill free-tier agent builds are prompt portability across model families, rate-limit divergence, and free-tier privacy terms. Every other failure is downstream of one of those three.

Prompt portability. Gemini Flash, Llama 3.3 70B, and Mistral Large do NOT respond to the same system prompt the same way. A tool-calling prompt that works on Gemini will misfire on Llama. The fix is a per-provider prompt template stored alongside the dispatcher, not one universal prompt. From my experience, this is the silent killer of free-tier builds, because the failure is “the agent gets dumber” not “the agent throws an error”, and you don’t notice for a week.

Rate-limit divergence. Each provider sends a different status code, header, and message on rate limit. Groq sends 429 with x-ratelimit-remaining-requests, Gemini sends 429 with a retry-after header, OpenRouter sends 429 with a JSON error body. Your dispatcher needs a parser per provider, not a generic 429 handler. Treat heterogeneous error handling as a first-class build, not an afterthought.

Privacy and commercial use. Google’s free Gemini explicitly trains on your free-tier requests. Mistral’s Experiment tier is prototype-only by terms. Cohere’s free tier is non-commercial only. If any user data flows through these providers, you have a compliance issue from day one. The way I’d handle it: never let production user data hit a free tier. Use free tiers for development, scheduled batch jobs, internal tools, and your own personal agent traffic.

For context on why this scale matters in 2026, the Stanford AI Index 2026 report tracks frontier API costs dropping 280x from Q1 2025 to Q2 2026, with free-tier daily quotas roughly doubling year over year. The free stack ceiling has risen alongside that drop, which is the reason this approach works now and did not work in 2024.

Here is the failure-mode map I keep nearby when wiring a new free-tier agent.

| Failure mode | Symptom | Fix |

|---|---|---|

| Prompt portability break | Agent gets “dumber” silently on fallback | Per-provider prompt template, not one universal prompt |

| Rate-limit divergence | Generic 429 handler misses provider-specific signals | Per-provider parser inside the dispatcher |

| Privacy / training-data leak | Customer data flows into a training corpus | Classify traffic, route customer-facing to paid tier |

| Daily quota wall | Provider returns 429s past quota with no fallback path | 80% soft cap + circuit breaker to OpenRouter free pool |

| Cold start on Hugging Face | First call to a model takes 30+ seconds | Keep HF only for batch jobs, never user-path |

A practical worked example of where this bites:

Before: You build a customer-facing email triage agent on Gemini Flash because it is free and fast. A customer’s confidential reply gets sent through the free tier. Three months later, that reply may be in Google’s training data. The compliance question lands on your desk.

After: Same agent, same Gemini Flash, but the dispatcher classifies every call: customer-facing traffic routes to paid Gemini (Vertex AI, which does not train on input) while internal-tool traffic routes to free Gemini. Cost stays low, compliance stays clean.

For automation glue that wires these providers into existing tools, Make.com is the workflow layer I would use to orchestrate the free-tier APIs with calendars, email, and Slack without writing your own queue infrastructure.

It pairs well with the dispatcher pattern because you can offload retries and scheduling to Make and keep your dispatcher focused on routing logic.

When To Stop Using the Free Stack

Stop using the free stack the moment you need an SLA, real privacy, or more than 5,000 requests per day from any single provider.

Past that point, the routing overhead stops paying for itself, and the cost of debugging cross-provider issues exceeds the cost of just paying for one good API.

The signal I look for is consistent 429s after dispatcher failover. If your circuit breaker is firing more than 5 percent of requests, you are at production scale and the free tier was not designed for that. Switch your primary path to a paid provider (Gemini paid tier, Anthropic, or Groq paid) and keep the free tier only as the backup path. You still keep most of the dispatcher work, you just flip which lane is primary.

The other signal is when you start needing fine-tuning, advanced safety filters, or guaranteed response times. Free tiers omit all three. The moment any of those becomes a requirement, you are out of the free-tier game and into a paid contract, full stop.

That is the honest end-state. Free-tier stacking gets you from zero to MVP at zero dollars, and it gets you a working dispatcher pattern that survives the upgrade. It does not get you to production scale, and pretending otherwise is the mistake that burns the most weekends.

Frequently Asked Questions

Can I run a real production agent entirely on free AI tiers in 2026?

You can run an MVP or low-traffic internal tool on free tiers indefinitely, but customer-facing production agents hit the limits inside 5,000 daily requests across all providers combined. Free tiers also lack SLAs, fine-tuning, and strong privacy guarantees, so the moment any of those matter you have to upgrade at least one provider.

Which free AI API has the highest daily quota in 2026?

Google Gemini 2.5 Flash leads with 1,500 requests per day on a 1M-token context window, no credit card required. Cerebras follows with 1 million free tokens per day on Llama 3.3 70B at 2,000 tokens per second. Groq adds another 1,000 daily requests on 70B models with industry-leading latency.

Is it legal to stack multiple free AI providers for one agent?

Yes. Stacking different providers is fully legitimate and recommended for cost-sensitive builds. What is not allowed is creating multiple accounts at the same provider (e.g. multiple Gmail addresses for Gemini AI Studio) to bypass that provider’s individual quota. Route across providers, not around their terms.

Do free AI APIs train on my data?

Google’s free Gemini explicitly uses free-tier requests to improve its models. Mistral’s Experiment tier and Cohere’s free tier are prototype-only and similar in spirit. Groq and Cerebras typically do not train on input, but always re-read the current terms before sending sensitive data through any free tier.

What is the fastest free AI inference provider right now?

Cerebras delivers up to 2,000 tokens per second on Llama 3.3 70B via wafer-scale chips. Groq follows at 300 to 800 tokens per second on the same model family via custom LPU hardware. Both are dramatically faster than free or paid GPU-based providers, which sit closer to 50-100 tokens per second.

How do I handle the different rate-limit responses from each free provider?

Each provider returns 429s with a slightly different header and body shape, so a generic catch-all does not work cleanly. Build a per-provider parser inside your dispatcher, surface retry-after where it exists, fall back to a 60-second cooldown where it does not, and route the next request through your fallback provider.