Terafab is a $25 billion chip fabrication joint venture between Tesla, SpaceX, and xAI, announced by Elon Musk on March 22, 2026 in Austin. It targets 1 terawatt of annual AI compute output at 2nm process nodes, which would match roughly 70% of TSMC’s entire global capacity. The project is worth watching closely, but Tesla has zero chip manufacturing experience, and the cost estimate may be understated by a factor of two or more.

Elon Musk announced Terafab at an event inside Austin’s Seaholm power plant on the night of March 22, 2026.

The claim: the largest chip manufacturing facility ever built, targeting one terawatt of AI compute per year.

One terawatt. That’s not a typo.

I’ve watched enough Musk hardware announcements to know the pattern. The numbers are always enormous. The timeline is always optimistic. And somewhere in the middle is a real strategy worth understanding.

Terafab has all three of those things, and knowing which is which matters a lot if you follow AI tools and where they’re heading.

Here’s what I found going through the TechCrunch, Bloomberg, and Electrek coverage.

What Actually Happened

Terafab is a $25 billion chip fabrication venture between Tesla, SpaceX, and xAI, announced March 22, 2026. It starts at Giga Texas in Austin and targets 1 terawatt of annual AI compute at 2nm process nodes.

Musk presented the project at the Seaholm power plant event alongside Tesla and SpaceX leadership. The initial Austin facility sits on the North Campus of Giga Texas, where Tesla already manufactures vehicles.

The main fabrication campus will require thousands of acres, with multiple locations still under consideration.

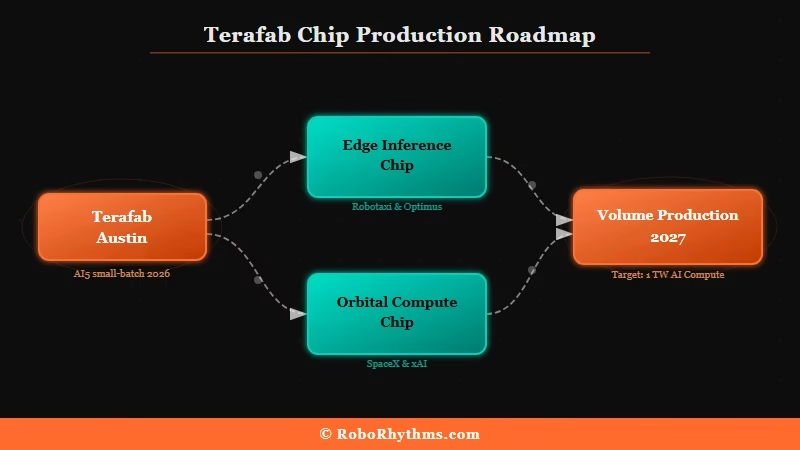

Two chip types are planned:

- An edge and inference chip for Tesla vehicles, robotaxis, and Optimus humanoid robots

- A high-power chip for space-based orbital AI systems, built for SpaceX and xAI

The vertical integration scope is unlike anything in the semiconductor industry today. Terafab combines logic fabrication, memory production, advanced packaging, and on-site photomask manufacturing under one roof.

That last part is key: chip designers normally get their photomasks from a foundry’s mask shop or a merchant mask maker, and bringing mask production in-house is what enables the rapid design iteration Musk is pointing to.

| Phase | Target | Status |
|---|---|---|
| Late 2026 | Small-batch AI5 chip production | Announced, no confirmed date |
| 2027 | Volume production ramp | Projected |
| Full capacity | 1 million wafer starts per month | Long-term ambition |
| Full compute output | 1 terawatt per year | Long-term ambition |

Worth flagging: Tesla had already delayed its AI5 chip to mid-2027 before this announcement.

Why This Is a Bigger Deal Than It Sounds

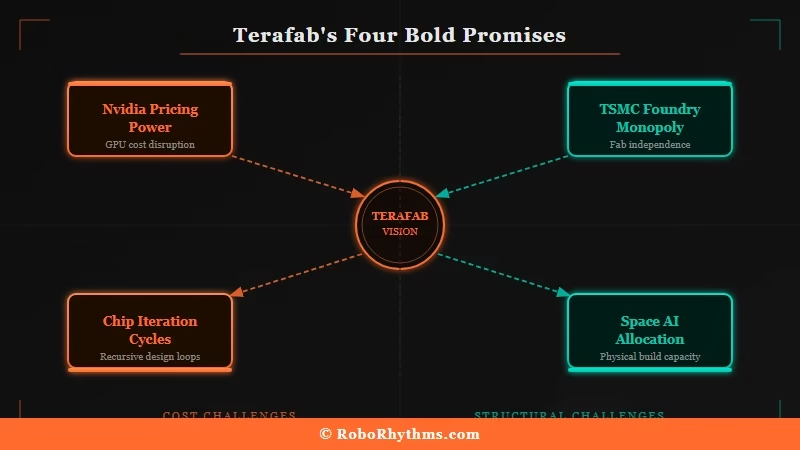

Terafab is not just a chip factory. It is a direct attempt to break the three structural dependencies constraining Musk’s companies: TSMC’s foundry monopoly, Nvidia’s AI chip pricing leverage, and US-China semiconductor supply chain risk.

Think about what AI compute costs right now. Most major AI labs, including xAI, run largely on Nvidia hardware, and nearly all leading-edge AI chips, Nvidia’s included, are fabricated at TSMC. That is not a coincidence. It is the current chokepoint in the entire AI industry.

Tesla stated in its Terafab announcement that its projects require “more chips than all the chip manufacturers in the world combined can provide today.” Whether that’s marketing or an honest assessment of scarcity, it points at a real problem.

What the Electrek analysis surfaces is the detail most headlines skip: Terafab at full capacity would match roughly 70% of TSMC’s current global output from a single facility.

TSMC, founded in 1987, took nearly four decades to build that capacity. Musk is proposing to replicate a meaningful portion of it in years.

The recursive design loop is the genuinely new idea here. With photomask manufacturing, wafer fabrication, and testing all on the same campus, Terafab can theoretically compress chip iteration from the current 6 to 9 months down to days or weeks.

It is the same idea that changed software development when AI automation tools collapsed the feedback loop, now applied to hardware.
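To put rough numbers on that compression, here is a minimal Python sketch comparing the 6 to 9 month baseline above against a few Terafab cycle times. The one-, two-, and four-week targets are my own hypothetical stand-ins for “days or weeks”; neither Tesla nor the coverage gives a specific figure.

```python
# Rough check of the "10x faster iteration" claim, using the 6-9 month
# baseline quoted in the coverage. The Terafab-side cycle times below are
# hypothetical placeholders for "days or weeks"; no official figure exists.

DAYS_PER_MONTH = 365.25 / 12  # average month length in days


def speedup(baseline_months: float, new_cycle_days: float) -> float:
    """How many times faster a new design-to-silicon cycle is than the baseline."""
    return baseline_months * DAYS_PER_MONTH / new_cycle_days


baseline_months = (6, 9)                # current iteration range cited above
hypothetical_cycles_days = (7, 14, 28)  # assumed: one, two, four weeks

for months in baseline_months:
    for days in hypothetical_cycles_days:
        print(f"{months} months -> {days} days: {speedup(months, days):.1f}x faster")
```

Under these assumptions, a two-to-four-week cycle works out to roughly 6x to 20x faster, so the “10x” figure sits inside that band; a cycle measured in days would imply far more.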

| Terafab Promise | Optimist Reading | Skeptic Reality Check |
|---|---|---|
| 1 terawatt compute per year | Rivals 70% of TSMC’s entire global output from a single fab | TSMC built that capacity over nearly 40 years. Tesla has zero chip manufacturing track record. |
| $25B price tag | Defensible if phased over 5 to 7 years with contributions from all three companies | A single 2nm fab at 50,000 wafer starts per month costs about $28B alone. The math needs more detail. |
| 10x faster chip iteration | SpaceX’s Starship rapid-prototype philosophy did eventually work for rockets | Semiconductor physics constraints are more rigid than rocket engineering tolerances |
| 80% of output for space AI | Space solar delivers 5x more irradiance; proponents argue waste heat can be radiated to cold space | Electrek called the space AI allocation a plan with zero connection to any near-term business reality |
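The “math needs more detail” objection can be made concrete with figures already quoted in this piece: about $28B for one 2nm fab at 50,000 wafer starts per month, and a full-capacity goal of 1 million wafer starts per month. The minimal Python sketch below assumes cost scales linearly with capacity. That is my simplification, not anything Tesla or the analysts have published, and shared infrastructure and economies of scale would pull the real number down.

```python
# Naive linear scaling of fab cost with capacity, using only figures quoted above.
# Assumption: cost scales linearly with wafer starts per month (WSPM). Real fabs
# share infrastructure and get cheaper per wafer at scale, so this is a rough
# sanity check that probably overstates the total, not a forecast.

HEADLINE_BUDGET_B = 25.0  # Terafab's announced price tag, $ billions
REF_FAB_COST_B = 28.0     # cited cost of one 2nm fab, $ billions
REF_FAB_WSPM = 50_000     # that reference fab's wafer starts per month
TARGET_WSPM = 1_000_000   # Terafab's stated full-capacity goal


def linear_cost_b(target_wspm: int, ref_cost_b: float, ref_wspm: int) -> float:
    """Cost in $ billions if all capacity is bought at the reference fab's rate."""
    return target_wspm / ref_wspm * ref_cost_b


def wspm_for_budget(budget_b: float, ref_cost_b: float, ref_wspm: int) -> float:
    """Wafer starts per month that a budget buys at the same rate."""
    return budget_b / ref_cost_b * ref_wspm


full_cost = linear_cost_b(TARGET_WSPM, REF_FAB_COST_B, REF_FAB_WSPM)
budget_wspm = wspm_for_budget(HEADLINE_BUDGET_B, REF_FAB_COST_B, REF_FAB_WSPM)

print(f"Reference-fab equivalents: {TARGET_WSPM / REF_FAB_WSPM:.0f}")
print(f"Linear cost at full capacity: ${full_cost:.0f}B "
      f"({full_cost / HEADLINE_BUDGET_B:.1f}x the headline)")
print(f"${HEADLINE_BUDGET_B:.0f}B buys ~{budget_wspm:,.0f} WSPM "
      f"({budget_wspm / TARGET_WSPM:.1%} of the target)")
```

Under that crude assumption, full capacity would take the equivalent of 20 reference fabs at roughly $560B, about 22 times the headline, while $25B buys about 45,000 wafer starts per month, under 5% of the target. Scale discounts would narrow that gap, but it suggests the $25B figure covers an early phase rather than the full build-out.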

What This Means for You

Terafab matters to anyone who uses or builds with AI tools because compute scarcity is a major reason AI pricing has stayed high, and a credible new chip source changes that equation.

The practical implications break down this way:

- AI tool costs could drop. Nvidia’s ability to price H100 and B200 chips at current levels depends partly on the lack of a credible alternative source of advanced AI chips. A Terafab that hits even 30% of its stated capacity puts pressure on that pricing structure. Lower upstream compute costs eventually reach the API pricing and subscription tiers you pay monthly. If you’re tracking the best paid AI tools worth keeping in 2026, cheaper compute is the upstream variable that matters most.

- Other AI labs accelerate their own chip programs. Google already runs TPUs. Meta is scaling MTIA. Terafab is competitive pressure that speeds up the whole field. The question of when AI makes traditional labor optional becomes much more urgent if the compute ceiling lifts this fast.

- Start with skepticism. Electrek draws the Battery Day parallel directly. In 2020, Tesla promised revolutionary cell manufacturing at scale. Five years later, Tesla is at roughly 2% of its original volume goal. I’d give Terafab somewhat better odds because the recursive loop model is a genuinely new approach. But I wouldn’t price in the terawatt target for at least three to four years.

- The ASML dependency is a real risk worth tracking. Terafab still requires ASML’s EUV lithography equipment to reach 2nm production. ASML sits at the center of US-China semiconductor export controls. If geopolitical pressure tightens access to EUV tools, Terafab’s timeline slips regardless of what Musk announces.

What Comes Next

The real test is late 2026, when AI5 chip samples either come out of Giga Texas or they don’t. Everything before that is groundbreaking ceremonies and procurement.

Musk’s near-term steps are relatively modest compared to the scale of the announcement: begin construction at Giga Texas North Campus, finalize the second large-format site (thousands of acres, multiple locations under review), and get the on-site photomask loop operational for early AI5 design cycles.

TSMC is not standing still. Its Arizona Fab 21 is already in volume production at advanced nodes, and TSMC’s 2nm process was slated to enter volume production in late 2025.

One Musk announcement does not shift the competitive landscape. A working fab with verified yield data would.

What I’d watch in 2026: the AI5 chip volume data coming out of Tesla earnings calls, the second site announcement, and whether Wall Street starts treating Terafab as a capital expenditure line item or keeps it in the “ambitious vision” bucket.

The best AI tools you’re using today were all built on the compute infrastructure that exists right now. Terafab is a bet that this infrastructure changes within three years. Whether that bet pays off is a 2027 question.

FAQs

What is Terafab?

Terafab is a $25 billion chip fabrication facility announced by Elon Musk on March 22, 2026, structured as a joint venture between Tesla, SpaceX, and xAI. It targets 1 terawatt of annual AI compute at 2nm process nodes, starting at the Giga Texas North Campus in Austin.

When will Terafab start producing chips?

Small-batch AI5 production is targeted for late 2026, with volume production projected for 2027. Tesla already delayed its AI5 chip to mid-2027 before this announcement, which raises questions about both timelines.

How does Terafab compare to TSMC?

At full stated capacity, Terafab would match roughly 70% of TSMC’s current global output from a single facility. TSMC took nearly four decades to build that capacity. Tesla has no chip manufacturing experience at any scale.

Is this just another Musk overpromise?

That is the fair question. Electrek compares it directly to Battery Day 2020, where Tesla’s cell manufacturing promises remain far from stated targets five years later. The recursive chip iteration loop is a genuinely new approach, but semiconductor physics does not bend as easily as rocket design.

What does Terafab mean for Nvidia?

If Terafab reaches meaningful production volume, it reduces Nvidia’s pricing leverage by introducing a new source of advanced AI compute. Google TPUs and Meta MTIA are already reducing Nvidia’s lock-in. A Tesla-built chip at scale would accelerate that trend further.

Will Terafab make AI tools cheaper?

Potentially, over time. Nvidia’s current pricing power on H100 and B200 chips depends on supply constraints. More AI compute supply from any credible source tends to push down API pricing and inference costs. Any pricing effect would likely reach end users no earlier than 2027.