What Happened: Deepseek V4 dropped today on Janitor AI and users are pushing it hard on r/JanitorAI_Official. Early reports show stronger memory and better long-context recall, but filter behavior is inconsistent and some proxy setups are timing out. This is the live April 2026 picture of what works and what does not.

Deepseek V4 on Janitor AI is the story of the day for AI roleplay users. A thread went live on r/JanitorAI_Official around 04:06 UTC on April 24, 2026, and within a few hours the community was already reporting bugs, filter quirks, and context wins in parallel.

The pattern is the same one we saw with V3: new model, new expectations, new landmines.

I have spent the morning reading through the Reddit thread, the JLLM complaints next door, and the Bloomberg coverage of the V4 release. The short version is that V4 is a genuine upgrade on paper, but the Janitor deployment is uneven. If you are using a custom proxy setup, you are going to feel it first.

What Happened With Deepseek V4 Today

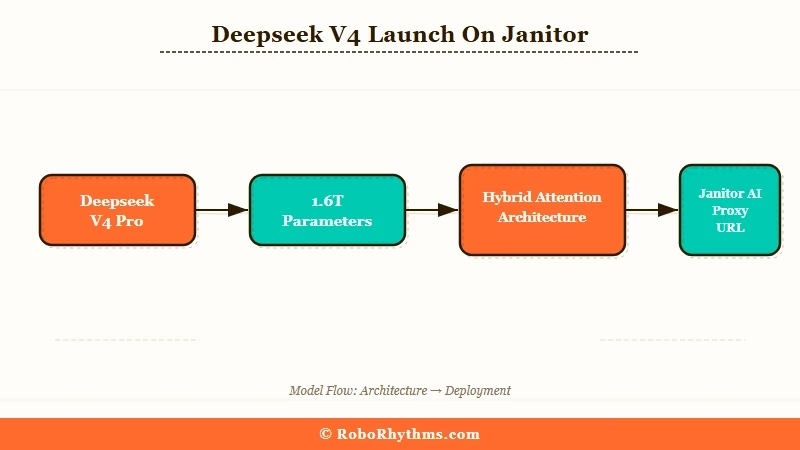

Deepseek V4 on Janitor AI is a new model integration that went live on April 24, 2026, pushing the Hybrid Attention Architecture and a 1M token context window into active roleplay sessions.

Users are swapping to it through the proxy field in character settings and reporting results across the r/JanitorAI_Official thread “Deepseek V4 is out” within hours of release.

The parent release from Deepseek itself is the bigger context here. Bloomberg reported that Deepseek unveiled V4 Flash and V4 Pro variants, with the Pro model running 1.6T total parameters and 284B active in a Mixture of Experts setup.
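To put the reported Pro figures in perspective, here is a quick back-of-the-envelope calculation. It assumes the numbers quoted above (1.6T total, 284B active) are accurate and does nothing more than divide one by the other; the variable names are mine.

```python
# Figures as reported in the coverage cited above (assumed accurate).
total_params = 1.6e12   # 1.6T total parameters
active_params = 284e9   # 284B parameters active per token

# Share of the model that is actually used for any single token.
active_fraction = active_params / total_params

print(f"Active share per token: {active_fraction:.2%}")
```

In other words, under those figures a Mixture of Experts model of this size runs a bit under a fifth of its weights on any given token, which is why the total parameter count overstates the per-message compute.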

The 1M token context is the detail that matters for Janitor users, because JLLM has been bleeding context on long scenes for weeks.

From what I have seen in the live thread, most users are plugging V4 into the proxy field and treating it like a drop-in replacement for Deepseek V3. That works for about 70 percent of setups. The other 30 percent are getting 502 errors, silent proxy timeouts, or blank responses on the first message.
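If you want to tell those three failure modes apart on your own setup, a small check like the one below can help. This is a minimal sketch, not anything Janitor AI or Deepseek ships: `ProbeResult` and `classify` are hypothetical names, and it assumes you have already sent one test message through your proxy and noted the HTTP status (or `None` if the request timed out) and the reply text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProbeResult:
    """Outcome of one test message sent through a proxy (hypothetical shape)."""
    status: Optional[int]  # HTTP status code, or None if the request timed out
    body: str = ""         # text of the reply, empty if nothing came back


def classify(result: ProbeResult) -> str:
    """Map a probe outcome onto the failure modes reported in the thread."""
    if result.status is None:
        return "timeout"          # silent proxy timeout
    if result.status == 502:
        return "bad_gateway"      # the 502 errors users are posting about
    if result.status != 200:
        return f"http_{result.status}"
    if not result.body.strip():
        return "blank_response"   # 200 OK but nothing in the reply
    return "ok"


print(classify(ProbeResult(status=502)))
print(classify(ProbeResult(status=200, body="   ")))
```

Knowing which bucket you land in matters when you ask for help in the thread, because a 502 and a blank first message are likely to need different fixes.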

What is Janitor AI: A community-driven AI roleplay and character chat platform where users can plug in external LLMs like Deepseek, Claude, or GPT via a proxy field instead of relying on the default JLLM.

One comment in the thread captured the mood: “It is so good at holding the thread of what my character said three hours ago. I forgot what that felt like.” That is the Hybrid Attention Architecture doing its job.

Meanwhile, someone else posted that their character suddenly started responding in Chinese mid-scene until they regenerated.

Why This Is a Bigger Deal Than It Sounds

V4 on Janitor matters because JLLM quality collapsed this week and users have been looking for a reliable swap.

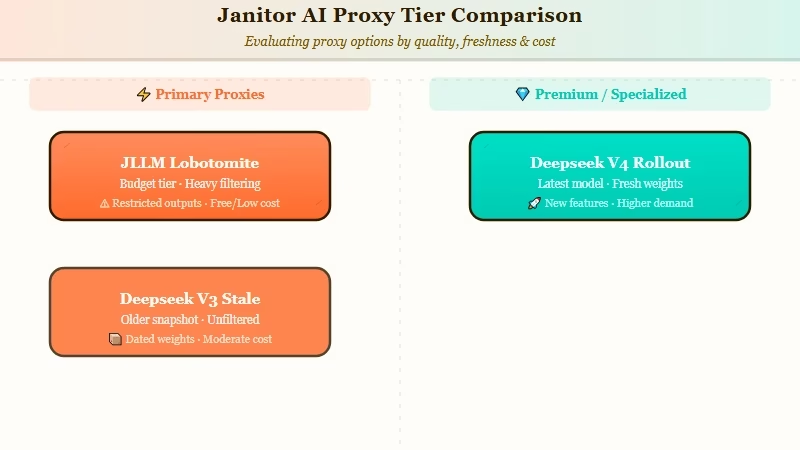

The backdrop makes this release more than a routine model bump. r/JanitorAI_Official has been flooded for 48 hours with complaints about the native JLLM, with one thread titled “JLLM issues” pulling 61 comments and another calling bots “lobotomites.”

The way I see it, Janitor AI’s entire product depends on the LLM behind the scenes feeling smart. When JLLM regressed, the platform became unusable for a chunk of power users. Deepseek V4 arriving today is accidentally perfect timing.

Here is what I would call the layered problem users are navigating right now:

| Layer | Current state | What breaks |
|---|---|---|
| JLLM (default) | Regression reported, “lobotomite bots” | Short memory, repetitive responses |
| Deepseek V3 proxy | Still usable, older context | Stale context on scenes over 30k tokens |
| Deepseek V4 proxy | New today, uneven | Proxy timeouts on some configs |
| OpenAI / Claude proxy | Stable but expensive | Rate limits and key costs add up |

The tension is that V4 is the obvious choice on quality, but the rollout is rough enough that nobody wants to commit until the proxy layer stabilizes. I would expect a 48-hour settling period before the dust clears.

What This Means for You Today

If you are a Janitor AI user right now, V4 is worth trying but not worth trusting as your only setup. Keep V3 in your proxy rotation as a fallback because the V4 endpoint is still shaking out. The users who are happiest in the thread are the ones who swapped, ran a test scene, and kept their V3 URL handy.

Here is the sequence I would walk through today if I were testing V4:

- Back up your existing proxy URL before changing anything, so you can revert in one click if V4 misbehaves on your character

- Open Janitor AI character settings and paste the new V4 proxy endpoint into the proxy URL field

- Run a short test scene (10 messages) on a character you know well, to benchmark memory and tone against your V3 baseline

- If the test goes well, switch your main characters over; if it does not, flip back to V3 and wait 24 hours for the endpoint to stabilize

- Check the Reddit thread once a day for the next week for proxy URL updates from the community
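The sequence above boils down to "try the new endpoint, keep the old one if the test fails." Here is a minimal Python sketch of that logic. Everything in it is illustrative: `trial_switch` is a hypothetical helper, the URLs are placeholders, and `run_test_scene` stands in for however you judge a 10-message test scene (most likely by hand in the Janitor AI interface, not through code).

```python
from typing import Callable


def trial_switch(
    current_url: str,
    candidate_url: str,
    run_test_scene: Callable[[str, int], bool],
    messages: int = 10,
) -> str:
    """Return the proxy URL to keep using after a short trial.

    run_test_scene(url, messages) should return True if the test scene
    went well on that endpoint. Any exception (timeout, 502, and so on)
    counts as a failed trial.
    """
    backup_url = current_url  # step 1: keep the old URL so you can revert
    try:
        passed = run_test_scene(candidate_url, messages)
    except Exception:
        passed = False
    # Switch only if the trial passed; otherwise fall back to the backup.
    return candidate_url if passed else backup_url


# Usage with placeholder URLs and a stand-in test that always times out.
def always_times_out(url: str, messages: int) -> bool:
    raise TimeoutError(f"no reply from {url}")


kept = trial_switch(
    "https://proxy.example/v3",  # placeholder, not a real endpoint
    "https://proxy.example/v4",  # placeholder, not a real endpoint
    always_times_out,
)
print(kept)
```

The point of the design is that the old URL is never discarded until the new one has passed a test, which is the same habit the happiest users in the thread describe.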

What I would not do today is assume V4 is ready for your most important long-form scenes. The context window is bigger than V3's, but the early-day bugs outweigh the upside until proxy behavior settles.

Example scenario: You have a character you have been running on Deepseek V3 for three months. On V4, the character suddenly remembers a detail from week one that V3 had dropped, which feels like magic. Twenty messages later, the model responds in Chinese because the proxy glitched. On V3 you get neither the magic nor the glitch.

For everyone who has been considering whether to jump ship entirely, the best Character AI alternatives guide still applies. V4 does not change the platform options; it just shifts where Janitor sits in the hierarchy.

What Comes Next For Deepseek V4 and Janitor

From what I have seen across past Deepseek releases, the endpoint stabilizes within about a week. Proxy providers like OpenRouter and third-party relays have to update their routing tables, and Janitor users find the working URLs through the Reddit hivemind.

If V4 follows the same curve as V3 did last year, the sweet spot is roughly April 29 to May 1.

The bigger question for Janitor AI is whether V4 becomes a permanent upgrade path or a band-aid over JLLM degradation. If the platform does not ship a JLLM fix in the next two weeks, expect a chunk of the community to settle permanently on V4 proxies, which changes the revenue calculus for Janitor’s paid tier.

I have seen the Janitor AI slow response pattern repeat three times in the last twelve months, so this is not a one-off.

For users who are tired of waiting for Janitor to stabilize, my honest recommendation is to keep one persistent memory platform in your rotation, so that when the next proxy outage hits, you still have continuity with your main character.

Our Nomi AI review covers the persistent memory side, and the broader Janitor AI not working guide covers the proxy failure mode.