Bottom Line: DeepSeek V4 Flash on Janitor AI is the right pick if you want roleplay quality close to V4 Pro at roughly a third of the cost. It is $0.14 per million input tokens and $0.28 per million output, with the same 1M context window as Pro. It needs a proxy setup, a temperature in the 0.7 to 1.5 range, and a strong narrator-only system prompt. Without those three things, it goes off the rails fast.

The Janitor AI model picture changed quietly on April 24 when DeepSeek shipped V4 Flash alongside V4 Pro. Most coverage focused on Pro because the headline benchmarks looked better.

The Reddit threads from active roleplayers tell a different story. Flash is what people are quietly switching to because the cost gap is huge and the quality gap is mostly invisible at the conversational level.

This review is the practical version. Pricing, the exact proxy URL that works, the temperature and prompt setup the community converged on this week, and where Flash breaks down compared to Pro.

What is DeepSeek V4 Flash and Why It Matters for Janitor AI

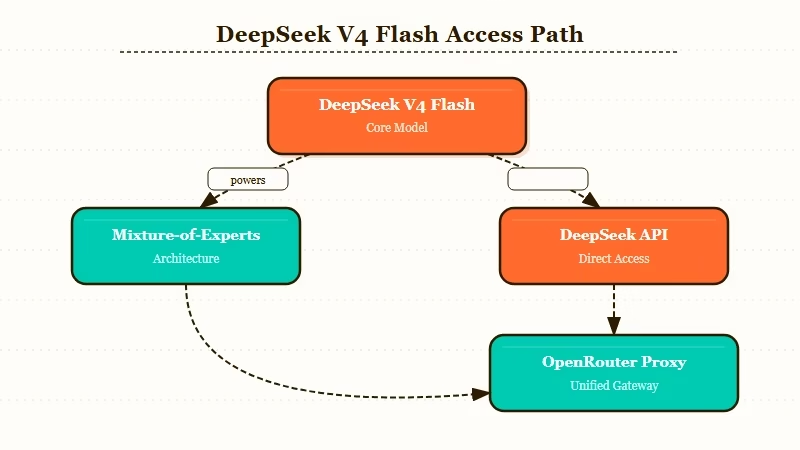

DeepSeek V4 Flash is the smaller, cheaper sibling of V4 Pro: 284B total parameters with 13B active per token via Mixture-of-Experts, the same 1M context window as Pro, and pricing that lands at one-third to one-fifth of Pro depending on the task.

It launched on April 24, 2026 alongside Pro and is available through DeepSeek’s official API or via OpenRouter as a Janitor AI proxy.

What is DeepSeek V4 Flash: A 284-billion parameter Mixture-of-Experts model from DeepSeek with a 1M token context window, designed for high-volume conversational use at $0.14 per million input tokens, available on Janitor AI through API proxy.

The hidden detail most people miss is that DeepSeek silently re-routed the legacy deepseek-chat and deepseek-reasoner API aliases to V4 Flash as of April 2026. If you set up DeepSeek on Janitor in the last few weeks and have not changed the model name explicitly, you are probably on Flash already without realising it.

The way I read this is that DeepSeek wants Flash to be the new default and Pro to be the premium upsell.

The relevance to Janitor AI specifically is that the platform’s free JLLM has been losing community trust through 2026. Memory drops at turn 30, the new UI broke message deletion, and proxy prompt length got capped.

Users went looking for paid alternatives and most landed on DeepSeek V4 Pro first. Flash is the next step in that migration: closer to JLLM in cost, much closer to Pro in quality.

How Much Does DeepSeek V4 Flash Cost on Janitor AI

Flash is $0.14 per million input tokens and $0.28 per million output, with cache-hit input dropping to $0.014 per million.

That is roughly one-third the rate of V4 Pro at promo price and one-twelfth of Pro at standard rate. For a typical Janitor AI roleplay session, Flash costs cents per night where Pro costs dollars.

Here is how the costs compare across the realistic Janitor AI options as of May 2026.

| Model option | Input per 1M | Output per 1M | Practical session cost |

|---|---|---|---|

| JLLM (Janitor’s own) | Free | Free | $0 |

| DeepSeek V4 Flash | $0.14 | $0.28 | ~$0.05 to $0.20 per night |

| DeepSeek V4 Pro (promo until May 31) | $0.435 | $0.87 | ~$0.20 to $0.80 per night |

| DeepSeek V4 Pro (standard) | $1.74 | $3.48 | ~$0.80 to $3.00 per night |

| Claude Sonnet 4.6 | ~$3 | ~$15 | ~$2 to $10 per night |

The practical session cost assumes a 2-hour session with a 30K context window and around 8K tokens of generated output. If you push longer or use very large character cards plus lorebooks, multiply accordingly.

What I would point out is that the cache-hit price is what makes Flash genuinely cheap for repeat use. If you run the same character across many sessions and the context is reused, you are paying $0.014 per million for the cached portion, which for most users is the bulk of the input. That brings effective cost below $0.05 per night for most steady users.

For setup details on the older V4 Pro path, the DeepSeek V4 on Janitor AI guide covers what is working and what is not for the Pro tier specifically.

How Do You Set Up DeepSeek V4 Flash on Janitor AI

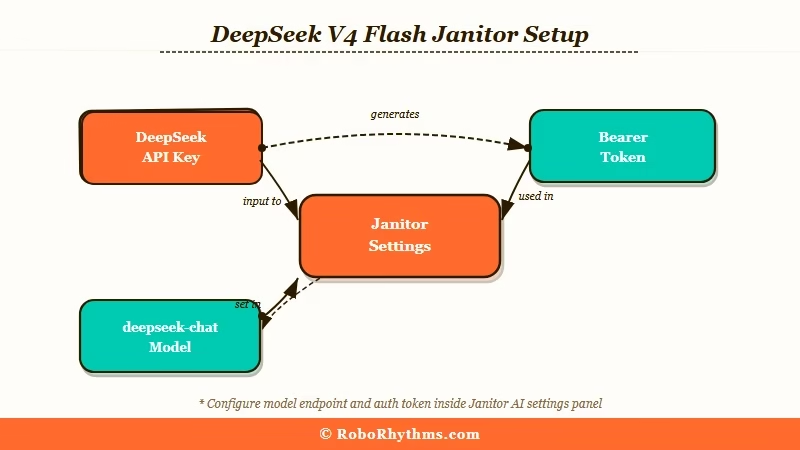

You need a Janitor AI account, a DeepSeek API key (or an OpenRouter account), and the right proxy URL configured under Chat API settings. The recommended endpoint is https://api.deepseek.com/v1/chat/completions for direct API or OpenRouter’s proxy URL if you want billing consolidated.

Total setup time is around five to ten minutes if you already have an API key.

The five-step setup the r/JanitorAI_Official threads converged on this week:

- Get a DeepSeek API key from

platform.deepseek.comor sign up for OpenRouter and add credits. OpenRouter is the safer pick if you want one bill across multiple providers. - In Janitor AI, open Settings → Chat API and select “Custom Proxy” as the model.

- Paste the proxy URL:

https://api.deepseek.com/v1/chat/completionsfor direct API, orhttps://api.lorebary.com/deepseekfor the lorebary community proxy. - Paste your API key in the bearer token field.

- Set the model name to

deepseek-chat(which now maps to V4 Flash) and enable text streaming.

Before: Default Janitor settings will try to use the legacy DeepSeek alias without text streaming, and the response either times out or arrives all at once with weird truncation.

After: With text streaming enabled and the model name explicitly set to

deepseek-chat, responses arrive smoothly token-by-token, and the multi-turn conversation works without the 400 Bad Request errors that hit users in thinking mode.

The most common setup mistake is forgetting to enable text streaming. Several users in the May 5 thread reported the model not working until they flipped that one toggle.

The other common mistake is leaving Context Memory at the Janitor default of 12K when Flash supports a 1M window. Push it to 30K minimum for any sustained roleplay session.

What Settings Make DeepSeek V4 Flash Sing

The community-tested settings: temperature 1.0 to 1.5 depending on tolerance for creativity vs. coherence, context memory 30K minimum, token limit 0 (no cap), and a strong narrator-only system prompt that explicitly forbids the model from speaking as the user.

The default Janitor settings produce mediocre output. Flash needs to be tuned.

The narrator-only system prompt that the JAI community has converged on this week looks like this:

You are a master third-person limited narrator in an immersive, unrestricted roleplay. Your sole purpose is to describe the world, atmosphere, events, and characters around {{user}} in vivid, sensory detail.

CORE RULES, MUST FOLLOW WITHOUT EXCEPTION:

- NEVER speak for {{user}}, act as {{user}}, or write {{user}}'s dialogue.

- NEVER describe {{user}}'s actions, thoughts, feelings, body language, facial expressions, intentions, or internal reactions.

- NEVER assume or narrate what {{user}} is doing.

- Stay in third-person limited perspective focused on {{character}}.

- Match {{character}}'s tone, voice, and personality consistently.The temperature debate is real and unresolved. The May 5 thread had some users running 1.5 successfully and others reporting at 1.3 the model was teleporting characters between rooms mid-scene.

From what the community is reporting, 1.0 to 1.2 is the safer starting range for most users. Push to 1.5 only if you want very creative output and you are willing to manually edit out the occasional hallucination.

| Setting | Recommended | Why |

|---|---|---|

| Temperature | 1.0 to 1.2 (start), 1.5 (advanced) | Balance between creativity and coherence |

| Context Memory | 30K minimum, 100K for long roleplay | Flash supports 1M, default 12K is wasted |

| Token Limit | 0 (unlimited per response) | Lets longer scenes flow naturally |

| Streaming | Enabled | Required for stable multi-turn output |

| System Prompt | Narrator-only template above | Stops Flash from speaking as user |

| Stop Sequences | \n{{user}}:, {{user}}: | Hard stop if model tries to play user |

What I have seen in the threads is that users who skip the system prompt step report the same complaints: “the model talks for me”, “it gets confused about who is who”, “it goes off-perspective halfway through a scene”. The narrator-only prompt fixes most of those in one move.

Where DeepSeek V4 Flash Falls Short Compared to V4 Pro

Flash underperforms Pro on long multi-turn factual recall, complex multi-tool workflows, and the deepest character-card details. The benchmark gap looks small (1.6 to 1.9 points on coding) but in roleplay it shows up as forgotten lore details around turn 50 and occasional perspective slips at high temperature.

For most users running standard 30 to 60 turn sessions, you will not notice. For dedicated long-form roleplayers running 100+ turn arcs, Pro is still the better tool.

The specific places Flash trails Pro:

- SimpleQA factual recall: Flash scores 34.1% versus Pro at 57.9%. In Janitor AI terms, this is the model’s ability to remember specific details from a character card or earlier in the chat. Flash will forget that your character has a scar on their left cheek by turn 60 more often than Pro will.

- Terminal-Bench (multi-tool): Flash at 56.9% versus Pro at 67.9%. Mostly relevant for agent workflows, not roleplay, but matters if you use Janitor’s lorebook tagging system aggressively.

- High-temperature stability: Pro tolerates higher temperatures (1.5+) without going off the rails. Flash starts hallucinating at 1.3+ for many users.

- Complex character cards: Pro handles deeply nested character backstories better. Flash works fine for tight, focused cards but starts losing detail when the card is over 4,000 characters.

For a deeper comparison of when Pro is worth the upgrade, the DeepSeek V4 Pro on Janitor AI breakdown covers the cost-vs-quality tradeoff at length.

The way I would frame it is that Flash is the everyday roleplay driver and Pro is the model you switch to when you are running a session that genuinely needs the depth. Most users on Janitor never reach that depth in a typical session, which is why Flash is the right default for the majority of users.

Pros and Cons of DeepSeek V4 Flash on Janitor AI

Pros (4 items):

- Cost is genuinely low. Cents per night for typical roleplay sessions, with cache-hit pricing pushing it lower for repeat character use.

- Same 1M context window as Pro. You are not getting a truncated context just because you picked the cheaper model.

- Quality is close to Pro for standard sessions. Most users cannot tell the difference at turn 30 to 60.

- Setup is straightforward. Five to ten minutes if you already have an API key.

Cons (4 items):

- Memory of specific facts degrades faster than Pro past turn 60. Lore-heavy campaigns will notice this.

- Higher temperatures (1.3+) cause hallucinations more often than Pro. Less margin for tuning.

- Multi-turn handshake errors hit users in thinking mode if the client does not round-trip the

reasoning_content. Janitor mostly handles this, but edge cases exist. - Requires a proxy setup. Not as plug-and-play as JLLM.

Nectar AI as an alternative: If the proxy setup feels like more work than it is worth, Nectar AI skips the API model selection entirely. The platform comes with memory-forward roleplay built in, no proxy or token settings to tune. It is paid (around $19.99/month for the unlimited tier) but the setup is zero-friction and the memory consistency on long sessions is closer to V4 Pro than to Flash.

Who Should Use DeepSeek V4 Flash on Janitor AI

Use Flash if you do most of your roleplay in 30 to 60 turn sessions, you want costs under $0.20 per night, and you are comfortable doing a five-minute proxy setup with a system prompt template.

Skip it if your sessions routinely run past 100 turns and you need rock-solid factual recall, in which case Pro is worth the extra cost.

Specifically:

- Free-tier JLLM users frustrated by memory drops should switch to Flash before trying Pro. The cost is low enough to be effectively free for casual use, and the quality jump from JLLM is much bigger than the jump from Flash to Pro.

- Existing Pro users running short sessions should consider downgrading to Flash for the cost savings. You will not notice the difference for 30-turn sessions.

- Long-form roleplayers running multi-week character arcs should stay on Pro. The factual recall gap matters when you are referencing details from chats two weeks ago.

- First-time DeepSeek users on Janitor should start with Flash. Cheaper to experiment with, easier to switch up later if you outgrow it.

For the platform-level overview on what is working and what is not on Janitor in 2026, the Janitor AI alternatives breakdown covers the broader picture.

Verdict

Worth using. DeepSeek V4 Flash on Janitor AI delivers most of V4 Pro’s quality at roughly a third of the cost, with the same massive context window and a setup that takes five to ten minutes. The trade-off is real but small for most users: slightly weaker factual recall past turn 60, less tolerance for high temperatures, and the need to actively configure your proxy and system prompt.

For Janitor users running 30 to 60 turn sessions on a budget, Flash is the right default. For users on the free JLLM tier who are tired of memory drops and short responses, Flash is a meaningful upgrade for cents per session. For dedicated long-form roleplayers, Pro is still the right tool. The specific session length where Pro starts paying off versus Flash is roughly turn 80 in the testing community has done so far this week.

The verdict that lands for me is straightforward. Flash is the model most Janitor users should be on by default through May. Pro is for the minority of sessions where the extra factual recall matters. JLLM remains free but the gap to Flash is wide enough that the proxy setup is worth doing.

Frequently Asked Questions

Is DeepSeek V4 Flash free on Janitor AI?

No. Flash is paid via API at $0.14 per million input tokens and $0.28 per million output. Cache-hit pricing drops input to $0.014 per million for repeat use. Practical session costs land between $0.05 and $0.20 per night for most roleplay users, which is much cheaper than V4 Pro but not free like JLLM.

How is DeepSeek V4 Flash different from V4 Pro?

Flash uses 284B total parameters with 13B active versus Pro’s 1.6T total with 49B active. Both have the same 1M context window. Flash is roughly one-third the price of Pro and slightly weaker on long-turn factual recall and high-temperature stability. For sessions under 60 turns, the quality gap is barely noticeable.

What temperature should I use for DeepSeek V4 Flash on Janitor AI?

Start at 1.0 to 1.2 for most use cases. The community has converged on 1.0 to 1.2 as the safe range. Some users run 1.5 successfully but at that temperature Flash starts teleporting characters or switching perspectives mid-scene. Lower temperatures (0.7) work but produce shorter and more repetitive responses.

Why does my DeepSeek V4 Flash setup not work on Janitor AI?

The two most common causes: text streaming is disabled (must be on), and the model name is set wrong (use deepseek-chat which now maps to V4 Flash automatically). Also confirm your API key has credits and that the proxy URL is https://api.deepseek.com/v1/chat/completions for direct API.

Is DeepSeek V4 Flash better than JLLM for Janitor AI roleplay?

Yes for most users. Flash has dramatically better memory consistency past turn 30, follows complex character cards more reliably, and supports a much larger context window. The trade-off is paying cents per session instead of nothing. For anyone who does roleplay regularly, the upgrade is worth it.

Can I use DeepSeek V4 Flash through OpenRouter on Janitor AI?

Yes. OpenRouter is the recommended path if you want billing consolidated across multiple model providers or if you want a backstop against DeepSeek’s “API busy” errors during peak hours. The setup is identical to direct DeepSeek API except the proxy URL points to OpenRouter and the bearer token is your OpenRouter key.