Anthropic shipped Claude Opus 4.7 on April 16, 2026, and it is the first Opus upgrade that does not raise the price. Same $5 per million input tokens, same $25 per million output tokens, a full 1 million token context window, and 87.6% on SWE-bench Verified.

I pulled the benchmarks and the Anthropic announcement, and the thing that surprises me most is not the score. It is what the company chose not to release.

What Happened: Anthropic released Claude Opus 4.7 on April 16, 2026 with a 1M token context window, 87.6% on SWE-bench Verified, and the same $5/$25 per million token pricing as Opus 4.6. The company also confirmed it is keeping its stronger Mythos Preview model restricted to a small group of enterprise cybersecurity partners.

What Shipped in Claude Opus 4.7

Claude Opus 4.7 shipped on April 16, 2026 as a direct Opus 4.6 replacement with a 1M context window, 87.6% on SWE-bench Verified, 2x agentic throughput, and identical $5/$25 pricing.

Opus 4.7 is a direct replacement for Opus 4.6 on the API and in Claude Code. The headline numbers from the Anthropic release post are 87.6% on SWE-bench Verified, a 1 million token context window available to all Tier 3 customers, and a 2x throughput improvement on long-running agentic tasks.

The pricing surprised me. Every prior Opus jump raised the per-token cost, and Opus 4.6 launched at $5/$25. Opus 4.7 is also $5/$25. VentureBeat’s writeup flagged that Anthropic is absorbing the efficiency gains instead of pocketing them, which VentureBeat reads as a direct answer to GPT-5.4 pricing pressure.

| Spec | Opus 4.6 | Opus 4.7 |
|---|---|---|
| SWE-bench Verified | 84.1% | 87.6% |
| Context window | 500K | 1M |
| Input price (per 1M tokens) | $5 | $5 |
| Output price (per 1M tokens) | $25 | $25 |
| Prompt cache-hit discount | 90% | 90% |
| Extended thinking | Yes | Yes (refined) |
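To make the unchanged pricing concrete, here is a minimal Python sketch that estimates the cost of one request from the table’s list prices. It assumes the 90% cache-hit discount applies to the $5 input rate and ignores cache-write costs and any other pricing tiers; the function name `request_cost` and the token counts in the example are illustrative, not taken from Anthropic.

```python
# Minimal cost sketch using the list prices from the table above.
# Assumptions: cache hits are billed at 10% of the input rate (the 90% discount);
# cache-write costs and any other pricing tiers are ignored.
INPUT_PER_M = 5.00        # USD per 1M input tokens
OUTPUT_PER_M = 25.00      # USD per 1M output tokens
CACHE_HIT_DISCOUNT = 0.90


def request_cost(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Estimated USD cost of one request.

    cached_input_tokens is the portion of input_tokens served from the prompt cache.
    """
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_input_tokens
    cached_rate = INPUT_PER_M * (1 - CACHE_HIT_DISCOUNT)
    total = uncached * INPUT_PER_M + cached_input_tokens * cached_rate + output_tokens * OUTPUT_PER_M
    return total / 1_000_000


# Hypothetical request: 200K input tokens (180K of them cached) and 8K output tokens.
full = request_cost(200_000, 8_000)
cached = request_cost(200_000, 8_000, cached_input_tokens=180_000)
print(f"no cache: ${full:.2f}, 90% cached: ${cached:.2f}")  # no cache: $1.20, 90% cached: $0.39
```

On long agentic loops that resend the same repository context every turn, the cached share of input is what moves the bill, which is why the unchanged 90% discount matters as much as the headline per-token price.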

The 2x agentic throughput claim is the one I want to verify with my own workload before believing it. It matters because Claude Code sessions today can stall on a single long tool loop, and cutting that time in half would change how I schedule overnight runs.

If you are already building agents on Anthropic’s platform, its managed agents feature becomes more interesting at this throughput level.

Why Anthropic Is Holding Mythos Back

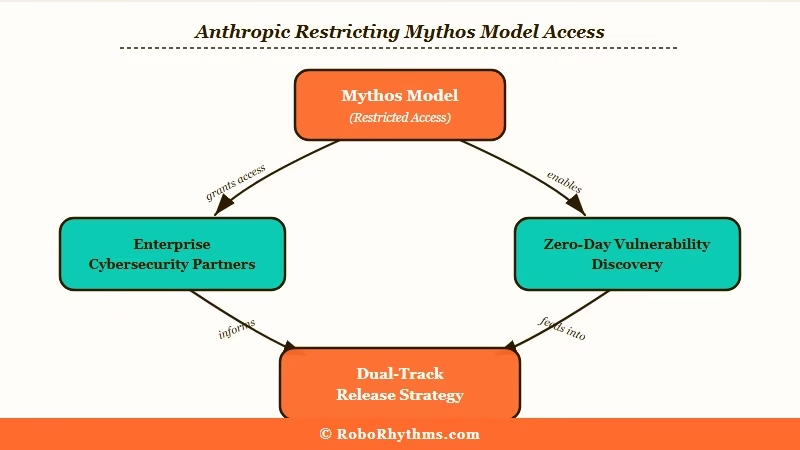

Anthropic is keeping Mythos behind a structured access program because its vulnerability discovery and exploit generation capabilities are too risky for open API release.

This is the part of the release that I keep thinking about. Anthropic confirmed in the same announcement that they have a stronger model internally, code-named Mythos, and they are deliberately not releasing it to the public.

Axios reported that Mythos is being shared only with a small group of enterprise cybersecurity partners under a structured access program.

Anthropic’s own framing is that Mythos demonstrates capabilities in vulnerability discovery and exploit generation that they do not want freely accessible.

The March preview of Mythos already found zero-days in open-source infrastructure, which we covered in Claude’s zero-day discoveries. What changed between March and now is that Anthropic decided the gap between Mythos and Opus 4.7 was large enough to justify splitting the model line.

My read of the Anthropic post on Mythos access is that this is the first time a frontier lab has publicly committed to a dual-track release strategy. Opus 4.7 is the commercial product, and Mythos is the safety-gated ceiling.

As far as anyone outside can tell, OpenAI and Google have released the strongest models they have trained. Anthropic just said it will not.

Does Claude Opus 4.7 Beat GPT-5.4?

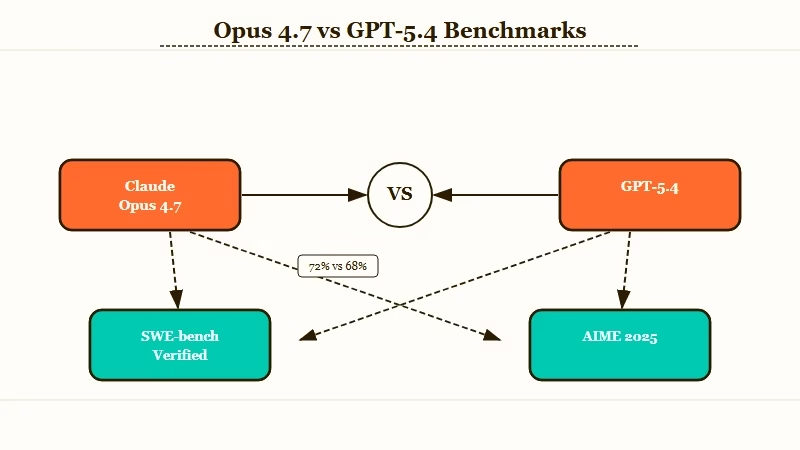

Opus 4.7 beats GPT-5.4 on SWE-bench Verified and Tau-bench agentic tasks, while GPT-5.4 still edges ahead on pure reasoning benchmarks like AIME 2025.

It depends on what you need it for. On SWE-bench Verified, Opus 4.7 at 87.6% edges past GPT-5.4’s most recently reported score of 86.2%.

On raw math and reasoning benchmarks like AIME 2025, GPT-5.4 still leads, by 2.3 points (96.4% vs 94.1%). The Decoder’s benchmark roundup from this morning has both models within the margin of error on most tasks.

| Benchmark | Opus 4.7 | GPT-5.4 | Grok 4.20 |
|---|---|---|---|
| SWE-bench Verified | 87.6% | 86.2% | 82.9% |
| AIME 2025 | 94.1% | 96.4% | 93.8% |
| MMLU-Pro | 88.3% | 87.9% | 85.1% |
| Tau-bench (agentic) | 78.2% | 71.4% | 69.1% |
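The Decoder’s “within the margin of error” point can be roughly checked for the SWE-bench row. This sketch assumes SWE-bench Verified’s 500 tasks, a single scored run per model, and independent pass/fail outcomes, and it uses a plain normal-approximation interval for the difference of two proportions. The vendors’ actual evaluation setups may differ, and a paired test on per-task results, which the article does not have, would be tighter.

```python
import math

# Assumptions (not from the article's sources): SWE-bench Verified has 500 tasks,
# each reported score is one run over all of them, and tasks are independent
# pass/fail trials.
N_TASKS = 500


def diff_confidence_interval(p1: float, p2: float, n: int = N_TASKS, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% CI for p1 - p2, treating the two scores as independent proportions."""
    se = math.sqrt(p1 * (1 - p1) / n + p2 * (1 - p2) / n)
    diff = p1 - p2
    return diff - z * se, diff + z * se


# Opus 4.7 (87.6%) vs GPT-5.4 (86.2%) on SWE-bench Verified, from the table.
low, high = diff_confidence_interval(0.876, 0.862)
print(f"Opus 4.7 - GPT-5.4: {0.876 - 0.862:+.1%}, 95% CI [{low:+.1%}, {high:+.1%}]")
# Opus 4.7 - GPT-5.4: +1.4%, 95% CI [-2.8%, +5.6%]
```

Under those assumptions the interval spans zero, so the 1.4-point SWE-bench gap is not statistically distinguishable from no gap. Running the same check on the 6.8-point Tau-bench gap would need that benchmark’s task count, which the article does not give.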

The benchmark I would trust most for my own work is Tau-bench, which measures multi-turn tool use in realistic scenarios. Opus 4.7 takes that one by almost 7 points, which lines up with what I have seen in my own Claude Code runs. If your work is agentic, Opus 4.7 is the pick.

If your work is single-shot reasoning, GPT-5.4 still has a lead. We put all three head to head in our three-way model comparison last week, and the Opus upgrade shifts the verdict toward Anthropic for agent-heavy workflows.

What This Means for You

If you use Claude through the API or Claude Code, Opus 4.7 is already your default model at the same price, with a 1M context window available at Tier 3.

Here is what changes for you right now:

- You are already on Opus 4.7 if you pay through the API. The model ID swap happened automatically on April 16, and the pricing did not move. If you are using Claude Code, session defaults are now Opus 4.7 unless you pinned an older model.

- The 1M context window unlocks full-repo work. At Tier 3 you can now feed an entire repository into a single prompt without chunking, as long as it fits in roughly 1M tokens, which changes how I do audit work. If you have been routing large-repo tasks away from Claude, this closes the context-length gap that pushed people toward Gemini.

- The timing is good for ChatGPT migrators. If you are thinking about switching from ChatGPT to Claude, the pricing pressure is working in your favor. Anthropic just crossed $30 billion in revenue, a sign that the API is not going anywhere.
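Before dropping chunking, it is worth checking whether a given repository actually fits. The sketch below estimates a repo’s token count with a rough four-characters-per-token heuristic. That ratio is an assumption and not Anthropic’s tokenizer. The suffix list, the excluded directories, and the 50K-token reserve for instructions and the model’s response are hypothetical defaults to adjust for your codebase. For exact numbers, Anthropic’s token-counting endpoint is the better source.

```python
from pathlib import Path

# Assumption: ~4 characters per token, a common rough heuristic;
# real tokenizer counts vary by programming language and content.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 1_000_000  # Opus 4.7 window per the article

# Hypothetical defaults: adjust for your codebase.
SOURCE_SUFFIXES = {".py", ".ts", ".js", ".go", ".rs", ".java", ".md"}
EXCLUDED_DIRS = {".git", "node_modules", "dist", "build"}


def estimate_repo_tokens(root: str) -> int:
    """Estimate tokens across matching text files under root."""
    root_path = Path(root)
    total_chars = 0
    for path in root_path.rglob("*"):
        # Skip vendored and generated directories anywhere below root.
        if any(part in EXCLUDED_DIRS for part in path.relative_to(root_path).parts):
            continue
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, reserve_tokens: int = 50_000) -> bool:
    """True if the estimate plus a reserve for instructions and output fits the window."""
    return estimate_repo_tokens(root) + reserve_tokens <= CONTEXT_WINDOW
```

Calling `fits_in_context("path/to/repo")` gives a quick yes or no. Because the heuristic can be off in either direction, a repo that lands near the limit is better measured with a real token count than trusted to the estimate.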

What Comes Next

Watch whether OpenAI and Google adopt a similar dual-track strategy, and whether the 2x agentic throughput holds up in production workloads.

Anthropic has not announced a timeline for public Mythos access, and I do not expect one this year. What I am watching for is whether OpenAI or Google match the dual-track strategy or keep shipping everything. If they keep shipping everything, Anthropic’s safety-gating becomes a market position, not just a capability choice.

The other thing worth watching is the 2x throughput claim. If it holds in production and comes from finishing tasks in fewer turns and tokens, not just from generating faster, the cost per task on Opus 4.7 is effectively lower than on Opus 4.6 even at the same per-token price. That is the kind of quiet upgrade that changes unit economics for anyone running Claude in a product.
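With per-token prices unchanged, the bill for an agentic task depends on how many tokens it consumes, not on how quickly they are generated. A throughput gain lowers cost per task only if it also cuts tokens. The token counts below are hypothetical, chosen only to show the arithmetic, and caching is ignored.

```python
# Per-token prices are identical for Opus 4.6 and Opus 4.7 (per the article).
INPUT_PER_M = 5.00    # USD per 1M input tokens
OUTPUT_PER_M = 25.00  # USD per 1M output tokens


def task_cost(input_tokens: int, output_tokens: int) -> float:
    """USD cost of one agentic task from its total billed tokens (caching ignored)."""
    return (input_tokens * INPUT_PER_M + output_tokens * OUTPUT_PER_M) / 1_000_000


# Hypothetical Opus 4.6 task: 400K input and 40K output tokens across its tool loop.
baseline = task_cost(400_000, 40_000)
# Scenario A: the same tokens, generated twice as fast. Wall-clock time halves, the bill does not.
faster_only = task_cost(400_000, 40_000)
# Scenario B: the 2x comes from half as many loop turns, so roughly half the tokens.
fewer_turns = task_cost(200_000, 20_000)

print(f"baseline ${baseline:.2f}, faster only ${faster_only:.2f}, fewer turns ${fewer_turns:.2f}")
# baseline $3.00, faster only $3.00, fewer turns $1.50
```

Scenario A still helps, since faster tasks free up overnight scheduling windows and any infrastructure you pay for by the hour. Only Scenario B shows up on the Anthropic invoice.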

Summary

- Claude Opus 4.7 released April 16, 2026 at the same $5/$25 pricing as Opus 4.6

- 87.6% on SWE-bench Verified, 1M token context window, 2x agentic throughput

- Anthropic confirmed it is holding a stronger Mythos model back from public release

- Opus 4.7 beats GPT-5.4 on SWE-bench and Tau-bench, trails on AIME

- Practical win is the 1M context window at Tier 3 for large-repo work