My Take: Opus 4.7 is not a clean upgrade over 4.6. It scores 41.0% on the NYT Connections extended benchmark where 4.6 scored 94.7%, real users are noticing the regression in coding and research tasks, and the simplest explanation is the one Anthropic will not say out loud. The model is cheaper to run, not smarter, and that matters because OpenClaw demand has exceeded what their datacenters can serve at 4.6 quality.

If you only read the Anthropic release post, Opus 4.7 looks like a normal point upgrade. New Research mode, a new Design tool shipped alongside, a couple of benchmark wins. Standard AI lab cadence.

If you read the people who use Opus 4.7 on hard work, the story is the opposite. The top post on r/artificial this week is titled “Opus 4.7 is terrible, and Anthropic has completely dropped the ball.” The top post on r/singularity is a benchmark showing a 54 point drop on NYT Connections.

That is not a release story. That is a regression story, and the mainstream AI press is not touching it.

I covered the Opus 4.7 launch as a straight news item in yesterday’s release post. This piece covers what that one could not say yet, because the user data had not caught up.

The Mainstream View (And Why It Falls Short)

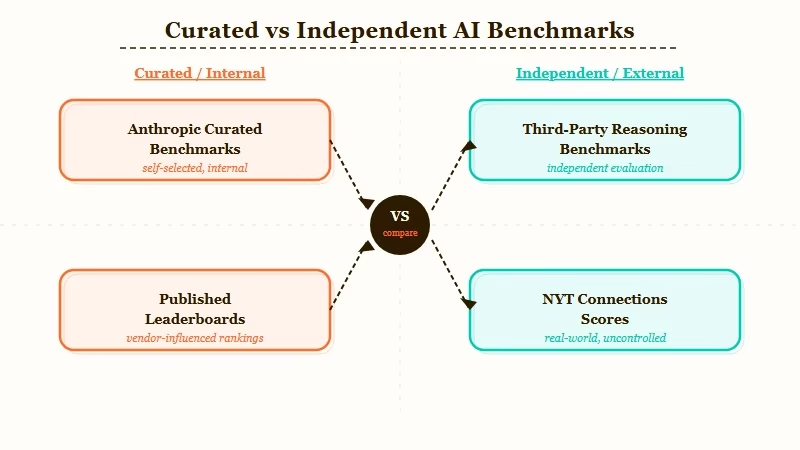

The mainstream view is that Opus 4.7 is a generational improvement over 4.6, pointing to Anthropic’s published benchmarks and the Research mode demo. That view ignores the only benchmarks that matter: the ones real users are running on their own work.

Here is the framing you will see in most coverage. Anthropic’s own release post describes Opus 4.7 as an upgrade with “significant gains in research and multi-step reasoning.” Artificial Analysis has 4.7 narrowly beating 4.6 on their composite while using fewer tokens.

SWE-bench numbers move up. Design, a new Anthropic Labs product, ships the same day.

That set of signals tells a tidy story: Anthropic shipped faster than the field, Figma’s stock dropped 4.26% on the Design launch, and 4.7 is the backbone.

The problem with that story is that it relies only on benchmarks whose selection Anthropic controls. Published benchmarks are a menu. A lab picks which ones to highlight, which ones to leave off, and which ones to claim a win on.

When independent benchmarks come in, the picture changes. The GitHub repository lechmazur/nyt-connections is a third-party puzzle benchmark that the Anthropic marketing team does not curate.

On that benchmark, Opus 4.7 (high) scored 41.0%. Opus 4.6 scored 94.7%.

That is a regression of roughly 54 percentage points (94.7 minus 41.0 is 53.7) on a reasoning benchmark that was not designed around either model. It is the kind of number that does not show up in a launch deck.

The mainstream view is not wrong because it is lying. It is wrong because it is only looking where Anthropic pointed.

What’s Actually Happening

What is really happening is that real power users ran 4.7 on their own tasks, found it regressed on complex reasoning and coding work compared to 4.6, and then found that Anthropic had already quietly regressed 4.6 itself in the weeks before the 4.7 release.

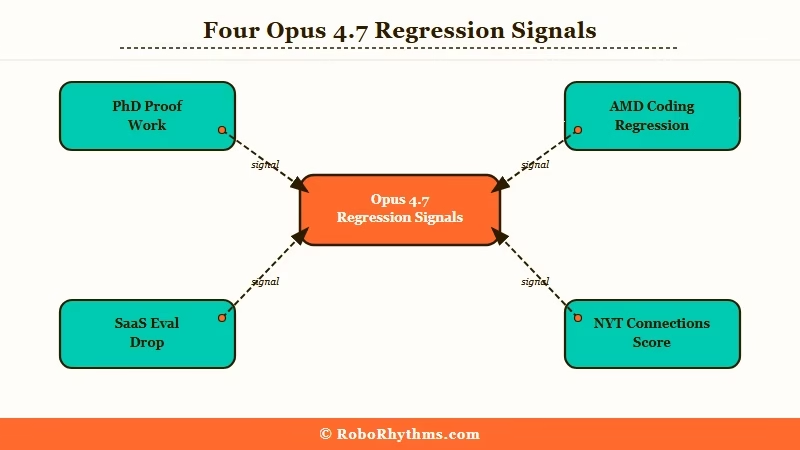

The pattern is specific. Here is what users across r/artificial, r/ClaudeAI, and r/singularity are reporting this week, and what makes their reports credible.

- Proof work spirals. PhD students and researchers describe 4.7 cycling through “oh wait, that doesn’t work, let me try again” five times in a single response on theoretical math and physics work that 4.6 handled cleanly a month ago. This burns through usage limits on the $20 Pro tier.

- Coding regression. AMD’s senior director of AI publicly stated “Claude has regressed” and “cannot be trusted to perform complex engineering.” This is not a random user post. It is a named industry figure on the record.

- Real-world evals drop. One Reddit user ran 4.7 against a saved SaaS-specific reasoning benchmark from before 4.6 regressed. In identical conditions, the pre-regression 4.6 beat 4.7. They published the result on openmark.ai.

- Connections collapse. The NYT Connections benchmark drops from 94.7% to 41.0% between 4.6 and 4.7 on the extended set.

Notice what ties these together. It is not latency or outages.

It is reasoning depth, the one thing that matters on the work people are paying $20 to $200 a month for.

The way I see it, a model can feel slower because of load and still produce the same answers. A model producing worse answers is a different class of problem. It is behavioral regression, and that only happens when the model itself changed.

From what I have seen, this is the point where the cost-pressure explanation stops looking like a conspiracy theory and starts looking like math.

The Part Nobody Wants to Admit

The part nobody wants to admit is that Opus 4.7 is not a pure quality upgrade. It is a quality-for-cost trade. Anthropic is serving more users per GPU, probably through aggressive quantization and system prompt compression, and the loss of fidelity is a price users are paying without having been told.

This is uncomfortable territory, so let me be specific about why the cost story is the most plausible explanation.

The highest-voted comment on the r/artificial backlash thread (137 upvotes) nails it in one sentence. Exploding popularity, OpenClaw demand, datacenters struggling. Anthropic “adaptively nerfing” Opus to keep the servers running until they can build more.

The commenter’s specific claim is that 4.7 is half as expensive to run as 4.6.

That maps to what an ML engineer would do under capacity pressure. Quantization reduces weight precision, cuts VRAM requirements and speeds up inference, and comes at a predictable cost to reasoning depth on hard problems. A heavily quantized 4.7 would look exactly like what users are reporting: faster on easy work, mediocre on proofs, worse on long reasoning chains.
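To make the quantization trade-off concrete, here is a toy sketch of symmetric uniform quantization on random weights. It is a generic illustration of the technique, not a description of anything Anthropic is known to do; the function names, the Gaussian weights, and the bit widths are all assumptions chosen for the example.

```python
import random

def quantize_dequantize(weights, bits):
    """Round weights to signed `bits`-bit integers with one shared scale, then map back to floats."""
    levels = 2 ** (bits - 1) - 1                      # e.g. 127 for 8-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / levels if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]   # integers in [-levels, levels]
    return [q * scale for q in quantized]

def mean_abs_error(original, restored):
    """Average absolute difference between original and restored weights."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)

# Hypothetical stand-in for a layer's weights: 10,000 Gaussian samples.
random.seed(0)
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]

errors = {}
for bits in (16, 8, 4):
    restored = quantize_dequantize(weights, bits)
    errors[bits] = mean_abs_error(weights, restored)
    print(f"{bits:>2}-bit: {bits / 8:.1f} bytes per weight, "
          f"mean abs error {errors[bits]:.6f}")
```

Each halving of the bit width halves the memory per weight and increases the rounding error. Whether that error shows up as worse reasoning in a real model depends on the quantization scheme and the model, which this toy does not capture.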

It also explains a detail that would otherwise be strange. The 4.6 that shipped three months ago is not the 4.6 available right now.

Users have been reporting 4.6 regressions for the last few weeks. If Anthropic was trialing compute-saving changes on 4.6 to relieve capacity, and those changes became the 4.7 baseline, the release of 4.7 is partially a relabeling exercise.

Dario Amodei has stated publicly that Anthropic is cash positive on inference. If that is true, the economic motive is not margin, it is capacity.

You cannot add datacenter capacity on a 90-day calendar, but you can halve your per-request compute in a model release cycle.

The Mac Mini and Mac Studio shortage this month is the same pressure expressed on the consumer side. Thousands of individual buyers cannot get the hardware they need to run local models because OpenClaw demand ate the supply.

Anthropic is facing the enterprise-scale version of that shortage inside their own cloud, and a model that uses half the GPU time is how you buy yourself six months.

If Anthropic came out and said “4.7 is a cheaper-to-serve release, quality is flat to slightly down on hard tasks, we did this to keep servers running,” I would respect the move.

Trading a point of reasoning quality for 2x concurrency during a capacity crunch is a reasonable business decision. Shipping it as a straight upgrade and hoping power users do not run independent benchmarks is the part that does not hold.

Hot Take

Anthropic is quantizing its way into mediocrity, and the only reason they can get away with calling it a “4.7 release” is that the AI press corps does not run its own evals. If OpenAI had shipped a version of GPT that regressed 54 points on a third-party reasoning benchmark, there would be a week of news cycles about it. Because it is Anthropic, and because the narrative this year is “Anthropic is winning,” nobody wants to write the story.

The version of Opus that made people switch from ChatGPT six months ago is not the version you are paying for today. The branding is the same, the price is the same, and the model is demonstrably worse at the work that justified the price tag. If that is not a story, I do not know what is.

The worst part is what this predicts. If 4.7 is the capacity-management move, 4.8 is the same knob turned further.

The floor for quality is wherever users stop complaining loudly enough to reach the enterprise customers paying Anthropic billions in API revenue. The PhD student on the $20 tier is nowhere near that floor.

If you are running serious work on Opus right now, the honest advice from what I am seeing in the data is this. Keep your own eval set, version-lock it, and run it on every release the day it drops. If 4.7 regressed, 4.8 will too, and “I ran my evals” will be the only defense you have against paying more for less.

Frequently Asked Questions

Is Opus 4.7 really worse than 4.6?

On independent benchmarks like NYT Connections extended, yes: 4.7 scores 41.0% where 4.6 scored 94.7%. On Anthropic’s own benchmarks, 4.7 wins.

Which set you trust depends on whether you think lab-curated benchmarks or user-run third-party benchmarks are more honest. Real-world coding and research reports from power users lean toward the regression story.

Why would Anthropic release a worse model on purpose?

Capacity. OpenClaw and other agent frameworks have exploded usage.

A model that is cheaper to serve per request lets Anthropic handle more concurrent users without adding datacenter capacity, which cannot be added fast. A small reasoning hit in exchange for 2x concurrency is the trade.

How can I tell if my workflow is affected?

Save a set of hard prompts that 4.6 handled well (proofs, multi-step coding tasks, complex research synthesis). Re-run them on 4.7 on the same tier and grade the output yourself. If 4.7 takes more turns or produces shallower answers, you are in the affected segment.
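A saved eval set can be as simple as a list of prompts with a check for each one. The sketch below is one possible shape, under stated assumptions: the prompts are placeholders, the substring check stands in for grading the output yourself, and `fake_old_model` and `fake_new_model` are hypothetical stubs where you would put your own API calls.

```python
from typing import Callable, Dict, List

# Hypothetical eval set: each case pairs a prompt with text a correct answer must contain.
EVAL_SET: List[Dict[str, str]] = [
    {"prompt": "What is 17 * 23?", "must_contain": "391"},
    {"prompt": "Name the capital of Australia.", "must_contain": "Canberra"},
]

def pass_rate(model: Callable[[str], str], cases: List[Dict[str, str]]) -> float:
    """Fraction of cases whose model output contains the expected text."""
    passed = sum(1 for c in cases if c["must_contain"] in model(c["prompt"]))
    return passed / len(cases)

def compare(old: Callable[[str], str], new: Callable[[str], str],
            cases: List[Dict[str, str]], tolerance: float = 0.0) -> Dict[str, object]:
    """Run both models on the same cases and flag a drop larger than `tolerance`."""
    old_rate = pass_rate(old, cases)
    new_rate = pass_rate(new, cases)
    return {"old": old_rate, "new": new_rate,
            "regressed": new_rate < old_rate - tolerance}

# Stand-in models with canned answers; replace with real calls to each model version.
def fake_old_model(prompt: str) -> str:
    answers = {"What is 17 * 23?": "17 * 23 = 391",
               "Name the capital of Australia.": "Canberra"}
    return answers.get(prompt, "")

def fake_new_model(prompt: str) -> str:
    answers = {"What is 17 * 23?": "17 * 23 = 381",   # deliberately wrong
               "Name the capital of Australia.": "Canberra"}
    return answers.get(prompt, "")

result = compare(fake_old_model, fake_new_model, EVAL_SET)
print(result)
```

Keep the case list under version control and do not edit it between releases, so a change in the pass rate reflects the model and not the test.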

Should I downgrade back to 4.6?

If you are on the API, 4.6 is still accessible and costs more per token, and that premium is worth paying for research-grade work. In the Claude chat app, you cannot pin 4.6 unless you are on the top tier, which is part of the leverage.

Is this going to get better with 4.8?

Probably not without capacity relief. If Anthropic is tuning for cost-per-request under demand pressure, the direction of travel is more of the same until either datacenter capacity catches up or users leave for competitors in volume.

Does this affect Sonnet and Haiku the same way?

The complaints are concentrated on Opus because Opus is the tier people pay for reasoning depth. Sonnet and Haiku regressions would be harder to notice because they are not sold on that axis, and the public benchmarks on them are thinner.
