What Happened: Claude Code has been consuming tokens at abnormally high rates since March 23, 2026, with some users burning through their entire 5-hour session limit in under 90 minutes. A broken prompt caching system is the likely cause, though Anthropic has officially described it as intentional capacity management.

If you have been hitting your Claude Code usage limit far faster than usual this week, you are not imagining it. Starting around March 23, users across every subscription tier began reporting that their token budgets were evaporating at rates that made the tool nearly unusable during working hours.

People paying $100 or $200 per month for Max plans were watching five-hour session windows disappear in 90 minutes on identical workloads they had been running for weeks without issue. One user posted to the Claude AI Community that a single prompt consumed 7% of their five-hour Max 5x session. Others documented individual prompts jumping the usage meter from 21% straight to 100%.

The numbers do not add up unless something fundamental changed in how Claude counts or reuses tokens. From what I have pieced together across GitHub issues, Reddit threads, and Anthropic’s own public statement, here is what is going on.

What Happened to Claude Code’s Rate Limits in March 2026?

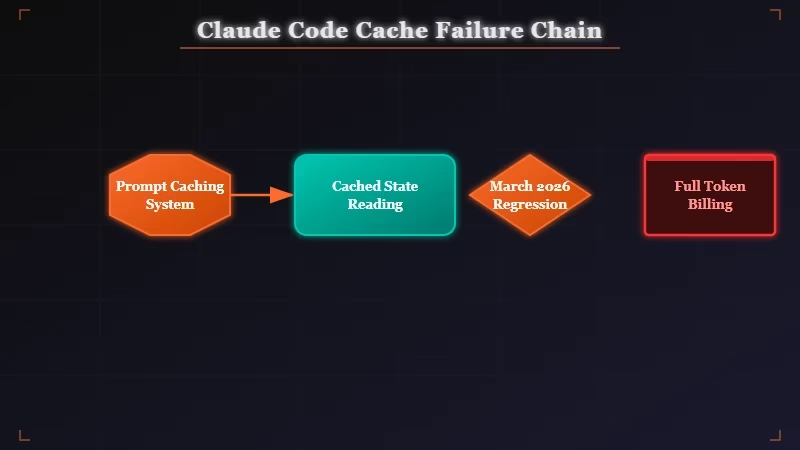

Claude Code's rate limit drain most likely comes from a broken prompt caching system that forces the model to reprocess conversation history at full token cost on every turn instead of reading it from cache.

What is prompt caching: A system that stores the computational state of prior conversation turns so the model can skip reprocessing them. When working, it makes long sessions dramatically cheaper. When broken, every turn costs as much as a fresh cold start.

Claude Code is supposed to store key-value (KV) cache states from each conversation turn. On subsequent turns, the model reads from those cached states rather than recomputing them. This is what makes long agentic sessions economically viable on a subscription plan.
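To make the mechanism concrete, here is a toy model in Python. It is not how Anthropic implements caching. It assumes a cache keyed on the exact prefix of prior messages, uses word counts as a stand-in for tokens, and ignores model replies. The class name ToyPrefixCache and its methods are hypothetical, made up for illustration.

```python
import hashlib


class ToyPrefixCache:
    """Hypothetical prefix cache: a turn is cheap only if the exact prefix of
    prior messages was cached. Word counts stand in for tokens. This is an
    illustration, not Anthropic's implementation."""

    def __init__(self) -> None:
        self._store: set[str] = set()

    @staticmethod
    def _key(messages: list[str]) -> str:
        # Hash the whole prefix, so editing any earlier message changes the key.
        h = hashlib.sha256()
        for m in messages:
            h.update(m.encode("utf-8"))
            h.update(b"\x00")  # separator so ["ab", "c"] and ["a", "bc"] differ
        return h.hexdigest()

    def invalidate(self) -> None:
        # Simulates the reported bug: cached state silently disappears.
        self._store.clear()

    def turn_cost(self, history: list[str], new_message: str) -> int:
        """Tokens billed at full price for one turn."""
        cost = len(new_message.split())
        if history and self._key(history) not in self._store:
            # Cache miss: every prior message is reprocessed at full cost.
            cost += sum(len(m.split()) for m in history)
        # Write the extended prefix so the next turn can read it.
        self._store.add(self._key(history + [new_message]))
        return cost


if __name__ == "__main__":
    cache = ToyPrefixCache()
    history: list[str] = []
    for i, msg in enumerate(["load the whole repo context", "fix the bug", "add a test"]):
        if i == 2:
            cache.invalidate()  # pretend the cache breaks before turn 3
        print(f"turn {i + 1}: {cache.turn_cost(history, msg)} tokens")
        history.append(msg)
```

With the cache intact, turn 3 would cost 3 units. After invalidate() it costs 11, because both earlier messages are billed again on top of the new one.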

According to GitHub issue #40524, the bug causes conversation history to get “invalidated” unexpectedly mid-session. The cache write happens, but the read fails, and the model bills you for full reprocessing of every prior message. In the worst documented case, a user recorded cache reads consuming 99.93% of their quota at a rate of 1,310 cache reads per 1 I/O token.
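If you have per-turn usage numbers, a ratio like the one in #40524 is easy to compute yourself. The sketch below assumes records shaped like the usage object returned by Anthropic's Messages API (input_tokens, output_tokens, cache_creation_input_tokens, cache_read_input_tokens); whether and where Claude Code exposes these per turn is an assumption, and the sample record is hypothetical, not real data. The share it reports is a share of raw tokens, not of price-weighted quota.

```python
from typing import Iterable, Mapping


def cache_read_stats(usages: Iterable[Mapping[str, int]]) -> tuple[float, float]:
    """Return (cache-read tokens per I/O token, cache-read share of all tokens)."""
    reads = creates = io = 0
    for u in usages:
        reads += u.get("cache_read_input_tokens", 0)
        creates += u.get("cache_creation_input_tokens", 0)
        io += u.get("input_tokens", 0) + u.get("output_tokens", 0)
    total = reads + creates + io
    ratio = reads / io if io else float("inf")
    share = reads / total if total else 0.0
    return ratio, share


if __name__ == "__main__":
    # Hypothetical per-turn usage record for illustration only.
    turns = [
        {"input_tokens": 6, "output_tokens": 4, "cache_read_input_tokens": 13_100},
    ]
    ratio, share = cache_read_stats(turns)
    print(f"{ratio:.0f} cache-read tokens per I/O token, {share:.2%} of all tokens")
```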

The session resume feature is also broken. Using the --resume flag to continue a previous conversation should reload cached context cheaply. Instead, users report it triggers a full reprocess of the entire prior session at the original cost.

Why Is Claude Code Burning Through Tokens So Fast?

Token overconsumption in Claude Code appears to stem from cache invalidation firing on every turn, which means 200,000-token project contexts get billed repeatedly instead of once.

The way I think about it: imagine loading your entire codebase into a session. With working caching, that context is charged once, and subsequent turns cost only your new messages and the model's replies.

With cache invalidation broken, every single turn re-bills the full 200,000 tokens. Three or four turns in and your five-hour window is gone.
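The arithmetic behind that is simple enough to write down. This is a simplified model that follows the description above: a working cache bills the context once, a broken one bills it every turn. It ignores the fact that real cache reads are discounted rather than free, and the 2,000 tokens of new content per turn is a made-up figure.

```python
def billed_tokens(context: int, per_turn: int, turns: int, cache_working: bool) -> int:
    """Total tokens billed at full rate over a session (simplified model).

    Working cache: the context is charged once, then each turn adds only
    `per_turn` new tokens. Broken cache: the full context is re-billed on
    every turn alongside the new tokens.
    """
    if turns <= 0:
        return 0
    if cache_working:
        return context + per_turn * turns
    return (context + per_turn) * turns


if __name__ == "__main__":
    # 200,000-token project context; 2,000 new tokens per turn is hypothetical.
    for working in (True, False):
        total = billed_tokens(200_000, 2_000, turns=4, cache_working=working)
        label = "working cache" if working else "broken cache"
        print(f"{label}: {total:,} tokens after 4 turns")
```

Under these assumptions, four turns cost 208,000 tokens with a working cache and 808,000 with a broken one, roughly four times as much.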

This hits hardest on the use cases Max plan users specifically paid to support: large codebases, long system prompts, and multi-step agentic workflows with tool calls. The users spending $100 to $200 a month are exactly the ones running the session types most exposed to this bug.

Here is a quick reference for diagnosing what you are seeing:

| Symptom | Most Likely Cause | What to Try |
|---|---|---|
| One prompt burns 5-15% of session | Cache invalidation mid-session | Restart the session, skip --resume |
| Session gone in under 2 hours | Peak-hour throttle stacking with cache bug | Shift work to off-peak hours |
| --resume costs as much as a new session | Session resume cache regression | Avoid --resume until patched |
| Steady drain that tracks your workload | Normal usage, no bug present | No action needed |

Is This a Bug or Did Anthropic Change Something on Purpose?

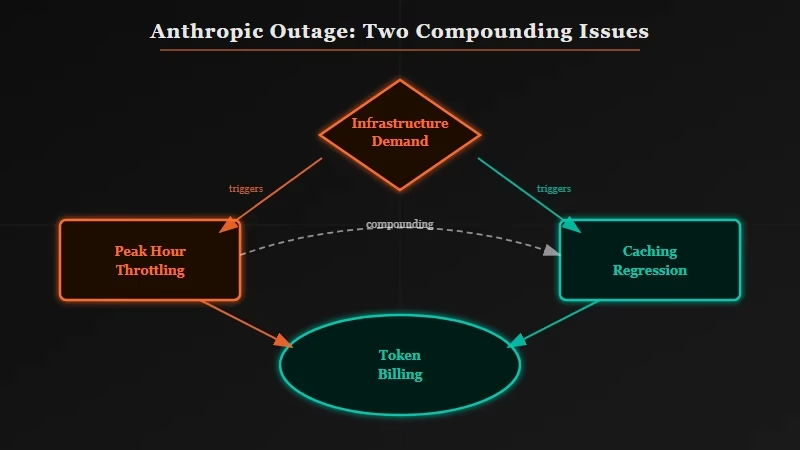

The Claude Code rate limit issue is both a known caching regression and an intentional throttle that Anthropic announced on March 26, 2026.

On March 26, Thariq Shihipar from Anthropic’s technical team posted that the company is “intentionally adjusting 5-hour session limits to manage growing demand.”

During peak hours (5am-11am PT on weekdays), users will “move through session limits faster than before.” The announcement acknowledged that around 7% of users would hit limits they had not hit before.

The framing here is worth examining closely. Anthropic described this as a capacity decision and mentioned “efficiency wins” that partially offset the impact. It also recommended shifting “token-intensive background jobs” to off-peak hours, which is advice that only makes sense if working hours have gotten substantially more expensive.
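If you schedule background jobs, a small check for the peak window helps. This sketch encodes the window as stated in the announcement (5am-11am PT, weekdays); treating 11:00 as the exclusive end is my assumption, and the function name is hypothetical.

```python
from datetime import datetime, time
from zoneinfo import ZoneInfo

# Peak window as stated in Anthropic's March 26 announcement: 5am-11am PT, weekdays.
# Treating 11:00 as the exclusive end is an assumption.
PACIFIC = ZoneInfo("America/Los_Angeles")
PEAK_START = time(5, 0)
PEAK_END = time(11, 0)


def is_peak(moment: datetime) -> bool:
    """Return True if a timezone-aware datetime falls in the weekday peak window."""
    if moment.tzinfo is None:
        raise ValueError("pass a timezone-aware datetime")
    local = moment.astimezone(PACIFIC)  # converts to PST or PDT as appropriate
    if local.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        return False
    return PEAK_START <= local.time() < PEAK_END


if __name__ == "__main__":
    now = datetime.now(tz=PACIFIC)
    print("peak" if is_peak(now) else "off-peak")
```

Converting to America/Los_Angeles rather than a fixed UTC offset keeps the window correct across daylight saving changes.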

Both MacRumors and The Register noted in their coverage that Anthropic’s statement did not address the underlying caching regression at all. From my read of the GitHub threads, the intentional throttling and the caching bug are two separate problems active at the same time. When both hit simultaneously, a $100/month Max subscriber can end up with the effective token capacity of a free tier user.

Claude also suffered five major platform outages during March 2026, which suggests that real infrastructure strain is contributing to both issues.

What Should I Do About My Claude Code Rate Limit Problem?

The most reliable fix for Claude Code rate limit drain right now is shifting intensive work to off-peak hours and breaking large sessions into shorter independent runs.

From what I have tracked in the GitHub threads and Reddit discussions, here is the sequence I would walk through first:

- Shift intensive tasks to off-peak hours. Off-peak is outside 5am-11am PT on weekdays. Overnight runs and weekend sessions avoid the peak-hour throttling entirely and appear to see less severe cache issues.

- Break large projects into smaller sessions. Cache invalidation compounds over longer conversations. Shorter, discrete sessions with fresh starts limit how much context gets reprocessed per billing cycle.

- Avoid the --resume flag for now. Session resumption triggers the full context reprocessing bug. Restructure workflows to avoid relying on it until a patch ships.

- Monitor your usage meter in the first few turns. If a single short message burns more than 3-5% of your session, the cache has likely already been invalidated. Restart rather than burn through the remaining quota.

- Check your version. Some users report that builds prior to v2.1.78 show less severe cache behavior, though downgrading is not officially supported and comes with tradeoffs.
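The meter check from the list above can be expressed as a tiny rule. This sketch assumes you note the usage meter percentage by hand before the first turn and after each turn (I am not assuming any programmatic access to the meter), and it only makes sense for short messages. The 5% default is the top of the 3-5% range; the function name is hypothetical.

```python
from typing import Optional, Sequence


def first_suspicious_turn(
    meter: Sequence[float], threshold: float = 5.0, check_turns: int = 3
) -> Optional[int]:
    """Return the 1-based turn whose meter jump exceeds `threshold` percentage
    points within the first `check_turns` turns, or None if none does.

    meter[0] is the reading before turn 1; meter[i] is the reading after turn i.
    """
    for turn in range(1, min(len(meter), check_turns + 1)):
        jump = meter[turn] - meter[turn - 1]
        if jump > threshold:
            return turn
    return None


if __name__ == "__main__":
    # Readings like the 21% -> 100% jump users reported.
    readings = [21.0, 22.0, 100.0]
    turn = first_suspicious_turn(readings)
    if turn is not None:
        print(f"turn {turn} jumped the meter; restart the session")
```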

If you are using Claude Code in an n8n workflow or automation pipeline, this bug is particularly disruptive since long-running agentic tasks are exactly the use case most exposed to cache invalidation. Restructuring those workflows to use shorter, stateless sessions where possible is worth the effort until a patch arrives.

Also check your Claude Code skills configuration if you are running custom skills with long system prompts. System prompts appear to cache more reliably than conversation history in the current broken state, but large skill sets still add to the base token cost per turn.

What Comes Next for Claude Code Users?

Claude Code users should expect continued instability during peak hours until Anthropic patches the caching regression, with no official timeline given as of March 31, 2026.

The GitHub issues tracking this are accumulating fast. Issue #40524 has the most detailed technical documentation, and issues #27048 and #41200 track related cache invalidation patterns. The volume of developer attention on these threads makes a patch likely in the near term, but “soon” from a company managing five major outages in a single month is hard to predict with confidence.

Anthropic’s broader situation in March 2026 provides useful context here. This is a company under real infrastructure pressure from surging demand, and some of the current issues appear to be direct consequences of that pressure.

The Max plan economics break down when caching fails. A Max 5x user paying $100/month expected roughly 88,000 tokens per five-hour window. With cache invalidation firing on every turn, they might get a tenth of that on complex projects.

Watch Anthropic’s changelog and Claude Code GitHub releases for cache-related fixes. When a fix ships, test your specific session types to confirm the behavior has changed before resuming your normal workflow.