TL;DR: In a head-to-head comparison posted to r/LangChain, SmolVM came out on top for AI agent sandboxes in 2026. It was the only option to pass all six tested criteria, beating E2B, OpenSandbox, and Microsandbox on fork/clone, pause/resume, and computer-use support. This breakdown covers how each option performs on the criteria that matter for production agents.

The question I see most in AI builder communities right now is not which model to use. It is which sandbox to run your agent in without it collapsing in production.

A developer in r/LangChain posted a head-to-head comparison of four options: SmolVM, OpenSandbox, Microsandbox, and E2B. The post triggered a solid thread, and the community added Daytona and Modal to the mix.

I dug through the full comparison to pull out what matters for teams moving agents from prototype to live.

Picking the wrong sandbox costs more than setup time. Snapshotting failures, cold starts, and missing computer-use support are the kind of problems that only surface after you have built your agent around a specific runtime. Getting this right early matters.

What Separates a Good AI Agent Sandbox From a Bad One

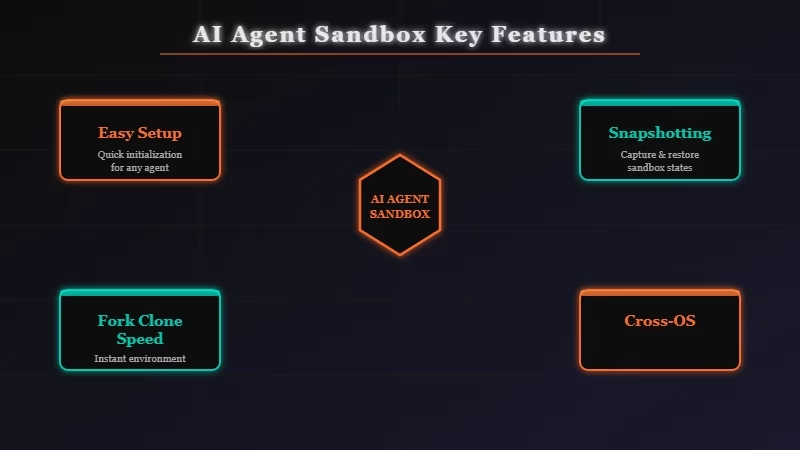

The six features that determine whether a sandbox works for production AI agents are easy setup, snapshotting, fork/clone speed, pause/resume support, cross-OS compatibility, and computer-use support. Most sandboxes handle setup fine. The gaps appear everywhere else.

From what I have seen in the community, most developers pick a sandbox based on documentation quality or GitHub stars. Both are reasonable signals, but neither tells you whether pause/resume works reliably or whether computer-use support is stable enough for a long-running agent task.

The developer behind this comparison set a clear testing framework: six criteria, four sandboxes, binary pass/fail plus notes on edge cases. That kind of structure cuts through the marketing.

Worth noting: the author disclosed working on SmolVM directly. That is not disqualifying, but it means the E2B and Microsandbox results deserve a closer read. Several commenters pushed back on the methodology, and cold-start timing numbers should be verified against your own workload before committing.

How Each Sandbox Stacks Up Across the Features That Matter

SmolVM is the only sandbox that passes all six criteria, with its clearest advantages in fork/clone, pause/resume, and computer-use support. E2B fails every criterion except easy setup in this test, but it has the most mature ecosystem and the deepest SDK support across languages.

Here is how each sandbox tested across the six criteria:

| Sandbox | Easy Setup | Snapshotting | Fork/Clone | Pause/Resume | Cross-OS | Computer-Use |
|---|---|---|---|---|---|---|
| SmolVM | Yes | Yes | Yes | Yes | Yes | Yes |
| OpenSandbox | Yes | Yes | Partial | No | Yes | No |
| Microsandbox | Yes | Partial | No | No | Yes | No |
| E2B | Yes | No | No | No | No | No |

The table is based on the r/LangChain comparison thread from April 2026. Commenters flagged that E2B has been improving its snapshotting support in recent builds, so that row may shift as the library evolves.

E2B’s GitHub repository has over 7,000 stars, which tells you it has broad community adoption even if it lags on features here.

For most agent setups, snapshotting and fork/clone are the two features that determine whether your agent can recover from a mid-task failure without restarting cold.

OpenSandbox is close to SmolVM here, and several builders in the thread prefer it precisely because there is no conflict-of-interest question about who built it.

Computer-use support matters if your agent is navigating a browser or interacting with a GUI. Only SmolVM passed that test in this comparison.

If that is not on your roadmap, the decision between SmolVM and OpenSandbox becomes much closer.
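To make that trade-off concrete, here is a minimal sketch that encodes the pass/fail table as data and filters it by the features your agent needs. The `RESULTS` dictionary, the short feature keys, and the `candidates` helper are hypothetical names introduced for illustration. The values are transcribed from the table above and carry the same caveats as the thread itself.

```python
# Pass/fail results transcribed from the comparison table.
# Feature keys are shorthand invented for this sketch.
FEATURES = ["setup", "snapshot", "fork", "pause_resume", "cross_os", "computer_use"]

RESULTS = {
    "SmolVM":       ["yes", "yes",     "yes",     "yes", "yes", "yes"],
    "OpenSandbox":  ["yes", "yes",     "partial", "no",  "yes", "no"],
    "Microsandbox": ["yes", "partial", "no",      "no",  "yes", "no"],
    "E2B":          ["yes", "no",      "no",      "no",  "no",  "no"],
}


def candidates(required, allow_partial=False):
    """Return sandboxes that pass every required feature, in table order."""
    accepted = {"yes", "partial"} if allow_partial else {"yes"}
    matches = []
    for name, row in RESULTS.items():
        scores = dict(zip(FEATURES, row))
        if all(scores[feature] in accepted for feature in required):
            matches.append(name)
    return matches


# Without a computer-use requirement, two options remain.
no_gui = candidates(["snapshot", "cross_os"])
# Adding computer-use narrows the field to one.
with_gui = candidates(["snapshot", "computer_use"])
```

Keeping "Partial" as its own value lets you decide how strict to be: `candidates(["snapshot", "fork"], allow_partial=True)` keeps OpenSandbox in the running, while the default strict mode drops it.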

How to Deploy SmolVM for Your First AI Agent

To deploy SmolVM for an AI agent, install the package, configure a snapshot policy before any agent logic runs, and test fork/clone behavior before pushing to production. The core setup takes under 20 minutes for a basic agent.

Here is the deployment sequence from the comparison thread:

- Install SmolVM: `pip install smolvm`
- Initialize a VM instance with your base environment: `vm = SmolVM(image="ubuntu-22.04")`
- Run your agent setup commands inside the VM (install dependencies, configure env vars)
- Take a snapshot before your agent starts its task loop: `snapshot = vm.snapshot()`
- On task failure, restore from snapshot instead of restarting cold: `vm.restore(snapshot)`
- For parallel agent runs, fork from the existing snapshot: `vm2 = snapshot.fork()`

The fork-on-snapshot pattern is the main reason SmolVM leads this comparison. Parallel agent runs sharing a common base state cut cold-start overhead significantly, especially for agents doing repeated web research or document processing.
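The control flow behind that pattern can be sketched without a real sandbox. The classes below are hypothetical in-memory stand-ins, not the SmolVM API: only the method names `snapshot()`, `restore()`, and `fork()` are borrowed from the steps in the thread, and `run_with_restore` is a helper invented for this example. Swap in your actual runtime's objects once you have confirmed their signatures.

```python
import copy


class StandInSnapshot:
    """Hypothetical stand-in: a frozen copy of VM state."""

    def __init__(self, state):
        self._state = copy.deepcopy(state)

    def fork(self):
        # Each fork gets its own copy of the captured state.
        return StandInVM(copy.deepcopy(self._state))


class StandInVM:
    """Hypothetical in-memory stand-in for a sandbox VM."""

    def __init__(self, state=None):
        self.state = state if state is not None else {"packages": [], "files": {}}

    def snapshot(self):
        return StandInSnapshot(self.state)

    def restore(self, snapshot):
        self.state = copy.deepcopy(snapshot._state)


def run_with_restore(vm, snapshot, task, max_attempts=3):
    """Run task(vm, attempt); on failure, roll back to the snapshot and retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task(vm, attempt)
        except RuntimeError:
            vm.restore(snapshot)  # discard partial work instead of restarting cold
    raise RuntimeError(f"task failed after {max_attempts} attempts")


# Setup: install dependencies first, then capture the base state.
vm = StandInVM()
vm.state["packages"].append("requests")
base = vm.snapshot()

seen_at_start = []  # what the task finds in the VM at the start of each attempt


def flaky_task(vm, attempt):
    seen_at_start.append(dict(vm.state["files"]))
    vm.state["files"]["scratch.txt"] = "partial output"
    if attempt == 1:
        raise RuntimeError("simulated mid-task failure")
    return sorted(vm.state["files"])


result = run_with_restore(vm, base, flaky_task)

# Parallel runs: every worker forks from the same base snapshot.
workers = [base.fork() for _ in range(3)]
workers[0].state["files"]["a.txt"] = "only in worker 0"
```

The second attempt starts from a clean file set because the restore discarded the first attempt's partial output, and a write in one forked worker does not leak into the others. A real runtime would get that isolation from copy-on-write disk and memory rather than `deepcopy`, but the calling pattern is the same.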

If you are building something more complex, Dynamiq handles the orchestration layer on top of the sandbox runtime, so you are not managing agent state and sandbox state separately. Worth looking at if the setup above starts getting complicated fast.

One thing I would add from testing: take your snapshot after all dependencies are installed but before any tool initialization. Snapshotting mid-setup adds bloat to the restore operation and slows down fork spawning on subsequent runs.

When to Skip SmolVM and Use Something Else

Use E2B for mature SDK support. Use Modal for stateless serverless tasks. Use OpenSandbox if you need snapshotting but not pause/resume or computer-use, and you want to avoid the conflict-of-interest concern.

E2B has been in production use longer than anything else on this list. Its SDK ecosystem is broader, and the LangChain community has more documented setups with it. If your agent is a simple function-execution pattern with no computer-use requirement, the maturity argument for E2B is real.

Modal came up in the thread as a different category: serverless, no persistent VM state between runs, but fast cold starts and simple billing. Modal fits short-lived agent tasks cleanly but breaks down for anything that needs to pause mid-task and resume later.

I covered the infrastructure gaps that kill production agents in more detail in why AI agents keep failing. Most of the failure modes trace back to the features in this comparison: state management, recovery from partial completion, and environment consistency across runs.

If you are working through the broader production setup question, the Anthropic Managed Agents breakdown covers how Anthropic is trying to abstract the infrastructure layer entirely. For teams that would rather not manage sandbox choice at all, that is worth reading before committing to any of the options above.

The production RAG pipeline guide is also relevant if your agent is retrieval-heavy. Sandbox choice affects RAG agent performance differently than it affects pure task-execution agents, mainly because of how document state gets managed across retrieval steps.

Frequently Asked Questions

What is the best sandbox for AI agents in 2026?

SmolVM ranked first in a direct head-to-head comparison of four sandboxes. It was the only one to pass all six criteria, including fork/clone, pause/resume, and computer-use support, making it the strongest pick in that test for production agents that need to recover from mid-task failures.

Is E2B still worth using for agent deployment?

Yes, E2B is worth using if your team has existing tooling around it or needs mature Python and TypeScript SDK support. It lags on snapshotting and computer-use features but has the broadest community ecosystem of any sandbox on this list.

What is the fork-on-snapshot pattern for AI agents?

The fork-on-snapshot pattern means taking a VM snapshot after your agent environment is configured, then forking from that snapshot for each parallel agent run. This avoids cold-start overhead on repeated runs and lets agents recover from mid-task failures without restarting from scratch.

Does SmolVM work for computer-use AI agents?

Yes, SmolVM is the only sandbox in this comparison that supports computer-use out of the box. If your agent navigates a browser or interacts with a GUI, SmolVM is the only tested option that passes that requirement.

When should I use Modal instead of SmolVM?

Modal is a serverless platform, not a persistent VM sandbox. Use Modal for short-lived, stateless agent tasks where losing VM state between runs is acceptable. It is the wrong choice for agents that need to pause mid-task, restore state, or fork into parallel runs.