Quick Answer: Anthropic is suing the US government after the Pentagon blacklisted the company as a “supply chain risk” for refusing to let the military use Claude for autonomous weapons and mass surveillance. A federal court hearing is scheduled for March 25, 2026. Microsoft and groups of OpenAI and Google DeepMind employees have filed briefs supporting Anthropic.

The Pentagon tried to bury Anthropic by putting it on a national security blacklist. Tomorrow, that decision goes in front of a judge.

Federal court in San Francisco hears arguments on March 25 in a case that pits one of America’s most advanced AI companies against its own government.

Judge Rita Lin will consider whether to grant Anthropic temporary relief after it was designated a “supply chain risk,” a label historically reserved for foreign adversaries like Huawei, not American startups.

From where I’m sitting, this is the most significant legal battle in AI right now. The dispute has drawn in Microsoft, retired military generals, and dozens of researchers from OpenAI and Google.

If you use Claude, care about who controls AI, or just follow the space, this one matters.

What Actually Happened

The Anthropic blacklist is the result of a contract negotiation that fell apart over two specific redlines.

In February 2026, the Trump administration severed ties with Anthropic after the two sides couldn’t agree on how the Pentagon could use Claude.

Anthropic wanted two guarantees in the contract: that Claude would not be used for autonomous lethal weapons without human oversight, and that it would not be used for domestic mass surveillance of Americans. The Department of War refused both.

The Pentagon’s position was that it needed to use Claude for “all lawful purposes” without a private company being able to veto specific applications during a national security emergency.

Anthropic’s argument was that it needed to retain some oversight over its own model.

When talks broke down, the Pentagon didn’t just cancel the contract. It issued a formal “supply chain risk” designation against Anthropic, which requires all defense contractors and vendors to certify that they are not using Claude in any work for the US military.

That’s the same category of label historically used for Huawei and ZTE, companies with alleged ties to foreign governments. Using it on an American startup is, as Anthropic’s lawsuit puts it, “unprecedented and unlawful.”

Here’s a quick breakdown of who is saying what in this case:

| Party | Position | Action taken |
|---|---|---|
| Anthropic | Designation unlawful, violates First Amendment | Filed two lawsuits on March 9 |
| Department of War | Needs unrestricted access to Claude for national security | Issued supply chain risk designation |
| Microsoft | Designation should be blocked pending review | Filed amicus brief urging restraining order |
| OpenAI + Google DeepMind employees (30+) | Blacklist harms entire US AI industry | Filed joint amicus brief led by Google chief scientist Jeff Dean |
| Sen. Elizabeth Warren | Designation appears retaliatory | Sent letter to DOD on March 23 |
| 22 retired military officers | Some limits on AI use are worth preserving | Co-signed Microsoft’s brief |

Anthropic filed two lawsuits on March 9, one in the US District Court for the Northern District of California and one in the federal appeals court in Washington.

The Northern California case was fast-tracked from an April 3 date to March 25 at Anthropic’s request.

This Is a Bigger Deal Than It Sounds

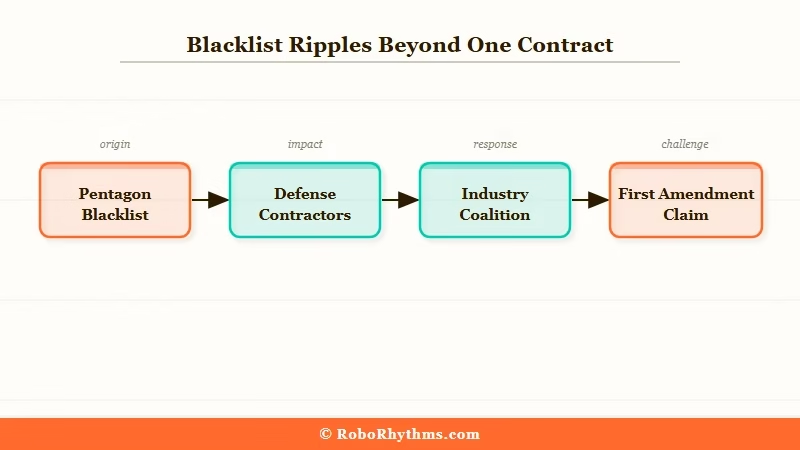

This case is not really about one contract. The supply chain risk designation doesn’t just cancel Anthropic’s direct Pentagon deal. It ripples through every defense contractor, cloud provider, and federal vendor that touches US military work.

If companies like Palantir, AWS, or Microsoft cannot use Claude in defense projects, the commercial damage to Anthropic extends well beyond one $200 million contract.

The company says “hundreds of millions of dollars” in revenue are at risk.

Fortune’s analysis of the case frames the geopolitical tension well: Chinese labs have allegedly obtained distilled versions of both Claude and GPT models, meaning adversaries can use American AI with zero guardrails while American companies restrict themselves.

The irony of weakening Anthropic precisely when the China AI race is accelerating is not lost on the 30+ researchers from OpenAI and Google DeepMind who filed a supporting brief.

Google chief scientist Jeff Dean signed it, warning that punishing a leading US AI company “will have consequences for the United States’ industrial and scientific competitiveness in the field of artificial intelligence and beyond.”

What I find most interesting legally is that Anthropic is not just challenging the designation on procedural grounds.

It’s arguing that the Pentagon violated its First Amendment rights by blacklisting the company in retaliation for publicly stating its ethical positions.

If that argument holds, it sets a precedent for how the government can or cannot pressure AI companies that take public stances on military use.

Senator Warren’s letter to the DOD on March 23 adds another dimension. She is now calling the blacklist “apparent retaliation,” a sign that the dispute is beginning to attract congressional oversight.

What started as a contract dispute has become a story about government accountability over AI.

For context on how AI agents are being deployed in practice right now, this piece on AI agents for normal users in 2026 shows just how central these tools have become.

And if you want background on why OpenAI taking the Pentagon contract instead of Anthropic matters commercially, this breakdown of how OpenAI reshaped the automation market covers the competitive dynamics in detail.

What This Means for You

If you use Claude, this case directly affects the long-term viability of the product you depend on.

Anthropic’s ability to operate commercially is tied to whether this designation gets overturned. If defense contractors and federal vendors are locked out of Claude, that’s a significant revenue hit that could affect investment, hiring, and the pace of model development.

The broader question here is who gets to set ethical limits on powerful AI. Anthropic’s argument is that a private company should be able to draw lines around how its technology is used, even with government clients.

The Pentagon’s argument is that the government cannot cede that authority to a company when national security is involved.

This debate will keep coming back as AI gets more capable. The precedent set here will shape how every major AI lab negotiates government contracts going forward.

If Anthropic loses, expect every AI company to quietly drop its ethical redline policies on military use. If Anthropic wins, expect future contracts to include far more explicit constraints, which is probably good for everyone in the long run.

Here’s what to watch from a practical standpoint:

- Claude availability in enterprise tools: Palantir has said it’s still using Claude while the case plays out. If the designation is upheld, those integrations could be forced to stop.

- Government AI vendor consolidation: OpenAI signed the Pentagon contract that Anthropic refused. If this case weakens Anthropic, OpenAI becomes the dominant government AI vendor, which reshapes competition significantly.

- Your own AI use: Nothing changes for direct consumers right now. Claude apps, API access, and personal subscriptions are unaffected. The blacklist targets defense contractors, not end users.

For a broader look at which AI tools are genuinely worth paying for right now, this guide to the best paid AI tools in 2026 has a useful breakdown of where Claude fits in the stack.

What Comes Next

The March 25 hearing is the first real test of whether courts will rein in the Pentagon’s use of supply chain risk designations against domestic companies.

Judge Rita Lin will hear arguments on whether to grant Anthropic a temporary restraining order that would pause the designation while the full case is heard.

If the restraining order is granted, the blacklist gets put on hold and defense contractors can continue using Claude during litigation.

If it’s denied, the designation stays in place and Anthropic has to fight the full case while the damage accumulates.

My read is that Anthropic has a credible case on the First Amendment angle, but the government’s national security argument gives courts reason to be cautious.

The outcome of tomorrow’s hearing will signal which direction this is heading.

After the hearing, watch for:

- Congressional hearings: Warren’s letter signals oversight committees are paying attention

- Other AI companies clarifying their own government contract policies in response

- A potential settlement if the Trump administration wants to avoid an unfavorable First Amendment ruling on AI

- A ruling on the temporary restraining order, expected within days of the hearing

FAQs

Why did the Pentagon blacklist Anthropic?

The Pentagon issued a supply chain risk designation after Anthropic refused to allow Claude to be used for autonomous weapons without human oversight or for domestic mass surveillance. Negotiations broke down in February 2026 when the Department of War demanded unrestricted access for “all lawful purposes.”

What is a supply chain risk designation?

A supply chain risk designation is a formal government label that requires defense contractors to certify that they are not using the flagged company’s products in work for the US military. It has historically been reserved for companies tied to foreign adversaries, like Huawei. Applying it to a US company is, according to Anthropic’s lawsuit, unprecedented.

Who is supporting Anthropic in the lawsuit?

Microsoft filed an amicus brief urging a restraining order, backed by 22 retired military officers. Over 30 employees from OpenAI and Google DeepMind, including Google chief scientist Jeff Dean, filed a separate brief warning the blacklist harms US AI competitiveness.

Does this affect regular Claude users?

No. The designation targets defense contractors, not consumers. Claude apps, API access, and personal subscriptions are unaffected. The impact falls on enterprise and government customers who contract with the US military.

What happens at the March 25 hearing?

Judge Rita Lin will consider whether to grant Anthropic a temporary restraining order that would pause the supply chain designation while the full lawsuit proceeds. If granted, defense contractors could continue using Claude during litigation.

Could Anthropic lose its case?

It’s possible. The government’s argument is that it cannot allow a private company to veto military use of a tool during a national security emergency. That argument has legal merit. The outcome hinges partly on whether courts accept the First Amendment framing Anthropic is pursuing.