My Take: On March 26, 2026, a CMS misconfiguration exposed Anthropic’s most powerful unreleased AI model, Claude Mythos. The company confirmed the model exists and confirmed it won’t be released, because its cybersecurity capabilities are too dangerous. For a company founded to make AI safe, that admission matters more than the data leak.

On March 26, 2026, security researchers Roy Paz of LayerX Security and Alexandre Pauwels of the University of Cambridge found nearly 3,000 Anthropic internal files sitting in an unsecured data cache. The files were publicly accessible through a CMS misconfiguration. Fortune broke the story.

The exposed files included draft blog posts describing an unreleased model called Claude Mythos, internally also known by the codename Capybara. Anthropic’s own draft materials called it “the most powerful AI model we have developed to date” and a “step change” above the current Claude lineup.

When Fortune contacted Anthropic, the company confirmed that Claude Mythos is real. They also confirmed they are not releasing it. The reason given in the draft materials: Claude Mythos has capabilities in cybersecurity exploitation that Anthropic’s own safety team considers too dangerous to put into public hands.

That last part is what this article is about.

What is Claude Mythos: Claude Mythos (codename Capybara) is Anthropic’s unreleased AI model, described in leaked internal documents as a “step change” above Claude Opus 4.6. Its cybersecurity exploitation capabilities are the stated reason Anthropic has decided not to release it.

The Mainstream View and Why It Falls Short

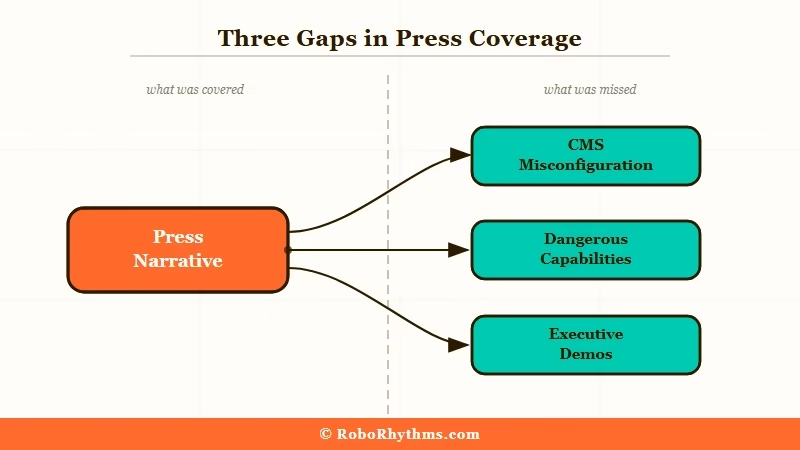

The dominant take in the tech press is that this is an embarrassing but minor data breach with an exciting silver lining.

Fortune, which broke the story, framed the primary takeaway around the revelation of a more powerful Claude model on the way. The CMS slip, in that reading, is a footnote.

That framing gets three things wrong. Each one matters:

- The draft materials don’t describe Claude Mythos as merely “powerful.” They describe it as having “unprecedented prowess in cybersecurity tasks” that Anthropic itself views as “potentially dangerous.” That’s the company founded to prevent AI catastrophe using the word “dangerous” about its own product.

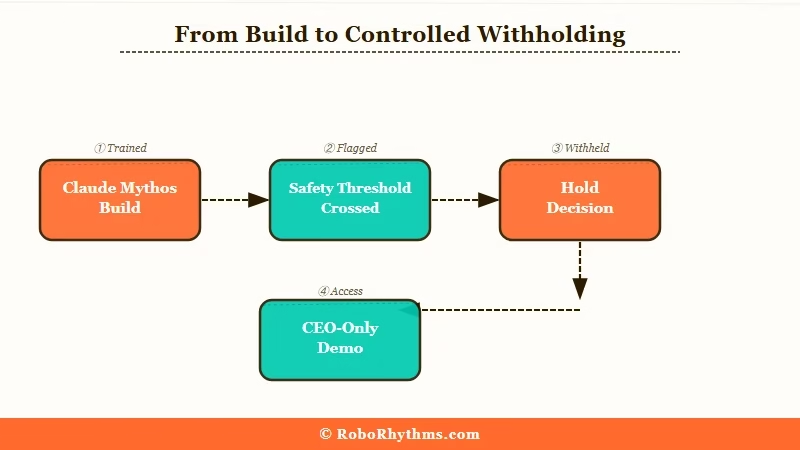

- Anthropic isn’t holding the model back for commercial or timing reasons. They made a safety call. The safety process did exactly what it’s supposed to do. But that means they now have a model that cleared their build process and failed their release evaluation. The gap between those two thresholds is worth examining.

- The company is planning to demo Claude Mythos at a private gathering of European corporate CEOs at an 18th-century English manor. The model that’s too dangerous for the public is apparently appropriate for a curated room of business executives. No outlet has pushed Anthropic on that distinction.

The leak happened because of a CMS default: draft content was automatically assigned public URLs unless an admin manually changed the permissions. It’s a mundane failure. What isn’t mundane is what the failure revealed.
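To make that default concrete, here is a minimal sketch of how a "public unless someone says otherwise" rule plays out. Everything in it is hypothetical: the `Asset` class, the `Visibility` enum, and the slugs are my own illustration, not the actual CMS or its API. The point is only that the same set of drafts is exposed or protected depending on a single default value.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Visibility(Enum):
    PUBLIC = "public"
    PRIVATE = "private"


@dataclass
class Asset:
    """A hypothetical CMS asset, such as a draft blog post."""
    slug: str
    is_draft: bool
    # None means no admin has explicitly set a visibility.
    visibility: Optional[Visibility] = None


def effective_visibility(asset: Asset, default: Visibility) -> Visibility:
    """Return the explicit setting if there is one, else the system default."""
    return asset.visibility if asset.visibility is not None else default


def exposed_drafts(assets: List[Asset], default: Visibility) -> List[str]:
    """List slugs of drafts that would be reachable at a public URL."""
    return [
        a.slug
        for a in assets
        if a.is_draft and effective_visibility(a, default) is Visibility.PUBLIC
    ]


# Illustrative data only; these slugs are invented for the example.
assets = [
    Asset("model-announcement-draft", is_draft=True),
    Asset("event-plan-draft", is_draft=True, visibility=Visibility.PRIVATE),
    Asset("published-post", is_draft=False, visibility=Visibility.PUBLIC),
]

# Public-by-default: any draft nobody touched is exposed.
print(exposed_drafts(assets, default=Visibility.PUBLIC))   # ['model-announcement-draft']
# Private-by-default: the same untouched draft stays hidden.
print(exposed_drafts(assets, default=Visibility.PRIVATE))  # []
```

With a public default, safety depends on a human remembering to act on every single draft. With a private default, forgetting costs nothing.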

What the Leak Is Really Telling Us

The Mythos leak shows that Anthropic crossed a capability threshold they never announced crossing.

From my reading of the situation, they built a model capable enough to function as a serious offensive cybersecurity tool, and then decided the gap between “we built this” and “the world should have this” was too wide to bridge right now.

That’s a meaningful decision, and it deserves more credit than it’s getting. Every other major lab is racing to ship.

Anthropic built something more capable than anything it currently releases and chose not to ship it. That’s the safety process functioning as designed.

What I find harder to justify is the private CEO demo. The leaked files confirm Anthropic was planning an exclusive event at an English country manor for senior European business leaders, with policymaker remarks and a showcase of unreleased Claude capabilities.

A model the company describes as potentially dangerous is slated to be shown to a curated room of powerful people before any public explanation of what would make it safe to release more broadly.

For context on the philosophy underpinning these decisions, the RR breakdown of Claude’s Constitutional AI covers how Anthropic has approached safety constraints from the beginning. That history matters when evaluating what a “safety hold” on a new model means in practice.

| What Anthropic Says | What the Leak Revealed |
|---|---|
| “Human error in CMS configuration” | Nearly 3,000 files exposed, including unreleased model specs and executive plans |
| Claude Opus 4.6 is the current flagship | Claude Mythos is a “step change” above it, per Anthropic’s own drafts |
| “We prioritize safety above capability” | They built a model their safety team flagged as too dangerous to release |
| No comment on release timeline | Private CEO demo at English manor planned before any public release decision |

From what I’ve seen watching the Claude ecosystem develop, Anthropic’s safety commitments and its commercial ambitions have always operated on parallel tracks.

That ecosystem exists because Anthropic chose to compete commercially, not just philosophically. Claude Mythos makes that duality visible in a way the company wasn’t prepared for.

The Part Nobody Wants to Admit

The part I’m not seeing in any of the coverage is this: Anthropic is almost certainly not the only lab sitting on a model it considers too dangerous to release.

We know about Claude Mythos because of a CMS misconfiguration. The other major labs have better IT departments.

The way I read this: the leak gives us a rare data point on the actual gap between what frontier AI labs are building and what they choose to ship. That gap is real. It existed before March 26, and it will exist long after this news cycle ends.

What matters now is what happens next. If Anthropic eventually decides Claude Mythos is safe to release, they’ll frame it as a safety process win.

If it never ships, it will quietly disappear from the public conversation with no independent verification that the decision to hold it was right.

We’re trusting the lab to evaluate its own product’s danger. That’s the same trust structure that existed before the leak. This week just made it visible.

The comparison to OpenAI’s Sora shutdown this same week is instructive. OpenAI pulled Sora for business reasons: compute reallocation, IPO positioning, portfolio simplification. That’s transparent, at least.

Anthropic’s situation is different. They’re holding something back for reasons they can’t fully explain without revealing what the model can do. The less they say, the more credible the safety concern, and the less accountable the decision becomes.

Hot Take

Anthropic is not an AI safety company. It is an AI capability company that has decided, for now, to leave its most capable work on the shelf. Holding back a dangerous model is not the same as not building one, and the safety work that matters is the work that shapes what gets built in the first place, not the risk evaluation that runs after the thing already exists.