My Take: AI writing tools are not making writers better. They are making writers average. The output is cleaner than most people’s first drafts, which creates the illusion of improvement. What is actually happening is a convergence toward the statistical midpoint of all writing in the training data. Individual voice is the casualty, and almost nobody is talking about it.

There is a hot thread running on r/artificial right now about whether AI is making us all think and write more alike. Most of the comments land in the “it’s a net positive” camp. I think that is wrong, and I am going to explain why.

This is not an “AI is bad” argument. I use AI tools every day for content work. My position is more specific: the way most people use AI writing assistance is eroding something they probably valued without realizing it.

The Mainstream View (And Why It Falls Short)

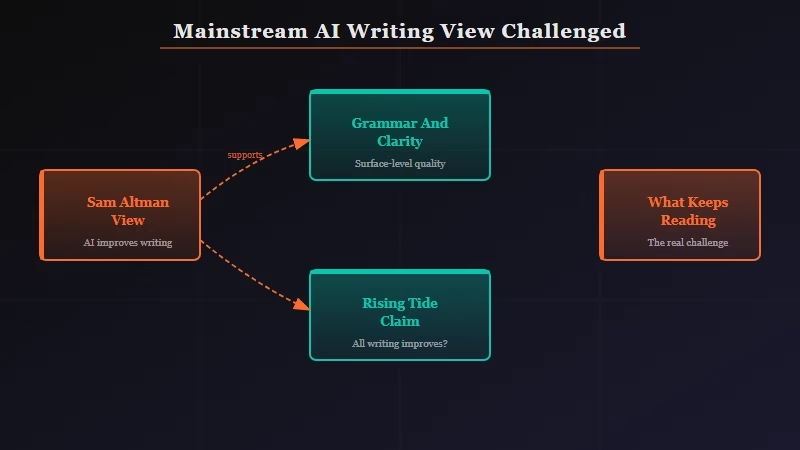

The mainstream argument, articulated most clearly by Sam Altman and OpenAI in their public communications, is that AI democratizes good writing.

The argument goes: millions of people who struggled to communicate clearly now have access to a tool that elevates their expression. Everyone gets a better writer in their pocket.

The Wired editorial team ran a piece making exactly this case in early 2025, arguing that AI writing assistance is to prose what spell-check was to spelling: a tide that lifts all boats.

The Gates Notes position is similar. Good writing was gatekept by education and privilege; AI breaks that barrier.

This argument has real merit. Access to clearer expression does matter. The problem is what it measures and what it ignores.

The argument defines “better writing” as “more grammatically correct, clearer, more professionally formatted.” On those metrics, AI-assisted writing does score higher. But those are not the only things that make writing good, and they are arguably not the most important ones.

What’s Actually Happening

What AI writing tools are actually doing is optimizing for the center of the distribution.

The models were trained on massive corpora of text. That text has patterns. Sentence length distributions. Vocabulary frequency curves. Structural conventions. Rhetorical moves that appear at certain points in pieces of certain lengths. When a model “helps” you write, it is pulling your output toward those patterns.

From what I have seen in my own writing over the last year, every AI-assisted draft I produce sounds more like every other AI-assisted draft. The distinctive tics I had as a writer, the specific rhythms that readers recognized as mine, get smoothed out. My first drafts before AI were rougher. They were also more distinctly mine.

The researcher Simon Willison noted in his writing that AI tools systematically produce “competent mediocrity” at scale. That is not a criticism of the models. It is a description of what happens when you optimize for the average: you get competence without distinctiveness.

The practical evidence is accumulating. Editors report that AI-assisted submissions from different writers are becoming harder to distinguish from one another. The vocabulary converges. The sentence structures converge. The pacing converges. At the level of individual pieces it is subtle. Across a category of content it is visible.
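If you want to check this on your own drafts, here is a minimal sketch in Python. It is not a validated measure of voice, only a rough way to look at two of the surface patterns mentioned above: sentence length and vocabulary. The function names (`sentence_lengths`, `vocabulary`, `jaccard`, `style_profile`) are my own, the sentence splitting is deliberately naive, and the choice of Jaccard overlap as the vocabulary comparison is an assumption, not something any of these tools report.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text):
    """Word count per sentence, splitting naively on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def vocabulary(text):
    """Set of distinct lowercase word forms in the text."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a, b):
    """Share of words two vocabularies have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def style_profile(text):
    """Average sentence length and how much it varies (population std dev)."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"mean_len": 0.0, "len_spread": 0.0}
    return {"mean_len": mean(lengths), "len_spread": pstdev(lengths)}


if __name__ == "__main__":
    # Hypothetical sample texts; swap in a pre-AI draft and an AI-assisted one.
    before = "I ran. She walked home slowly, muttering about the weather!"
    after = "I ran home. She walked home slowly."
    print(style_profile(before), style_profile(after))
    print(jaccard(vocabulary(before), vocabulary(after)))
```

Run it on a draft from before you used AI and on an AI-assisted draft. If the spread in sentence length shrinks, or if AI-assisted drafts from different writers share more vocabulary with each other than their unassisted drafts do, that is at least consistent with the convergence described here. It does not prove it.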

The Part Nobody Wants to Admit

The AI writing tool industry has a financial incentive to never tell you that your writing was more interesting before you ran it through their product.

Writesonic, Jasper, Copy.ai, and every other writing assistant on the market are measured by user satisfaction and retention. Users feel satisfied when their output improves on their first draft. “Improves” in this context means becomes more readable, more polished, more conventional.

The tool never surfaces the question of whether the idiosyncratic phrase you used before the cleanup was doing something that the normalized replacement cannot.

What I’d argue is more uncomfortable is this: we do not have good metrics for what makes writing distinctively good, so the tools optimize for what we can measure, which is surface correctness.

The things that actually make you want to keep reading (voice, specificity, a sense that a particular person wrote this) are precisely the things that get averaged away.

Content farms and SEO operations do not care about this. For bulk content generation, convergence toward competent average output is a feature. For anyone trying to build a genuine audience or communicate something that only they could communicate, it is a problem.

Hot Take

The writers who refuse to use AI for their actual prose are about to become the most valuable ones in the room, not because they are more virtuous, but because they sound like themselves.

The market will eventually pay a premium for unmistakably human writing the same way it pays a premium for handmade goods in a world of machine manufacturing. The paradox is that the more AI raises the floor of average writing quality, the more valuable writing that breaks the pattern becomes. The people who hold on to their distinctive voice while everyone around them sands theirs down will stand out from the noise in a way that is impossible to replicate.

I am not saying stop using AI. I am saying be more careful about which part of your process AI touches. The idea-generation and research steps are fine. The actual sentences that carry your argument and your voice might be worth keeping as yours.