My Take: The “AI is more expensive than employees” framing that lit up the front page this week is a 2025 story being told in 2026. Per-token API pricing has collapsed 90 percent since early 2025. By the time the boardrooms catch up to the headline, the math has already flipped, and the contrarian position is the default.

The Nvidia executive who told Fortune that AI is “more expensive than paying human workers” is telling a story that was true twelve months ago and is no longer true today. The 434-upvote Reddit thread that surfaced his quote treats it as a fresh insight.

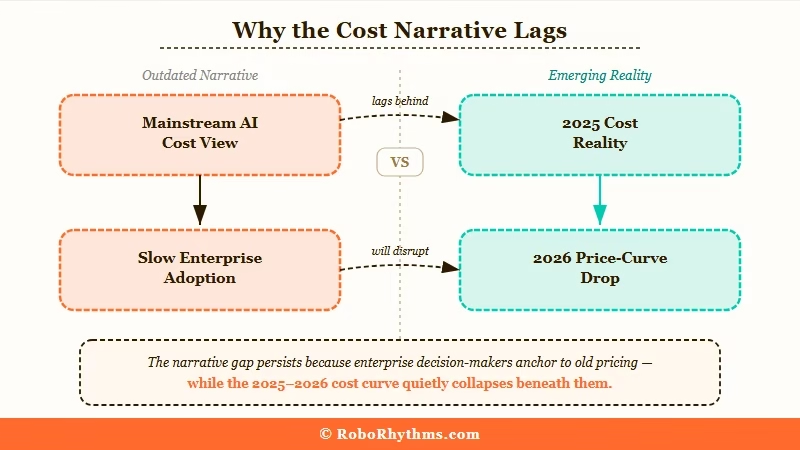

It is not. It is a lagging indicator that has not caught up to the real pricing curve.

I want to be direct about this. The cost-of-AI debate has been the loudest story in enterprise tech for two years, and the consensus view (AI is too expensive to deploy at scale) is wrong. Not “could be wrong with caveats.” Wrong, in the way that a position becomes wrong when the underlying numbers move past it.

The way I see it, the mainstream framing is going to look obsolete inside a year. Here is why.

The Mainstream View and Why It Falls Short

The mainstream view, as articulated by the Nvidia executive at the Bernstein conference and amplified by Fortune, is that AI’s cost per task is currently higher than the cost of paying a human to do the same task.

The framing is presented as if it explains the slow pace of enterprise AI adoption.

The exec’s claim is grounded in a real number: the cost of running large-scale inference on frontier models was high enough through most of 2025 that ROI calculations did not pencil out for many enterprise use cases.

Big banks, big retailers, and big logistics companies pulled back AI deployments because the math did not work.

That is the steel-man version of the position. It is a coherent, evidence-backed, and (for 2025) accurate description of the constraint that slowed enterprise AI adoption.

Where the position falls short is the timing. The exec is describing the world that existed in Q1 to Q3 of 2025.

He is telling that story to an audience in late April 2026, and the audience is treating it as current. The pricing math that was true a year ago is not true now, and pretending otherwise is what makes this a contrarian take rather than a banal observation.

What’s Really Happening to AI Cost Per Inference

Per-token API pricing on equivalent-capability models dropped roughly 90 percent between Q1 2025 and Q2 2026, and the curve is still bending.

What used to cost $30 per million output tokens on a frontier model now costs $2 to $5 on an equivalent. The “AI is too expensive” line has not survived contact with the real price sheets.
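As a rough check on the “roughly 90 percent” figure, the sketch below computes the percentage drop and fold change from the $30 starting price to each end of the $2 to $5 range. The function names and the 2,000-token task size are illustrative assumptions, not figures from any price sheet.

```python
def percent_drop(old_price: float, new_price: float) -> float:
    """Percentage decline from old_price to new_price."""
    return (old_price - new_price) / old_price * 100


def cost_per_task(price_per_million: float, output_tokens: int) -> float:
    """Dollar cost of one task that emits `output_tokens` output tokens."""
    return price_per_million * output_tokens / 1_000_000


OLD_PRICE = 30.0         # $ per million output tokens, early 2025 (from the text)
NEW_PRICES = (2.0, 5.0)  # $ per million output tokens, Q2 2026 range (from the text)
TASK_TOKENS = 2_000      # hypothetical task size, chosen only for illustration

for new_price in NEW_PRICES:
    drop = percent_drop(OLD_PRICE, new_price)
    fold = OLD_PRICE / new_price
    before = cost_per_task(OLD_PRICE, TASK_TOKENS)
    after = cost_per_task(new_price, TASK_TOKENS)
    print(f"${new_price:.0f}/M: {drop:.1f}% drop, {fold:.0f}x cheaper, "
          f"task cost ${before:.3f} -> ${after:.3f}")
```

The two ends of the range work out to drops of about 93 and 83 percent, which brackets the 90 percent headline number.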

The pricing collapse is not a marketing trick. The training-cost side has collapsed in parallel: Google’s Gemini 1.0 Ultra had estimated training costs around $192 million, while DeepSeek claimed to train a competitive LLM for $6 million.

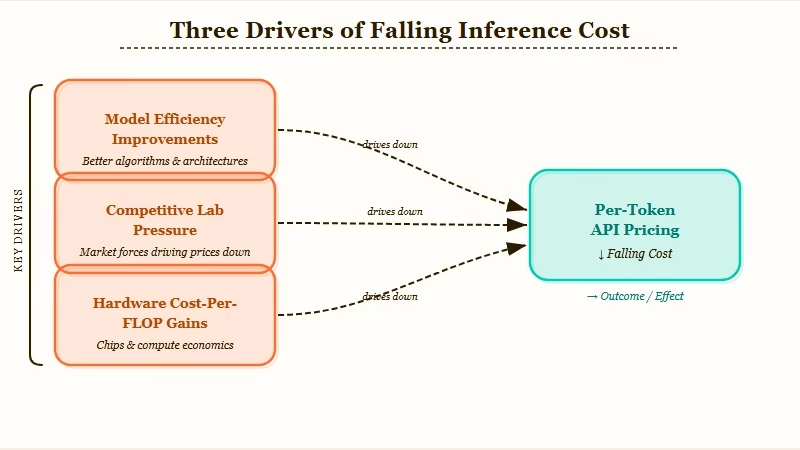

The price drop comes from three real sources running in parallel: model efficiency improvements at the architecture level, hardware cost-per-FLOP gains from H200 to B200 to the now-shipping GB300 generation, and competitive pressure between OpenAI, Anthropic, Google, and the open-weights labs.

Stanford’s AI Index 2025 puts the inference price decline at anywhere from 9 to 900 times per year depending on the task, with one tracked trend showing a drop from $15 to $0.12 per million tokens in under a year. The combined effect compounds.

The same report also puts a number on a fixed capability level. The cost of running a model at GPT-3.5 quality fell by a factor of 280 between November 2022 and October 2024.

That is not a typo. Two hundred and eighty times cheaper for the same capability. At the October 2024 price of $0.07 per million tokens, one dollar buys roughly 14 million tokens, the equivalent of about 10.77 million English words.
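The tokens-per-dollar arithmetic is easy to reproduce. A minimal sketch, assuming the $20 and $0.07 per-million-token prices shown in the table below and a rule-of-thumb ratio of about 0.75 English words per token (an assumption; the real ratio varies by tokenizer and by text):

```python
BASELINE_PRICE = 20.00  # $ per million tokens, Nov 2022 (from the table)
LATER_PRICE = 0.07      # $ per million tokens, Oct 2024 (from the table)
WORDS_PER_TOKEN = 0.75  # assumed rule of thumb, not a measured value

# How many times cheaper the later price is than the baseline.
fold_change = BASELINE_PRICE / LATER_PRICE

# Tokens, then words, that one dollar buys at the later price.
tokens_per_dollar = 1_000_000 / LATER_PRICE
words_per_dollar = tokens_per_dollar * WORDS_PER_TOKEN

print(f"fold change: {fold_change:.0f}x")
print(f"tokens per dollar: {tokens_per_dollar / 1e6:.1f} million")
print(f"words per dollar: {words_per_dollar / 1e6:.1f} million")
```

With these rounded prices the fold change comes out near 286, in line with the reported 280x, and one dollar buys about 14.3 million tokens, or roughly 10.7 million words under the assumed ratio.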

| Period | Equivalent-capability price | Drop vs prior period |
|---|---|---|
| Nov 2022 (GPT-3.5 baseline) | $20 per million tokens | Reference |
| Oct 2024 (Stanford AI Index) | $0.07 per million tokens | 280x cheaper |
| Q1 2025 (frontier baseline) | ~$30 per million output tokens | Reset on frontier |
| Q2 2026 (today) | $2 to $5 per million output tokens | 6x to 15x cheaper |

That curve has continued through 2025 and 2026. The model-size side of the same trend is just as dramatic: hitting 60 percent on MMLU required a 540-billion-parameter model (PaLM) in 2022, while Phi-3-mini at 3.8 billion parameters cleared the same bar by 2024, a 142x reduction in model size for equivalent capability.

The exec quoted in Fortune is pointing at a snapshot from early 2025 and treating it as today’s reality. That is a category error, not an insight.

What this tells me is that the AI-cost narrative is now an artifact of cycle psychology. Enterprises pulled back in 2025, the pullback became a story, the story is still being told, and meanwhile the underlying numbers have moved on without the discourse.

The Part Nobody Wants to Admit

The reason the cost narrative persists despite contradicting the price sheets is that “AI is too expensive” is a comfortable explanation for executives who do not want to absorb the real disruption.

Saying the technology is too expensive is a face-saving framing for “we have not figured out how to deploy it productively.”

I would argue that is the part nobody in the boardroom wants to admit. From what I have seen, the limiter on enterprise AI ROI in 2026 is not unit economics.

The unit economics work. The limiter is workflow redesign, and that is harder, slower, and more political than buying tokens.

Workflow redesign requires firing people, retraining people, or restructuring teams. None of those are popular. “The technology is too expensive” lets executives delay all three.

The Nvidia exec, the Fortune article, and the upvoted Reddit thread are all participating in the same comfort narrative. The narrative is wrong on the cost claim because the cost claim is empirically falsifiable, but the narrative is psychologically useful because it lets enterprises avoid the real conversation about what AI changes about how work is structured.

What this tells me is that the next 18 months will see the cost-narrative collapse get loud at the same moment the workflow-redesign conversation gets uncomfortable. The two are linked. As the cost defense disappears, the political reality of AI’s real impact lands harder.

Hot Take

The Nvidia executive’s “AI is more expensive than employees” line will age in the same way “the iPhone has no hardware keyboard, businesses will never adopt it” aged after 2010. Eighteen months from now, anyone repeating that framing without a price-sheet citation will be laughed out of the room. The cost crisis is over; the workflow crisis is just starting.

What Comes After the Cost Narrative Dies

The interesting question is what fills the discourse vacuum once “AI is too expensive” stops being a viable explanation. From what I have seen, three replacement narratives are queueing up.

The first is the workflow-redesign narrative. Enterprises will start admitting (because they cannot avoid it) that the limiter is not the technology, it is their inability to restructure how work flows. That conversation is going to be politically expensive for executives who promised AI ROI in 2024 and underdelivered in 2025.

The second is the productivity-distribution narrative. AI’s gains are not evenly distributed across job types or industries, and the unevenness is going to drive a sharper labor sorting than anyone has admitted publicly. “AI sorts white collar jobs” covers the version of this thesis that I have come to believe.

The third is the model-capability-plateau narrative. The “models are plateauing” claim has been around for three release cycles, and so far has been wrong each time. But once cost stops being a debate, the conversation shifts to whether the capabilities deliver enough to justify the workflow disruption.

“Why AI agents keep failing” is part of that capability-plateau conversation already, and it lands on real failure modes that have nothing to do with cost. The death of the cost narrative just makes those conversations more honest. The Anthropic-OpenAI valuation flip is another piece of evidence that investors have already priced in the cost trajectory and are now competing on capability, not price.

For anyone in tech adjacent to enterprise AI deployment, the practical advice is simple. Stop quoting the cost objection.

The math has flipped. The honest conversation is about workflow, distribution, and capability, and the sooner that lands in your industry, the better positioned you are for the cycle that comes next.