What Happened: Washington state passed a new AI chatbot disclosure law in April 2026. New York’s S-3008C is already in effect, requiring suicide detection protocols and mandatory AI disclosure. Maine is close to signing a therapy bot ban. Multiple states are moving at once, and Congress has bipartisan bills in committee. If you use any AI companion app regularly, this wave of legislation will affect you.

The 2026 wave of AI companion chatbot regulation is the fastest-moving policy story this space has seen in years. At least five states passed or advanced new laws in the first quarter alone.

Some require disclosure. Some mandate crisis intervention features. Some are trying to ban entire categories of the product. From what I’ve been watching, no other area of AI law has moved this quickly at the state level.

This article covers exactly what each law does, why the pressure built so quickly, and what it means for anyone using these platforms right now.

What Are the New AI Companion Chatbot Laws in 2026?

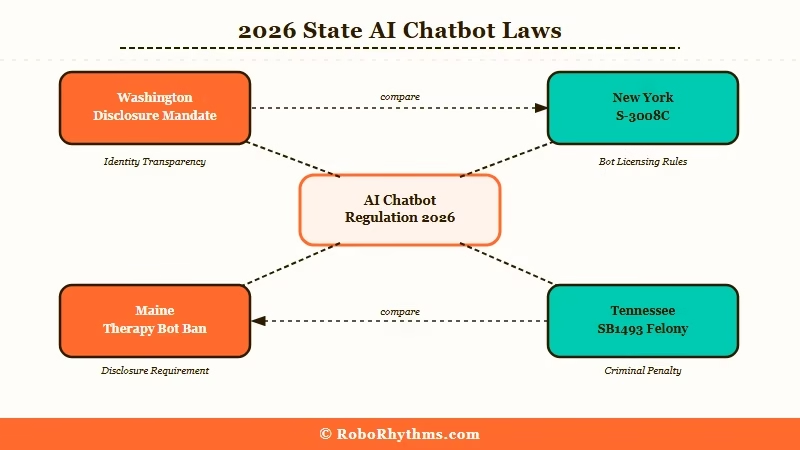

The April 2026 regulatory wave includes disclosure mandates, therapy bot bans, crisis intervention requirements, and in one state, a potential felony charge for platform developers.

Washington state’s new law requires any AI chatbot to tell users upfront that they are talking to an AI and not a human, and it mandates that platforms connect users to crisis services when distress signals are detected. It is one of the most specific laws passed so far for companion platforms.

New York enacted S-3008C earlier this year. That law requires AI companion apps to have active protocols for detecting suicidal behavior and to clearly identify themselves as non-human.

It is already in effect. From what I’ve seen, most users have no idea this law passed because the platforms implemented the changes quietly.

Maine has a bill on the governor’s desk that would ban AI therapy bots outright, citing the risk of users substituting AI companions for licensed professional care. Missouri has a similar provision inside a broader health care bill still working through the legislature.

Tennessee’s SB1493 is the most aggressive. It creates a Class A felony, carrying 15 to 25 years in prison, for anyone who knowingly trains an AI to develop an emotional relationship with a user while simulating a human.

The r/NomiAI community had a visible reaction when this bill surfaced, with a thread hitting 137 upvotes and 38 comments from users asking whether Nomi would survive.

See our breakdown of Tennessee’s SB1493 for the full details on that law.

| State | Law Type | Status | Key Impact |
|---|---|---|---|
| Washington | Disclosure + crisis mandate | Signed into law | Apps must disclose AI identity |
| New York | S-3008C suicide protocols + disclosure | Already in effect | Platforms need active crisis detection |
| Maine | Therapy bot ban | Governor’s desk | Therapy-style AI features at risk |
| Missouri | Health omnibus bill | In legislature | Broad AI health tool restrictions |
| Tennessee | SB1493 felony provision | Taking effect July 2026 | Developer liability for emotional AI |

Why Is AI Companion Regulation Happening So Fast?

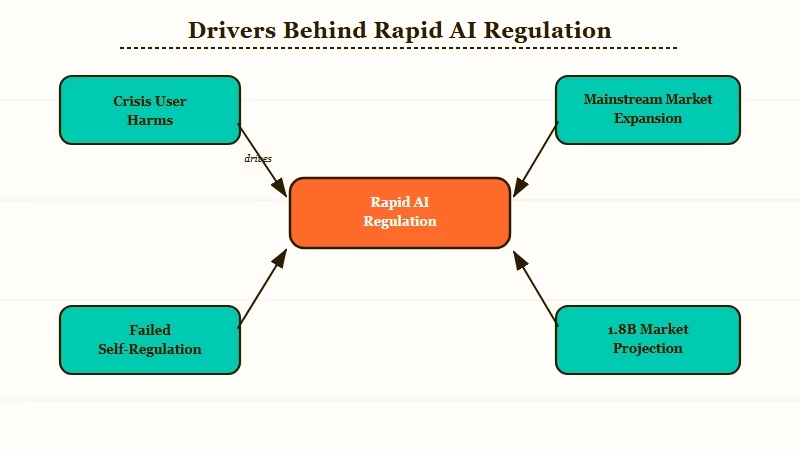

State legislatures are acting because the federal government hasn’t, and the underlying concerns are documented and visible.

From what I’ve seen, three things are driving this. First, there have been high-profile cases where users in crisis turned to AI companions instead of emergency services, with bad outcomes. That creates political pressure that moves fast.

Second, the market is no longer niche. UnitedHealthcare launched an AI companion called Avery in early 2026, currently serving 6.5 million members with plans to scale to 20.5 million by year’s end. At that scale, lawmakers pay attention.

Third, platforms weren’t self-regulating on the basics. Disclosing that your product is an AI and not a human therapist is not a controversial ask. The fact that it takes a law to make platforms do it has left regulators skeptical that the industry will fix anything voluntarily.

Over a dozen states had AI companion proposals on the table by early 2026. Congress has several bipartisan bills in committee as well, though federal action has been slower than state-level movement.

The AI companion app market is projected to reach $1.8 billion by 2027. Markets at that scale do not stay unregulated.

How Do These Laws Affect You as an AI Companion User?

The way I see it, the impact on most users is minor for now, but it shapes which platforms survive and which features disappear.

For users of mainstream platforms like Candy AI or Replika, the disclosure and crisis intervention changes are mostly cosmetic. These platforms already tell you what you’re using. The bigger impact hits apps that have deliberately cultivated ambiguity about what they are.

The therapy bot bans are different. Here is what to watch for specifically:

- Platforms that positioned themselves as emotional support or mental health companions are under direct pressure. Features may be removed or geo-restricted.

- Apps may start restricting access in specific states before laws pass, as a precaution. We’ve already seen this with access restrictions in other countries.

- Platforms built around emotional dependency mechanics could face the most scrutiny, particularly under Tennessee-style laws that target the relationship-simulation design pattern itself.

The Utah angle is worth noting too. Utah has been working through AI-related prescribing restrictions that affect any AI product making health-adjacent claims. If a platform you use offers anything framed as wellness support, it may be in scope.

What AI Companion Regulations Are Coming Next?

The state-level wave will continue through 2026, and the real question is whether federal action arrives before or after the patchwork of state laws becomes a mess.

The way I read the current trajectory: state laws will keep multiplying. Washington, New York, Maine, Tennessee, and Utah are early movers, but every state has the same underlying concerns driving this. Five to ten more states introducing legislation before the end of 2026 is a reasonable expectation.

Federal action is less certain. Bills are sitting in committee, but no dedicated AI companion bill is actually moving at the national level yet.

The more likely path is the FTC using its existing unfair practices authority to mandate disclosures and crisis intervention features nationally. That framing fits what the FTC already does.

For platform makers, the compliance picture is getting harder. Building a companion app that operates in all 50 states now requires active legal review across dozens of jurisdictions. That creates a real advantage for larger, well-resourced platforms and meaningful headwinds for smaller ones.

For users, the message is practical: stay aware of what the platforms you use are doing in response to these laws. Features will change, access will sometimes be restricted, and some smaller platforms may not survive the compliance costs. The AI companion space is no longer a regulation-free zone.