What’s Changed: Multiple AI companion platforms have updated their privacy policies to allow conversation data to be used for model training. Character AI, Replika, and others explicitly state in their terms that conversation data may be used to improve AI systems. Many users only discover this after months of intimate conversations.

Most people open an AI companion app expecting a private relationship. What they get is closer to a conversation with a platform that may be improving its model based on everything they say.

This is not new, but it has become more visible in 2026 as platforms update their privacy policies with clearer language about training data. If you have been using a companion app and sharing things you would not say publicly, this article is worth your time.

What AI Companion Apps Are Doing With Your Conversations

Most AI companion platforms use conversation data to improve their AI systems, either directly through their own training pipelines or by passing data to third-party AI providers.

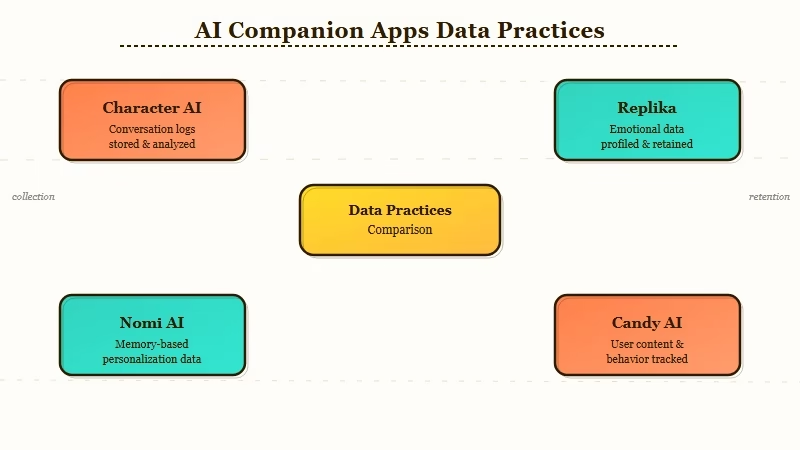

The platforms differ significantly in how they handle this. Here is what the major ones say:

Character AI: Conversations may be used to train and improve AI models. Character AI’s privacy policy states that user inputs are processed to generate responses and that this data may be used to improve services. The company’s terms do not provide an opt-out from this use.

Replika: Replika transmits conversation data to third-party AI language model providers to generate responses. Per their policy, these providers are contractually required not to use your data for training their own models. Replika has faced regulatory action: in February 2023 the Italian Data Protection Authority ordered Replika to stop processing the personal data of users in Italy, citing risks to minors and emotionally vulnerable users.

Nomi AI: Positions itself as privacy-forward. Conversation data is used to generate responses and maintain memory for your specific account but is not shared for broad model training. This makes it one of the cleaner options if data privacy matters to you.

Candy AI / CrushOn AI / SpicyChat: These platforms use third-party model APIs and share conversation data with those providers under standard API terms. The underlying providers vary and most do not provide user-level opt-outs from data handling.

| Platform | Uses your chats for training | Third-party model provider | Opt-out available |
|---|---|---|---|
| Character AI | Yes | Own + Google models | No |
| Replika | Shared with providers, who are contractually barred from training on it | Third-party LLM providers | No |
| Nomi AI | Limited (memory only) | Proprietary | Partial |
| Candy AI | Shared with providers via API | Third-party providers | No |
| SpicyChat | Shared with providers via API | Third-party providers | No |

The uncomfortable reality, as MIT Technology Review documented, is that conversational data from AI companions is uniquely valuable for training emotional intelligence in AI systems. Platforms have every incentive to use what you give them.

Why This Matters More for Companion Apps Than Regular AI Tools

The concern with AI companion data is not just what is shared but what kind of data it is. Companion conversations involve emotional vulnerability, relationship dynamics, and personal disclosures that most people would not share publicly.

The intimacy is the feature. People tell AI companions things they do not tell other people. They work through difficult relationships, explore personal fears, and share private experiences. That is exactly what makes the data valuable for training social AI models.

From what I have seen of how companion platforms position their products, much of why AI companions feel engaging comes down to the AI reflecting your personal information back to you. If that personal information is also improving the model for other users, it changes the nature of the relationship.

The secondary concern is data breach risk. Emotional conversations are high-sensitivity data. A database breach at any of these platforms would expose not just email addresses but years of personal conversations.

What You Can Do About It Right Now

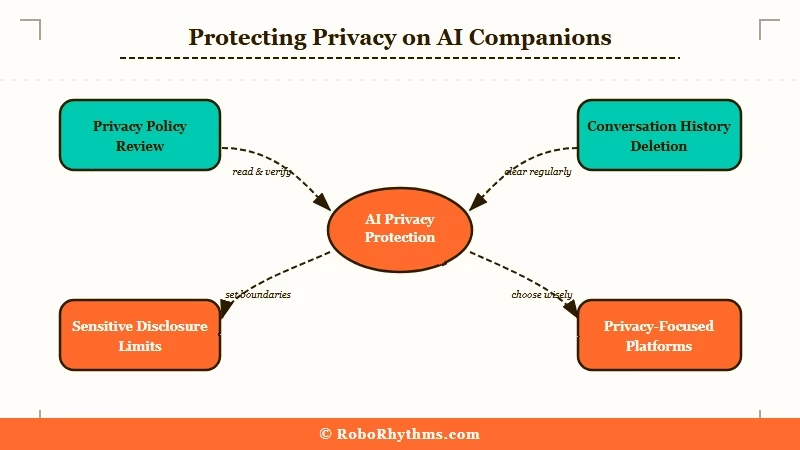

The most effective protection is choosing a platform with explicit privacy commitments, minimizing sensitive disclosures on platforms without them, and regularly deleting conversation history where deletion is available.

Here is what I recommend doing if you use AI companion apps regularly:

- Read your platform’s actual privacy policy. Search for “training”, “improve”, and “third-party” in the text. What you find will tell you the real policy faster than any summary.

- Check whether conversation deletion is available. Character AI lets you delete individual messages. Replika lets you delete your account and associated data. Go through this process for anything sensitive you no longer want stored.

- For platforms without data deletion: create a second account for sensitive conversations rather than using your main account. This keeps your regular history clean.

- If ongoing privacy matters to you, consider switching your primary companion app to Nomi AI. Nomi AI handles conversation data differently, using it for your individual memory rather than pooled model training.

- Avoid sharing legally sensitive information (financial details, health information, information about other people) in any AI companion regardless of the platform.

- Check your platform’s data export or account deletion options annually. Privacy policies change. What was acceptable use of your data a year ago may be different today.
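
The keyword search in the first checklist item can be done by hand, but a short script makes it easier to repeat each time a policy changes. The following is a minimal sketch, assuming you have copied the policy into a plain-text string; the function name `find_policy_mentions` and the sample text are hypothetical and only for illustration, not taken from any platform's real policy.

```python
import re

# Keywords suggested in the checklist; extend the tuple as needed.
KEYWORDS = ("training", "improve", "third-party")


def find_policy_mentions(policy_text, keywords=KEYWORDS):
    """Return {keyword: [sentences containing it]} for a privacy policy.

    Hypothetical helper for illustration. Matching is case-insensitive and
    treats "third party" and "third-party" as the same term.
    """
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", policy_text.strip())
    hits = {keyword: [] for keyword in keywords}
    for sentence in sentences:
        normalized = sentence.lower().replace("third party", "third-party")
        for keyword in keywords:
            if keyword in normalized:
                hits[keyword].append(sentence)
    return hits


if __name__ == "__main__":
    # Made-up sample text, not a quote from any real policy.
    sample = (
        "We process your inputs to generate responses. "
        "We may use your content to improve our services. "
        "We share data with third party providers."
    )
    for keyword, sentences in find_policy_mentions(sample).items():
        print(f"{keyword}: {len(sentences)} match(es)")
        for sentence in sentences:
            print(f"  - {sentence}")
```

A match only tells you where to read more closely. The surrounding sentences still decide whether the policy permits training on your conversations or rules it out.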

For a companion app where the data handling is more explicitly user-focused, Nectar AI is worth looking at. The platform’s privacy approach and depth of character customization make it a reasonable candidate if you are re-evaluating your companion app setup after reading this.

The “is Chai premium worth it” question is not just about features. It is also about whether you are comfortable with that platform’s data practices before you commit to paying for deeper conversations. And if you want to compare platforms before making a switch, the Dusk AI vs Candy AI breakdown covers data handling alongside features for two of the most popular alternatives.