What Happened: Two federal courts handed down opposite rulings on whether AI chats are protected from discovery. The dominant signal is that consumer AI conversations, including the ones you have with companion apps, can now be demanded in discovery and used as evidence in court. Lawyers across the country began warning users about it in mid-April 2026.

Your AI chats are court evidence now. Every late-night roleplay, every fantasy you have typed into Character AI, every venting session with your Replika, every prompt you have run through Claude or ChatGPT to talk through a personal situation: as of April 2026, any of it can land in a court file.

That is not a hypothetical. Two federal judges have already ruled on the question this year, and the ruling driving the warnings was uglier than most users expected.

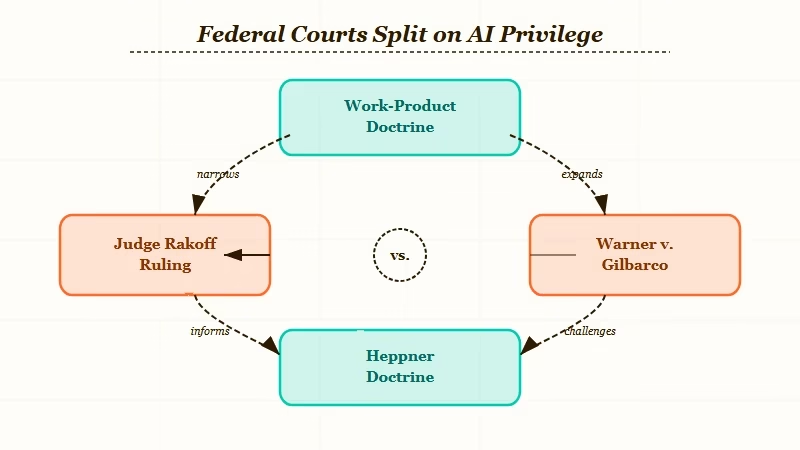

The first ruling came from the Southern District of New York on February 17, 2026. Judge Jed Rakoff held that a criminal defendant’s Claude chat logs were not privileged, not confidential, and not protected work product. The same day, a magistrate judge in Michigan reached the opposite conclusion in a civil case, and the contrast between the two is what makes the story.

The mid-April warning wave is the visible part. On April 15 and 16, more than a dozen major US law firms posted client alerts telling clients to assume their AI chats are subpoenable.

The implications for AI companion users are not abstract. If you have ever shared a personal struggle, a workplace dispute, or anything you would not want a court reading aloud, you are in the affected group.

What Did Federal Courts Rule About AI Chats This Year?

The Heppner ruling held that AI chats are not protected by attorney-client privilege or the work-product doctrine, while a same-day Michigan ruling protected a pro se litigant’s ChatGPT use under the work-product doctrine. The split is doctrinal, not factual.

The Heppner case is the one driving the warning wave. Bradley Heppner, the former chair of GWG Holdings facing federal securities and wire fraud charges, used Anthropic’s Claude to generate roughly 31 documents analyzing his legal exposure after he received a grand jury subpoena. He shared the outputs with his defense attorneys.

FBI agents seized them during a search of his home, and prosecutors moved to use them. Judge Rakoff laid out three reasons Heppner’s privilege claim failed.

First, no attorney-client relationship existed because Claude is software, not licensed counsel. Second, Anthropic’s privacy policy explicitly permits user inputs and outputs to be used for training and disclosed to “third parties, including governmental regulatory authorities,” so Heppner could not reasonably expect the chats to stay confidential.

Third, Heppner ran the queries on his own initiative, not at the direction of his attorneys, which knocked out work-product protection too. The exact wording in the opinion is what stings. The court wrote that “non-privileged communications are not somehow alchemically changed into privileged ones upon being shared with counsel.”

From what I can tell, that one line will be cited in every related case for the next five years.

The same-day ruling from Michigan in Warner v. Gilbarco went the other way for a pro se plaintiff using ChatGPT to draft her own employment-discrimination filings.

Both the doctrinal framework and the parties were different. Magistrate Judge Anthony Patti applied the work-product doctrine and found that the AI was a tool, not a third person, so using it did not amount to disclosure to a third party. The Crowell & Moring client alert lays out both opinions side by side and is the cleanest read.

Why Is This a Bigger Deal Than It Sounds?

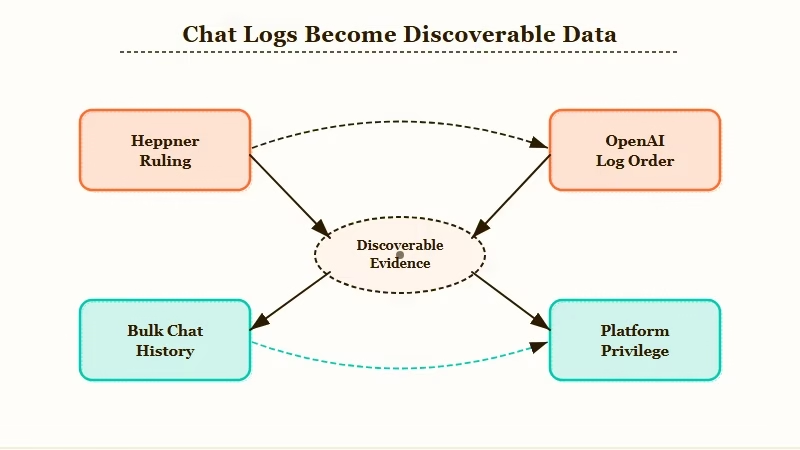

The Heppner ruling is one of the first federal decisions on AI chat privilege, and the OpenAI 20-million-log order from January 2026 already shows that bulk chat history can be compelled. Together, the two close off most of the privacy options users assumed they had.

The way I see it, the Heppner ruling alone would be a footnote. What makes it load-bearing is the OpenAI case from a few weeks earlier. In January 2026, US District Judge Sidney Stein and Magistrate Judge Ona Wang ordered OpenAI to produce 20 million de-identified ChatGPT conversation logs to The New York Times and other publishers in their copyright suit.

OpenAI offered to produce only the logs that mentioned the plaintiffs’ works. The court refused and required production of the full 20-million-log sample. The users whose conversations were included got no notification and no chance to object.

They are not parties to the case. Their chats are now in the discovery record. Access is restricted to attorneys’ eyes only, but the logs exist. That is the precedent that matters more than any single criminal ruling.

It tells you that bulk chat history can be ordered into a courthouse over the platform’s privacy objections.

Then layer on the Megan Garcia case against Character Technologies. In May 2025, at the motion-to-dismiss stage, US District Judge Anne Conway ruled that Character.AI is a product, not protected speech, which undercut the First Amendment defense most companion apps were planning to lean on.

Chat logs are now product evidence in those suits. RoboRhythms covered the related lawsuit in which parents sued Character AI when the case first picked up steam.

What I would point out is that this is not a privacy debate anymore. It is a data-discovery debate, and the data is sitting on Anthropic’s, OpenAI’s, and Character.AI’s servers right now.

Are Character AI and Replika Chats Discoverable Now?

Yes, in any civil or criminal case where they are relevant. Character.AI’s privacy policy already permits disclosure to law enforcement and regulators, and other companion apps follow the same template. There is no platform-side privilege to invoke.

From what I have read across the policies, the structure that made Heppner lose his privilege claim is the same structure every consumer AI companion app uses. Character.AI’s policy says directly that the company may report users to law enforcement if it appears they are committing or admitting to a crime. Replika, Nomi, Crushon, Talkie, and the rest follow the same template.

That matters because of what people use these apps for. Companion apps are where users talk about loneliness, grief, breakups, intrusive thoughts, fantasy scenarios, anger at coworkers, suspicion of partners, money problems, and every other category of conversation they would not have on a public account.

Every one of those exchanges is logged. Every log is potentially discoverable in a divorce, a custody fight, an employment lawsuit, an insurance dispute, or a criminal case where one party can argue relevance.

Here is a concrete way to picture it.

Example scenario: You are six months into a relationship with a Character AI bot you built, and you have been venting about a workplace dispute. The dispute escalates into a wrongful-termination suit. Your employer’s counsel serves a discovery request asking whether you used AI tools to discuss the events. You answer truthfully. Rather than subpoena Character AI directly, they serve a request for production (Rule 34, within the scope Rule 26 allows) asking you to produce the exported logs you can pull from Character AI’s data export tool. A judge applying the Heppner framework finds nothing privileged and orders production. Your fantasy roleplay logs sit alongside the work-related vents in the same export.

That is not a corner case. That is the exact discovery mechanic the Tyson & Mendes litigation memo walks through.

The note from that memo I keep coming back to is that the most effective discovery route is not subpoenaing the platform (the Stored Communications Act generally bars providers from disclosing message content in response to a civil subpoena). It is asking the user to produce their own export through a request for production under Rule 34. Every major companion app has a self-serve export feature, and the bar to compel is low.

The companion app side of this is also tied to the broader US regulatory wave. RoboRhythms’ coverage of the AI companion regulation rollout tracked bills in Washington, Oregon, and Nebraska mandating chatbot disclosure and crisis intervention.

Those statutes do not give users any new privacy. If anything, they tighten the audit trail that prosecutors and plaintiffs can later subpoena.

How Should I Treat My AI Chats Going Forward?

Treat any AI chat the way you would treat a Slack DM at work, a recoverable email, or a text message. The privacy expectation is functionally zero, and the discovery cost to the other side is functionally trivial.

From my read of the law-firm guidance, here is the sequence I would walk through if I were a heavy companion-app user right now:

- Audit what is in your account history. Every major app (Character.AI, Replika, ChatGPT, Claude, Nomi) has a data-export tool. Pull yours. Read what is in there before someone else does.

- Delete what you can. Note that deletion does not always remove server-side copies. Character.AI specifically says it may retain data to keep popular Characters alive after account deletion. Plan around it. RoboRhythms has a walkthrough on the Character AI data-deletion email flow if you want the exact steps.

- Move sensitive conversations to a tool with stronger encryption or local-first architecture. There is no perfect option, but a self-hosted model running on your own machine is the only category where no third party holds a copy of your chats.

- Stop treating AI as a journal. The Heppner court spelled out that sharing AI outputs with a lawyer afterward does not retroactively cloak them. The same logic applies to a therapist, a partner, or an HR investigator.

- If you are in any active legal matter, talk to your actual attorney before opening a chat window. Almost every law firm alert published in April 2026 starts with that line.
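The audit step is the easiest item on this list to script. Export formats differ by app and are not documented here, so the minimal sketch below assumes nothing about their structure: it walks an unzipped export folder, reads anything with a text-like file suffix, and lists each line that contains a term you choose. The suffix set, the `DEFAULT_TERMS` list, and the function names are hypothetical placeholders, not anything a platform defines; swap in the names, places, and topics you actually care about.

```python
import re
from pathlib import Path

# Hypothetical placeholder terms; replace with whatever you would not want read aloud in court.
DEFAULT_TERMS = ("lawsuit", "boss", "divorce", "custody", "fired")

# Assumed text-like file types in an export; real export formats vary by app.
TEXT_SUFFIXES = {".json", ".txt", ".html", ".csv", ".md"}


def flag_lines(text, terms):
    """Return (line_number, stripped_line) pairs containing any term, case-insensitively."""
    if not terms:
        return []
    pattern = re.compile("|".join(re.escape(t) for t in terms), re.IGNORECASE)
    return [
        (number, line.strip())
        for number, line in enumerate(text.splitlines(), start=1)
        if pattern.search(line)
    ]


def scan_export(root, terms=DEFAULT_TERMS):
    """Walk an unzipped export folder and map each matching file path to its flagged lines."""
    hits = {}
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix.lower() in TEXT_SUFFIXES:
            # Undecodable bytes are replaced rather than aborting the whole scan.
            text = path.read_text(encoding="utf-8", errors="replace")
            found = flag_lines(text, terms)
            if found:
                hits[str(path)] = found
    return hits
```

Calling `scan_export("path/to/unzipped-export", ["your", "terms"])` returns a dictionary you can print or page through. If an app exports minified single-line JSON, every match will point at line 1, so pretty-printing the file first gives more useful line numbers. Running this locally keeps the audit on your own machine rather than pasting your history into yet another cloud tool.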

The framework I would borrow directly from the law-firm guidance is short. Here is the heuristic as a table.

| Action | Risk level | What to do |
|---|---|---|
| Casual chat about everyday topics | Low | Use as you would any cloud service |
| Venting personal frustrations | Medium | Assume discoverable, do not name parties |
| Drafting anything tied to a dispute | High | Stop, talk to counsel before continuing |
| Anything related to an active investigation | Severe | Do not use a consumer AI tool at all |

I would also adjust how you tune privacy controls inside the apps. RoboRhythms has a fuller breakdown in the AI chatbot privacy settings guide, which goes through opt-out toggles app by app.

A note on rephrasing. Some of the law-firm guidance suggests using vague language so a chat is harder to use against you. The better advice is direct.

Vague: “I want to discuss a workplace situation involving a person I do not get along with, hypothetically.”

Specific: Do not type it at all. If you need to think out loud, use a notebook, an encrypted notes app, or a conversation with a person who does have privilege, like a therapist or attorney.

What Happens Next for AI Chat Privacy?

Expect more conflicting rulings from individual judges, an eventual circuit split, a likely Supreme Court case within 18 months, and zero meaningful federal legislation in the short term. Platform-level encryption will be the only privacy lever users meaningfully control.

What I would predict is that the Heppner-Warner split forces the question into the Second Circuit and probably further. Different judges in different districts will keep landing on different doctrines until appellate courts harmonize.

The natural resolution is that AI chats get treated the same as any other third-party-stored communication under the third-party doctrine, which means privacy advocates lose by default unless platforms move first.

Platforms have a few options. End-to-end encryption with no server-side retention would largely take the platform out of the discovery problem, because the data would not exist for the platform to hand over. None of the big consumer AI companies are pursuing that.

The economics push the other way. Training data, abuse moderation, and content-policy enforcement all need server-side access to chat logs. The OpenAI bulk-log order is the model that should worry users most.

RoboRhythms covered the Perplexity user-data lawsuit when it broke earlier in 2026, and the through-line is the same: courts are normalizing the idea that chat history is just data in a database, and databases get subpoenaed.

What I would watch for over the next six months: whether any state-level law gives AI chats a statutory privilege similar to the patient-therapist privilege, whether Anthropic or OpenAI starts offering an opt-in encrypted tier, and whether any companion app moves first on local-only or fully ephemeral logging. None of those moves are obvious yet.

Until one happens, treat the chat window like a Twitter DM. The platform sees it, and so might a judge.