My Take: The AI backlash framing is wrong. People are not throwing Molotov cocktails at OpenAI because of hallucinations or alignment risk. They are angry about power bills, wage compression, and being told a small group of billionaires gets to rewire the economy on their schedule.

A man allegedly threw a Molotov cocktail at Sam Altman’s San Francisco home this month. Police found him carrying an anti-AI manifesto and a list of names of other tech executives.

A few days earlier, gunmen put 13 rounds into the home of an Indianapolis city councilman who had voted in favor of a local data center project.

The dominant framing in the press is that this is the AI backlash going physical. The same framing was on the Bloomberg podcast last week, in Fortune’s anti-AI writeup, and in MIT Tech Review’s April 21 cover piece on the resistance movement.

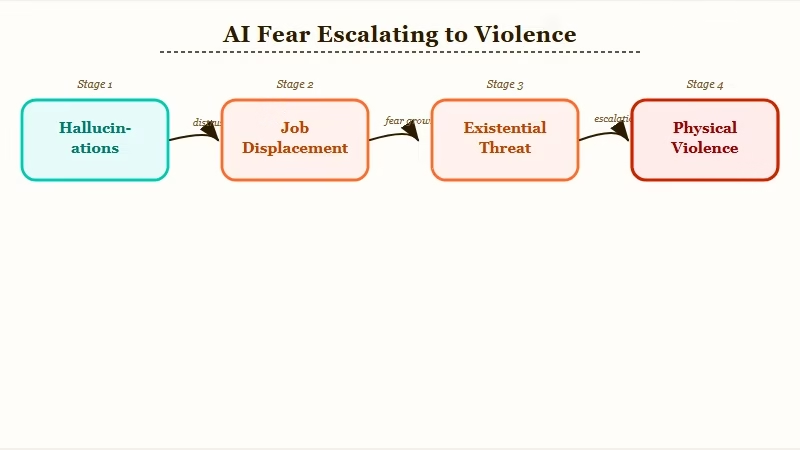

The argument runs roughly: people are afraid AI will take their jobs, lie to them, and end the world, so they are striking back at the people building it.

I think that framing gets the cause and the symptom mixed up. The thing people are angry about is not artificial intelligence. It is something AI has happened to make extremely visible.

The Mainstream View (And Why It Falls Short)

The mainstream view says the backlash is about AI capability and AI risk. The view falls short because it cannot explain why the violence targets data centers and CEOs rather than the models themselves.

A movement genuinely scared of the technology would push for slower model releases, capability evals, and licensing regimes. Instead, it is throwing rocks at infrastructure.

Fortune’s framing is representative of the genre. The piece quotes safety researchers, mentions teen mental health, references the Drexel chatbot study, and treats the violence as an emotional response to specific AI harms.

That story works for a subset of the protest movement. There are real parents furious about the Character.AI lawsuits, real employees displaced by automation, and real artists whose portfolios were scraped. Those grievances are concrete and AI-specific.

But the actual targets do not match. The Molotov attack hit Sam Altman’s house, not OpenAI’s training compute. The Indianapolis shooting was about a data center vote, not about GPT-5’s behavior.

The Identity V boycott on April 23 is about NetEase using generative art while laying off the original artists. When you map the violence and the boycotts onto a chart, they cluster around two things: visible wealth concentration and visible local cost.

That is not what an AI-capability protest looks like. That is what a class protest looks like wearing AI clothing.

What’s Actually Happening

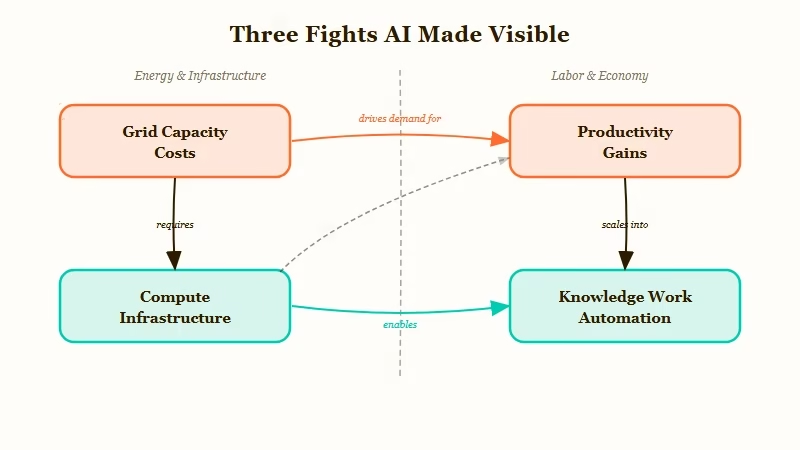

What is happening underneath the AI framing is that the technology has become the most legible symbol of three older fights: who pays the energy bill, who keeps the wages, and who gets a seat at the decision table.

AI did not start any of these fights. It just made them impossible to ignore.

The three fights are easy to name once you separate them from the AI surface story:

- The energy bill fight: who pays for the grid capacity that data centers consume

- The wage fight: who captures the productivity gain when knowledge work gets automated

- The seat-at-the-table fight: who decides whether a region hosts compute infrastructure or trains a frontier model

Take the energy fight. Data center buildouts in Virginia, Texas, and Indiana have pushed retail electricity rates up across entire grid zones. Time’s piece on the populist backlash already documents the rate increases in all three states.

People are not protesting because they are scared of an LLM. They are protesting because their power bill went up 18 percent and the new substation feeds a Microsoft compute farm.

Take the wage fight. The Gallup data on Gen Z AI sentiment is striking. More than half of US Gen Z uses AI regularly.

Fewer than a fifth feel hopeful about it. About a third say it makes them angry, and nearly half say it makes them afraid.

From what I have seen, that is not a “this technology is dangerous” reaction. It is a “this technology is being used to compress my wages and my career ladder while a small group captures the upside” reaction.

I have seen this dynamic in the white-collar job data from earlier this month. The pattern is bifurcation, not replacement. A few people get more leverage, and the rest get more pressure to do more for less.

| Surface complaint | What is being said underneath |
|---|---|
| AI is going to take my job | I already feel powerless to negotiate my wage, and AI is being used as the lever |
| Data centers are bad for the environment | I am subsidizing a billionaire’s compute through my electric bill |
| AI hallucinates and is unsafe | A small group of unaccountable people is making decisions that affect everyone, and nobody asked us |
| I do not trust AI companies with my data | I have not trusted any large institution for ten years, and AI is just the newest one |

Take the seat-at-the-table fight. The pattern that runs through every populist moment in the last fifteen years is the same: people feel that decisions which reshape their daily life are made by a small unaccountable group.

Wall Street played that role in 2008. Pharma played it during the opioid years. Tech platforms played it during the social media decade.

AI is just the current iteration, and Sam Altman is now the visible avatar the way Mark Zuckerberg was before him. The pattern is also visible in the AI startup ARR fantasy, where revenue numbers get inflated to justify valuations that concentrate even more wealth at the top.

The Part Nobody Wants to Admit

The part nobody wants to admit is that the AI backlash will not be solved by better alignment, better safety messaging, or better PR. It will only be solved by addressing the underlying class fight, and the AI industry has no incentive and no plan to do that.

Every public response from the labs treats the backlash as an information problem. Sam Altman tweets about safety. Anthropic publishes papers on responsible scaling.

OpenAI runs ad campaigns about AI helping doctors. The implicit theory is that if people understood the technology better, they would calm down.

The problem is that they understand it just fine. They understand that compute concentration is wealth concentration.

They understand that the productivity gains from AI are accruing to capital, not labor. They understand that nobody asked them whether they wanted their region’s grid to host a 500-megawatt data center. The information is not the issue.

I would argue the next twelve months are going to look worse, not better, for the labs on this front. The protest movement is still in its early phase, and the political class has not fully co-opted it yet.

When it does, you will get either a regulatory crackdown that hits compute and tax structure (the soft path) or rolling local violence and infrastructure sabotage (the hard path). Both happened to railways in the late 1800s and to oil in the 1970s. Neither was about the technology either.

The labs that survive this period will not be the ones with the best safety story. They will be the ones that figured out how to make the gains visible and distributable, which is much harder than writing a constitutional AI paper.

The AI companion regulation wave is a small preview. Tennessee’s developer-liability law, Washington’s HB 2225, and Oregon’s SB 1546 are not safety bills in any meaningful sense.

They are accountability bills, and accountability is what people are demanding.

The same dynamic is going to hit frontier labs within eighteen months. The framing will sound technical. The driver will be class.

Hot Take

If a sitting US senator gets shot by an anti-AI protestor before the end of 2026, the press will write it up as an AI safety story and miss the point completely. The real story will be that a small group of people got rich enough fast enough to make themselves a target, and chose to spend the next year talking about model evals instead of about who pays the bill. Expect the rocks to keep flying until somebody addresses the part of the economy AI made impossible to ignore.