Quick Answer: Enterprise AI agent adoption is largely theater. Despite headlines claiming agents are reshaping business, MIT research shows 95% of enterprise GenAI pilots are failing and Gartner projects 40%+ of agentic AI projects will fail by 2027. The operators winning with AI agents in 2026 are individuals and small teams, not Fortune 500 companies with procurement committees and governance reviews.

The enterprise AI revolution has been announced so many times that the announcement itself has become a product.

Jensen Huang stood on stage at NVIDIA GTC 2026 and told an arena full of people that AI agents would transform business.

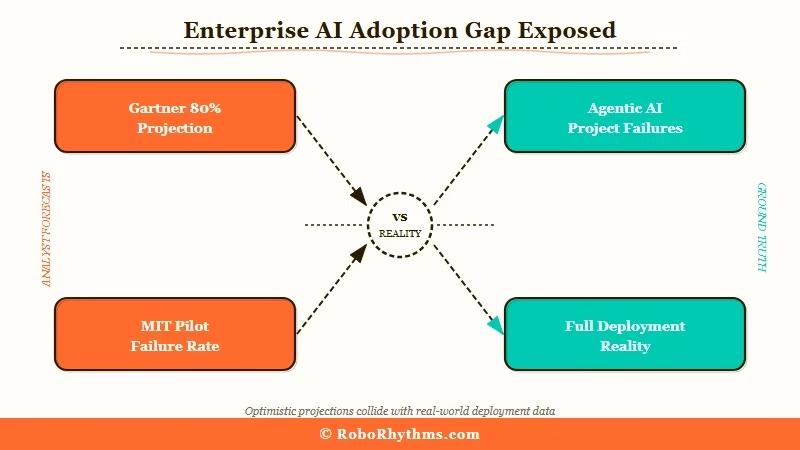

Gartner published research claiming 80% of enterprise applications would embed agentic AI by 2026. IDC has been tracking enterprise AI deployment budgets for three years running with the same conclusion: this is the decade of the AI enterprise.

I have been building and testing AI agent systems since 2024. What I see in the actual data and in the actual usage patterns tells a different story. Agents are winning.

The enterprise is not where that is happening.

The gap between the analyst narrative and the ground truth is not small. It is so large that the analyst reports and the systems running in practice might as well belong to two different worlds.

One of them has a lot of press releases. The other has working systems.

The Mainstream View (And Why It Falls Short)

The mainstream view on AI agents holds that enterprise adoption is the leading indicator of success.

The argument goes: when large companies commit budget, it signals maturity, which attracts developers, which creates tooling, which drives mass adoption. Enterprise first, everyone else follows.

Gartner and IDC have built entire research franchises on this narrative. Jensen Huang’s keynote at NVIDIA GTC 2026 positioned enterprise as the engine of the AI agent revolution.

If you only read analyst reports and investor decks, you would conclude that agents are being deployed at scale across global companies right now, and the results are coming.

Here is what those reports do not say: 95% of enterprise GenAI pilots are failing, according to research published by MIT. Not underperforming. Failing.

Gartner itself projects that more than 40% of agentic AI projects will fail by 2027 due to governance gaps alone, separate from technical issues.

The 72% of Global 2000 companies claiming to deploy agents beyond pilot stage is a number worth interrogating. “Beyond pilot” does not mean “working at scale.” It means someone in a department ran a proof of concept that was not shut down.

Only 6% of organizations have what could be called fully deployed agentic AI workflows. That is the real number. Subtract it from the 72%, and the other 66% are on their second or third pilot.

Enterprise AI adoption is a presentation. It exists to justify budget, satisfy board expectations, and appear favorably in competitor benchmarking reports.

The actual work is happening elsewhere, with people who cannot afford to spend two years in a governance review.

What Is Really Happening with AI Agents

The operators winning with AI agents in 2026 are individuals and small teams who skipped the governance committee entirely.

No procurement cycles. No IT sign-off. No 18-month deployment roadmaps. One person with API access and a real problem to solve.

I have tracked dozens of cases. The patterns are consistent:

- A solo consultant who automated their entire client onboarding process with a three-agent workflow in a weekend, cutting 12 hours of manual work per client to under two hours

- A content operation run by a single person that publishes more output per week than a 10-person team, using an agent pipeline they built themselves over two months

- A small e-commerce business that replaced three SaaS subscriptions with a custom agent that monitors inventory, generates product descriptions, and handles first-draft customer inquiry replies

None of these were enterprise deployments. None of them required a vendor relationship, an IT infrastructure team, or a steering committee. All of them are generating measurable output that justifies the time spent building the system.
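To make "justifies the time spent" concrete, here is a rough break-even sketch using the consultant example above. The 12-hour and 2-hour figures come from that example; the 16-hour build time is my own assumption for what "a weekend" means, and the function name is purely illustrative.

```python
import math


def break_even_clients(build_hours: float, hours_before: float, hours_after: float) -> int:
    """Return how many clients it takes for time saved to cover the build time."""
    saved_per_client = hours_before - hours_after
    if saved_per_client <= 0:
        raise ValueError("the agent must save time per client to break even")
    return math.ceil(build_hours / saved_per_client)


# From the consultant example: 12 hours of manual work per client, cut to 2.
# ASSUMPTION: a weekend of building is taken as 16 hours; the example gives no figure.
WEEKEND_BUILD_HOURS = 16

clients = break_even_clients(WEEKEND_BUILD_HOURS, hours_before=12, hours_after=2)
print(f"Break-even after {clients} client(s)")
```

Under those assumptions the build pays for itself by the second client. Swap in your own build time and per-task hours; the point is that an individual operator can run this check in a minute, without a business case document.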

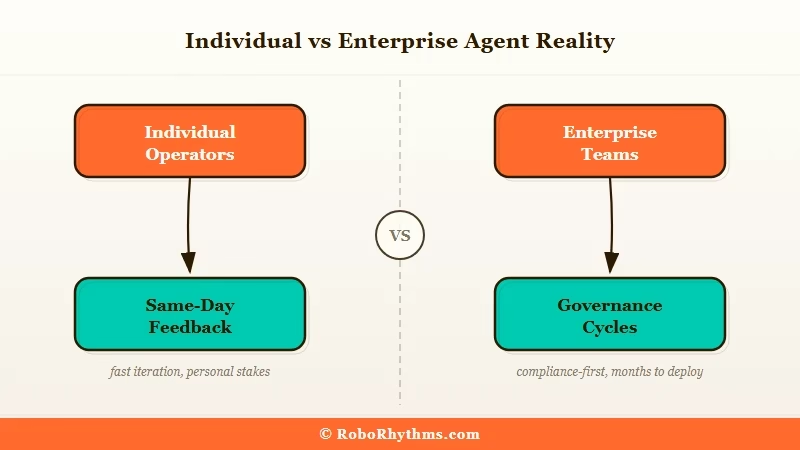

The reason individual operators succeed where enterprises fail is not technical skill. It is structure. A single person building an agent to solve their own problem has a feedback loop that closes in hours, not quarters.

They do not need to write a requirements document before they can test whether the agent works. They know within the same session whether the output is useful, and they can change it on the spot.

You can see exactly how this plays out in our breakdown of why AI agents fail in production.

The failure modes are almost exclusively organizational: unclear ownership, misaligned expectations, and feedback loops that span months instead of days.

Individual operators do not have those problems because there is no organization to create them.

According to MIT Sloan Management Review's coverage of enterprise AI adoption, one of the core barriers to enterprise AI is the gap between executive ambition and on-the-ground execution.

That gap does not exist for an individual operator who is both the executive and the executor. If you want to close that gap on your own terms, our guide to building your first AI agent covers the setup that works at the individual scale.

Individual agents also benefit from something enterprises cannot replicate: personal stakes. When you build an agent to handle a task in your own workflow, you care whether it delivers.

Enterprise agents are built by teams whose performance reviews are not tied to whether the agent delivers. The incentive structures are completely different, and it shows in the outcomes.

The Part Nobody Wants to Admit

The enterprise AI agent market is, in significant part, a vendor problem, not a company problem and not a technology problem. Vendors need enterprise contracts to justify their valuations.

Enterprise contracts require enterprise-grade governance, security, and audit trails. That overhead is why agents fail inside large companies before they ever do anything useful.

The honest reading of the data is that AI agent technology is mature enough to be genuinely useful right now, for problems with clear inputs and outputs, short feedback loops, and minimal coordination overhead.

That description matches the solo operator’s workflow almost perfectly. It matches an enterprise workflow almost never.

Here is the part the analyst reports skip: enterprise companies buying AI agent platforms are not buying deployed capability.

They are buying the option to deploy capability later, once the internal politics, security reviews, and change management are sorted out.

In the meantime, they announce the platform purchase in press releases and count themselves among the adopters.

Individual operators do not have that option. They either build something that works or they build nothing. That constraint produces results.

The way I see it, that forced accountability is the single biggest structural advantage of working at the individual scale.

The enterprise environment rewards the announcement over the result, which is why the announcement is what keeps appearing in the coverage.

The three-year adoption cycle that analysts keep predicting for enterprise AI agents may eventually arrive.

What is already here is a distributed population of individual operators who are quietly running businesses that would have required twice as many people two years ago.

You can get a sense of the tools they are using in our breakdown of the best AI agent tools in 2026.

| Deployment type | Time to first working agent | Governance overhead | Feedback loop | Share fully deployed |
|---|---|---|---|---|
| Individual operator | Hours to days | None | Same day | High |
| Small team (under 10) | Days to weeks | Minimal | Same week | Moderate to high |
| Mid-market company | Weeks to months | Moderate | Monthly review cycles | Low to moderate |
| Enterprise (1000+ employees) | Months to years | Extensive | Quarterly governance | 6% |

That table is not a prediction. It is a description of what is already happening. And the 6% figure comes from Gartner’s own research, the same organization running the enterprise AI hype cycle.

Hot Take

The enterprise AI agent story is a vendor-sponsored illusion, and the operators who saw through it are already a year ahead.

The most important AI agent deployment in 2026 is not a Fortune 500 initiative with a press release and a Gartner citation. It is a single person running a business that should require a team, because they built the team themselves out of code and API calls. Every enterprise roadmap presentation about agents is a distraction from the operators who already won while the committee was still forming.

Frequently Asked Questions

Are enterprise AI agent deployments failing?

Gartner projects more than 40% of agentic AI projects will fail by 2027, and MIT research shows 95% of enterprise GenAI pilots are currently failing. Only 6% of organizations have fully deployed agentic AI workflows. Enterprise deployment is lagging far behind enterprise announcements.

Why are individual operators more successful with AI agents than enterprises?

Individual operators have short feedback loops, no governance overhead, and personal stakes in the outcome. When a solo operator builds an agent, they know within hours whether it works. Enterprise agents face 18-month deployment cycles and change management processes that remove the feedback the system needs to improve.

Is the enterprise AI agent market real?

The spending is real. The deployed capability is not proportional to the spending. Enterprise companies are buying AI agent platforms to justify budget and appear competitive in benchmarking. That does not mean agents are not useful. It means the useful deployments are happening at a much smaller scale.

Who is winning with AI agents in 2026?

Individual operators and small teams who built agents to solve specific, measurable problems in their own workflows. They skipped vendor procurement, built directly on APIs, and have been running working systems for months while enterprises are still completing pilots.

Will enterprise AI agents catch up?

Probably, in time. The three-year adoption cycle analysts predict is plausible for companies willing to restructure workflows around agent capabilities, and the gap between announcement and working deployment should close as governance frameworks mature. Right now, that gap is wide and getting wider.

What makes an AI agent project succeed?

Three things: a single, clear problem with measurable output; a feedback loop that closes in days, not quarters; and one person who personally owns the result. Enterprise projects fail because they optimize for process compliance over output.