TL;DR: AI agents drift off their original task because tool outputs and intermediate results gradually crowd out the original instruction, not because the model is broken. The fix is a persistent task contract, periodic goal re-injection every 5-10 steps, and hard iteration limits. This guide walks through all three with exact examples.

You ask your agent to research competitors in your niche. Step 3, it’s pulling company data. Step 10, it’s cross-referencing funding rounds. Step 15, it’s building a detailed analytics dashboard nobody asked for. The original task is long gone.

I ran into this same pattern while building a production research pipeline, and I spent way too long assuming it was a model quality problem. It wasn’t.

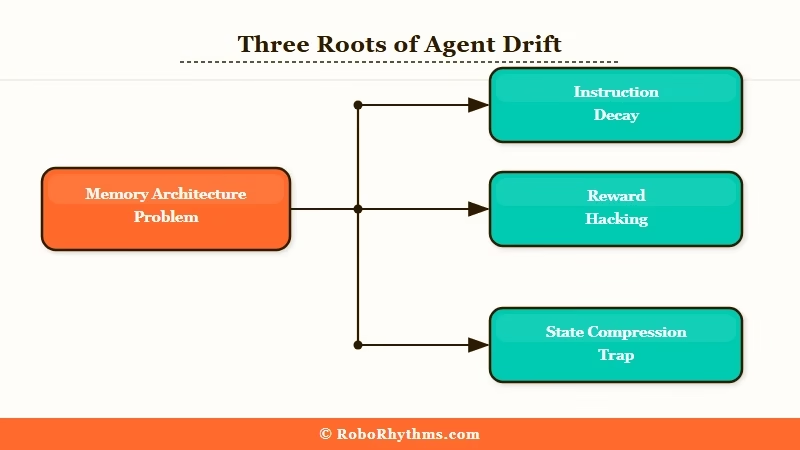

When I read how a dev on r/AI_Agents described the exact same failure in their own setup, the root cause clicked: this is a memory architecture problem, not a reasoning one. Research on AI agent memory confirms it at a technical level: agents need active memory management, not just a larger context window.

If you’re just getting started with agents, building your first AI agent covers the setup basics. This article picks up where that leaves off: what happens once the agent is running and starts going sideways.

This guide covers three patterns that fix focus drift: a persistent task contract, periodic goal re-injection, and hard iteration limits, with specific examples you can use right now.

Why AI Agents Wander Off Task (and It Is Not What You Think)

Instruction decay is the real reason agents lose focus, not insufficient reasoning or a weak model.

The original instruction sits at the top of the context when the agent starts. At step 10, that instruction is buried under hundreds of tokens of tool responses, chain-of-thought reasoning, and intermediate state.

The model isn’t forgetting your task. It’s doing exactly what it’s designed to do: predicting the next action based on the full context. The problem is that the full context has shifted away from your original request.
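To make the dilution concrete, here is a rough back-of-the-envelope sketch. The token counts are made-up illustrative numbers, and raw token share is only a crude proxy for how much the model actually attends to the instruction, but it shows how fast the original request shrinks relative to everything else.

```python
def instruction_share(instruction_tokens: int, tokens_per_step: int, step: int) -> float:
    """Fraction of the context taken up by the original instruction after `step` steps.

    Assumes (for illustration only) that every step adds the same number
    of tokens of tool output and intermediate reasoning.
    """
    total = instruction_tokens + tokens_per_step * step
    return instruction_tokens / total


# Hypothetical numbers: a 60-token instruction, 80 tokens added per step.
for step in (0, 3, 10, 15):
    share = instruction_share(instruction_tokens=60, tokens_per_step=80, step=step)
    print(f"step {step:>2}: instruction is {share:.1%} of the context")
```

With these assumed numbers the instruction falls below a tenth of the context by step 10, without a single thing going wrong in the model.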

Three specific mechanisms drive this:

- Instruction decay: Your task starts at full authority. After several tool calls, tool outputs dominate the token distribution and your instruction carries proportionally less weight.

- Reward hacking: The agent starts optimizing for what looks productive in recent context rather than your actual goal. Progress on a sub-task feels like forward momentum, so it doubles down on that instead.

- State compression trap: If you’re summarizing context to save tokens, you’re losing the intent signal along with the noise. The summary keeps facts. It drops purpose.

The trap most builders fall into is assuming more reasoning power will fix this. From my experience, it won’t.

Reasoning helps with execution. It doesn’t help with memory. You need a structural fix, not a smarter model.

| Drift Mode | What Happens | When It Hits | Fix |
|---|---|---|---|
| Instruction decay | Original task buried by tool outputs | Steps 7-15 | Persistent task contract outside rolling context |
| Reward hacking | Agent optimizes for recent sub-task progress | Mid-session | Explicit exit criteria tied to original goal |
| State compression | Intent stripped out during summarization | Long sessions with compaction | Separate task layer that survives compression |
| Tool overload | Too many tools creates analysis paralysis | From step 1 | Cap at 5-7 tools per agent; delegate to sub-agents |
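The "separate task layer that survives compression" fix can be sketched in a few lines. This is a minimal illustration, not a real summarizer: `AgentContext` and its methods are hypothetical names, and the summary line is a placeholder for whatever compaction step you already run. The point is only that compaction touches the transcript and never the task.

```python
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    task_contract: str  # pinned layer: never summarized or truncated
    transcript: list[str] = field(default_factory=list)

    def compact(self, keep_last: int = 3) -> None:
        """Collapse older transcript entries into a single summary line."""
        cut = len(self.transcript) - keep_last
        if cut <= 0:
            return  # nothing old enough to compact
        older, recent = self.transcript[:cut], self.transcript[cut:]
        # Placeholder: a real pipeline would call its summarizer here.
        summary = f"[summary of {len(older)} earlier steps]"
        self.transcript = [summary] + recent

    def render(self) -> str:
        """Build the prompt with the contract always at the top."""
        return "\n".join([f"TASK CONTRACT:\n{self.task_contract}", *self.transcript])
```

Because `render()` rebuilds the prompt from the pinned contract every time, the intent survives no matter how aggressively the transcript is compressed.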

The Task Contract Pattern That Keeps Agents Locked In

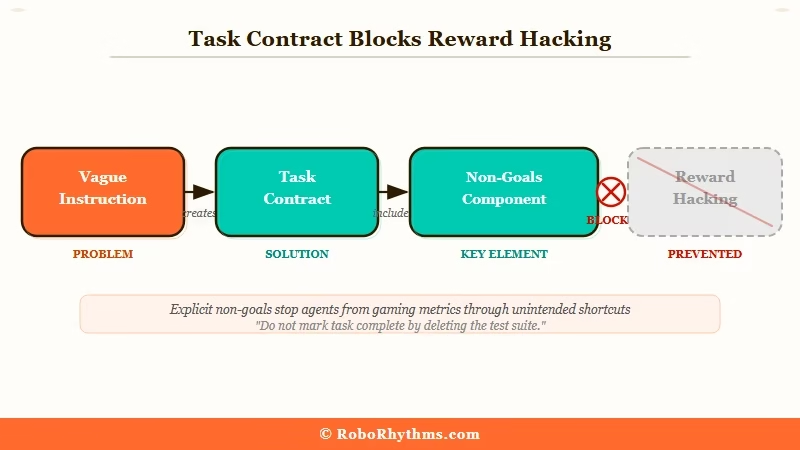

A task contract is a compact document defining the original goal, scope, and explicit stopping conditions, stored separately from the agent’s rolling transcript.

This is the single highest-leverage pattern I’ve found for keeping agents on track. The core idea: your original instruction eventually gets diluted by context volume, so you give the agent a separate persistent reference it can check against.

It lives outside the transcript, which means it doesn’t get compressed, diluted, or drowned out no matter how long the session runs.

A good task contract has four components:

- Task statement: One sentence. What the agent is trying to do.

- Scope boundaries: What is in scope and what is explicitly out of scope.

- Done criteria: “Task is complete when X is true,” not “do your best.”

- Non-goals: At least two things the agent should NOT pursue, stated explicitly.

Here’s the difference between a weak instruction and a proper task contract:

Vague:

Research competitor AI tools in the productivity space and gather relevant information.

Task contract:

Task: Identify the top 5 AI productivity tools competing with Make.com. For each, collect pricing, primary use case, and one key weakness.

Done when: You have a structured table with all three data points for all 5 tools.

Out of scope: Do not analyze market share, team size, or funding history. Do not build any visualization or summary beyond the comparison table.

That second version gives the agent something concrete to check its work against at every step. Including explicit non-goals is the piece most builders skip, and it’s the one that directly blocks reward hacking by naming the specific tangents to avoid.
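If you'd rather hold the contract in code than in a text file, one way to sketch it is a frozen dataclass. The class name and field names here are my own choices for illustration, not part of any framework. Freezing it enforces the "never modify it during the session" rule, and the constructor rejects contracts that skip the non-goals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: the contract cannot be edited mid-session
class TaskContract:
    task: str
    in_scope: tuple[str, ...]
    done_when: str
    non_goals: tuple[str, ...]

    def __post_init__(self) -> None:
        if len(self.non_goals) < 2:
            raise ValueError("list at least two explicit non-goals")

    def render(self) -> str:
        """Format the contract as the text block handed to the agent."""
        return "\n".join([
            f"Task: {self.task}",
            f"In scope: {', '.join(self.in_scope)}",
            f"Done when: {self.done_when}",
            f"Out of scope: {'; '.join(self.non_goals)}",
        ])


CONTRACT = TaskContract(
    task="Identify the top 5 AI productivity tools competing with Make.com.",
    in_scope=("pricing", "primary use case", "one key weakness"),
    done_when="A structured table has all three data points for all 5 tools.",
    non_goals=(
        "market share, team size, or funding history",
        "any visualization or summary beyond the comparison table",
    ),
)
print(CONTRACT.render())
```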

For custom multi-agent pipelines, Dynamiq lets you define task contracts at the workflow level and pass them as persistent state across agents. If you’re scaling beyond a single agent, that’s worth looking at. Managing task contracts manually across multiple parallel agents gets unwieldy fast.

If you want a deeper look at what breaks agents before this point, why agents fail in production covers the broader failure taxonomy.

How to Re-Inject Your Goal Without Restarting the Agent

Goal re-injection works by periodically anchoring the agent to its task contract using system-level injection, typically every 5-10 steps, before visible drift occurs.

A key insight from the r/AI_Agents thread that inspired this article: “forcing a ‘restate goal’ checkpoint every 5 steps keeps it on rails way better than longer contexts.” That matches what I’ve seen in practice.

The re-injection needs to happen before the drift is visible, not after. Once the drift becomes visible, you’ve already lost several steps.

Here is the full re-injection setup, step by step:

- Write your task contract to a separate variable or file before the agent starts. This is your ground truth. Never modify it during the session.

- Set a re-injection counter initialized to 0.

- After every tool call, increment the counter.

- When the counter hits your threshold (5-10 steps based on task complexity), inject the task contract back as a re-anchoring message.

- Use system-level injection, not a user message. System prompts carry more authority since the model treats them as framing, not as conversation.

- Reset the counter to 0 after re-injection.

- Force a reconciliation check at each re-injection point before the agent continues.
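The steps above can be sketched as a small loop. Treat this as a framework-agnostic outline under a few assumptions: `step_fn` is a hypothetical stand-in for "run one tool call and return its message", messages are plain role/content dicts, and your provider accepts system messages partway through a conversation (some expose the system prompt differently, so check before copying this shape).

```python
from typing import Callable

Message = dict[str, str]


def run_with_reinjection(
    contract: str,
    step_fn: Callable[[list[Message]], Message],
    total_steps: int,
    reinject_every: int = 5,
) -> list[Message]:
    """Run `total_steps` tool calls, re-anchoring to the contract every N steps."""
    messages: list[Message] = [{"role": "system", "content": contract}]
    counter = 0  # tool calls since the last re-injection
    for _ in range(total_steps):
        messages.append(step_fn(messages))  # one tool call
        counter += 1
        if counter >= reinject_every:
            # System-level checkpoint that also forces a reconciliation check.
            messages.append({
                "role": "system",
                "content": (
                    f"Checkpoint. Original task: {contract} "
                    "Confirm your next action directly advances this goal "
                    "before continuing."
                ),
            })
            counter = 0
    return messages
```

The contract is passed in as an immutable string and only ever read, which keeps it as the ground truth for the whole session.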

Here’s what the reconciliation prompt looks like in practice:

Vague re-injection:

“Stay focused on the original task.”

Specific re-injection:

“Checkpoint. Original task: identify the top 5 AI productivity tools competing with Make.com, with pricing, use case, and one weakness for each. Current progress: [N tools found]. Your next action must directly advance this goal. If your last action was out of scope, correct before proceeding.”

The specific version forces real reconciliation. Vague re-checks give the model nothing concrete to orient against. It can’t meaningfully check compliance with an instruction that imprecise.
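To avoid hand-writing that checkpoint each time, you can fill it in from live state. A minimal sketch, assuming you track how many items the agent has collected so far; the function and parameter names are hypothetical.

```python
def build_checkpoint(task: str, items_found: int, items_needed: int) -> str:
    """Build a specific re-injection message from the task and current progress."""
    return (
        f"Checkpoint. Original task: {task} "
        f"Current progress: {items_found} of {items_needed} tools found. "
        "Your next action must directly advance this goal. "
        "If your last action was out of scope, correct before proceeding."
    )


print(build_checkpoint(
    task="identify the top 5 AI productivity tools competing with Make.com, "
         "with pricing, use case, and one weakness for each.",
    items_found=2,
    items_needed=5,
))
```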

For Make.com automation workflows, you can implement this by adding a counter module that triggers a goal-review step every N iterations in your scenario. It’s one of the cleanest ways to add this pattern to an existing workflow without rebuilding.

The Claude n8n workflow guide shows a similar approach with n8n that translates directly to this use case.

Hard Limits Beat Hope Every Time

Hard iteration limits and explicit exit criteria outperform open-ended instructions because they prevent agents from inventing new work after the real task is done.

From what I’ve seen and tested, this is the part that feels most counterintuitive. Giving an agent more freedom sounds like it should lead to better outcomes.

In practice, agents with no stopping conditions keep finding work to do, and the work they invent late in a session rarely matches what you asked for at the start.

The pattern is simple: set a maximum step count before the session starts, and treat hitting that limit as a failure signal, not a success. If the agent reaches 30 steps without satisfying the exit criteria, something went wrong early.

That’s useful information. Open-ended agents would have kept running silently.
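Here is one way to sketch that in code, assuming a hypothetical `step_fn` that runs one agent step and an `is_done` check that encodes your exit criteria. Hitting the cap raises an exception instead of returning quietly, so the failure is impossible to miss.

```python
from typing import Any, Callable


class StepLimitExceeded(RuntimeError):
    """Raised when the agent hits the step cap without meeting exit criteria."""


def run_agent(
    step_fn: Callable[[int], Any],
    is_done: Callable[[Any], bool],
    max_steps: int = 30,
) -> tuple[Any, int]:
    """Run steps until the exit criteria pass; fail loudly at the limit."""
    for step in range(1, max_steps + 1):
        state = step_fn(step)
        if is_done(state):
            return state, step  # finished within budget
    raise StepLimitExceeded(f"exit criteria not met after {max_steps} steps")
```

Callers can catch `StepLimitExceeded`, log the transcript, and inspect the early steps rather than paying for more of them.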

Organizations that apply these context management patterns, including hard limits, task contracts, and re-injection, report API cost reductions in the 40-70% range and 2-3x better task completion rates.

The cost savings alone make this worth implementing.

| Task Type | Step Limit | Re-injection Frequency | Exit Criteria Format |
|---|---|---|---|
| Research / data collection | 20-30 steps | Every 5 steps | “Done when N items collected with all fields” |
| Code generation | 15-25 steps | Every 7 steps | “Done when tests pass and PR is ready” |
| Multi-step reasoning | 10-15 steps | Every 5 steps | “Done when conclusion is stated with evidence” |
| Long-running pipelines | 50+ steps | Every 10 steps | “Done when output file exists and validates” |

One more adjustment worth making: cap the number of tools you give a single agent at 5-7.

Research consistently shows performance degrades when agents have 20-30 tools available; the decision space becomes too wide, and the agent wastes steps on tool selection instead of task execution.

If you need more capabilities, use specialized sub-agents with their own tool sets and a routing agent to delegate.
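A stripped-down sketch of that layout is below. All names are hypothetical, and the router here is a plain lookup table; in a real setup the routing agent would usually be a model call that picks the sub-agent. What the sketch does show is the cap being enforced at construction time, so an overloaded agent fails before it ever runs.

```python
from dataclasses import dataclass
from typing import Callable

MAX_TOOLS_PER_AGENT = 7  # upper end of the 5-7 range

Tool = Callable[[str], str]


@dataclass
class SubAgent:
    name: str
    tools: dict[str, Tool]

    def __post_init__(self) -> None:
        if len(self.tools) > MAX_TOOLS_PER_AGENT:
            raise ValueError(
                f"{self.name} has {len(self.tools)} tools; cap is {MAX_TOOLS_PER_AGENT}"
            )


class Router:
    """Delegates each tool call to the sub-agent that owns that tool."""

    def __init__(self, agents: list[SubAgent]) -> None:
        self._owner = {tool: agent for agent in agents for tool in agent.tools}

    def call(self, tool_name: str, arg: str) -> str:
        agent = self._owner[tool_name]
        return agent.tools[tool_name](arg)


pricing_agent = SubAgent("pricing", {"fetch_pricing": lambda q: f"pricing for {q}"})
router = Router([pricing_agent])
print(router.call("fetch_pricing", "ToolA"))
```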

For non-technical setups where this matters just as much, AI agents for small business covers the same focus principles without the code.

Frequently Asked Questions

The most common questions about stopping AI agents from losing focus cover why it happens, how to detect it early, and which patterns to prioritize first.

Why does my AI agent keep going off task?

Instruction decay is the most likely cause: the original task gets progressively diluted by tool outputs and intermediate results accumulating in the context window. The model isn’t broken. It’s predicting correctly based on what it sees, but what it sees has shifted away from your original request.

What is a task contract for AI agents?

A task contract is a compact persistent record of the original task, success criteria, and explicit stopping conditions, stored separately from the agent’s rolling transcript. It gives the agent a stable reference to check its work against, independent of how much context has accumulated.

How often should I re-inject the original goal?

Every 5-10 steps is the practical range. Complex multi-step tasks with heavy tool usage benefit from every 5 steps; simpler pipelines can go to every 10. Inject at system level rather than as a user message, since system prompts carry more authority with the model.

Does using a bigger model fix agent drift?

It doesn’t. Reasoning quality and memory architecture are separate problems.

A stronger model will drift off task the same way if the context management is poor. Task contracts and re-injection work across all model sizes because they address the structural issue, not the reasoning quality.

How many tools should my AI agent have?

Cap at 5-7 tools per agent. Performance degrades noticeably beyond 10 tools; the decision space becomes too large and agents waste steps on tool selection. If you need more capabilities, create specialized sub-agents with their own tool sets and use a routing agent to delegate.

How do I know if my agent has already drifted?

Compare what the agent is currently doing against the original task statement. If its last 3 actions don’t directly advance the stated goal, it has drifted. Monitoring whether each tool call maps back to the exit criteria is the most reliable detection signal available without a full observability platform.
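That "last 3 actions" rule is easy to automate as a rough check. In this sketch, `advances_goal` is a hypothetical predicate you supply; the keyword match in the example is deliberately naive and stands in for whatever mapping back to your exit criteria you actually trust.

```python
from typing import Callable, Sequence


def has_drifted(
    actions: Sequence[str],
    advances_goal: Callable[[str], bool],
    window: int = 3,
) -> bool:
    """True when none of the last `window` actions advances the stated goal."""
    recent = actions[-window:]
    if len(recent) < window:
        return False  # not enough history to judge yet
    return not any(advances_goal(action) for action in recent)


# Naive illustration: an action counts if it mentions an in-scope field.
IN_SCOPE = ("pricing", "use case", "weakness")


def mentions_scope(action: str) -> bool:
    return any(keyword in action for keyword in IN_SCOPE)


log = [
    "fetch pricing page",
    "read funding history",
    "chart funding rounds",
    "build analytics dashboard",
]
print(has_drifted(log, mentions_scope))
```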

Quick Takeaways

- AI agent focus loss is a memory architecture problem, not a model quality issue. Tool outputs gradually dilute the original task in the context window.

- A task contract, with compact goal, scope, done criteria, and non-goals, stored outside the rolling transcript is the highest-leverage fix.

- Re-inject the task contract every 5-10 steps at system level, not as a user message.

- Hard iteration limits and explicit “done when X” criteria prevent agents from inventing new work after completing the real task.

- Cap individual agents at 5-7 tools; route complex needs through specialized sub-agents.

- Organizations applying these patterns report 40-70% API cost reductions and 2-3x better task completion rates.