My Take: Free AI models in 2026 are a poor deal for any builder doing real work. The headline price of zero is paid in data exposure, rate limits, jurisdiction risk, and the dependency lock-in that arrives the day pricing changes. Never wire your product to a model you would not pay for.

The pitch is everywhere right now. Owl Alpha is free on OpenRouter. DeepSeek V4 is roughly 1/27th the cost of Anthropic for the same workloads. Gemini 2.5 Pro has a 1M-context window on a free tier. GitHub Models will serve you GPT-4.1 at zero per-token cost.

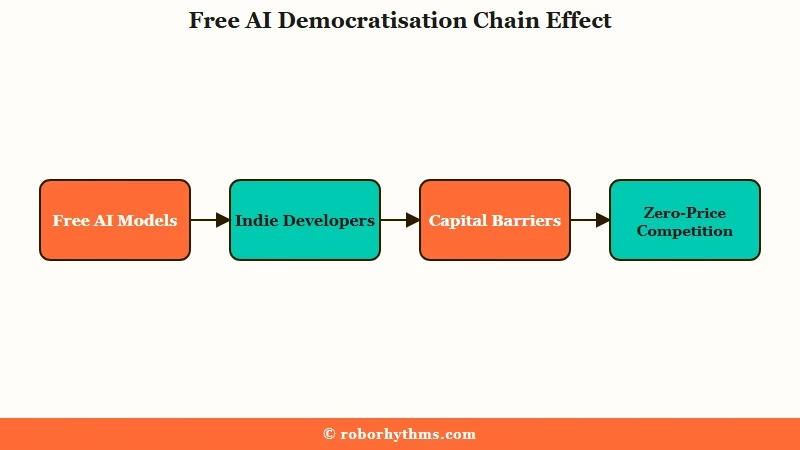

The collective response across r/AI_Agents, r/LocalLLaMA, and X treats this as the democratisation moment.

I am going to push back on that. From what I have watched play out across the spring 2026 release cycle, the cost of every free AI tier is real. It is just denominated in something other than dollars.

This is a contrarian opinion piece, not a roundup. The mainstream view is that free AI access has democratised builder economics. The way I see it, free AI models are the worst deal a serious builder can take.

The Mainstream View And Why It Falls Short

The mainstream argument is straightforward: free models lower the barrier to entry, indie developers can prototype without burning capital, and competition between providers drives prices toward zero for everyone.

That argument is widely shared, including in the awesome-agents 2026 guide to free AI inference providers, which positions free APIs as a strict win for builders.

The reasoning is appealing. If you can run a coding agent overnight on Owl Alpha for $0 instead of $30 on GPT-5.5, that is real money saved.

If you can prototype a side project on Gemini 2.5 Pro’s free tier instead of putting a credit card down, that is friction removed. If DeepSeek V4 will summarise a thousand documents for $0.40 instead of $11 on Claude Sonnet 4.6, your batch jobs are cheaper.

In my experience, this framing is correct for hobby use and explicit one-off prototyping. It is wrong for anything you intend to ship to users or run on a schedule.

Here is the part the mainstream argument leaves out. “Free” is a marketing label, not a financial one.

The provider is not a charity. The cost is being collected somewhere, and the somewhere is always the same: your prompts, your data, your dependency, or your time.

What Is Really Happening With Free AI Tiers

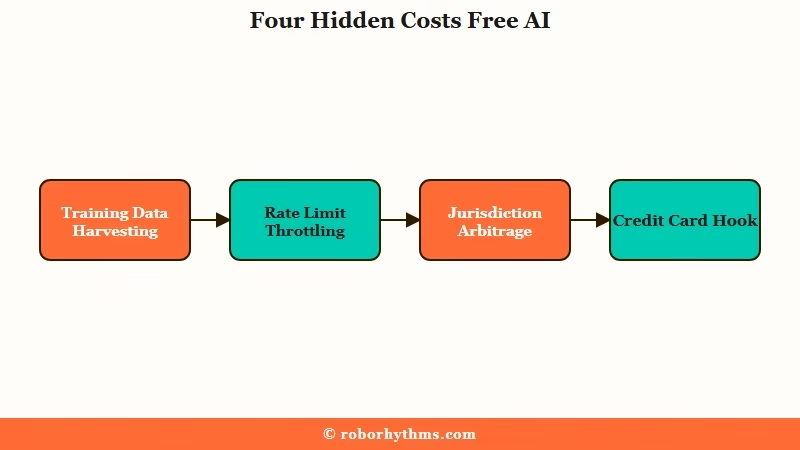

Every free AI tier in 2026 monetises through one of four mechanisms, in order of how aggressive each one is:

- Training-data harvesting. Your prompts and completions become training fuel for the next model release.

- Rate-limit throttling. Free-tier RPM caps make production use impossible, forcing you onto paid plans.

- Jurisdiction arbitrage. The cheapest providers route through legal regimes you would not pick for your data on purpose.

- The credit-card hook. Trial credits cover testing only, then your card is on file when production traffic hits.

None of them is in the user’s favour. All of them are documented.

On the data-harvesting side, the Captain Compliance 2026 AI privacy rankings put Meta AI, Gemini, and Microsoft Copilot at the bottom of the privacy scale.

The reasons are explicit: extensive data collection through mobile apps, vague policies, third-party sharing with advertising networks and corporate affiliates.

Mistral’s Le Chat sits at the top of the same ranking because it is French and bound by GDPR. The pattern is not subtle.

On the throttling side, Google AI Studio caps Gemini 2.5 Pro free-tier access at 5 requests per minute. Mistral’s free tier sits at 2. HuggingFace’s free Inference API can take 30 seconds or more to cold-start an unpopular model.

Those numbers are fine for a prototype. They are unworkable for a product with users.
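To make the throttling point concrete, here is a rough sketch of how long a burst of traffic takes to clear under a per-minute cap. The 5 and 2 RPM caps are the figures quoted above. The burst size of 200 requests and the helper name `windows_to_clear` are illustrative assumptions, and the model ignores retries, queuing overhead, and daily quotas.

```python
import math


def windows_to_clear(burst_requests: int, rpm_cap: int) -> int:
    """Number of one-minute windows needed to serve a burst of requests
    when the provider admits at most rpm_cap requests per minute."""
    if rpm_cap <= 0:
        raise ValueError("rpm_cap must be positive")
    return math.ceil(burst_requests / rpm_cap)


# Caps quoted in the text; the 200-request burst is a hypothetical load.
free_tier_caps = {
    "Gemini 2.5 Pro free tier": 5,
    "Mistral free tier": 2,
}

for name, cap in free_tier_caps.items():
    minutes = windows_to_clear(200, cap)
    print(f"{name}: about {minutes} minutes to clear 200 requests")
```

Under these assumptions, a Monday-morning burst that a paid tier would absorb in seconds takes the better part of an hour or more on a free one.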

On the jurisdiction side, DeepSeek’s privacy policy explicitly acknowledges data is stored on servers in China and may be shared to “comply with legal obligations” or “public interest” as defined by Chinese law. The cost-per-token math is real. So is the answer to the question “would you put your business’s prompts under that legal regime?”

On the credit-card-hook side, every “free trial” offer on the market in 2026 (Anthropic $5, xAI $25, NVIDIA credits, DeepSeek 5M tokens) is structured to get you through integration before the bill starts. The free credits cover testing. The moment you have anything resembling production traffic, your card is being billed.

| Free AI tier | What is “free” | What costs you |
|---|---|---|
| Owl Alpha (OpenRouter Stealth) | $0 per million tokens | Prompts logged for model improvement, no SLA, will go paid |
| DeepSeek V4 free credits | 5M tokens of API access | Data stored on Chinese servers, jurisdiction risk |
| Gemini 2.5 Pro free tier | 1M context window, 5 RPM | Extensive data harvesting per privacy rankings |
| Mistral Le Chat free | 2 RPM rate limit | Strongest privacy in the market, but unusable for production volume |
| HuggingFace free Inference | Open-source models | 30+ second cold starts on non-popular models |
| GitHub Models free playground | GPT-4o, GPT-4.1, o3 | Non-production, no commercial use license |
| Anthropic / xAI trial credits | $5 to $25 of testing | Credit card required for any meaningful work |
| Owl Alpha price after window | Will not stay free | Other Alpha codenames have all gone paid |

A worked example of what the trade really costs:

Before: Indie builder picks Gemini 2.5 Pro free tier to prototype a customer-support agent. Saves $40/month on early dev. Ships to 200 users. Hits the 5 RPM cap by 9am every Monday. Users complain, builder migrates to paid Anthropic, has to re-test 600 prompts because Anthropic’s defaults differ on temperature and system prompts. Total switching cost: roughly two weeks of engineering time, no agent during the migration window, $4,000 of opportunity cost on a $40 savings.

After: Same builder picks Anthropic from day one for $0.50 in input cost per million tokens on the cached tier. Ships at the same speed, with no throttling, no migration, no privacy review. Net result: spent $40 more, saved two weeks of engineering and a stack-rewrite tax.
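The arithmetic behind the two scenarios can be checked with a small break-even sketch. The $40 monthly saving and the $4,000 switching cost are the figures from the Before scenario. The function name `breakeven_months` is hypothetical, and the model assumes the saving stays flat for as long as the builder remains on the free tier.

```python
def breakeven_months(switching_cost: float, monthly_savings: float) -> float:
    """Months of free-tier savings needed to offset a one-time migration cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return switching_cost / monthly_savings


# Figures from the worked example: $40/month saved, roughly $4,000 to migrate.
months = breakeven_months(switching_cost=4000, monthly_savings=40)
print(f"Break-even after {months:.0f} months ({months / 12:.1f} years) on the free tier")
```

On those numbers the free tier would have to last more than eight years without a forced migration to pay for itself, which is the opposite of what the rate-limit cap allows once real users arrive.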

The second story is the one nobody writes a Reddit post about because nothing went wrong. It is also what serious builders are doing.

The Part Nobody Wants to Admit

The dirty secret of free AI tiers is that they are designed for the provider to win two ways: capture market share from competitors, then convert the same captured users to paid once dependency is established.

From what I have watched across multiple Alpha-codename cycles on OpenRouter, this is not speculation. It is the documented pattern.

Polaris Alpha was free for a window, then unmasked as GPT-5.1 and moved to standard pricing. Sherlock Alpha was free for a window, then unmasked as Grok 4.1 and moved to standard pricing.

Owl Alpha is currently free. The number of OpenRouter Alpha models that remained free permanently is zero.

The framing of “free now, paid later” sounds neutral, but the cost is the migration tax. Every builder who wires a product to a free tier is paying that tax later.

The economics are predictable enough that the providers are pricing it in. The free window is the customer-acquisition cost the provider chose to take, and you are the customer.

In my experience, the only builders who consistently come out ahead on free tiers are the ones who knew from day one that they were doing one-off prototyping and would discard the work the moment they shipped. Every builder who tried to keep the free tier in their production stack has paid for it in downtime, data exposure, or rewrite cost.

Our review of Owl Alpha covers the specific numbers on the speed and reliability gap. Our GPT-5.5 vs Claude Sonnet 4.6 comparison shows what production pricing really looks like for the workloads where free tiers fall short. Our autonomous Claude Code piece is a working example of a production stack designed around paid models from day one.

Hot Take

Free AI models are the cigarette companies of 2026. The first hit is free, you get used to the dependency, and the bill arrives once you cannot quit.

Build like a grown-up: pick the model you would pay for and pay for it from day one. You will pay for it eventually anyway, and by then you will also have eaten the migration cost.