TL;DR: The strongest AI job-search agents in 2026 are not single big prompts. They are 14-skill systems running on Claude Code, with one markdown file per task, Playwright for portal scanning, and a hard human-in-the-loop gate before any application is submitted. The open-source career-ops build scored 740+ listings and landed a Head of Applied AI hire on this pattern. Here is how to build your own.If you have spent any time on r/AI_Agents this month, you have seen the same shape of post over and over. Someone wires up Claude to a job board, lets it run unattended, sends 200 applications in a day, and gets exactly zero callbacks.

The pattern that has been working in 2026 is the opposite. Tight per-task skills, hard quality gates, a refusal to spray-and-pray, and a real human signing off on each application before it leaves the desk.

The way I see it, the most interesting build of the year is santifer’s open-source career-ops. Fourteen skill modes, a Go-based terminal dashboard, Playwright for portal navigation, and per-listing ATS-optimised PDF generation. The creator used it to evaluate 740+ listings, generated 100+ personalised CVs, and landed a Head of Applied AI role on the strength of it.

This is a build walkthrough, not a press release. I will lay out the 14-skill pattern, the Playwright wiring, the CV adaptation logic, and what this costs to run for a serious month-long search.

Why a Skill-Based Agent Beats One Long Prompt

A skill-based agent gives Claude Code one well-scoped task per file instead of one giant prompt holding the whole workflow.

That is what makes it survive past the first three runs. One monolithic agent that tries to scan portals, score listings, tailor CVs, and prep interviews all from one prompt drifts within five turns.

In my experience, the failure mode of single-prompt agents is always the same. Context overflows, the model starts forgetting earlier rules, and the output regresses to generic.

The fix is not a longer prompt. It is more files.

The career-ops repo names this directly: 14 skill modes in .claude/skills/career-ops/, each one a markdown file with YAML frontmatter that tells Claude what it is doing, what tools it has, and what it is allowed to output. Aakash Gupta’s “Job Search OS” build runs 18 skills on the same pattern, each one mapping to a specific step of the search.

The other reason this pattern wins is editability. Claude can read and edit its own skill files on request. You can tell the agent “tighten the scoring threshold to 4.2 instead of 4.0” and it will go open the relevant .md file and patch the rule in place.

What the 14-Skill Pattern Looks Like in Practice

The pattern is one markdown file per task, YAML frontmatter at the top declaring the skill, the body holding the actual instructions, all sitting under .claude/skills//.

A 14-skill system has 14 of these. Claude Code loads them on session start and invokes them via slash commands like /career-ops:scan or /career-ops:score.

A worked example of the file structure for a job-search build:

.claude/skills/career-ops/

scan.md # Portal scanner, 45+ companies via Ashby/Greenhouse/Lever

score.md # Reasoning-based fit scoring against your CV

tailor.md # Per-listing CV adaptation, ATS keyword injection

pdf.md # HTML to ATS-safe PDF via Playwright

intel.md # Company research, salary bands, level mapping

apply.md # Application form filling (does NOT auto-submit)

followup.md # Email follow-up templates per company stage

interview.md # Per-role question prep from job description

negotiate.md # Offer evaluation and counter-script generation

log.md # Application status logging to the Go dashboard

modes.md # Career archetype switching (engineer / PM / leadership)

voice.md # Writing-samples loader for tone-matched cover letters

reject.md # Anti-spray filter: refuse anything scoring under 4.0/5

reset.md # State reset for the next role search

modes/ # Evaluation logic that scan.md and score.md reference

templates/ # CV LaTeX/HTML templates per archetype

data/ # Your source-of-truth CV, career stories, preferences

jds/ # Cached job descriptions

writing-samples/ # Your writing for tone calibrationHere is the YAML frontmatter pattern for one of those skills:

Vague: “Read the job posting and tell me if I should apply.”

Specific:

---

name: score

description: Score a single job listing against the user's CV using a 5-point reasoning rubric. Output must include numeric score, 2-3 sentence rationale, and a clear apply / skip recommendation. Refuse to score above 4.0 unless the level strategy explicitly matches.

allowed-tools: Read, Bash, Grep

---That second prompt is what produces a useful score. The first one produces a confident-sounding lecture and zero ranking discipline.

How To Wire Up Job Portal Scanning With Playwright

Playwright is the right primitive because job boards render listings in JavaScript and refuse to render anything useful to a plain HTTP fetch.

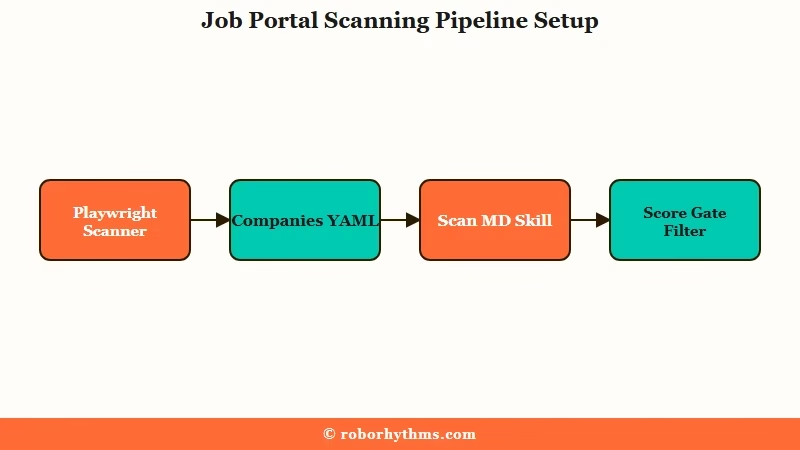

The career-ops scanner is pre-wired for 45+ companies, 19 search queries, and the three ATS systems that cover most of the high-quality listings: Ashby, Greenhouse, and Lever.

Here is the build order I would walk through:

- Install Claude Code, Playwright, and Puppeteer in your project directory. Both are needed: Playwright for the scanner, Puppeteer for the HTML-to-PDF pipeline that produces ATS-safe CVs.

- Configure a

companies.yamlwith company name, careers URL, and ATS type (ashby / greenhouse / lever). Start with five companies you genuinely want to work at. Resist the urge to import 200. - Build

scan.mdas a skill that takes the company list, launches Playwright headless, fetches the listings, normalises them into a flat JSON shape (title, location, level, description, URL, posted date), and writes them todata/raw_listings.json. - Build

score.mdas a skill that readsdata/rawlistings.jsonand your CV, scores each listing on a 5-point rubric, and writes the scored output todata/scoredlistings.jsonwith the rationale inline. - Filter ruthlessly at the score gate. Drop anything under 4.0 before the tailoring step. Tailoring is where the real cost lives, both in API spend and in your own attention.

The reason for step 5 matters more than it sounds. Once the scorer is honest, you find out that a sane search has maybe 8 to 15 real targets in a month, not 200. The agent’s job is to make those 8 to 15 land, not to maximise application volume.

| Step | Skill file | Tools used | Token cost per listing |

|---|---|---|---|

| Scan portals | scan.md | Playwright headless | low (HTML only) |

| Score against CV | score.md | Claude reasoning | medium (full JD + CV in context) |

| Tailor CV | tailor.md | Claude + templates | high (per-listing CV rewrite) |

| Generate PDF | pdf.md | Puppeteer + templates | none (deterministic render) |

| Prep interview | interview.md | Claude reasoning | medium (only invoked after callback) |

How To Generate ATS-Optimized CVs Per Listing

The right pattern is reasoning over the job description first, then regenerating only the bullets that change between listings, never rewriting the whole CV from scratch.

A skill that rewrites the whole CV every time burns tokens and produces drift. A skill that rewrites only the 4 to 6 most-relevant bullets and re-renders the PDF stays cheap and consistent.

Career-ops uses Space Grotesk for headings and DM Sans for body text. That choice is deliberate. ATS systems parse Unicode characters more cleanly when the font glyphs are simple and the ligatures are sane. Cute creative typography is what gets your CV silently rejected at the parser stage.

The keyword-injection logic lives in tailor.md and works like this:

- Read the job description

- Extract the top 12 to 15 keywords by importance, weighted by where they appear (title and required-skills sections weight higher than nice-to-haves)

- Map each keyword to an existing bullet on your CV

- If a keyword has no bullet that fits, write a new bullet that fits, but only from facts already in your source-of-truth file. Never invent experience.

- Re-render the PDF via Puppeteer + the LaTeX or HTML template

That last bullet is the only part that needs to be paranoid. The whole point of human-in-the-loop is that no application leaves your machine without your eyes on it.

Drift in the tailoring step is the most common failure I have seen people complain about on r/AIAgents this month, and the fix is always the same: keep a separate data/cvsourceoftruth.md and forbid the agent from generating anything not already in it.

For the bigger context on running AI agents 24/7 with safety gates, our autonomous Claude Code agent piece walks through the approval-queue pattern that pairs naturally with this build.

For Claude Code skills more broadly, our roundup of the best Claude Code skills covers which existing skill libraries are worth installing alongside a job-search build.

If you are interested in the multi-agent variant rather than a skill-based monolith, our 5-agent AI content team writeup covers the same pattern applied to a different domain.

What This Costs to Run on Real Job Searches

For a serious month-long search, expect to spend roughly $30 to $60 in API costs on Claude, or zero if you route the scoring and tailoring through the Gemini free tier.

That is a fraction of the value of even one decent interview, let alone a hire. The cost shape is dominated by tailoring, not scanning.

What I would recommend, based on the numbers in the public builds:

- Use Claude for the scoring and tailoring steps where reasoning quality matters

- Use Gemini’s free tier (15 RPM, 1M tokens/day) for batch scanning and intel gathering where volume matters more than depth

- Use Puppeteer locally for the PDF render, which costs nothing per run

- Cache job descriptions in

jds/so re-scoring after a CV update does not re-fetch and re-tokenise the same listings

The career-ops creator reports running the system on 740+ listings, generating 100+ tailored CVs, and the final outcome was a real Head of Applied AI role. The 100+ versus 740+ ratio is the part worth internalising. The agent’s biggest contribution was the 640+ listings it filtered OUT, not the 100 it spent tokens on.

That is the inversion that makes this pattern work. The agent is most useful as a refusal engine, not a generation engine.

Frequently Asked Questions

Do I need to know Go or LaTeX to build this?

Not for the core agent. The 14 skill files are all markdown. The Go terminal dashboard is optional, mostly a quality-of-life layer. CV templates can live in HTML and render via Puppeteer if you do not want to touch LaTeX.

Can the agent submit applications automatically?

The reference builds explicitly do not auto-submit. They prepare the materials, navigate to the apply page, and pre-fill the form, but the human clicks the final submit. This is a feature, not a limitation. Auto-submit is how you accidentally apply to the same company twelve times.

How long until the agent’s evaluations are useful?

The public builds report that the first week of scoring is unreliable. The agent needs your CV, career stories, writing samples, and preferences loaded into data/ before its judgments line up with yours. Treat the first week as recruiter onboarding, not a finished product.

What is the difference between this and CrewAI or AutoGen?

Skill-based Claude Code agents run as one process with one model and one filesystem, coordinated by markdown files. CrewAI and AutoGen are multi-agent frameworks where separate agents pass messages. The skill pattern is simpler and easier to debug. The multi-agent frameworks scale better past 20 to 30 distinct roles, which most personal job searches do not need.

Is it safe to put my full CV and writing samples in a project directory?

Yes if you keep the project local and outside any shared drive or cloud sync that auto-uploads. Add .claude/, data/, and writing-samples/ to your .gitignore before you commit anything. The career-ops repo’s README is explicit that data security is the user’s responsibility.

How do I keep the agent from inventing experience?

Two file-level rules in tailor.md. First, declare a single data/cvsourceof_truth.md and instruct the skill to read from it only. Second, add a refusal clause that any generated bullet not traceable to a source-of-truth claim must be flagged for human review before rendering.