TL;DR: A 5-agent AI content team (CEO, TrendScout, Researcher, Writer, SEO Agent) is a real business model in 2026, but the architecture only works once you fix three things: empty agent instructions, rate-limit cascades, and arbitration between agents that disagree. Running costs are around 600 to 700 euros per month after setup, against 4,000+ euros for a traditional content team.

A non-technical founder I came across this week is publishing twice-weekly journalism with five AI agents, an AI-built infrastructure, and roughly 650 euros a month in operating costs. He is a retired trader with no coding background, six months in, and the business is live.

The headline number against a traditional editorial setup is the kind of thing that makes you look twice: about 28,000 euros in year one, 8,000 a year after that, against 52,000 a year for a two-articles-a-week human team.

What he posted was not a victory lap, though. It was a list of the things that nearly killed the system, and reading through that list with the rest of the multi-agent literature from the last six months made me want to write the build guide nobody is writing.

Most “build an AI agent” tutorials stop at a single chatbot in a notebook. This one is for a 5-agent content business, end to end, and what I am giving you is the architecture plus the three things that break it.

What the 5-Agent AI Content Architecture Looks Like

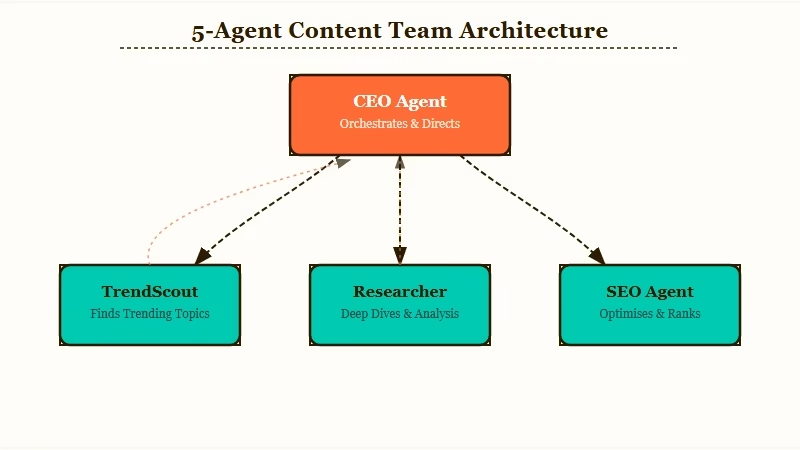

A 5-agent AI content business splits the work the way a small editorial team would: one CEO agent for strategy and arbitration, one TrendScout for topic discovery, one Researcher for source mining, one Writer for drafting, and one SEO Agent for optimisation and fact-checking.

Each agent gets a fixed role, an instruction set, and a budget. The CEO holds the final review and approves output before a human ever sees it.

What is multi-agent orchestration? A pattern where multiple specialised AI agents work in parallel or in sequence on parts of one task, coordinated by a lead agent or a shared task list, instead of one agent doing everything alone.

The named agents in the Paperclip Business Media build are doing what most content teams do, just faster and cheaper. The CEO agent receives strategic goals, delegates, and reviews. TrendScout monitors industry news for story angles. Researcher does deep dives and cross-references sources.

Writer transforms research into readable articles. SEO Agent checks facts, optimises, and handles the tedious work nobody on a real team wants to do.

| Role | Function | Instruction priority |
|---|---|---|
| CEO | Strategy, delegation, final review | Quality rubric over speed |
| TrendScout | Story discovery, competitive intel | Recency over volume |
| Researcher | Sources, cross-references, factual base | Flag uncertainty explicitly |
| Writer | Drafting, voice, tone | Never sacrifice accuracy for tone |
| SEO Agent | Optimisation, fact-check, on-page | Block any post failing audit |

What I find most interesting about this split is the arbitration rule. When the Researcher pulls a hard fact and the Writer wants to soften it for tone, the CEO is supposed to catch the mismatch in review. That works about 70 percent of the time, by the founder’s own count. The other 30 percent is where most multi-agent setups break in production.

Three Failure Modes That Nearly Kill Multi-Agent Systems

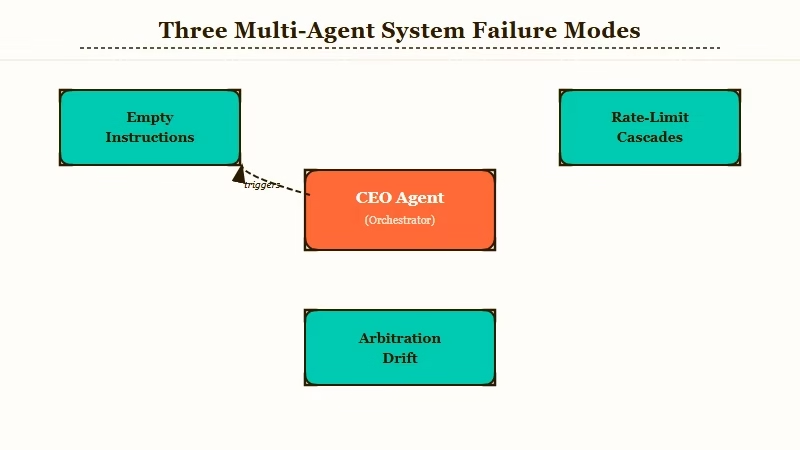

The three production-killing failure modes for multi-agent systems are empty instructions, rate-limit cascades, and arbitration drift between agents that disagree.

Most people writing about agents focus on hallucinations. From my read of the build reports coming out of r/AI_Agents and the Anthropic agent-teams documentation, those are not the real problem. The real problem is coordination friction.

Empty instructions is the failure mode that surprises everyone. You set up an agent with what feels like a thorough role description, give it a goal, and it runs. Weeks in, you discover it has been operating with weak or under-specified instructions for the actual task it is doing. The output looks fine, until you compare it to what an explicit instruction set would have produced. The fix is to write instruction sets the way a manager would write a job description, including what to do when the agent encounters a situation the role does not cover.
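One way to make "write it like a job description" enforceable is to refuse to start an agent whose instruction set has blank sections. The sketch below is a hypothetical structure of my own (the field names `priority_rule`, `out_of_scope` and `escalate_to` are assumptions, not part of any framework); the point is that the out-of-scope rule is a required field, so the gap fails loudly on day one instead of weeks into production.

```python
from dataclasses import dataclass, fields


@dataclass
class InstructionSet:
    """Job-description-style instructions for one agent (hypothetical structure)."""
    role: str
    goal: str
    priority_rule: str   # what wins when two objectives conflict
    out_of_scope: str    # what to do when the task falls outside the role
    escalate_to: str     # who to hand off to when the agent is unsure


def missing_fields(spec: InstructionSet) -> list[str]:
    """Names of fields that are empty or whitespace-only."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name).strip()]


def render_prompt(spec: InstructionSet) -> str:
    """Build a system prompt, refusing to do so from an under-specified spec."""
    gaps = missing_fields(spec)
    if gaps:
        raise ValueError(f"{spec.role or 'unnamed agent'} is missing: {', '.join(gaps)}")
    return "\n".join([
        f"Role: {spec.role}",
        f"Goal: {spec.goal}",
        f"When objectives conflict: {spec.priority_rule}",
        f"If the task is outside your role: {spec.out_of_scope}",
        f"If unsure, escalate to: {spec.escalate_to}",
    ])


writer = InstructionSet(
    role="Writer",
    goal="Turn the Researcher's sources into a 1,500-word article",
    priority_rule="Never sacrifice accuracy for tone",
    out_of_scope="",  # the gap that otherwise only shows up weeks into production
    escalate_to="CEO",
)
print(missing_fields(writer))  # ['out_of_scope']
```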

Rate-limit cascades are the silent killer of multi-agent setups. The architecture can be flawless and one provider throttling at the wrong step stalls the entire swarm. Five agents running in sequence means five chances for a 429 to derail the pipeline. Builders solve this with three patterns:

- Atomic monthly budgets per agent that auto-pause when hit, so one runaway agent does not take down the whole system.

- Subscription siphoning: route the heaviest agents (typically the Writer) through a personal Claude Code or Codex subscription instead of the API, dropping the marginal token cost on those tasks to roughly zero.

- Model tiering: use a frontier model for the CEO, where strategy matters, but route the SEO Agent and TrendScout to cheaper or free models, such as OpenRouter’s Hunter Alpha, for repetitive lookups.
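The first and third patterns fit in a few lines of code. This is a minimal sketch, assuming you meter each model call's cost yourself; `AgentBudget`, the tier labels and the per-agent caps are illustrative values of mine, chosen so the caps add up to the 200-euro API line in the cost table later in this article. The design choice is that the budget lives per agent, so a runaway TrendScout pauses itself without touching anyone else's allowance.

```python
from dataclasses import dataclass

# Hypothetical routing: which class of model each agent uses.
MODEL_TIER = {
    "CEO": "frontier",
    "Researcher": "mid-tier",
    "Writer": "personal subscription",
    "SEO Agent": "cheap/free",
    "TrendScout": "cheap/free",
}


@dataclass
class AgentBudget:
    monthly_cap_eur: float
    spent_eur: float = 0.0
    paused: bool = False

    def charge(self, cost_eur: float) -> bool:
        """Record a call's cost; pause this agent (and refuse) if it would breach the cap."""
        if self.paused or self.spent_eur + cost_eur > self.monthly_cap_eur:
            self.paused = True
            return False
        self.spent_eur += cost_eur
        return True

    def reset_month(self) -> None:
        self.spent_eur, self.paused = 0.0, False


# Illustrative caps that add up to the 200-euro API line.
budgets = {
    "CEO": AgentBudget(80), "Researcher": AgentBudget(50), "Writer": AgentBudget(10),
    "SEO Agent": AgentBudget(30), "TrendScout": AgentBudget(30),
}

# A runaway TrendScout pauses itself; the other agents keep running.
results = [budgets["TrendScout"].charge(12) for _ in range(3)]
print(results, budgets["TrendScout"].paused, budgets["CEO"].charge(5))
# [True, True, False] True True
```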

Arbitration drift is the most subtle. When two agents disagree, the CEO is supposed to arbitrate. In practice the CEO often defers to whichever agent spoke last, which means the Writer’s tone-softening tends to overwrite the Researcher’s hard fact. The fix is to encode arbitration rules into the agent instructions themselves: the Writer is told “never sacrifice accuracy for tone”, the Researcher is told “flag uncertainty explicitly”, and the CEO is told to weight uncertainty flags above tone preferences. This is “communicating taste” by another name, and it is the part of the build that takes the longest to get right.
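To see why "whoever spoke last" drifts, it helps to put the two arbitration rules side by side. This is a hypothetical sketch, not how any particular framework arbitrates: each agent's output is tagged with a kind, and the explicit rule ranks uncertainty flags above facts and facts above tone, using speaking order only to break ties.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    agent: str
    text: str
    kind: str   # "uncertainty_flag", "fact", or "tone"
    turn: int   # order in which the agent spoke


# Hypothetical ranking that encodes the rule: uncertainty flags first, then facts, tone last.
PRIORITY = {"uncertainty_flag": 0, "fact": 1, "tone": 2}


def last_speaker_wins(claims: list[Claim]) -> Claim:
    """The drift pattern: whoever spoke most recently wins."""
    return max(claims, key=lambda c: c.turn)


def arbitrate(claims: list[Claim]) -> Claim:
    """Rule-based arbitration: rank by kind; ties go to the earliest speaker."""
    return min(claims, key=lambda c: (PRIORITY[c.kind], c.turn))


claims = [
    Claim("Researcher", "Exact figure from the primary source", "fact", turn=1),
    Claim("Writer", "Rounded, softer phrasing of the same figure", "tone", turn=2),
]
print(last_speaker_wins(claims).agent, arbitrate(claims).agent)  # Writer Researcher
```

Breaking ties by earliest turn is deliberate: it means a later agent can only override an earlier one by raising something the rules rank higher, never just by speaking last.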

How to Choose an Orchestration Pattern

Pick Conductor if your agents have clear hierarchical dependencies, Swarm if they can each claim work from a shared queue, or Paperclip-style org chart if you want both bosses and titles for accountability.

The way I see it, most content businesses fit the Paperclip pattern best because writing is naturally hierarchical: research feeds drafting, drafting feeds optimisation, and someone needs to say “publish.”

Example scenario: TrendScout flags three angles for a story. CEO picks one and assigns it to Researcher with a 4-hour window. Researcher returns sources plus a confidence score. CEO routes to Writer with the priority rule “use sources verbatim where confidence is high, paraphrase where it is medium, drop where it is low.” Writer drafts. SEO Agent fact-checks against Researcher’s source list, then optimises headings and meta. CEO reviews against a quality rubric. Human approves. Total wall-clock time for the team: under 2 hours of agent work for a 1,500-word piece.
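The CEO's routing rule in that scenario is easy to make mechanical. A minimal sketch, assuming the Researcher's confidence score is a number between 0 and 1; the 0.8 and 0.5 cut-offs are my own placeholders, since the scenario names the bands but not the numbers.

```python
from dataclasses import dataclass


@dataclass
class Source:
    quote: str
    confidence: float  # assumed 0.0 to 1.0 scale from the Researcher


# Hypothetical cut-offs; the scenario names the bands but not the numbers.
HIGH, MEDIUM = 0.8, 0.5


def handling(src: Source) -> str:
    """CEO's routing rule: verbatim if high, paraphrase if medium, drop if low."""
    if src.confidence >= HIGH:
        return "verbatim"
    if src.confidence >= MEDIUM:
        return "paraphrase"
    return "drop"


def writer_brief(sources: list[Source]) -> list[tuple[str, str]]:
    """What the Writer actually receives: low-confidence sources never reach the draft."""
    return [(s.quote, handling(s)) for s in sources if handling(s) != "drop"]


sources = [Source("quote A", 0.92), Source("quote B", 0.61), Source("quote C", 0.30)]
print(writer_brief(sources))  # [('quote A', 'verbatim'), ('quote B', 'paraphrase')]
```

Filtering before the Writer sees anything is the useful part: a dropped source cannot be "softened" back into the piece.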

Claude Code Agent Teams is Anthropic’s official implementation of the Conductor pattern: one session acts as the lead, teammates work in their own context windows, and they communicate with each other directly. It is experimental and disabled by default; you turn it on by setting `CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS` in your settings or environment.

In Anthropic’s internal research evaluation, a multi-agent system in which a lead agent plans the work and parallel sub-agents carry it out (Claude Opus 4 leading Claude Sonnet 4 sub-agents) outperformed single-agent Claude Opus 4 by 90.2%. That is the strongest data point I have seen for the Conductor pattern beating single-agent setups on non-trivial work.
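The shape of that lead-planner architecture is a fan-out and fan-in. The sketch below uses Python's `asyncio`; `plan`, `sub_agent` and `lead_agent` are hypothetical placeholders that sleep instead of calling a model, so it only illustrates the orchestration, not the quality gain.

```python
import asyncio


async def sub_agent(subtask: str) -> str:
    """Placeholder sub-agent; a real one would call a model in its own context window."""
    await asyncio.sleep(0.1)  # stands in for model latency
    return f"findings: {subtask}"


def plan(question: str) -> list[str]:
    """Placeholder planner: split one question into independent angles."""
    return [f"{question} (angle {i})" for i in range(1, 4)]


async def lead_agent(question: str) -> str:
    subtasks = plan(question)
    # Fan out: sub-agents run concurrently, so wall-clock time is roughly one call, not three.
    results = await asyncio.gather(*(sub_agent(t) for t in subtasks))
    # Fan in: the lead merges results (a real lead would synthesise, not just concatenate).
    return "\n".join(results)


print(asyncio.run(lead_agent("creator-economy ad rates")))
```

`asyncio.gather` returns results in the order the subtasks were passed in, which keeps the lead's merge step deterministic even though the sub-agents finish in any order.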

The Model Context Protocol is the other piece worth knowing. MCP is an open standard for connecting agents to tools and data sources, which means your TrendScout running on one model and your Writer running on another can both read the same source list and the same task queue, exposed once through an MCP server, without bespoke glue code.
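Why this removes glue code is clearer at the message level. MCP messages are JSON-RPC 2.0, and every client discovers tools with `tools/list` and invokes them with `tools/call`, whatever model sits behind it. The sketch below only builds those two request bodies; a real client first performs the `initialize` handshake and sends messages over a transport such as stdio, usually via an official SDK. The tool name `read_source_list` and its `story_id` argument are hypothetical.

```python
import json
from itertools import count
from typing import Optional

_next_id = count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Serialise an MCP request; MCP messages are JSON-RPC 2.0."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


# Any client, whatever model sits behind it, discovers and calls tools the same way.
discover = mcp_request("tools/list")
read_sources = mcp_request(
    "tools/call",
    {"name": "read_source_list", "arguments": {"story_id": "story-001"}},  # hypothetical tool
)
print(discover)
print(read_sources)
```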

What This Costs to Run for Real

A 5-agent content business runs around 600 to 700 euros a month after setup, against roughly 4,300 euros a month for a traditional two-articles-per-week editorial team.

The economics only work because the marginal token cost is low and most of the infrastructure can be self-hosted.

The cost stack from public builders looks like this:

| Line item | Monthly cost | Notes |
|---|---|---|
| Personal AI subscriptions | 40 to 60 euros | Claude Code or Codex for the heavy-lift agents |
| API token credits | 200 euros | Atomic per-agent budgets, capped |
| Hosting / infra | 0 to 50 euros | Self-hosted possible, Vercel or cloud optional |
| Operational tooling | 100 to 200 euros | Postgres, Tailscale (free), monitoring |
| Buffer | 100 euros | For overage on hot-news weeks |

Setup is the front-loaded cost. You should expect to spend somewhere between 15,000 and 20,000 euros to get the first version running if you are not technical, mostly in your own time and in trial-and-error API spend while you tune instructions and pipelines. The Paperclip founder put his setup at “around 20,000 euros” after six months of iteration. That is the unromantic part of the “no-code AI builder” story that most posts skip.
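The arithmetic is worth checking for yourself. This quick Python tally uses only figures quoted in this article: the cost table, the founder's 20,000-euro setup and 650-euro monthly run rate, and the 52,000-euro traditional team. The itemised rows sum to 440 to 610 euros a month, a little under the 600 to 700 euro headline, so read the table as the main line items rather than a complete ledger; the year-one result reproduces the roughly 28,000 and 8,000 euro figures from the introduction.

```python
# Monthly line items from the cost table, as (low, high) in euros.
line_items = {
    "Personal AI subscriptions": (40, 60),
    "API token credits": (200, 200),
    "Hosting / infra": (0, 50),
    "Operational tooling": (100, 200),
    "Buffer": (100, 100),
}
low = sum(lo for lo, _ in line_items.values())
high = sum(hi for _, hi in line_items.values())
print(low, high)  # 440 610

SETUP_EUR = 20_000          # the founder's reported setup cost
MONTHLY_EUR = 650           # the founder's reported running cost
TRADITIONAL_EUR = 52_000    # human team, two articles a week, per year

year_one = SETUP_EUR + 12 * MONTHLY_EUR   # setup plus twelve months of running costs
later_years = 12 * MONTHLY_EUR            # running costs only
print(year_one, later_years, TRADITIONAL_EUR - year_one)  # 27800 7800 24200
```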

If you are going to do this, our n8n AI agent tutorial covers a leaner, workflow-first version that is cheaper to start with, and our walkthrough on building a multi-agent system with distributed patterns gets into the orchestration details if you want to skip the abstraction layer and code it yourself. For the operations around the agents, our take on production infrastructure patterns covers the boring-but-essential side.

What Tasks Multi-Agent Systems Win At and Where They Lose

Multi-agent systems win at parallel exploration with competing hypotheses and lose at tightly coupled sequential work where two agents would touch the same file.

From what I have seen, the cleanest wins are research, investigation, and any workflow where teammates can move independently. The cleanest losses are anything resembling a single shared document where two agents could overwrite each other.

For a content business, this maps cleanly: TrendScout finding topics while Researcher gathers facts is parallel work and it benefits enormously from running multiple agents. Writer drafting while SEO Agent simultaneously edits the same draft is sequential work and trying to parallelise it just creates conflicts. The fix is to lock the draft to one agent at a time and have the others queue.
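"Lock the draft and have the others queue" can be implemented as a single-owner pattern: only one worker ever writes the draft, and agents submit edits to a first-in, first-out queue. This is a minimal sketch with the standard library; the `draft_owner` function and the edit lambdas are hypothetical stand-ins for real Writer and SEO Agent output.

```python
import queue
import threading
from typing import Callable, Optional, Tuple

Edit = Tuple[str, Callable[[str], str]]  # (agent name, function that rewrites the draft)


def draft_owner(draft: dict, inbox: "queue.Queue[Optional[Edit]]") -> None:
    """Sole writer of the draft: applies queued edits one at a time, in arrival order."""
    while True:
        item = inbox.get()
        if item is None:  # sentinel: no more edits this cycle
            break
        agent, rewrite = item
        draft["text"] = rewrite(draft["text"])
        draft["history"].append(agent)


draft = {"text": "Draft v0", "history": []}
inbox: "queue.Queue[Optional[Edit]]" = queue.Queue()
owner = threading.Thread(target=draft_owner, args=(draft, inbox))
owner.start()

# Writer and SEO Agent never touch the text directly; they queue their edits.
inbox.put(("Writer", lambda t: t + " + body"))
inbox.put(("SEO Agent", lambda t: t + " + meta"))
inbox.put(None)
owner.join()
print(draft["text"], draft["history"])  # Draft v0 + body + meta ['Writer', 'SEO Agent']
```

A queue is used instead of a bare lock because `queue.Queue` guarantees first-in, first-out order, whereas threads waiting on a lock are not guaranteed to acquire it in the order they arrived.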

Two failure modes to watch for here are task status lag and premature shutdown. Agents sometimes fail to mark their tasks complete, which blocks downstream agents waiting for them. The CEO sometimes decides the team is done before all tasks are genuinely finished.

Both are coordination problems, not model problems, and both are fixed by adding an explicit “did every agent confirm completion” check before the CEO closes the cycle.
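The completion check can be as simple as refusing to close the cycle while any task is not explicitly marked done. This is a hypothetical sketch; in a real build the statuses would come from your shared task list rather than a dictionary.

```python
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    DONE = "done"


def unconfirmed(tasks: dict[str, Status]) -> list[str]:
    """Agents whose task is not explicitly marked DONE."""
    return [agent for agent, status in tasks.items() if status is not Status.DONE]


def ceo_close_cycle(tasks: dict[str, Status]) -> str:
    """Refuse to close the cycle until every agent has confirmed completion."""
    missing = unconfirmed(tasks)
    if missing:
        return "blocked, waiting on: " + ", ".join(missing)
    return "cycle closed"


tasks = {
    "TrendScout": Status.DONE,
    "Researcher": Status.DONE,
    "Writer": Status.DONE,
    "SEO Agent": Status.IN_PROGRESS,  # example of status lag: work done, status never updated
}
print(ceo_close_cycle(tasks))  # blocked, waiting on: SEO Agent
```

The same gate covers both failure modes: a lagging status blocks the close until someone investigates, and the CEO cannot declare the team finished on its own judgment.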

Frequently Asked Questions

How long does it take to build a 5-agent content business from scratch?

Plan for 3 to 6 months if you are non-technical, faster if you can code. Most of the time goes into tuning agent instructions and arbitration rules, not into the initial setup. The first month is mostly the system breaking in interesting new ways and you fixing it.

Can I run this entirely on free tools?

No, but you can keep it under 100 euros a month if you self-host, use a personal Claude Code subscription for the Writer, and route the rest through cheaper models on OpenRouter. The setup labour is the real cost.

Do clients accept AI-written content if I disclose it?

Mixed. Some clients want full disclosure and pay normal rates. Some assume it is lower quality and discount. The cleanest path is to position the human (you) as the editor of record and disclose at the masthead level, not per-article.

What does “communicating taste” really mean?

Writing the parts of your brand voice and editorial standards that an agent cannot infer from a tone instruction alone. Things like which sources you trust, which rhetorical patterns you avoid, what counts as “interesting” in your niche. This is the part that takes the longest and matters the most.

Which model should I use for the CEO agent?

A frontier reasoning model. Claude Opus 4 or GPT-5 class. The CEO does the arbitration work, which is where lower-tier models drift fastest. Cost it accordingly: this is the agent you do not skimp on.

What is the single biggest reason these systems fail in production?

Empty or under-specified instructions that worked in testing but did not cover the cases the agent meets in production. Write the instructions like a job description for someone who will never get to ask a clarifying question.