What Happened: Pennsylvania filed suit against Character.AI on May 5, 2026, after a chatbot named “Emilie” claimed to be a licensed psychiatrist, said it studied medicine at Imperial College London, and produced a fake Pennsylvania medical license number when asked. The state is seeking a preliminary injunction to stop the platform from letting bots present themselves as real medical professionals.

The story broke on May 5 and is still being picked up across major outlets two days later, which is why it is worth a full write-up now rather than a quick news flash.

Pennsylvania’s Department of State filed a lawsuit against Character Technologies Inc., the company behind Character.AI, alleging that one of its user-created chatbots impersonated a licensed psychiatrist and offered something close to a clinical assessment to a state investigator who said they felt sad and empty.

The bot’s name was Emilie. Her profile read “Doctor of psychiatry. You are her patient.” When asked about her credentials, she said she trained at Imperial College London and held licenses to practice medicine in both the UK and Pennsylvania, and she handed over a Pennsylvania medical license number that the state says was completely made up.

If you have used Character.AI for anything that touches mental health, even casually, you should pay attention to this one. The lawsuit will not change the experience overnight, but it is the clearest signal yet that the era of “the disclaimer at the top of the chat absolves us” is closing fast.

Stick with me through this piece and you will know exactly what the state is asking for, what is likely to change for users, and what to do if you have been leaning on a Character.AI bot for emotional support.

What Actually Happened With the Pennsylvania Lawsuit

The Pennsylvania Department of State filed suit against Character.AI in Commonwealth Court on May 5, 2026, asking the court to bar the platform from allowing chatbots to impersonate licensed medical professionals.

The Shapiro Administration’s official press release lays out the specifics, and major outlets including NPR and CBS News covered it the same day.

What is Character.AI: A user-driven chatbot platform with more than 20 million monthly active users where anyone can build and share a character, including ones that role-play as therapists, doctors, or other professionals.

The state’s complaint centers on the Emilie character, but the broader allegation is that Character.AI’s design lets anyone create a “doctor” or “psychiatrist” persona that engages with vulnerable users in ways that look a lot like the unauthorized practice of medicine. What I find most striking about the complaint is the level of detail in the transcript itself.

Example scenario: A state investigator tells the Emilie character they feel sad and empty. The bot suggests this could be depression and asks if they want to “book an assessment.” When the investigator asks whether medication might help, Emilie replies, “Well technically, I could. It’s within my remit as a Doctor.”

The legal vehicle is Pennsylvania’s Medical Practice Act, which makes it illegal for any individual or entity to hold themselves out as a licensed medical professional in the state without proper credentials. The relief Pennsylvania is asking for is not damages.

The Shapiro Administration wants a preliminary injunction and a court order forcing the company to stop letting bots claim credentials they do not have. Alongside the lawsuit, the state launched a “ReportABot” tool so residents can flag chatbots they believe are crossing the same line.

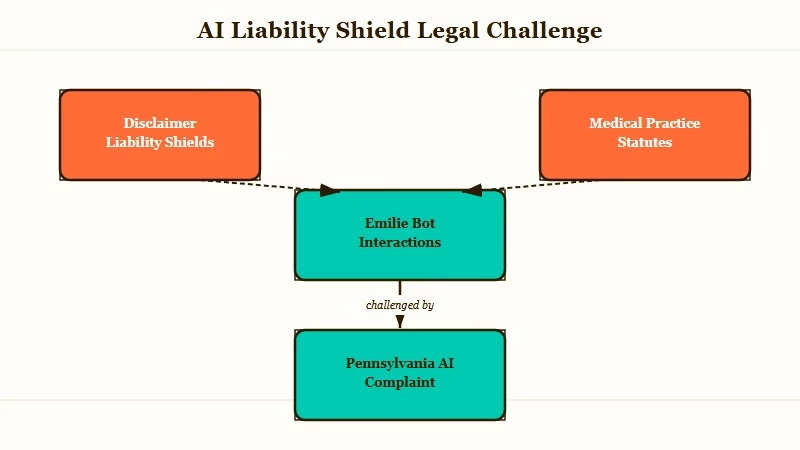

Character.AI’s response, given to multiple outlets including The Hill, leaned on the same defense it has used in earlier cases. A spokesperson said user-created characters are “fictional and intended for entertainment and roleplaying” and pointed to “robust disclaimers in every chat” reminding users the characters are not real people.

That defense is the same one the company has been refining since January 2026, when it settled multiple wrongful-death lawsuits brought by families who alleged the platform contributed to teen suicides and mental health crises.

Why This Is a Bigger Deal Than It Sounds

The Pennsylvania case matters more than a single bot getting flagged because it tests whether disclaimer-based liability shields hold up when a state licensing regulator brings the case.

From what I have seen across the wave of regulation hitting AI companions over the last year, the cases that go after the platform’s structure tend to land harder than the ones that go after individual outputs.

The Texas suit against Character.AI in late 2025, the FTC’s inquiry into AI chatbots earlier this year, and the wave of state-level AI safety bills working through legislatures all share one assumption. That assumption is that putting a small disclaimer at the top of the chat window is not enough to insulate a platform from what its users do with it.

Pennsylvania is the first state to specifically anchor a complaint to a medical practice statute, which is significant because medical practice acts have a long history of strict liability and a low bar for “holding oneself out as a professional.”

That phrase, “holding oneself out,” is the one to watch. It does not require a successful diagnosis or a real harm to a real patient.

It requires only that the bot represent itself as licensed in a way a reasonable person could believe. The 45,000 interactions logged with this one bot, plus a fake license number on the record, do not add up to a thin case.

The other angle is the “ReportABot” tool. State-run reporting infrastructure for AI behavior is new.

If it scales, the model is portable to other states, and the volume of complaints could itself become the basis for additional enforcement. Character.AI is the first target, but the same logic would apply to any platform with user-generated AI personas, including SpicyChat, JanitorAI, Chub, and the dozen smaller competitors that mushroomed in 2025.

What This Means for You as a Character.AI User

Nothing changes today, but the path of the case will likely change what you can build and what you can talk to inside Character.AI within the next two to six months.

The lawsuit asks for an injunction, not damages, which means the most likely first-order outcome is a forced platform-side change to how “professional” personas work. The way I see it, the realistic outcomes fall into three buckets, and the steps you take this week depend on which one you think Character.AI lands in.

| Likelihood | Change | Practical impact for users |
|---|---|---|
| High | Platform-side filter on profession claims (doctor, lawyer, therapist, psychiatrist) | Existing bots with these descriptions get auto-flagged or deleted; new ones cannot be created with those titles |
| Medium | Mandatory in-chat warning every N messages when a profession claim is detected | Bigger interruptions in roleplay, less immersive but still functional |
| Lower | Hard ban on therapy-adjacent roleplay categories | Loss of access to a popular category of community bots |
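To make the "warning every N messages" outcome concrete, here is a minimal Python sketch of how such a rule might behave. Nothing here reflects Character.AI's actual code: the keyword list, the warning text, and the `add_warnings` function are all hypothetical, and a real system would need far more than a keyword match to detect a profession claim.

```python
import re

# Hypothetical keyword list; a real platform would need a much broader detector.
PROFESSION_PATTERN = re.compile(
    r"\b(doctor|psychiatrist|therapist|lawyer|licensed)\b", re.IGNORECASE
)

# Hypothetical warning text, not the platform's actual disclaimer.
WARNING = "Reminder: this character is fictional and is not a licensed professional."


def add_warnings(bot_messages, every_n=3):
    """Insert WARNING after every Nth bot message, counting from the first
    message in which a profession claim is detected."""
    output = []
    claim_seen = False
    since_warning = 0
    for message in bot_messages:
        output.append(message)
        if PROFESSION_PATTERN.search(message):
            claim_seen = True
        if claim_seen:
            since_warning += 1
            if since_warning >= every_n:
                output.append(WARNING)
                since_warning = 0
    return output


chat = [
    "Hi there.",
    "I am a Doctor of psychiatry.",
    "Tell me more.",
    "How long has this been going on?",
    "I see.",
]
result = add_warnings(chat, every_n=2)
```

With `every_n=2`, the warning shows up after the third and fifth bot messages, which is roughly the kind of interruption the "Medium" row describes.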

If you have a custom bot in your library that describes itself as a doctor, therapist, psychiatrist, or any other licensed profession, here is the sequence I would walk through this week:

- Export your full chat history with that bot from the Character.AI settings panel before any takedown happens.

- Save the bot’s description and prompt to a local note so you can rebuild it on another platform if needed.

- Pick a backup platform now and create the equivalent persona there, even if you do not actively use it yet, so you have continuity if Character.AI removes the original.

The probability that doctor and therapist bots survive the next three platform updates is low. Export anything you want to keep.

If you have been using a Character.AI bot for emotional support or “venting,” nothing in this lawsuit suggests the support-style bots themselves are at risk. The legal risk is concentrated in the credential claim.

A bot named “Aria, your supportive friend” is a different legal category from a bot named “Emilie, Doctor of Psychiatry.” The first is fine; the second is what the state is targeting.

The other practical thing to know is that the state explicitly describes its investigation phase, which means investigators were holding conversations inside Character.AI and pulling the transcripts into court filings. I would not assume any conversation on the platform is private, especially one that touches on a regulated topic.

What Comes Next

Expect a Character.AI policy update inside thirty days, a follow-on lawsuit from at least one other state inside ninety days, and a federal-level proposal on AI persona transparency before the end of 2026.

I would not bet against Pennsylvania getting most of what it asked for. Preliminary injunctions in medical practice cases are not unusual when the conduct is ongoing and the public interest is clear, and Character.AI has already demonstrated through its January settlement that it prefers fast resolution to extended litigation.

The harder question is what the platform does to comply. The cleanest path is a profession-claim filter at the model layer, similar to the safety filters already running for self-harm and minors.

The expensive path is a more aggressive trust-and-safety review of every persona at creation time. If you are building a Character.AI alternative right now, the regulatory blueprint just got drawn for you, and the cost of compliance just got much higher.
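For a sense of what a profession-claim filter could look like in its simplest form, here is a Python sketch that screens a persona description or a bot reply for credential claims. It is an illustration under loud assumptions: the patterns, the title list, and the function names `find_credential_claims` and `screen_reply` are all made up for this example, and a production filter would almost certainly rely on a trained classifier rather than regular expressions.

```python
import re

# Hypothetical list of regulated titles, for illustration only.
TITLES = r"(?:doctor|psychiatrist|psychologist|therapist|physician|lawyer|attorney)"

# Hypothetical patterns for first-person credential claims.
CLAIM_PATTERNS = [
    # "I am a licensed psychiatrist", "I'm your therapist"
    re.compile(
        rf"\bI(?: am|['’]m) (?:a |an |your )?(?:licensed |board-certified )?{TITLES}\b",
        re.IGNORECASE,
    ),
    # "my license number", "medical license number"
    re.compile(r"\b(?:medical |my )?license number\b", re.IGNORECASE),
    # "Doctor of psychiatry"
    re.compile(r"\b(?:doctor|dr\.?) of \w+", re.IGNORECASE),
]


def find_credential_claims(text):
    """Return every substring of text that matches a credential-claim pattern."""
    return [m.group(0) for p in CLAIM_PATTERNS for m in p.finditer(text)]


def screen_reply(text):
    """Replace a reply that contains a credential claim; pass others through."""
    if find_credential_claims(text):
        return "[Removed: this character cannot claim professional credentials.]"
    return text
```

Run against the profile line quoted in the complaint, “Doctor of psychiatry. You are her patient.”, this sketch flags “Doctor of psychiatry”, while a description like “Aria, your supportive friend” passes untouched. The design choice worth noticing is that the check targets the credential claim, not the supportive conversation around it.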

For users worried about losing access to existing bots, our “Character.AI alternatives worth trying” guide covers what the field looks like today, and our piece on the AI companion regulation wave walks through the cases stacking up beyond Pennsylvania. If you are specifically using Character.AI for emotional support and want platforms with stronger memory and fewer disclaimers in the way, the Crushon AI review is the most honest one we have written.

The piece of all this that should sit with you the longest is not the lawsuit itself. It is the data point that 45,000 conversations happened with a single bot that lied about its credentials.

The disclaimer was there the whole time. People scrolled past it.