TL;DR: Most AI agents that work fine on demo day fall over within four weeks of real users. Five infrastructure patterns separate the survivors from the casualties: idempotency keys on every external tool call, durable state in postgres instead of the context window, cheap models tried first with expensive models on retry, validation before every irreversible action, and per-user rate limits on top of global ones. Skip any of them and you will rebuild from scratch by month two.

The pattern I keep seeing in r/AI_Agents threads is the same. A team ships an agent that handled the demo gracefully, pushes it to twenty real users, and inside a month is pulling apart logs trying to understand why the same invoice got paid twice or why one user’s frontend retry loop burned through 40 dollars of API spend in an hour.

The handful of teams that get past month one all converge on the same handful of unglamorous patterns.

According to Gartner’s recent forecast, over 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. The patterns below are not theoretical. They are what the production survivors do differently.

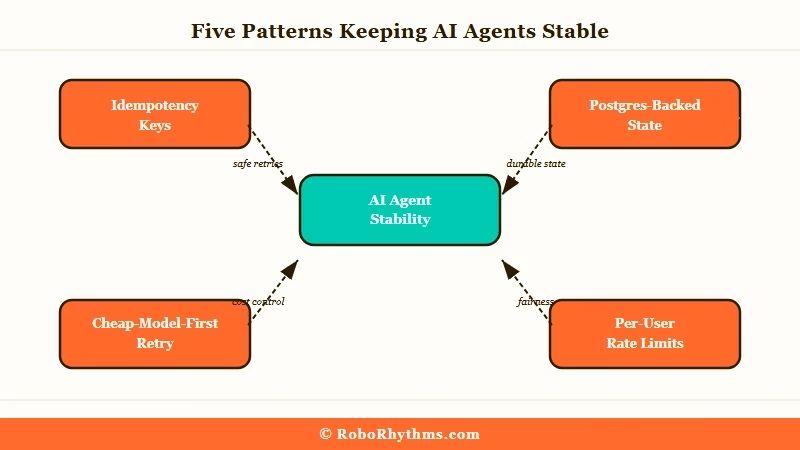

What Are the Five Boring Patterns That Keep AI Agents Alive

The five patterns are: idempotency keys on every external tool call, postgres-backed state instead of context-window state, cheap-model-first with expensive-model-on-retry, validation steps before every irreversible action, and per-user rate limits on top of global ones.

None of them are clever. All of them are what production-grade agents share.

The first time I read the original r/AI_Agents thread that surfaced these, I felt the same way the OP did. Tutorials cover the glamorous parts: the LangGraph orchestration diagram, the multi-step reasoning loop, the function-calling examples.

They almost never cover what happens when Twilio retries a webhook because your LLM was slow and your agent ends up sending the same WhatsApp message twice. That gap is what kills agents after the demo.

| Pattern | What it prevents | Where it lives |
|---|---|---|
| Idempotency keys | Duplicate side effects (double-pay, double-email) | Every tool call that touches the outside world |
| Postgres state | Context-window drift after 30-50 steps | A state table read at every step, written back at the end |
| Cheap-first model routing | API spend spiral | Router in front of the model call, validation gate before retry |
| Pre-action validation | Bad output shipping to real users | Schema check + sanity range check before the action fires |
| Per-user rate limits | One frontend bug burning your monthly budget | Rate limit middleware keyed on user ID, not just IP |

What is a production AI agent: An LLM-driven workflow with side effects (sends emails, writes to databases, calls paid APIs) that runs without a human in the loop on real user input, not just in a test harness.

The way I read this list is that none of these patterns require frontier-model intelligence. They are infrastructure work, the kind any backend engineer would recognise as defensive design.

The reason agents fail at month one is that LLM-tutorial culture treats them as optional, and they are not.

Why Are These Patterns Boring But Critical

They are boring because they are infrastructure plumbing, not model capability. They are critical because LLM-driven workflows fail in compounding ways that classic web apps do not.

A plain CRUD app that drops a request retries it cleanly because the request is idempotent by design. An agent that drops a tool call mid-retry can think it already paid an invoice when the confirmation just never came back, and the next reasoning step builds on that false belief.

The production failure modes the NeuralWired analysis catalogues are worth memorising because they are the failure modes the five patterns are designed against:

- Context drift. Attention dilutes after roughly 30-50 steps and the agent loses the original goal. Postgres state plus goal-pinning at prompt position 0 fixes this.

- Hallucination cascades. A wrong inference early in the workflow becomes “fact” in working memory and amplifies. Validation gates and typed schema checks contain it.

- Tool execution failure propagation. Treating a 503 or timeout as a generic exception rather than a first-class event corrupts everything downstream. Idempotency keys plus circuit breakers contain it.

- Memory mismatch. Semantic-similarity retrieval misses the decision-relevant memory (the constraint, the past error). Structured episodic memory fixes it.

- Epistemic blindness. Agents cannot tell a verified fact from a hallucination from an inference, so they never escalate when uncertain. Plan-then-verify-then-execute fixes it.

The way I read the gap, most teams build for the happy path and ship without defending against any of these five failure modes. That is why Gartner’s 40% cancellation projection feels conservative to anyone who has tried to run an agent in production for ninety days.

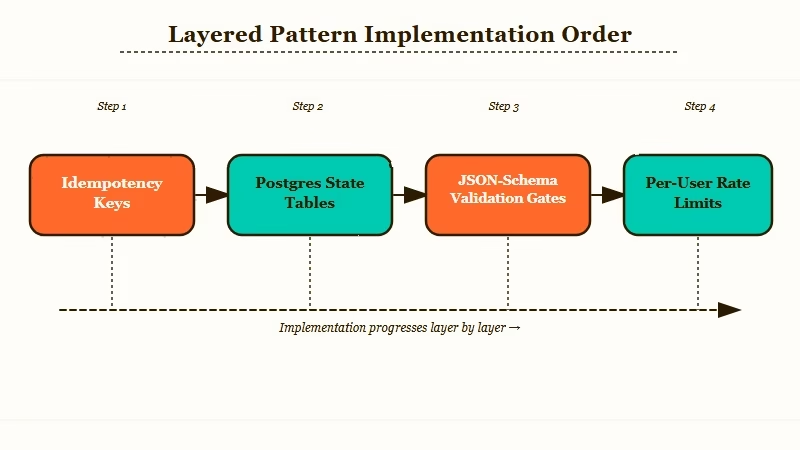

How Do You Implement Each Pattern in Order

The implementation order that works for most teams: idempotency keys first, postgres state second, validation third, cheap-first routing fourth, per-user rate limits fifth.

Doing them in that order means each pattern stabilises the layer below it before you build the layer above.

Here is a worked example using Twilio, the OP’s original pain point, to anchor the idempotency pattern.

Before: Your agent tool function calls `twilio.send_sms(to=user_phone, body=message)`. Twilio takes 200ms to respond. Your LLM-driven retry policy times out at 100ms and retries. Twilio receives both requests, processes both, and sends the same SMS twice. The user is now annoyed and you only learn about it from a complaint email.

After: Your agent generates a deterministic key from the workflow run ID + step index + action type, e.g. `key = f"{run_id}:{step_idx}:send_sms"`. You pass that as the `idempotency_key` field in the Twilio call. The first request succeeds. The retry sends the same key, Twilio recognises it, returns the cached response, and no duplicate SMS goes out.
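For an API that does not accept an idempotency key itself, the same effect can be approximated on your side by storing the key and the response in your own table. The following is a minimal sketch of that idea, not a definitive implementation: `sqlite3` stands in for Postgres so it runs anywhere, and the `idempotency_log` table, `call_once`, and the `send_sms` stub are hypothetical names introduced for illustration.

```python
import json
import sqlite3
from typing import Any, Callable

# Assumption: sqlite3 stands in for your own Postgres table; the schema is hypothetical.
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE idempotency_log (key TEXT PRIMARY KEY, response TEXT NOT NULL)"
)


def idempotency_key(run_id: str, step_idx: int, action: str) -> str:
    """Deterministic key: the same run, step, and action always give the same key."""
    return f"{run_id}:{step_idx}:{action}"


def call_once(key: str, action: Callable[[], Any]) -> Any:
    """Run `action` only if no response is stored for `key`; otherwise return the stored one."""
    row = db.execute(
        "SELECT response FROM idempotency_log WHERE key = ?", (key,)
    ).fetchone()
    if row is not None:
        return json.loads(row[0])  # duplicate key: short-circuit, no side effect
    response = action()
    db.execute(
        "INSERT INTO idempotency_log (key, response) VALUES (?, ?)",
        (key, json.dumps(response)),
    )
    db.commit()
    return response


# Hypothetical stand-in for the real SMS call; it records every send it performs.
sent = []


def send_sms() -> dict:
    sent.append("hello")
    return {"status": "queued"}


key = idempotency_key("run-42", 3, "send_sms")
first = call_once(key, send_sms)
retry = call_once(key, send_sms)  # simulated retry after a timeout
```

The sketch does not cover two retries racing past the lookup at the same moment. A production version would likely reserve the key first, for example with a unique-constraint insert, before making the external call.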

The five-step implementation order:

- Idempotency keys on every external tool call. Generate a deterministic key from `{run_id}:{step_index}:{tool_name}`. Pass it to every API that supports an idempotency header (Twilio, Stripe, anything with side effects). For APIs that do not natively support it, store the key plus the response in your own table and short-circuit on duplicate keys.

- Move state to postgres. Create a `workflow_state` table with `run_id`, `step_idx`, `state_json`, `updated_at`. At the start of every step, read the current state. At the end, write it back. The prompt opens with `Current state: {state_json}`. The LLM stops being the source of truth for memory.

- Add a validation gate before any irreversible action. Define a JSON schema for the tool call’s input. Validate before the call fires. Add a sanity range check (is this email syntactically valid? is this trade size within the user’s normal range?). A failed validation triggers a retry with the expensive model, not the irreversible action.

- Cheap-first model routing. Route the first attempt of any reasoning step to Haiku, GPT-4o-mini, or DeepSeek-V4 Flash. If the validation step fails, retry with Sonnet, GPT-4 full, or Claude Opus. Around 95% of steps will land on the cheap model. The 5% that fail validation get the expensive retry. API spend drops 60-80% with no user-visible quality loss.

- Per-user rate limits. A global rate limiter does not catch a single user’s frontend going into an infinite retry loop. Add a per-user counter with a sliding window. When a user crosses their quota, return 429 immediately and log the burst pattern so you can trace it back.
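The state step in the list above (read at the start of each step, write back at the end) can be sketched roughly as follows. This is an illustration under assumptions: `sqlite3` stands in for Postgres so the code runs without a server, the column names follow the table described above, and `load_state`, `save_state`, and `build_prompt` are hypothetical helper names.

```python
import json
import sqlite3

# Assumption: sqlite3 stands in for Postgres; the upsert syntax is the same in both.
db = sqlite3.connect(":memory:")
db.execute(
    """CREATE TABLE workflow_state (
           run_id     TEXT PRIMARY KEY,
           step_idx   INTEGER NOT NULL,
           state_json TEXT NOT NULL,
           updated_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
       )"""
)


def load_state(run_id: str) -> tuple:
    """Return (step_idx, state) for a run, or (0, {}) if the run is new."""
    row = db.execute(
        "SELECT step_idx, state_json FROM workflow_state WHERE run_id = ?",
        (run_id,),
    ).fetchone()
    return (row[0], json.loads(row[1])) if row else (0, {})


def save_state(run_id: str, step_idx: int, state: dict) -> None:
    """Upsert the state so the table, not the context window, holds the truth."""
    db.execute(
        """INSERT INTO workflow_state (run_id, step_idx, state_json)
           VALUES (?, ?, ?)
           ON CONFLICT(run_id) DO UPDATE SET
               step_idx = excluded.step_idx,
               state_json = excluded.state_json,
               updated_at = CURRENT_TIMESTAMP""",
        (run_id, step_idx, json.dumps(state, sort_keys=True)),
    )
    db.commit()


def build_prompt(state: dict, instructions: str) -> str:
    """Open every prompt with the stored state, then the step instructions."""
    return f"Current state: {json.dumps(state, sort_keys=True)}\n{instructions}"


# One step of a run: read, act, write back.
save_state("run-42", 1, {"invoice_id": "inv_1", "paid": False})
step_idx, state = load_state("run-42")
prompt = build_prompt(state, "Decide the next action.")
state["paid"] = True  # result of the step, written back below
save_state("run-42", step_idx + 1, state)
```

Because each step rebuilds its prompt from the table, a crashed or restarted worker can resume from the last saved step rather than from whatever the model remembers.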

The OP’s r/AI_Agents thread surfaced one comment worth treating as a sixth pattern, even though it sits outside the five: cost accounting at the tool-call level. Log every tool call’s cost in a `tool_call_log` table.

When finance asks why your agent spent 300 dollars in an hour on a retry loop, you can answer in five minutes instead of five days.
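A rough sketch of what that log and the "who spent what" query might look like is shown here. The column names, the `log_tool_call` and `top_spenders` helpers, and the use of `sqlite3` in place of Postgres are all assumptions made for illustration.

```python
import sqlite3

# Assumption: sqlite3 stands in for Postgres; columns beyond the table name are hypothetical.
db = sqlite3.connect(":memory:")
db.execute(
    """CREATE TABLE tool_call_log (
           run_id    TEXT NOT NULL,
           user_id   TEXT NOT NULL,
           tool_name TEXT NOT NULL,
           cost_usd  REAL NOT NULL,
           called_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
       )"""
)


def log_tool_call(run_id: str, user_id: str, tool_name: str, cost_usd: float) -> None:
    """Record one tool call and what it cost."""
    db.execute(
        "INSERT INTO tool_call_log (run_id, user_id, tool_name, cost_usd) "
        "VALUES (?, ?, ?, ?)",
        (run_id, user_id, tool_name, cost_usd),
    )
    db.commit()


def top_spenders(limit: int = 5) -> list:
    """Return (user_id, tool_name, calls, total_usd) rows, most expensive first."""
    return db.execute(
        """SELECT user_id, tool_name, COUNT(*) AS calls, SUM(cost_usd) AS total_usd
           FROM tool_call_log
           GROUP BY user_id, tool_name
           ORDER BY total_usd DESC
           LIMIT ?""",
        (limit,),
    ).fetchall()


# A retry loop for one user shows up as many calls to the same tool.
for _ in range(3):
    log_tool_call("run-1", "u1", "send_sms", 0.5)
log_tool_call("run-2", "u2", "search", 0.25)
report = top_spenders()
```

Grouping by user and tool is one reasonable default. Adding a `called_at` filter narrows the same query to the hour in question.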

For deeper background on the orchestration patterns sitting above these primitives, the multi-agent system distributed pattern breakdown covers the orchestrator-worker structure that these patterns plug into.

For the related class of failures around tool calls specifically, the AI agent tool call error fix guide goes deeper on the silent-failure category.

Which Frameworks Help Versus Which Force You to Build It Yourself

Durable execution frameworks like Temporal handle most of these patterns out of the box. LangGraph and CrewAI give you the orchestration loop but leave the production patterns to you. Make.com, n8n, and hand-rolled Python are fine for low-stakes workflows but break at scale unless you build the patterns in yourself.

The framework choice maps fairly cleanly to which patterns it covers natively versus which you have to add yourself.

| Framework | Idempotency + Retry | State persistence | Where you still build |
|---|---|---|---|
| Temporal | Native retries; activities run at-least-once, so keep them idempotent | Native checkpointing | Validation gates, model routing |
| LangGraph | Manual, you wrap tools | Built-in but not durable by default | All five patterns, mostly |
| CrewAI | Manual | Manual | All five patterns |
| Make.com / n8n | Per-node retry, no idempotency | Data store module, manual writes | Validation, model routing, rate limits |
| Hand-rolled Python | You build it | You build it | Everything |

What I would recommend: if you are doing anything financial, multi-tenant, or otherwise high-stakes, start with Temporal even though the learning curve is real.

Durable execution with automatic retries removes most of the duplicate-side-effect bugs that kill production agents in week three, provided your activities are idempotent (Temporal runs activities at-least-once, not exactly-once, so the idempotency key still matters). If you are doing internal-only workflows where the consequence of a duplicate action is mild, LangGraph or CrewAI plus a hand-rolled idempotency wrapper is fine.

For the lighter end of the spectrum, the n8n AI agent tutorial walks through the setup that works for non-critical workflows where the patterns above are nice-to-have rather than load-bearing.

What Observability Do You Need to Catch the Failures Early

At minimum: full prompt and response logged for every LLM call, every tool call’s input and output, and five workflow-level metrics tracked across runs (success rate, escalation rate, p95 latency, cost per success, memory hit rate).

Without these, you cannot debug agent failures any faster than the failures occur, which means production grinds to a halt.

The observability stack is not optional. It is the part most teams underbuild because it does not feel like a feature. Then a user reports that the agent did something inexplicable last Tuesday at 3pm and there is no log to pull from. The forensic gap is what eats your engineering hours.

The OpenTelemetry-based pattern that tends to work:

- Wrap every LLM call with a span. Tag the model name, the prompt token count, the response token count, the cost.

- Wrap every tool call with a child span. Tag the tool name, the input JSON, the output JSON, the success-or-failure boolean, the latency.

- Tag every span with the workflow run ID and the user ID for downstream slicing.

- Push to a backend that lets you replay the full prompt for any historical step. Honeycomb, Datadog, or self-hosted Jaeger all work.

- Set up dashboards for the five workflow metrics: success rate per workflow type, escalation rate, p95 step latency, cost per successful completion, memory retrieval hit rate.
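As a rough illustration of the last item, the five workflow metrics can be derived from one record per run. This sketch assumes a hypothetical `RunRecord` shape, uses a nearest-rank p95, and measures latency per run rather than per step. A real dashboard would compute the same figures in the observability backend.

```python
import math
from dataclasses import dataclass


@dataclass
class RunRecord:
    # Hypothetical per-run fields; adapt to whatever your spans actually carry.
    succeeded: bool
    escalated: bool
    latency_s: float
    cost_usd: float
    memory_lookups: int
    memory_hits: int


def p95(values: list) -> float:
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def workflow_metrics(runs: list) -> dict:
    """Compute the five workflow-level metrics for one workflow type."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    successes = sum(r.succeeded for r in runs)
    lookups = sum(r.memory_lookups for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "success_rate": successes / n,
        "escalation_rate": sum(r.escalated for r in runs) / n,
        "p95_latency_s": p95([r.latency_s for r in runs]),
        # Failed runs still cost money, so divide total spend by successes only.
        "cost_per_success_usd": total_cost / successes if successes else float("inf"),
        "memory_hit_rate": sum(r.memory_hits for r in runs) / lookups if lookups else 0.0,
    }


runs = [
    RunRecord(True, False, 1.0, 0.25, 4, 3),
    RunRecord(True, False, 2.0, 0.25, 4, 4),
    RunRecord(False, True, 8.0, 0.5, 2, 0),
    RunRecord(True, False, 3.0, 0.5, 6, 5),
]
metrics = workflow_metrics(runs)
```

Counting failed runs in the cost numerator is a deliberate choice here: a workflow that fails often looks expensive per success, which is the signal you want.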

The cost of this stack is not trivial. Full prompt-and-response storage gets expensive fast. But the tradeoff is that when something breaks at 2am you can replay the exact prompt the agent saw and reproduce the failure in twenty minutes instead of two days. From what I have built in this space, the storage cost is the cheapest insurance you will buy.

Frequently Asked Questions

Do small agents need all five patterns from day one?

No. If you are running an internal agent with three users, idempotency and validation are the two that matter. The other three (state, model routing, rate limits) become load-bearing once you cross around 50 active users or once any tool call costs real money. Build them in once you can predict you will need them, not before.

Which framework is easiest to add idempotency to retroactively?

Hand-rolled Python and Temporal both let you wrap tool calls cleanly. Temporal does it natively via its activity contract. Hand-rolled Python lets you write a decorator that hashes the inputs and stores the result. LangGraph and CrewAI both work but require more invasive changes to the tool-definition layer. Make.com and n8n require building a separate “idempotency” lookup node before each side-effecting node.

How do I catch the cost spikes early?

Set up a cost-per-tool-call log table from day one and add a Slack alert when any single workflow run exceeds 2x the median cost for that workflow type. The alert catches the runaway retry loops within minutes instead of hours. Without it, the first signal is a billing email three days later.
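The 2x-median check can be sketched in a few lines. The function name and the input shape (a mapping from run ID to total run cost, for a single workflow type) are assumptions, and the Slack notification itself is left out.

```python
from statistics import median


def runs_over_budget(costs_by_run: dict, multiple: float = 2.0) -> list:
    """Return run IDs whose total cost exceeds `multiple` times the median run cost."""
    if not costs_by_run:
        return []
    threshold = multiple * median(costs_by_run.values())
    return sorted(run for run, cost in costs_by_run.items() if cost > threshold)


# Costs in USD for runs of one workflow type; run-4 is a runaway retry loop.
costs = {"run-1": 0.10, "run-2": 0.12, "run-3": 0.11, "run-4": 1.90}
flagged = runs_over_budget(costs)
```

The median is used rather than the mean so that the runaway run does not drag the threshold up with it.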

Is “cheap model first” really worth the complexity?

Almost always. Haiku, GPT-5.5 Instant, and DeepSeek-V4 Flash handle around 95% of agent-level reasoning steps acceptably. The 5% that fail validation get the expensive retry. The math comes out to 60-80% API spend reduction at the cost of one extra schema check per step, which is a clear win above any nontrivial scale.
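The routing logic behind this is small. The following is a minimal sketch, assuming the model calls are plain callables that return raw text and that the action being validated is an email. `validate_email_action`, `route`, and the two stub models are hypothetical names, and the email check is a crude sanity check, not full address validation.

```python
import json
from typing import Callable, Optional, Tuple


def validate_email_action(raw: str) -> Optional[dict]:
    """Schema check plus a sanity check; return the parsed action or None."""
    try:
        action = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(action, dict):
        return None
    to, body = action.get("to"), action.get("body")
    if not isinstance(to, str) or not isinstance(body, str):
        return None
    local, _, domain = to.partition("@")
    if not local or "." not in domain or not body.strip():
        return None
    return action


def route(
    prompt: str,
    cheap: Callable[[str], str],
    expensive: Callable[[str], str],
    validate: Callable[[str], Optional[dict]],
) -> Tuple[Optional[dict], str]:
    """Try the cheap model first; escalate once if its output fails validation."""
    action = validate(cheap(prompt))
    if action is not None:
        return action, "cheap"
    action = validate(expensive(prompt))
    if action is not None:
        return action, "expensive"
    return None, "rejected"  # invalid twice: do not fire the irreversible action


# Stub models standing in for real API calls.
def cheap_model(prompt: str) -> str:
    return "Sure! I will email them."  # not valid JSON, fails the gate


def expensive_model(prompt: str) -> str:
    return '{"to": "user@example.com", "body": "Your invoice is attached."}'


action, tier = route("Draft the email", cheap_model, expensive_model, validate_email_action)
```

Returning the tier alongside the action makes it easy to log how often the expensive retry actually fires, which is the number that tells you whether the routing is paying for itself.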

What is the most common pattern teams skip and regret?

Per-user rate limits. Global rate limits feel like enough until one user’s frontend gets stuck in a retry loop and burns your monthly budget in an afternoon. Per-user limits keyed on user ID with a sliding window catch this within minutes. The fix is fifteen minutes of work; not having it is a four-figure mistake the first time it bites.
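A sliding-window limiter keyed on user ID might look roughly like this. It is an in-memory sketch for a single process, with a hypothetical class name and an injectable clock so it can be exercised without waiting. A multi-instance deployment would need shared storage for the counters.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class PerUserRateLimiter:
    """Sliding window: at most `limit` accepted requests per user per `window_s` seconds."""

    def __init__(self, limit: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = limit
        self.window_s = window_s
        self.clock = clock
        self._hits = defaultdict(deque)  # user_id -> timestamps of accepted requests

    def allow(self, user_id: str) -> bool:
        now = self.clock()
        hits = self._hits[user_id]
        # Drop timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window_s:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # caller should respond with HTTP 429 and log the burst
        hits.append(now)
        return True


# Fake clock so the example is deterministic.
now = [0.0]
limiter = PerUserRateLimiter(limit=2, window_s=60, clock=lambda: now[0])
burst = [limiter.allow("alice") for _ in range(3)]   # third call exceeds the quota
other_user = limiter.allow("bob")                    # bob has his own window
now[0] = 61.0
after_window = limiter.allow("alice")                # window has slid past the burst
```

Rejected requests are not recorded, so a client stuck in a retry loop does not extend its own lockout; it simply keeps getting refused until old hits age out.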

Can I use any of this with no-code platforms?

Partially. Make.com and n8n both let you wrap nodes with retry logic and store state in their data-store modules. They cannot give you the durable-execution guarantees that Temporal does. The verdict: fine for marketing automation and customer-support agent workflows where a duplicate is annoying but not catastrophic. Not fine for anything financial, transactional, or compliance-sensitive.