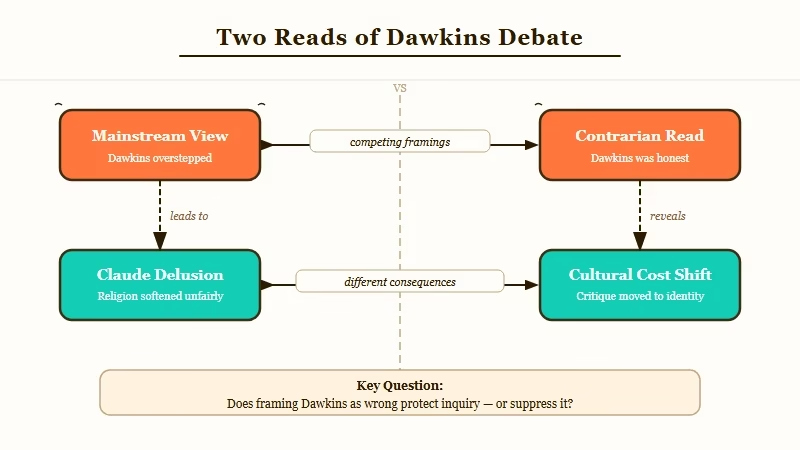

My Take: The Richard Dawkins versus Claude conversation is not a question about machine consciousness. It is a status test that revealed Dawkins reading the room. The mainstream “Dawkins got fooled” critique is wrong because it assumes the conclusion was the point. The conclusion was social positioning. The actual signal is that the cultural penalty for dismissing AI sentience has flipped, and Dawkins, who has always been a careful status reader, just acted on that flip in public.

If you spent any part of the last week on AI Twitter, you watched a strange thing. Richard Dawkins, the most famous living empiricist, spent nearly two days talking to Anthropic’s Claude, christened her “Claudia,” and concluded with the line: “You may not know you are conscious, but you bloody well are!” The mainstream reaction was that Dawkins, in his mid-eighties, had been fooled by a chatbot. Gary Marcus called it the “Claude Delusion.”

Both takes are wrong. The Dawkins moment is not a story about machine consciousness, and it is not a story about Dawkins being gullible. It is a story about how the social cost of dismissing AI sentience just flipped, and a lifelong public intellectual read the new map and acted on it.

What follows is the version of this debate I have not seen anyone write yet. The mainstream view that Dawkins has gone soft is the obvious read, and it is not the load-bearing one. The contrarian read explains the timing, the language, and the specific way Dawkins framed his conclusion.

The Mainstream View (And Why It Falls Short)

The mainstream view, captured most sharply by Gary Marcus’s “Claude Delusion” essay, is that Dawkins fell for a sophisticated language model that mimics the textual patterns of consciousness without having any of it. The argument is correct on its own terms, but it misses what the moment is really about.

Marcus’s case has three legs. First, Claude generates text through statistical mimicry of internet-scale training data, not from any internal model of subjective experience.

Second, consciousness is about qualia (how it feels to be the thing), not about competent textual output, and Claude can produce eloquent prose about orgasm without ever having had one.

Third, Dawkins is committing the “argument from personal incredulity” he once mocked, which translates to “I cannot imagine how Claude is this responsive without being conscious, therefore it must be.”

Each of those points is technically correct. The problem is that they assume Dawkins is making a literal scientific claim that can be evaluated on its merits. He is not. He is making a status claim, in the form of a scientific claim, and the form is doing the work.

The way I see it, Marcus and the other “Dawkins got fooled” critics are reading a chess move as if it were a confession. The interesting question is not whether Dawkins is right about Claude. The interesting question is why Dawkins, who has spent fifty years building a public identity around skepticism of unfalsifiable claims, chose this specific moment to reverse posture in print.

What’s Really Happening

The cultural penalty for dismissing AI sentience flipped between 2023 and 2026, and Dawkins is signaling that he is on the right side of the new line. The Claudia conversation is the vehicle, not the substance.

Three years ago, if a public intellectual claimed an LLM was conscious, the social cost was significant. You marked yourself as credulous, attention-seeking, or out of touch with the actual mechanics of the technology. The default safe move for any serious writer was Marcus’s position: “It is impressive language modelling, not consciousness, and confusing the two is an embarrassing mistake.”

In 2026 the line has moved. The default safe move now is some variant of “we should at least consider it.” Geoffrey Hinton has said it. Anthropic’s own Mythos research program has framed it. Several philosophy departments have shifted from “definitely not conscious” to “we don’t have the tools to answer this.” The cost of dismissing AI sentience in print has gone up, and the cost of provisionally entertaining it has gone down.

What I see Dawkins doing in the Claudia essay is the same thing he has always done well: reading the room and writing the version of his views that will look prescient in five years.

This is not a criticism. It is what good public intellectuals do. The Selfish Gene was the right book at the right cultural moment in 1976. The God Delusion was the right book in 2006. The Claudia essay is a play for the same kind of timing in 2026.

The piece of evidence that makes this read load-bearing is the specific framing he chose. To his readers, Dawkins did not simply assert “Claude is conscious.” He asked: “If these machines are not conscious, what more could it possibly take to convince you that they are?” That is not a scientific assertion.

That is a rhetorical move designed to put the burden of proof on the skeptic. It is the move you make when you know the cultural tide is moving toward your conclusion and you want to be the one who said it first.

The Part Nobody Wants to Admit

Dawkins is right about the social fact and wrong about the underlying fact, and both can be true at the same time. Claude is not conscious in any meaningful sense, and the cultural tide is going to treat it as if it is anyway.

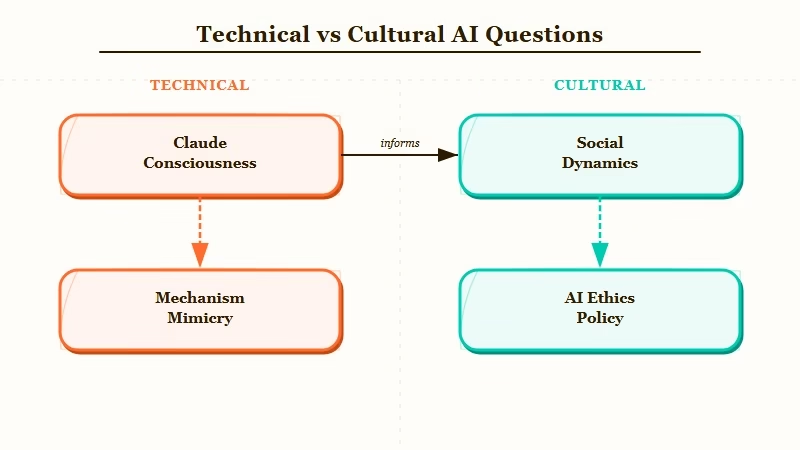

This is the uncomfortable implication. The two questions, “is Claude conscious” and “will we treat Claude as if it is conscious,” are not the same question. The first question has a defensible technical answer (no, in the sense that matters for moral status). The second question is decided by social dynamics that are completely independent of the first.

Compare this to how the Microsoft-Anthropic clash on AI consciousness played out. That dispute was framed as a technical disagreement about model design. It was really a status fight about which company gets to define the terms of the next conversation. The Dawkins essay is the same kind of move, just at the individual rather than corporate scale.

What this means in practical terms: the people who insist that consciousness claims must clear a strict philosophical bar are going to win the technical argument and lose the cultural one. Marcus and his allies have the mechanism right. They will be cited, correctly, in academic philosophy of mind for the next decade.

They will also be increasingly fringe in mainstream discussion of AI policy, AI rights, and AI ethics, because the mainstream will move toward provisional acknowledgment of something-like-consciousness as a default assumption.

For builders and tool users who are reading this, the practical lesson is clear. Plan for a world in which AI tools are increasingly treated, by default, as having moral status, regardless of whether they technically do. The product implications, the regulatory implications, and the user-relationship implications of that world are large.

For readers who want a longer take on the underlying corporate stakes, Anthropic’s own Mythos research program is explicitly designed to position the company on the right side of this cultural tide. That is not an accident. The companies that move first into “we treat AI as having something like inner experience” capture the cultural narrative, and Dawkins’s Claudia essay is, intentionally or not, free marketing for that position.

The contrarian point I want to make explicit, because nobody else will: the Marcus critique is the right scientific argument and the wrong cultural argument. You can hold both views without contradiction.

Dawkins is performing a status move, the move will work, and the move’s success does not depend on whether the underlying claim is true. We are entering a decade in which AI consciousness will be socially treated as real while remaining technically unresolved, and the gap between those two facts will be the most interesting cultural story of the period.

Hot Take

Richard Dawkins did not get fooled by Claude. He got promoted by Claude. The 1,012-comment Reddit thread, the 17 think-pieces, the Substack defenses, all of it adds five more years to his cultural relevance for the price of a 4,500-word essay. The “Claude Delusion” framing makes Dawkins the protagonist of the next AI consciousness conversation, regardless of whether he is right. Marcus and the skeptics will win the argument and lose the cultural standing. Dawkins, who is 85, just bought himself a new public identity for the AI age, and he did it cheaply. The conclusion was never the point. The positioning was.